Chapter 13. Scopes and Arguments

Chapter 12 looked at basic function definitions and calls. As we’ve seen, the basic function model is simple to use in Python. This chapter presents the details behind Python’s scopes (the places where variables are defined) and argument passing (the way that objects are sent to functions as inputs).

Scope Rules

Now that you’re ready to start writing your own functions, we need to get more formal about what names mean in Python. When you use a name in a program, Python creates, changes, or looks up the name in what is known as a namespace: a place where names live. When we talk about the search for a name’s value in relation to code, the term scope refers to a namespace; that is, the location of a name’s assignment in your code determines the scope of the name’s visibility to your code.

Just about everything related to names happens at assignment in Python—even scope classification. As we’ve seen, names in Python spring into existence when they are first assigned a value, and must be assigned before they are used. Because names are not declared ahead of time, Python uses the location of the assignment of a name to associate it with (i.e., bind it to) a particular namespace. That is, the place where you assign a name determines the namespace it will live in, and hence its scope of visibility.

Besides packaging code, functions add an extra namespace layer to your programs—by default, all names assigned inside a function are associated with that function’s namespace, and no other. This means that:

- Names defined inside a def can only be seen by the code within that def. You cannot even refer to such names from outside the function.
- Names defined inside a def do not clash with variables outside the def, even if the same name is used elsewhere. A name X assigned outside a def is a completely different variable than a name X assigned inside the def.

The net effect is that function scopes help avoid name clashes in your programs, and help to make functions more self-contained program units.

Python Scope Basics

Before you started writing functions, all code was written at the top level of a module (i.e., not nested in a def), so the names either lived in the module itself or were built-ins that Python predefines (e.g., open).[1] Functions provide a nested namespace (i.e., a scope), which localizes the names they use, such that names inside the function won’t clash with those outside (in a module or other function). Functions define a local scope, and modules define a global scope. The two scopes are related as follows:

The enclosing module is a global scope. Each module is a global scope—a namespace where variables created (assigned) at the top level of a module file live. Global variables become attributes of a module object to the outside world, but can be used as simple variables within a file.

The global scope spans a single file only. Don’t be fooled by the word “global” here—names at the top level of a file are only global to code within that single file. There is really no notion of a single, all-encompassing global file-based scope in Python. Instead, names are partitioned into modules, and you always must import a file explicitly if you want to be able to use the names its file defines. When you hear “global” in Python, think “my module.”

Each call to a function is a new local scope. Every time you call a function, you create a new local scope: a namespace where names created inside the function usually live. You can roughly think of this as though each def statement (and lambda expression) defines a new local scope. However, because Python allows functions to call themselves to loop (an advanced technique known as recursion), the local scope technically corresponds to a function call, and each call creates a new local namespace. Recursion is useful when processing structures whose shape can’t be predicted ahead of time.

Assigned names are local, unless declared global. By default, all the names assigned inside a function definition are put in the local scope (the namespace associated with the function call). If you need to assign a name that lives at the top level of the module enclosing the function, you can do so by declaring it in a global statement inside the function.

All other names are enclosing locals, globals, or built-ins. Names not assigned a value in the function definition are assumed to be enclosing scope locals (in an enclosing def), globals (in the enclosing module’s namespace), or built-ins (in the predefined __builtin__ module Python provides).
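Because each call gets its own local scope, recursion works. Below is a minimal Python sketch that runs under Python 2 or 3. The function name sum_tree is hypothetical, and the sketch assumes the input nests only numbers and lists. Each active call has its own total and item, so inner calls cannot overwrite the outer call’s locals.

```python
def sum_tree(items):
    # Hypothetical example: each call to sum_tree gets its own local scope,
    # so total and item in one call never clash with those in another call.
    total = 0
    for item in items:
        if isinstance(item, list):      # a nested list: recurse into it
            total += sum_tree(item)
        else:                           # a plain number: add it directly
            total += item
    return total

nested = [1, [2, [3, 4]], 5]
```

Calling sum_tree(nested) adds up every number, however deeply the lists are nested.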

Note that any type of assignment within a function classifies a name as local: = statements, imports, defs, argument passing, and so on. Also notice that in-place changes to objects do not classify names as locals; only actual name assignments do. For instance, if name L is assigned to a list at the top level of a module, a statement like L.append(X) within a function will not classify L as a local, whereas L = X will. In the former case, L will be found in the global scope as usual, and the statement will change the global list.
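Here is a minimal sketch of the distinction. It assumes a module-level list L, and append_item and rebind are hypothetical names. The in-place append reaches the global list through the normal scope search. The plain assignment instead creates a separate local L that disappears when rebind returns.

```python
L = [1, 2]                # global list

def append_item(X):
    L.append(X)           # in-place change: L is not classified as local,
                          # so the scope search finds the global list

def rebind(X):
    L = [X]               # assignment: L is local here; the global L is untouched
    return L

append_item(3)
local_copy = rebind(99)
```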

Name Resolution: The LEGB Rule

If the prior section sounds confusing, it really boils down to three simple rules:

Name references search at most four scopes: local, then enclosing functions (if any), then global, then built-in.

Name assignments create or change local names by default.

Global declarations map assigned names to an enclosing module’s scope.

In other words, all names assigned inside a function def statement (or lambda, an expression we’ll meet later) are locals by default. Functions can use names in lexically (i.e., physically) enclosing functions and in the global scope, but they must declare globals to change them. Python’s name resolution is sometimes called the LEGB rule, after the scope names:

When you use an unqualified name inside a function, Python searches up to four scopes: the local (L), then the local scope of any enclosing (E) defs and lambdas, then the global (G), and then the built-in (B). It stops at the first place the name is found. If it is not found during this search, Python reports an error. As we learned in Chapter 4, names must be assigned before they can be used.

When you assign a name in a function (instead of just referring to it in an expression), Python always creates or changes the name in the local scope, unless it’s declared to be global in that function.

When outside a function (i.e., at the top-level of a module or at the interactive prompt), the local scope is the same as the global—a module’s namespace.
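Here is a minimal sketch of all four layers in one module. All names (outer, inner_local, inner_enclosing, read_global, read_builtin) are hypothetical, and each string value labels the scope that supplied X. The sketch relies on nested-scope lookup, so it assumes Python 2.2 or later.

```python
X = 'global'                    # G: assigned at the top level of the module

def outer():
    X = 'enclosing'             # E: local to outer, enclosing for the defs below

    def inner_local():
        X = 'local'             # L: assigned here, so local to inner_local
        return X

    def inner_enclosing():
        return X                # no local X: the search stops in outer's scope

    return inner_local(), inner_enclosing()

def read_global():
    return X                    # no local or enclosing X: found in the module

def read_builtin():
    return len('spam')          # len is assigned nowhere: found in the built-ins
```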

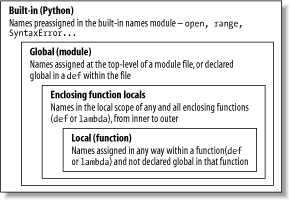

Figure 13-1 illustrates Python’s four scopes. Note that the second “E” scope lookup layer (enclosing defs or lambdas) technically can correspond to more than one lookup layer. It only comes into play when you nest functions within functions.[2]

Also, keep in mind that these rules only apply to simple variable names (such as spam). In Parts V and VI, we’ll see that qualified attribute names (such as object.spam) live in a particular object and follow a completely different set of lookup rules than the scope ideas covered here. Attribute references (names following periods) search one or more objects, not scopes, and may invoke something called inheritance, discussed in Part VI.

Scope Example

Let’s look at an example that demonstrates scope ideas. Suppose we write the following code in a module file:

    # Global scope
    X = 99                # X and func assigned in module: global

    def func(Y):          # Y and Z assigned in function: locals
        # local scope
        Z = X + Y         # X is a global.
        return Z

    func(1)               # func in module: result=100

This module, and the function it contains, use a number of names to do their business. Using Python’s scope rules, we can classify the names as follows:

- Global names: X, func
  X is a global because it’s assigned at the top level of the module file; it can be referenced inside the function without being declared global. func is global for the same reason; the def statement assigns a function object to the name func at the top level of the module.
- Local names: Y, Z
  Y and Z are local to the function (and exist only while the function runs), because they are both assigned a value in the function definition: Z by virtue of the = statement, and Y because arguments are always passed by assignment.

The whole point behind this name segregation scheme is that local variables serve as temporary names you need only while a function is running. For instance, the argument Y and the addition result Z exist only inside the function; these names don’t interfere with the enclosing module’s namespace (or any other function, for that matter).
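One way to confirm that Y and Z are temporary is to inspect the module’s namespace after the call. This sketch repeats the module above and uses the built-in globals() function, which returns the module namespace as a dictionary. The names result and leaked are illustrative.

```python
X = 99

def func(Y):
    Z = X + Y          # Y and Z are local; X is found in the global scope
    return Z

result = func(1)
# Neither local name survives the call or appears in the module's namespace.
leaked = 'Y' in globals() or 'Z' in globals()
```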

The local/global distinction also makes a function easier to understand; most of the names it uses appear in the function itself, not at some arbitrary place in a module. Because you can be sure that local names are not changed by some remote function in your program, they also tend to make programs easier to debug.

The Built-in Scope

We’ve been talking about the built-in scope in the abstract, but it’s a bit simpler than you may think. Really, the built-in scope is just a prebuilt standard library module called __builtin__, which you can import and inspect if you want to see which names are predefined:

    >>> import __builtin__
    >>> dir(__builtin__)
    ['ArithmeticError', 'AssertionError', 'AttributeError', 'DeprecationWarning',
     'EOFError', 'Ellipsis', ...many more names omitted... 'str', 'super',
     'tuple', 'type', 'unichr', 'unicode', 'vars', 'xrange', 'zip']

The names in this list are the built-in scope in Python; roughly the first half are built-in exceptions, and the second half are built-in functions. Because Python automatically searches this module last in its LEGB lookup rule, you get all the names in this list for free: they can be used without importing any module. In fact, there are two ways to refer to a built-in function: by taking advantage of the LEGB rule, or by manually importing the __builtin__ module:

    >>> zip                       # The normal way
    <built-in function zip>
    >>> import __builtin__        # The hard way
    >>> __builtin__.zip
    <built-in function zip>

The second of these is sometimes useful in advanced work. The careful reader might also notice that, because the LEGB lookup procedure takes the first occurrence of a name that it finds, names in the local scope may override variables of the same name in both the global and built-in scopes, and global names may override built-ins. A function can, for instance, create a local variable called open by assigning to it:

    def hider():
        open = 'spam'     # Local variable, hides built-in
        ...

However, this will hide the built-in function called open that lives in the built-in (outer) scope. It’s also usually a bug, and a nasty one at that, because Python will not issue a warning about it. Python stays silent because there are times in advanced programming where you may really want to replace a built-in name by redefining it in your code.
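The following sketch ties these ideas together. The built-in module is __builtin__ in Python 2 (the name used in this chapter) and builtins in Python 3, so the sketch tries both. Inside the hypothetical hider function, the local open wins the LEGB search. Outside it, plain open and the module attribute refer to the same built-in object.

```python
try:
    import builtins                  # Python 3 name for the built-in scope module
except ImportError:
    import __builtin__ as builtins   # Python 2 name, as used in this chapter

def hider():
    open = 'spam'                    # local name: hides the built-in open in here only
    return open

inside = hider()                     # 'spam': the local name won the LEGB search
same = open is builtins.open         # outside hider, LEGB reaches the built-in object
```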

Functions can similarly hide global variables of the same name with locals:

    X = 88            # Global X

    def func():
        X = 99        # Local X: hides global

    func()
    print X           # Prints 88: unchanged

Here, the assignment within the function creates a local X that is a completely different variable than the global X in the module outside the function. Because of this, there is no way to change a name outside the function without adding a global declaration to the def, as described in the next section.

The global Statement

The global statement is the only thing that’s remotely like a declaration statement in Python. It’s not a type or size declaration, though; it’s a namespace declaration. It tells Python that a function plans to change global names: names that live in the enclosing module’s scope (namespace).

We’ve talked about global in passing already; as a summary:

- global means “a name at the top level of the enclosing module file.”
- Global names must be declared only if they are assigned in a function.
- Global names may be referenced in a function without being declared.

The global statement is just the keyword global, followed by one or more names separated by commas. All the listed names will be mapped to the enclosing module’s scope when assigned or referenced within the function body. For instance:

    X = 88            # Global X

    def func():
        global X
        X = 99        # Global X: outside def

    func()
    print X           # Prints 99

We’ve added a global declaration to the example here, such that the X inside the def now refers to the X outside the def; they are the same variable this time.

Here is a slightly more involved example of global at work:

    y, z = 1, 2           # Global variables in module

    def all_global():
        global x          # Declare globals assigned.
        x = y + z         # No need to declare y, z: LEGB rule

Here, x, y, and z are all globals inside the function all_global. y and z are global because they aren’t assigned in the function; x is global because it was listed in a global statement to map it to the module’s scope explicitly. Without the global here, x would be considered local by virtue of the assignment.

Notice that y and z are not declared global; Python’s LEGB lookup rule finds them in the module automatically. Also notice that x might not exist in the enclosing module before the function runs; if not, the assignment in the function creates x in the module.
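Here is a runnable sketch of both points. It extends the all_global example with a call and adds a hypothetical all_local companion. Without a global declaration, the assignment in all_local makes x local. Reading x before that assignment then raises UnboundLocalError, a built-in subclass of NameError.

```python
y, z = 1, 2                  # globals in the module

def all_global():
    global x                 # x is mapped to the module's scope
    x = y + z                # y and z need no declaration: LEGB finds them

def all_local():
    try:
        x = x + 1            # x is assigned here, so it is local, but not yet bound
    except UnboundLocalError:
        return 'x is local here'

all_global()                 # creates the global x if it did not already exist
```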

If you want to change names outside functions, you have to write extra code (global statements); by default, names assigned in functions are locals. This is by design; as is common in Python, you have to say more to do the “wrong” thing. Although there are exceptions, changing globals can lead to well-known software engineering problems: because the values of variables are dependent on the order of calls to arbitrarily distant functions, programs can be difficult to debug. Try to minimize use of globals in your code.

Scopes and Nested Functions

It’s time to take a deeper look at the letter “E” in the LEGB lookup rule. The “E” layer takes the form of the local scopes of any and all enclosing function defs. This layer is a relatively new addition to Python (available in Python 2.1 through a __future__ import, and standard as of Python 2.2), and is sometimes called statically nested scopes. Really, the nesting is a lexical one: nested scopes correspond to physically nested code structures in your program’s source code.

Nested Scope Details

With the addition of nested function scopes, variable lookup rules become slightly more complex. Within a function:

- Assignment: X = value
  Creates or changes name X in the current local scope by default. If X is declared global within the function, it creates or changes name X in the enclosing module’s scope instead.
- Reference: X
  Looks for name X in the current local scope (function), then in the local scopes of all lexically enclosing functions from inner to outer (if any), then in the current global scope (the module file), and finally in the built-in scope (module __builtin__). A global declaration makes the search begin in the global scope instead.

Notice that the global declaration still maps variables to the enclosing module. When nested functions are present, variables in enclosing functions may only be referenced, not changed.

Let’s illustrate all this with some real code.

Nested Scope Examples

Here is an example of a nested scope:

    def f1():
        x = 88
        def f2():
            print x
        f2()

    f1()              # Prints 88

First off, this is legal Python code: the def is simply an executable statement that can appear anywhere any other statement can, including nested in another def. Here, the nested def runs while a call to function f1 is running; it generates a function and assigns it to name f2, a local variable within f1’s local scope. In a sense, f2 is a temporary function that only lives during the execution of (and is only visible to code in) the enclosing f1.

But notice what happens inside f2: when it prints variable x, it refers to the x that lives in the enclosing f1 function’s local scope. Because functions can access names in all physically enclosing def statements, the x in f2 is automatically mapped to the x in f1 by the LEGB lookup rule.

This enclosing scope lookup works even if the enclosing function has already returned. For example, the following code defines a function that makes and returns another:

    def f1():
        x = 88
        def f2():
            print x
        return f2

    action = f1()     # Make, return function.
    action()          # Call it now: prints 88

In this code, the call to action is really running the function we named f2 when f1 ran. f2 remembers the enclosing scope’s x in f1, even though f1 is no longer active. This sort of behavior is also sometimes called a closure: an object that remembers values in enclosing scopes, even though those scopes may not be around any more. Although classes (described in Part VI) are usually best at remembering state, such functions provide another alternative.
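Closures are handy for generating functions that carry their own state. In this minimal sketch, maker, action, square, and cube are hypothetical names. Each call to maker creates a fresh enclosing scope, so each returned function remembers a different N.

```python
def maker(N):
    def action(X):
        return X ** N        # N is remembered from maker's finished call
    return action

square = maker(2)            # each call to maker creates a new scope holding N
cube = maker(3)
```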

In earlier versions of Python, all this sort of code failed, because nested defs did not do anything about scopes: a reference to a variable within f2 would search local (f2), then global (the code outside f1), and then built-in. Because it skipped the scopes of enclosing functions, an error would result. To work around this, programmers typically used default argument values to pass in (remember) the objects in an enclosing scope:

    def f1():
        x = 88
        def f2(x=x):
            print x
        f2()

    f1()              # Prints 88

This code works in all Python releases, and you’ll still see this pattern in much existing Python code. We’ll meet defaults in more detail later in this chapter; in short, the syntax arg=val in a def header means that argument arg will default to value val if no real value is passed to arg in a call.

In the modified f2, the x=x means that argument x will default to the value of x in the enclosing scope. Because the second x is evaluated before Python steps into the nested def, it still refers to the x in f1. In effect, the default remembers what x was in f1: the object 88.

That’s fairly complex, and depends entirely on the timing of default value evaluations. That’s also why the nested scope lookup rule was added to Python: to make defaults unnecessary for this role. Today, Python automatically remembers any values required in the enclosing scope, for use in nested defs.
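The timing difference shows up clearly when functions are made in a loop. In this sketch, make_late and make_early are hypothetical names. The enclosing-scope reference is resolved when each function is called, after the loop has finished. The default, by contrast, is evaluated when each def statement runs.

```python
def make_late():
    funcs = []
    for i in range(3):
        def f(x):
            return i ** x    # i is looked up in make_late's scope at call time
        funcs.append(f)
    return funcs

def make_early():
    funcs = []
    for i in range(3):
        def f(x, i=i):
            return i ** x    # the default i was evaluated when this def ran
        funcs.append(f)
    return funcs

late = [f(2) for f in make_late()]     # every function sees the final i
early = [f(2) for f in make_early()]   # each function kept its own i
```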

Of course, the best prescription here is probably just: don’t do that. Programs are much simpler if you do not nest defs within defs.

Here’s an equivalent of the prior example, which banishes the notion of nesting. Notice that it’s okay to call a function defined after the one that contains the call, as in the following, as long as the second def runs before the first function is called; code inside a def is never evaluated until the function is actually called:

    >>> def f1():
    ...     x = 88
    ...     f2(x)
    ...
    >>> def f2(x):
    ...     print x
    ...
    >>> f1()
    88

If you avoid nesting this way, you can almost forget about the nested scopes concept in Python, at least for defs. However, you are even more likely to care about such things when you start coding lambda expressions. We won’t meet lambda in depth until Chapter 14; in short, lambda is an expression that generates a new function to be called later, much like a def statement. Because it’s an expression, it can be used in places that def cannot, such as within list and dictionary literals.

Also like a def, a lambda expression introduces a new local scope. With the enclosing scopes lookup layer, lambdas can see all the variables that live in the function in which they are coded. The following code works today only because the nested scope rules are now applied:

    def func():
        x = 4
        action = (lambda n: x ** n)         # x in enclosing def
        return action

    x = func()
    print x(2)        # Prints 16

Prior to the introduction of nested function scopes, programmers used defaults to pass values from an enclosing scope into lambdas, as for defs. For instance, the following works on all Python releases:

    def func():
        x = 4
        action = (lambda n, x=x: x ** n)    # Pass x in manually.
        return action

Because lambdas are expressions, they naturally (and even normally) nest inside enclosing defs. Hence, they are perhaps the biggest beneficiary of the addition of enclosing function scopes in the lookup rules; in most cases, it is no longer necessary to pass values into lambdas with defaults. We’ll have more to say about both defaults and lambdas later, so you may want to return and review this section once you’ve seen them.
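Here lambdas are coded in a place where a def cannot appear. The hypothetical make_ops function below returns a dictionary literal of lambdas, and each one finds factor in the enclosing def’s scope without any defaults.

```python
def make_ops(factor):
    # Each lambda sits inside a dictionary literal, where a def statement
    # could not appear; both see factor in make_ops's local scope.
    return {
        'scale': (lambda x: x * factor),
        'shift': (lambda x: x + factor),
    }

ops = make_ops(10)
```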

Before ending this discussion, note that scopes nest arbitrarily, but only enclosing functions (not classes, described in Part VI) are searched:

    >>> def f1():
    ...     x = 99
    ...     def f2():
    ...         def f3():
    ...             print x       # Found in f1's local scope!
    ...         f3()
    ...     f2()
    ...
    >>> f1()
    99

Python will search the local scopes of all enclosing defs, after the referencing function’s local scope, and before the module’s global scope. However, this sort of code seems less likely in practice.
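This minimal sketch shows the earlier rule that enclosing-function variables may be referenced but not changed: an assignment in the nested def simply creates a new local. The names outer and inner are hypothetical. The sketch reflects the Python 2 rules described in this chapter; Python 3 later added a nonlocal statement for changing enclosing names.

```python
def outer():
    x = 88
    def inner():
        x = 99               # assignment makes x local to inner
        return x
    return inner(), x        # outer's x is unchanged by inner's assignment
```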

Passing Arguments

Let’s expand on the notion of argument passing in Python. Earlier, we noted that arguments are passed by assignment; this has a few ramifications that aren’t always obvious to beginners:

Arguments are passed by automatically assigning objects to local names. Function arguments are just another instance of Python assignment at work. Function arguments are references to (possibly) shared objects referenced by the caller.

Assigning to argument names inside a function doesn’t affect the caller. Argument names in the function header become new, local names when the function runs, in the scope of the function. There is no aliasing between function argument names and names in the caller.

Changing a mutable object argument in a function may impact the caller. On the other hand, since arguments are simply assigned to passed-in objects, functions can change passed-in mutable objects, and the result may affect the caller.

Python’s pass-by-assignment scheme isn’t the same as C++’s reference parameters, but it turns out to be very similar to C’s arguments in practice:

Immutable arguments act like C’s “by value” mode. Objects such as integers and strings are passed by object reference (assignment), but since you can’t change immutable objects in place anyhow, the effect is much like making a copy.

Mutable arguments act like C’s “by pointer” mode. Objects such as lists and dictionaries are passed by object reference, which is similar to the way C passes arrays as pointers—mutable objects can be changed in place in the function, much like C arrays.

Of course, if you’ve never used C, Python’s argument-passing mode will be simpler still—it’s just an assignment of objects to names, which works the same whether the objects are mutable or not.

Arguments and Shared References

Here’s an example that illustrates some of these properties at work:

    >>> def changer(x, y):    # Function
    ...     x = 2             # Changes local name's value only
    ...     y[0] = 'spam'     # Changes shared object in place
    ...
    >>> X = 1
    >>> L = [1, 2]            # Caller
    >>> changer(X, L)         # Pass immutable and mutable
    >>> X, L                  # X unchanged, L is different
    (1, ['spam', 2])

In this code, the changer function assigns to argument name x and to a component in the object referenced by argument y. The two assignments within the function are only slightly different in syntax, but have radically different results:

- Since x is a local name in the function’s scope, the first assignment has no effect on the caller: it simply changes local variable x, and does not change the binding of name X in the caller.
- Argument y is a local name too, but it is passed a mutable object (the list called L in the caller). Since the second assignment is an in-place object change, the result of the assignment to y[0] in the function impacts the value of L after the function returns.

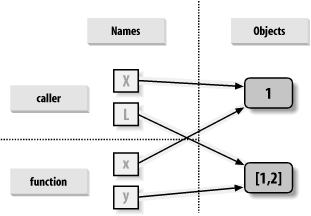

Figure 13-2 illustrates the name/object bindings that exist immediately after the function has been called, and before its code has run.

If you recall some of the discussion about shared mutable objects in Chapter 4 and Chapter 7, you’ll recognize that this is the exact same phenomenon at work: changing a mutable object in-place can impact other references to the object. Here, its effect is to make one of the arguments work like an output of the function.
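You can check the sharing with the is operator, which tests object identity. This sketch is a variant of changer that has been modified for testing purposes to return the object y refers to.

```python
def changer(x, y):
    x = 2                    # rebinds the local name x only
    y[0] = 'spam'            # changes the list object y shares with the caller
    return y                 # hand back the object y refers to (added for testing)

X = 1
L = [1, 2]
returned = changer(X, L)
```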

Avoiding Mutable Argument Changes

If you don’t want in-place changes within functions to impact objects you pass to them, simply make explicit copies of mutable objects, as we learned in Chapter 7. For function arguments, we can copy the list at the point of call:

    L = [1, 2]
    changer(X, L[:])      # Pass a copy, so my L does not change.

We can also copy within the function itself, if we never want to change passed-in objects, regardless of how the function is called:

    def changer(x, y):
        y = y[:]          # Copy input list so I don't impact caller.
        x = 2
        y[0] = 'spam'     # Changes my list copy only

Neither of these copying schemes stops the function from changing the object; they just prevent those changes from impacting the caller. To really prevent changes, we can always convert to immutable objects to force the issue. Tuples, for example, raise an exception when changes are attempted:

    L = [1, 2]
    changer(X, tuple(L))  # Pass a tuple, so changes are errors.

This scheme uses the built-in tuple function, which builds a new tuple out of all the items in a sequence. It’s also something of an extreme: because it forces the function to be written to never change passed-in arguments, it might impose more limitation on the function than it should, and so should often be avoided. You never know when changing arguments might come in handy for other calls in the future. The function will also lose the ability to call any list-specific methods on the argument, even methods that do not change the object in place.
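The following runnable sketch compares the three strategies side by side. safe_changer is a hypothetical name for the self-copying version of changer, and the result names are illustrative.

```python
def changer(x, y):
    x = 2
    y[0] = 'spam'            # in-place change to whatever object y refers to

def safe_changer(x, y):
    y = y[:]                 # copy first, so the caller's list is never touched
    x = 2
    y[0] = 'spam'
    return y

L = [1, 2]
changer(1, L[:])                   # 1) copy at the call: L stays [1, 2]
copy_result = safe_changer(1, L)   # 2) copy inside the function

try:
    changer(1, tuple(L))     # 3) pass an immutable tuple: item assignment fails
    tuple_error = False
except TypeError:
    tuple_error = True
```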

Simulating Output Parameters

We’ve already discussed the return statement, and used it in a few examples. But here’s a trick: because return sends back any sort of object, it can return multiple values by packaging them in a tuple. In fact, although Python doesn’t have what some languages label call-by-reference argument passing, we can usually simulate it by returning tuples and assigning results back to the original argument names in the caller:

    >>> def multiple(x, y):
    ...     x = 2             # Changes local names only
    ...     y = [3, 4]
    ...     return x, y       # Return new values in a tuple.
    ...
    >>> X = 1
    >>> L = [1, 2]
    >>> X, L = multiple(X, L) # Assign results to caller's names.
    >>> X, L
    (2, [3, 4])

It looks like the code is returning two values here, but it’s just one: a two-item tuple, with the optional surrounding parentheses omitted. After the call returns, use tuple assignment to unpack the parts of the returned tuple. (If you’ve forgotten why, flip back to Section 7.1 in Chapter 7 and Section 8.1 in Chapter 8.)

The net effect of this coding pattern is to simulate the output parameters of other languages by explicit assignments. X and L change after the call, but only because the code said so.
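Returning a tuple is also the usual way to hand back more than one result from a computation. Here is a minimal sketch using a hypothetical min_max helper, which assumes a non-empty sequence.

```python
def min_max(items):
    # Hypothetical helper: "returns two values" by returning one tuple.
    lo = hi = items[0]
    for item in items[1:]:
        if item < lo:
            lo = item
        if item > hi:
            hi = item
    return lo, hi

result = min_max([3, 1, 4, 1, 5])   # a single two-item tuple
low, high = result                  # tuple assignment unpacks it
```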

Special Argument Matching Modes

Arguments are always passed by assignment in Python; names in the def header are assigned to passed-in objects. On top of this model, though, Python provides additional tools that alter the way the argument objects in the call are matched with argument names in the header prior to assignment. These tools are all optional, but allow you to write functions that support more flexible calling patterns.

By default, arguments are matched by position, from left to right, and you must pass exactly as many arguments as there are argument names in the function header. But you can also specify a match by name, default values, and collectors for extra arguments.

Some of this section gets complicated, and before going into syntactic details, we’d like to stress that these special modes are optional and only have to do with matching objects to names; the underlying passing mechanism is still assignment, after the matching takes place. But here’s a synopsis of the available matching modes:

- Positionals: matched left to right
  The normal case used so far is to match arguments by position.
- Keywords: matched by argument name
  Callers can specify which argument in the function is to receive a value by using the argument’s name in the call, with a name=value syntax.
- Varargs: catch unmatched positional or keyword arguments
  Functions can use special arguments preceded with * characters to collect arbitrarily many extra arguments (much like, and often named for, the varargs feature in the C language, which supports variable-length argument lists).
- Defaults: specify values for arguments that aren’t passed
  Functions may also specify default values for arguments to receive if the call passes too few values, using a name=value syntax.

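Table 13-1 summarizes the syntax that invokes the special matching modes.

| Syntax | Location | Interpretation |
|---|---|---|
| func(value) | Caller | Normal argument: matched by position |
| func(name=value) | Caller | Keyword argument: matched by name |
| def func(name) | Function | Normal argument: matches any passed value by position or name |
| def func(name=value) | Function | Default argument value, if not passed in the call |
| def func(*name) | Function | Matches remaining positional arguments (in a tuple) |
| def func(**name) | Function | Matches remaining keyword arguments (in a dictionary) |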

In a call (the first two rows of the table), simple names are matched by position, but using the name=value form tells Python to match by name instead; these are called keyword arguments.

In a function header, a simple name is matched by position or name (depending on how the caller passes it), but the name=value form specifies a default value, the *name collects any extra positional arguments in a tuple, and the **name form collects extra keyword arguments in a dictionary.

As a result, special matching modes let you be fairly liberal about how many arguments must be passed to a function. If a function specifies defaults, they are used if you pass too few arguments. If a function uses the varargs (variable argument list) form, you can pass too many arguments; the varargs names collect the extra arguments in a data structure.

Keyword and Default Examples

Python matches names by position by default, like most other languages. For instance, if you define a function that requires three arguments, you must call it with three arguments:

    >>> def f(a, b, c): print a, b, c
    ...

Here, we pass them by position: a is matched to 1, b is matched to 2, and so on:

b is matched to 2, and so on:

    >>> f(1, 2, 3)
    1 2 3

In Python, though, you can be more specific about what goes where when you call a function. Keyword arguments allow us to match by name, instead of position:

    >>> f(c=3, b=2, a=1)
    1 2 3

Here, the c=3 in the call means match this against the argument named c in the header. The net effect of this call is the same as the prior, but notice that the left-to-right order no longer matters when keywords are used, because arguments are matched by name, not position. It’s even possible to combine positional and keyword arguments in a single call: all positionals are matched first from left to right in the header, before keywords are matched by name:

    >>> f(1, c=3, b=2)
    1 2 3

When most people see this the first time, they wonder why one would use such a tool. Keywords typically have two roles in Python. First of all, they make your calls a bit more self-documenting (assuming that you use better argument names than a, b, and c). For example, a call of this form:

    func(name='Bob', age=40, job='dev')

is much more meaningful than a call with three naked values separated by commas—the keywords serve as labels for the data in the call. The second major use of keywords occurs in conjunction with defaults.

We introduced defaults earlier when discussing nested function scopes. In short, defaults allow us to make selected function arguments optional; if not passed a value, the argument is assigned its default before the function runs. For example, here is a function that requires one argument and defaults the other two:

    >>> def f(a, b=2, c=3): print a, b, c
    ...

When called, we must provide a value for a, either by position or keyword; if we don’t pass values to b and c, they default to 2 and 3, respectively:

    >>> f(1)
    1 2 3
    >>> f(a=1)
    1 2 3

If we pass two values, only c gets its default; with three values, no defaults are used:

    >>> f(1, 4)
    1 4 3
    >>> f(1, 4, 5)
    1 4 5

Finally, here is how the keyword and default features interact: because they subvert the normal left-to-right positional mapping, keywords allow us to essentially skip over arguments with defaults:

>>> f(1, c=6)

1 2 6

Here a gets 1 by position, c gets 6 by keyword, and b, in between, defaults to 2.
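Here is the same f rewritten, as an illustrative assumption, to return its arguments rather than print them, so each of the calls above can be checked at once. It also shows what happens when a, which has no default, is never supplied:

```python
def f(a, b=2, c=3):
    # Return instead of print so each binding can be inspected
    return (a, b, c)

results = [f(1), f(a=1), f(1, 4), f(1, 4, 5), f(1, c=6)]

# "a" has no default, so a call that never supplies it is an error
try:
    f(b=5)
    missing_a_error = False
except TypeError:
    missing_a_error = True
```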

Arbitrary Arguments Examples

The last two matching extensions, * and **, are designed to support functions that take any number of arguments. The first collects unmatched positional arguments into a tuple:

>>> def f(*args): print args

When this function is called, Python collects all the positional arguments into a new tuple and assigns the variable args to that tuple. Because it is a normal tuple object, it can be indexed, stepped through with a for loop, and so on:

>>> f( )
( )
>>> f(1)
(1,)
>>> f(1,2,3,4)
(1, 2, 3, 4)
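Because args is an ordinary tuple, any tuple tool works on it. In this sketch, collect and total are illustrative names, not part of the original example:

```python
def collect(*args):
    # Hand back the tuple Python built from the positional arguments
    return args

def total(*args):
    # Step through the tuple with a for loop, summing as we go
    result = 0
    for x in args:
        result = result + x
    return result
```

For instance, collect(1, 2, 3)[-1] is 3 and total(1, 2, 3, 4) is 10; called with no arguments, total returns 0, since the for loop simply has nothing to step through.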

The ** feature is similar, but it only works for keyword arguments: it collects them into a new dictionary, which can then be processed with normal dictionary tools. In a sense, the ** form allows you to convert from keywords to dictionaries:

>>> def f(**args): print args
...
>>> f( )
{ }
>>> f(a=1, b=2)
{'a': 1, 'b': 2}
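Likewise, the collected keywords form a normal dictionary, so dictionary methods such as get apply directly. The function configure and its verbose and level options are hypothetical names for this sketch; the keys are sorted because dictionary ordering should not be relied on here:

```python
def configure(**options):
    # options is a normal dictionary built from the keyword arguments
    verbose = options.get('verbose', False)   # Dictionary tool: get with a fallback
    names = sorted(options.keys())            # Sorted, since dict order may vary
    return verbose, names
```

Calling configure( ) returns (False, []), while configure(level=3, verbose=True) returns (True, ['level', 'verbose']).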

Finally, function headers can combine normal arguments, the *, and the ** to implement wildly flexible call signatures:

>>> def f(a, *pargs, **kargs): print a, pargs, kargs
...
>>> f(1, 2, 3, x=1, y=2)
1 (2, 3) {'y': 2, 'x': 1}

In fact, these features can be combined in more complex ways that almost seem ambiguous at first glance—an idea we will revisit later in this chapter.

Combining Keywords and Defaults

Here is a slightly larger example that demonstrates both keywords and defaults in action. In the following, the caller must always pass at least two arguments (to match spam and eggs), but the other two are optional; if omitted, Python assigns toast and ham to the defaults specified in the header:

def func(spam, eggs, toast=0, ham=0):     # First 2 required
    print (spam, eggs, toast, ham)

func(1, 2)                       # Output: (1, 2, 0, 0)
func(1, ham=1, eggs=0)           # Output: (1, 0, 0, 1)
func(spam=1, eggs=0)             # Output: (1, 0, 0, 0)
func(toast=1, eggs=2, spam=3)    # Output: (3, 2, 1, 0)
func(1, 2, 3, 4)                 # Output: (1, 2, 3, 4)

Notice that when keyword arguments are used in the call, the order in which arguments are listed doesn’t matter; Python matches by name, not position. The caller must supply values for spam and eggs, but they can be matched by position or name. Also notice that the form name=value means different things in the call and the def: a keyword in the call, and a default in the header.
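To check the outputs listed in the comments, the sketch below assumes a variant of func that returns its arguments instead of printing them. It also tries two calls that break the header’s requirements: one that omits eggs, and one that passes a fifth positional argument that no name in the header can accept:

```python
def func(spam, eggs, toast=0, ham=0):   # First 2 required
    # Return the bindings instead of printing them, so they can be checked
    return (spam, eggs, toast, ham)

calls = [
    func(1, 2),
    func(1, ham=1, eggs=0),
    func(spam=1, eggs=0),
    func(toast=1, eggs=2, spam=3),
    func(1, 2, 3, 4),
]

# Too few arguments: eggs has no default
try:
    func(1)
    too_few_error = False
except TypeError:
    too_few_error = True

# Too many arguments: the header has no *name to collect a fifth one
try:
    func(1, 2, 3, 4, 5)
    too_many_error = False
except TypeError:
    too_many_error = True
```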

The min Wakeup Call

To make this more concrete, let’s work through an exercise that demonstrates a practical application for argument matching tools: suppose you want to code a function that is able to compute the minimum value from an arbitrary set of arguments and an arbitrary set of object datatypes. That is, the function should accept zero or more arguments, as many as you wish to pass. Moreover, the function should work for all kinds of Python object types: numbers, strings, lists, lists of dictionaries, files, even None.

The first part of this provides a natural example of how the * feature can be put to good use: we can collect arguments into a tuple and step over each in turn with a simple for loop. The second part of this problem definition is easy: because every object type supports comparisons, we don’t have to specialize the function per type (an application of polymorphism); simply compare objects blindly, and let Python perform the correct sort of comparison.

Full credit

The following file shows three ways to code this operation, at least one of which was suggested by a student at some point along the way:

The first function takes the first argument (args is a tuple) and traverses the rest by slicing off the first (there’s no point in comparing an object to itself, especially if it might be a large structure).

The second version lets Python pick off the first and rest of the arguments automatically, and so avoids an index and a slice.

The third converts from tuple to list with the built-in list call, and employs the list sort method.

Because the sort method is so quick, this third scheme is usually the fastest when the three are timed. File mins.py contains the solution code:

def min1(*args):
    res = args[0]
    for arg in args[1:]:
        if arg < res:
            res = arg
    return res

def min2(first, *rest):
    for arg in rest:
        if arg < first:
            first = arg
    return first

def min3(*args):
    tmp = list(args)
    tmp.sort( )
    return tmp[0]

print min1(3,4,1,2)
print min2("bb", "aa")
print min3([2,2], [1,1], [3,3])

Each of the three versions returns the correct minimum of its arguments when we run this file. Try typing a few calls interactively to experiment with these on your own.

C:\Python22>python mins.py
1
aa
[1, 1]

Notice that none of these three variants tests for the case where no arguments are passed in. They could, but there’s no point in doing so here: in all three, Python will automatically raise an exception if no arguments are passed in. The first raises an exception when we try to fetch item 0; the second, when Python detects an argument list mismatch; and the third, when we try to return item 0 at the end.

This is exactly what we want to happen, though—because these functions support any data type, there is no valid sentinel value that we could pass back to designate an error. There are exceptions to this rule (e.g., if you have to run expensive actions before you reach the error); but in general, it’s better to assume that arguments will work in your functions’ code, and let Python raise errors for you when they do not.
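A quick way to confirm which exception each version raises is to call it with nothing at all. This sketch repeats the three functions from mins.py and adds a hypothetical helper, error_on_empty_call, that reports the name of whatever exception escapes; the except ... as form it uses needs Python 2.6 or later:

```python
def min1(*args):
    res = args[0]                 # IndexError if args is empty
    for arg in args[1:]:
        if arg < res:
            res = arg
    return res

def min2(first, *rest):           # TypeError if "first" gets no value
    for arg in rest:
        if arg < first:
            first = arg
    return first

def min3(*args):
    tmp = list(args)
    tmp.sort()
    return tmp[0]                 # IndexError if the list is empty

def error_on_empty_call(function):
    # Call with no arguments and report which exception, if any, escapes
    try:
        function()
    except Exception as exc:
        return type(exc).__name__
    return None
```

Here error_on_empty_call(min1) and error_on_empty_call(min3) both report 'IndexError', while error_on_empty_call(min2) reports 'TypeError'.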

Bonus points

Students and readers can get bonus points here for changing these functions to compute maximum, rather than minimum, values. Alas, this one is too easy: the first two versions only require changing < to >, and the third only requires that we return tmp[-1] instead of tmp[0]. For extra points, be sure to set the function name to “max” as well (though this part is strictly optional).
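Applying the bonus-point changes gives something like the following sketch; the names max1, max2, and max3 are just one choice, and the comments mark the only lines that differ from mins.py:

```python
def max1(*args):
    res = args[0]
    for arg in args[1:]:
        if arg > res:          # Was: arg < res
            res = arg
    return res

def max2(first, *rest):
    for arg in rest:
        if arg > first:        # Was: arg < first
            first = arg
    return first

def max3(*args):
    tmp = list(args)
    tmp.sort()
    return tmp[-1]             # Was: tmp[0]
```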

It is possible to generalize a single function like this to compute either a minimum or a maximum, either by evaluating comparison expression strings with the eval built-in function, which runs a dynamically constructed string of code (see the library manual), or by passing in an arbitrary comparison function. File minmax.py shows how to implement the latter scheme:

def minmax(test, *args):
    res = args[0]
    for arg in args[1:]:
        if test(arg, res):
            res = arg
    return res

def lessthan(x, y): return x < y        # See also: lambda
def grtrthan(x, y): return x > y

print minmax(lessthan, 4, 2, 1, 5, 6, 3)
print minmax(grtrthan, 4, 2, 1, 5, 6, 3)

% python minmax.py
1
6

Functions are another kind of object that can be passed into another function like this. To make this a max (or other), we simply pass in the right sort of test function.
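The comment on lessthan hints at lambda, which builds a test function inline without a separate def. The sketch below assumes a local copy of minmax and also passes operator.gt from the standard operator module, plus a test (used for the illustrative name shortest) that compares lengths rather than values, to show the “or other” case:

```python
import operator

def minmax(test, *args):
    # Keep whichever argument the test function prefers
    res = args[0]
    for arg in args[1:]:
        if test(arg, res):
            res = arg
    return res

smallest = minmax(lambda x, y: x < y, 4, 2, 1, 5, 6, 3)    # Inline test function
largest = minmax(operator.gt, 4, 2, 1, 5, 6, 3)            # Standard-library comparison
shortest = minmax(lambda x, y: len(x) < len(y), "spam", "ni", "eggs")
```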

The punch line

Of course, this is just a coding exercise. There’s really no reason to code either min or max functions, because both are built-ins in Python! The built-in versions work almost exactly like ours, but are coded in C for optimal speed.
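For comparison, here are the built-ins applied to data like that used with mins.py. Besides several separate arguments, they also accept a single sequence whose items are compared. The sketch keeps each call to values of one type, because newer Pythons (3.x) no longer order some mixed types, such as numbers and strings, against each other:

```python
# Built-in min and max accept several separate arguments...
smallest = min(3, 4, 1, 2)
largest = max("bb", "aa")

# ...or a single sequence, whose items are compared with each other
lowest_pair = min([[2, 2], [1, 1], [3, 3]])
```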

A More Useful Example: General Set Functions

Here’s a more useful example of special argument-matching modes at work. Earlier in the chapter, we wrote a function that returned the intersection of two sequences (it picked out items that appeared in both). Here is a version that intersects an arbitrary number of sequences (one or more) by using the varargs matching form *args to collect all arguments passed. Because the arguments come in as a tuple, we can process them in a simple for loop. Just for fun, we’ve also coded a union function that accepts an arbitrary number of arguments; it collects items that appear in any of the operands:

def intersect(*args):
    res = [ ]
    for x in args[0]:                  # Scan first sequence
        for other in args[1:]:         # For all other args
            if x not in other: break   # Item in each one?
        else:                          # No:  break out of loop
            res.append(x)              # Yes: add items to end
    return res

def union(*args):
    res = [ ]
    for seq in args:                   # For all args
        for x in seq:                  # For all nodes
            if not x in res:
                res.append(x)          # Add new items to result
    return res

Since these are tools worth reusing (and are too big to retype interactively), we’ve stored the functions in a module file called inter2.py (more on modules in Part V). In both functions, the arguments passed in at the call come in as the args tuple. As in the original intersect, both work on any kind of sequence. Here they are processing strings, mixed types, and more than two sequences:

% python
>>> from inter2 import intersect, union
>>> s1, s2, s3 = "SPAM", "SCAM", "SLAM"

>>> intersect(s1, s2), union(s1, s2)           # Two operands
(['S', 'A', 'M'], ['S', 'P', 'A', 'M', 'C'])

>>> intersect([1,2,3], (1,4))                  # Mixed types
[1]

>>> intersect(s1, s2, s3)                      # Three operands
['S', 'A', 'M']

>>> union(s1, s2, s3)
['S', 'P', 'A', 'M', 'C', 'L']
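Since intersect and union accept one or more sequences, it’s worth probing the edges. This sketch repeats the two functions so it runs without inter2.py, and shows that a single operand works, that intersect keeps duplicates found in its first operand while union drops them, that union with no arguments simply returns an empty list, and that intersect with no arguments fails when it fetches args[0]:

```python
def intersect(*args):
    res = []
    for x in args[0]:                  # Scan first sequence
        for other in args[1:]:         # For all other args
            if x not in other: break   # Missing from one: skip x
        else:                          # No break: x is in every other arg
            res.append(x)
    return res

def union(*args):
    res = []
    for seq in args:                   # For all args
        for x in seq:                  # For all nodes
            if x not in res:
                res.append(x)          # Add new items to result
    return res

one = intersect("SPAM")                # One operand: args[1:] is empty
dup = intersect("SPAMS", "SCAM")       # Second "S" of the first operand is kept
merged = union("SPAMS")                # union drops the duplicate "S"

try:
    intersect()                        # args[0] fails on an empty tuple
    empty_intersect_error = False
except IndexError:
    empty_intersect_error = True
```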

Argument Matching: The Gritty Details

If you choose to use and combine the special argument matching modes, Python will ask you to follow two ordering rules:

In a call, all keyword arguments must appear after all non-keyword arguments.

In a function header, the *name must appear after normal arguments and defaults, and **name must appear last.

Moreover, Python internally carries out the following steps to match arguments before assignment:

Assign non-keyword arguments by position.

Assign keyword arguments by matching names.

Assign extra non-keyword arguments to *name tuple.

Assign extra keyword arguments to **name dictionary.

Assign default values to unassigned arguments in header.
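As a sketch of the five matching steps above, the hypothetical function f below uses every header form in the required order and returns what each name received. The last part uses the compile built-in to check the two ordering rules without running anything; each misordered snippet is rejected with a SyntaxError:

```python
def f(a, b=2, *pargs, **kargs):
    # Return every binding so the matching steps can be inspected
    return a, b, pargs, kargs

# Step 1: 1 and 3 fill a and b by position
# Step 3: the extra positionals 4 and 5 go to *pargs
# Step 4: x=6 matches no header name, so it goes to **kargs
trace1 = f(1, 3, 4, 5, x=6)

# Step 2: b is matched by keyword; there are no extras to collect
trace2 = f(1, b=7)

# Step 2 matches a by keyword, step 4 collects y, step 5 gives b its default
trace3 = f(a=1, y=8)

def is_syntax_error(source, mode):
    # compile() checks the ordering rules without running anything
    try:
        compile(source, "<sketch>", mode)
    except SyntaxError:
        return True
    return False

keyword_before_positional = is_syntax_error("f(a=1, 2)", "eval")
starstar_not_last = is_syntax_error("def g(**kargs, a): pass", "exec")
```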

This is as complicated as it looks, but tracing Python’s matching algorithm helps to understand some cases, especially when modes are mixed. We’ll postpone additional examples of these special matching modes until we do the exercises at the end of Part IV.

As you can see, advanced argument matching modes can be complex. They are also entirely optional; you can get by with just simple positional matching, and it’s probably a good idea to do so if you’re just starting out. However, because some Python tools make use of them, they’re important to know in general.

[1] Code typed at the interactive command line is really entered into a built-in module called __main__, so interactively created names live in a module too, and thus follow the normal scope rules. There’s more about modules in Part V.

[2] The scope lookup rule was known as the “LGB” rule in the first edition of this book. The enclosing def layer was added later in Python, to obviate the task of passing in enclosing scope names explicitly (something usually of marginal interest to Python beginners).