Hour 24. Understanding Threads, Concurrency, and Parallelism

What You’ll Learn in This Hour

• Understanding threads and threading

• Concurrency and synchronization

• Understanding the Task Parallel Library

• Working with Parallel LINQ (PLINQ)

So far, all the applications you have written, and most software that exists today, were designed for single-threaded execution. This is mainly because the programming model of single-threaded execution reduces complexity and is easier to code. However, as processor technology continues to evolve from single-core to multicore architectures, it is more common for applications to begin taking advantage of the benefits of multiple threads and multiple cores. Unfortunately, using multiple threads and cores brings with it an entirely new set of problems and complexity. The .NET Framework, through the parallel computing platform, simplifies the task of writing applications that can take advantage of multiple threads and cores. This platform forms the basis of the multithreading capabilities provided by the .NET Framework, such as the managed thread pool, and includes parallel implementations of the common loop instructions, LINQ to Objects, and new thread-safe collections.

In this hour, you learn the basics of writing multithreaded applications and how the parallel computing platform enables you to write efficient and scalable code that takes advantage of multiple processors in a natural and simple way.

Understanding Threads and Threading

The Windows operating system (and most modern operating systems) separates different running applications into processes; a process can have one or more threads executing inside it. Threads form the basic unit of work to which the operating system can allocate processor time. A thread maintains its own exception handlers, a scheduling priority, and a way to save its context until it is scheduled.

The Windows operating system supports a threading strategy called preemptive multitasking, which creates the effect of simultaneous execution of multiple threads from multiple processes. To do this, the operating system divides the available processor time across each of the threads that need it, sequentially allocating each thread a slice of that time. When a thread’s time slice elapses, it is suspended, and another thread resumes running. When this transfer, known as a context switch, occurs, the context of the preempted thread is saved so that when it resumes, it can continue execution with the same context. On a multiprocessor system, the operating system can take advantage of having multiple processors and schedule more threads to execute more efficiently, but the basic strategy remains the same.

The .NET Framework further expands processes into application domains (represented through the AppDomain class), which are lightweight managed subprocesses. A single process might have multiple application domains, and each application domain might have one or more managed threads (represented by the Thread class). Managed threads are free to move between application domains in the same process, which means you might have one thread moving among several application domains or multiple threads executing in a single application domain.

Using multiple threads is the most powerful technique available to increase the responsiveness of your application by allowing it to process data and respond to user input at almost the same time. For example, you can use multiple threads to do the following:

• Communicate to a web server or database.

• Perform long-running or complex operations.

• Allow the user interface to remain responsive while performing other tasks in the background.

An application written this way could also exhibit dramatic performance improvements, without requiring modification, when run on a computer with multiple processors.

There is, however, a trade-off. Threading consumes operating system resources to store the context information required by processes, application domains, and threads. The more threads you create, the more time the processor must spend keeping track of those threads. Controlling code execution and knowing when threads should be destroyed can be complex and can be a source of frequent bugs.

Concurrency and Synchronization

A simple definition for concurrency is simultaneously performing multiple tasks that can potentially interact with each other. Because of this interaction, it is possible for multiple threads to access the same resource simultaneously, which can lead to problems such as deadlocking and starvation.

A deadlock refers to the condition when two or more threads are waiting for each other to release a resource (or more than two threads are waiting for resources in a circular chain). Starvation, similar to a deadlock, is the condition when one or more threads are perpetually denied access to a resource.

Thread safety refers to protecting resources from concurrent access by multiple threads. A class whose members are protected is called thread-safe.

Because of these potential concurrency problems, when multiple threads can access the same resource, it is essential that those calls be synchronized. This prevents one thread from being interrupted while it is accessing that resource. The common language runtime (CLR) provides several different synchronization primitives that enable you to control thread interactions. Although many of the synchronization primitives share characteristics, they can be loosely divided into the following three categories:

• Locks

• Signals

• Interlocked operations

Working with Locks

What would happen if multiple threads attempted to access the same resource simultaneously? Imagine this resource is an instance of a Stack<int>. Without any type of protection, multiple threads could manipulate the stack at the same time. If one thread attempts to peek at the top value at the same time another thread is pushing a new value, what is the result of the peek operation?

Locks protect a resource by giving control to one thread at a time. Locks are generally exclusive, although they need not be. Nonexclusive locks are often useful to allow a limited number of threads access to a resource. When a thread requests access to a resource that is locked, it goes to sleep (commonly referred to as blocking) until the lock becomes available.

Exclusive locks, most easily accomplished using the lock statement, control access to a block of code, commonly called a critical section. The lock statement is best used to protect small blocks of code that do not span more than a single method. The syntax of the lock statement is as follows:

lock ( expression )
    embedded-statement

The expression of a lock statement must always be a reference type value.

Caution: Lock Expressions to Avoid

You should not lock on a public type, using lock(typeof(PublicType)), or instances of a type, using lock(this). If outside code also attempts to lock on the same public type or instance, it could create a deadlock.

Locking on string literals, using lock("typeName"), is also problematic because of the string interning performed by the CLR. Because a single instance of each literal is shared across the entire application, a lock on a string literal contends with every other piece of code that locks on the same literal, even in unrelated components.

The best practice is to define a read-only private or private static object on which to lock.

Listing 24.1 shows an example of using locks to create a thread-safe increment and decrement operation.

Listing 24.1. The lock Statement

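public class ThreadSafeClass
{
    private int counter;

    // Private lock object, so outside code cannot lock on it.
    private static readonly object syncLock = new object();

    // Returns the value the counter held before the increment.
    public int Increment()
    {
        lock (syncLock)
        {
            return this.counter++;
        }
    }

    // Returns the value the counter held before the decrement.
    public int Decrement()
    {
        lock (syncLock)
        {
            return this.counter--;
        }
    }
}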

The Monitor class also protects a resource through the Enter, TryEnter, and Exit methods, and can be used with the lock statement to provide additional functionality.

The Enter method enables a single thread access to the protected resource at a time. If you want the blocked thread to give up after a specified interval, you can use the TryEnter method instead. Using TryEnter can help detect and avoid potential deadlocks.
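
As a minimal sketch of the TryEnter pattern just described, the following class gives up if the lock cannot be acquired within a timeout. The names TimedCounter and TryIncrement, and the choice of timeout, are illustrative assumptions rather than part of the listings in this hour.

```csharp
using System;
using System.Threading;

public class TimedCounter
{
    private readonly object syncLock = new object();
    private int counter;

    // Returns true if the lock was acquired within the timeout and the
    // counter was incremented; returns false if the caller gave up.
    public bool TryIncrement(TimeSpan timeout)
    {
        bool lockTaken = false;
        try
        {
            Monitor.TryEnter(syncLock, timeout, ref lockTaken);
            if (!lockTaken)
            {
                return false; // Another thread held the lock for too long.
            }

            counter++;
            return true;
        }
        finally
        {
            // Only release a lock that was actually acquired.
            if (lockTaken)
            {
                Monitor.Exit(syncLock);
            }
        }
    }

    public int Value
    {
        get { lock (syncLock) { return counter; } }
    }
}
```

A caller might write new TimedCounter().TryIncrement(TimeSpan.FromMilliseconds(100)) and treat a false result as a sign of contention worth logging or retrying.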

Although the Monitor class is more powerful than the simple lock statement, it is prone to orphaned locks and deadlocks. In general, you should use the lock statement when possible.

The lock statement is more concise and guarantees a correct implementation of calling the Monitor methods because the compiler generates the expansion on your behalf.

The compiler expands the lock statement in the Increment method of Listing 24.1 to code equivalent to the following:

bool needsExit = false;
try
{
    System.Threading.Monitor.Enter(syncLock, ref needsExit);
    return this.counter++;
}
finally
{
    if (needsExit)
    {
        System.Threading.Monitor.Exit(syncLock);
    }
}

By making use of a try-finally block, the lock statement helps ensure that the lock will be released even if an exception is thrown.

A thread uses the Wait method from within a critical section to give up control of the resource and block until the resource is available again. The Wait method is typically used in combination with the Pulse and PulseAll methods, which enable a thread that is about to release a lock or call Wait to put one or more threads into the ready queue so that they can acquire the lock.
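
One way to picture Wait and Pulse working together is a small blocking queue, sketched below. SimpleBlockingQueue is a hypothetical class written for illustration; in production code the BlockingCollection<T> class would normally be a better choice.

```csharp
using System.Collections.Generic;
using System.Threading;

public class SimpleBlockingQueue<T>
{
    private readonly object syncLock = new object();
    private readonly Queue<T> items = new Queue<T>();

    public void Enqueue(T item)
    {
        lock (syncLock)
        {
            items.Enqueue(item);
            // Move one waiting thread (if any) to the ready queue.
            Monitor.Pulse(syncLock);
        }
    }

    public T Dequeue()
    {
        lock (syncLock)
        {
            // Wait releases the lock, blocks until pulsed, and then
            // reacquires the lock before returning. The loop rechecks
            // the condition because another consumer may have run first.
            while (items.Count == 0)
            {
                Monitor.Wait(syncLock);
            }

            return items.Dequeue();
        }
    }
}
```

A consumer thread calling Dequeue blocks until some other thread calls Enqueue.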

If you hold a lock for a short period, you might want to use a SpinLock instead of Monitor. Rather than blocking when it encounters a locked critical section, SpinLock simply spins in a loop until the lock becomes available. When used with locks held for more than a few tens of cycles, SpinLock performs just as well as Monitor but uses more CPU cycles.
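
The following sketch shows the general shape of SpinLock usage for a very short critical section. SpinLockCounter and RunDemo are illustrative names, and whether SpinLock actually beats Monitor here is something you would need to measure.

```csharp
using System.Threading;
using System.Threading.Tasks;

public class SpinLockCounter
{
    // SpinLock is a struct, so the field must not be readonly
    // and must never be copied.
    private SpinLock spinLock = new SpinLock(false);
    private int counter;

    public void Increment()
    {
        bool lockTaken = false;
        try
        {
            spinLock.Enter(ref lockTaken);
            counter++; // Keep the protected work as short as possible.
        }
        finally
        {
            if (lockTaken)
            {
                spinLock.Exit();
            }
        }
    }

    public int Value
    {
        get { return counter; }
    }

    // Increments a shared counter from a parallel loop and returns the total.
    public static int RunDemo(int iterations)
    {
        var c = new SpinLockCounter();
        Parallel.For(0, iterations, i => c.Increment());
        return c.Value;
    }
}
```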

Using Signals

If you need to allow a thread to communicate an event to another, you cannot use locks. Instead you need to use synchronization events, or signals, which are objects having either a signaled or unsignaled state. Threads can be suspended by waiting on an unsignaled synchronization event and can be activated by signaling the event.

There are two primary types of synchronization events. Automatic reset events, implemented by the AutoResetEvent class, behave like amusement park turnstiles and enable a single thread through the turnstile each time it is signaled. These events automatically change from signaled to unsignaled each time a thread is activated. Manual reset events, implemented by the ManualResetEvent and ManualResetEventSlim classes, on the other hand, behave more like a gate; when signaled, it is opened and remains open until it is closed again.

By calling one of the Wait methods, such as WaitOne, WaitAny, or WaitAll, the thread waits on an event to be signaled. WaitOne causes the thread to wait until a single event is signaled, whereas WaitAny causes it to wait until one or more of the indicated events are signaled. On the other hand, WaitAll causes the thread to wait until all the indicated events have been signaled. To signal an event, call the Set method. The Reset method causes the event to revert to an unsignaled state.
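
As a rough illustration of Set and WaitOne, the sketch below has a worker task signal the calling thread once a value is ready. SignalDemo and its Run method are hypothetical names chosen for this example.

```csharp
using System.Threading;
using System.Threading.Tasks;

public static class SignalDemo
{
    public static string Run()
    {
        string message = null;

        // The event starts in the unsignaled state.
        using (var dataReady = new AutoResetEvent(false))
        {
            Task worker = Task.Factory.StartNew(() =>
            {
                message = "payload";
                dataReady.Set(); // Signal the waiting thread.
            });

            dataReady.WaitOne(); // Block until the worker signals.
            worker.Wait();       // Let the worker finish before the event is disposed.
            return message;
        }
    }
}
```

Because an AutoResetEvent returns to the unsignaled state after releasing one thread, a second call to WaitOne would block until Set is called again.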

Interlocked Operations

Interlocked operations are provided through the Interlocked class, which contains static methods that can be used to synchronize access to a variable shared by multiple threads. Interlocked operations are atomic, meaning the entire operation is one unit of work that cannot be interrupted, and are native to the Windows operating system, so they are extremely fast.

Interlocked operations, when used with volatile memory guarantees (provided through the volatile keyword on a field), can create applications that provide powerful nonblocking concurrency; however, they do require more sophisticated, low-level programming. For most purposes, simple locks are the better choice.
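
For a simple shared counter, an interlocked operation can replace a lock entirely. The sketch below assumes a hypothetical InterlockedDemo class; Interlocked.Increment itself is part of System.Threading.

```csharp
using System.Threading;
using System.Threading.Tasks;

public static class InterlockedDemo
{
    public static int CountInParallel(int iterations)
    {
        int total = 0;

        Parallel.For(0, iterations, i =>
        {
            // Atomic read-modify-write; no lock is required.
            Interlocked.Increment(ref total);
        });

        return total;
    }
}
```

Replacing the Interlocked.Increment call with total++ would make the result unpredictable, because the increment would no longer be atomic.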

Other Synchronization Primitives

Although the lock statement and Monitor and SpinLock classes are the most common synchronization primitives, the .NET Framework provides several other synchronization primitives. A detailed explanation of the remaining primitives is beyond the scope of this book, but the following sections introduce the basic concepts of each one.

Mutex

If you need to synchronize threads in different processes or across application domains, you can use a Mutex, which is an abbreviated form of the term “mutually exclusive.” A global mutex is called a named mutex because it must be given a unique name so that multiple processes can access the same object.
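
A common use of a named mutex is making sure that only one process performs some piece of work at a time. The following is a sketch only; SingleInstanceGuard and the mutex name are made up for illustration, and you would choose a name unique to your own application.

```csharp
using System;
using System.Threading;

public static class SingleInstanceGuard
{
    // Hypothetical name; every cooperating process must use the same one.
    private const string MutexName = "Hour24.Demo.SingleInstance";

    // Runs the work only if no other process currently owns the mutex.
    public static bool TryRunExclusive(Action work)
    {
        bool createdNew;
        using (var mutex = new Mutex(true, MutexName, out createdNew))
        {
            if (!createdNew)
            {
                return false; // The mutex already existed; we do not own it.
            }

            try
            {
                work();
                return true;
            }
            finally
            {
                mutex.ReleaseMutex();
            }
        }
    }
}
```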

Reader-Writer Locks

A common multithreaded scenario is one in which a particular thread, typically called the writer thread, changes data and must have exclusive access to the resource. As long as the writer thread is not active, any number of reader threads can access the resource. This scenario can be easily accomplished using the ReaderWriterLockSlim class, which provides the EnterReadLock and EnterWriteLock methods to acquire the lock and the ExitReadLock and ExitWriteLock methods to release it.
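
A minimal sketch of the reader-writer pattern follows, using the EnterReadLock, ExitReadLock, EnterWriteLock, and ExitWriteLock methods of ReaderWriterLockSlim. The SettingsCache class is a hypothetical example, not something defined elsewhere in this book.

```csharp
using System.Collections.Generic;
using System.Threading;

public class SettingsCache
{
    private readonly ReaderWriterLockSlim rwLock = new ReaderWriterLockSlim();
    private readonly Dictionary<string, string> values =
        new Dictionary<string, string>();

    // Any number of readers can hold the read lock at the same time.
    public bool TryGet(string key, out string value)
    {
        rwLock.EnterReadLock();
        try
        {
            return values.TryGetValue(key, out value);
        }
        finally
        {
            rwLock.ExitReadLock();
        }
    }

    // The write lock is exclusive: it waits for readers to leave
    // and blocks new readers until it is released.
    public void Set(string key, string value)
    {
        rwLock.EnterWriteLock();
        try
        {
            values[key] = value;
        }
        finally
        {
            rwLock.ExitWriteLock();
        }
    }
}
```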

Semaphore

A semaphore enables only a specified number of threads access to a resource. When that limit is reached, additional threads requesting access wait until a thread releases the semaphore. Like a mutex, a semaphore can be either global or local and can be used across application domains. Unlike Monitor, Mutex, and ReaderWriterLockSlim, a semaphore can also be used when one thread acquires the semaphore and another thread releases it.
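
To see the limit in action, the sketch below starts several workers but lets only a fixed number into the guarded region at once, and reports the highest concurrency it observed. SemaphoreDemo, its parameters, and the 20-millisecond sleep are assumptions made for the example.

```csharp
using System.Threading;
using System.Threading.Tasks;

public static class SemaphoreDemo
{
    // Returns the highest number of workers seen inside the guarded region.
    public static int Run(int workers, int maxConcurrent)
    {
        int current = 0;
        int peak = 0;
        object peakLock = new object();

        using (var gate = new Semaphore(maxConcurrent, maxConcurrent))
        {
            var tasks = new Task[workers];
            for (int i = 0; i < workers; i++)
            {
                tasks[i] = Task.Factory.StartNew(() =>
                {
                    gate.WaitOne(); // Blocks when the limit has been reached.
                    try
                    {
                        int now = Interlocked.Increment(ref current);
                        lock (peakLock)
                        {
                            if (now > peak) peak = now;
                        }

                        Thread.Sleep(20); // Simulated work.
                        Interlocked.Decrement(ref current);
                    }
                    finally
                    {
                        gate.Release();
                    }
                });
            }

            Task.WaitAll(tasks);
        }

        return peak;
    }
}
```

Calling SemaphoreDemo.Run(8, 3) should never report a peak above 3, although the exact value depends on scheduling.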

Understanding the Task Parallel Library

The preferred way to write multithreaded and parallel code is using the Task Parallel Library (TPL). The TPL simplifies the process of adding parallelism and concurrency to your application, allowing you to be more productive. Rather than requiring you to understand the complexities of scaling processes to most efficiently use multiple processors, the TPL handles that task for you.

Caution: Understanding Concurrency

Even though the TPL simplifies writing multithreaded and parallel applications, not all code is suited to run in parallel. It still requires you to have an understanding of basic threading concepts, such as locking and deadlocks, to use the TPL effectively.

Data Parallelism

When the same operation is performed concurrently on elements in a source collection (or array), it is referred to as data parallelism. Data parallel operations partition the source collection so that multiple threads can operate on different segments concurrently. The System.Threading.Tasks.Parallel class supports data parallel operations through the For and ForEach methods, which provide method-based parallel implementations of for and foreach loops, respectively.

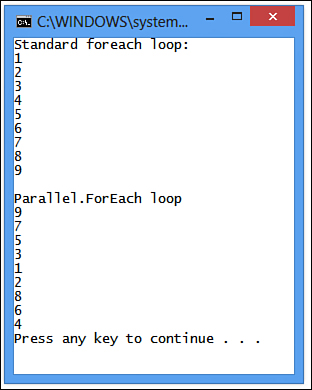

Listing 24.2 shows an example of using a traditional foreach statement and a Parallel.ForEach statement that will print out the numbers 1 through 9. The output is shown in Figure 24.1.

Figure 24.1. Output of a standard foreach loop and a Parallel.ForEach loop.

Listing 24.2. Comparison of foreach and Parallel.ForEach

using System;

class Program
{
    static void Main(string[] args)
    {
        int[] source = new int[] { 1, 2, 3, 4, 5, 6, 7, 8, 9 };

        Console.WriteLine("Standard foreach loop:");
        foreach (var item in source)
        {
            Console.WriteLine(item);
        }

        Console.WriteLine();
        Console.WriteLine("Parallel.ForEach loop:");

        // Items can be processed in any order and on different threads.
        System.Threading.Tasks.Parallel.ForEach(source, item => Process(item));
    }

    private static void Process(int item)
    {
        // Simulate one second of work per item.
        System.Threading.Thread.Sleep(1000);
        Console.WriteLine(item);
    }
}

Using Parallel.For or Parallel.ForEach, you write the loop logic in much the same way as you would write a traditional for or foreach loop. The TPL handles the low-level work of creating threads and queuing work items.

Because Parallel.For and Parallel.ForEach are methods, you can’t use the break and continue statements to control loop execution. To support these features, several overloads to both methods enable you to stop or break loop execution, among other things. These overloads use helper types to enable this functionality, including ParallelLoopState, ParallelOptions, ParallelLoopResult, CancellationToken, and CancellationTokenSource.
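
As one example of these overloads, the sketch below uses ParallelLoopState.Break and ParallelLoopResult.LowestBreakIteration to find the first negative value in an array. ParallelBreakDemo and IndexOfFirstNegative are illustrative names.

```csharp
using System.Threading.Tasks;

public static class ParallelBreakDemo
{
    // Returns the index of the first negative value, or -1 if there is none.
    public static long IndexOfFirstNegative(int[] values)
    {
        ParallelLoopResult result = Parallel.For(0, values.Length, (i, state) =>
        {
            if (values[i] < 0)
            {
                // Break lets iterations below i still run, so a lower
                // negative index will also be found and reported.
                state.Break();
            }
        });

        // LowestBreakIteration is null when Break was never called.
        return result.LowestBreakIteration ?? -1;
    }
}
```

Break differs from Stop in that it still runs the iterations before the break point, which is what makes it the closer analogue of a sequential break statement.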

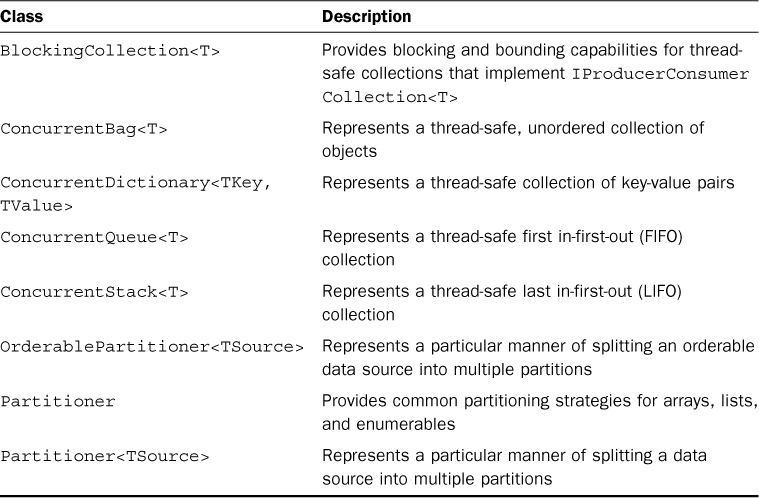

Thread-Safe Collections

Whenever multiple threads need to access a collection, that collection must be made thread-safe. The collections provided in the System.Collections.Concurrent namespace are specially designed thread-safe collection classes that should be used in favor of their generic counterparts.

Caution: Thread-Safe Collections

Although these collection classes are thread-safe, this simply means that they won’t produce undefined results when used from multiple threads. However, you still need to pay attention to locking and thread-safety concerns.

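For example, using ConcurrentStack<T> (which exposes TryPop rather than Pop), you have no guarantee that the following code would actually retrieve an item, because another thread can empty the stack between the IsEmpty check and the TryPop call:

if (!stack.IsEmpty)
{
    stack.TryPop(out item);
}

Even in this example, if the check and the pop must behave as a single operation, you still need a lock to make sure that no other thread can access the stack between the IsEmpty check and the TryPop operation, as shown here. Every other piece of code that modifies the stack must take the same lock for this to be effective:

lock (syncLock)
{
    if (!stack.IsEmpty)
    {
        stack.TryPop(out item);
    }
}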
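
Where the check and the removal can be expressed as a single call, a lock is often unnecessary. As a sketch under that assumption, the following code drains a ConcurrentStack<int> from several workers, relying only on the return value of TryPop; ConcurrentStackDemo and the worker count of 4 are made up for the example.

```csharp
using System.Collections.Concurrent;
using System.Threading;
using System.Threading.Tasks;

public static class ConcurrentStackDemo
{
    // Pushes itemCount items and returns how many were popped in total.
    public static int DrainInParallel(int itemCount)
    {
        var stack = new ConcurrentStack<int>();
        for (int i = 0; i < itemCount; i++)
        {
            stack.Push(i);
        }

        int popped = 0;
        Parallel.For(0, 4, worker =>
        {
            int item;
            // TryPop checks and removes atomically, so each item
            // is handed to exactly one worker.
            while (stack.TryPop(out item))
            {
                Interlocked.Increment(ref popped);
            }
        });

        return popped;
    }
}
```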

The concurrent collection classes are shown in Table 24.1.

Table 24.1. Concurrent Collections

Task Parallelism

You can think of a task as representing an asynchronous operation. You can easily create and run implicit tasks using the Parallel.Invoke method, which enables you to run any number of arbitrary statements concurrently, as shown here:

Parallel.Invoke(() => DoSomeWork(), () => DoSomeOtherWork());

Parallel.Invoke accepts an array of Action delegates, each representing a single task to perform. The simplest way to create the delegates is to use lambda expressions.

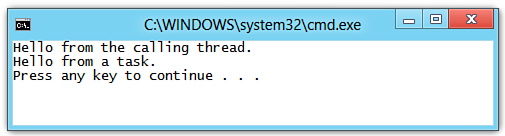

As Listing 24.3 shows, you can also explicitly create and run a task by instantiating the Task or Task<TResult> class and passing the delegate that encapsulates the code the task executes.

Listing 24.3. Explicitly Creating New Tasks

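using System;
using System.Threading.Tasks;

class Program
{
    public static void Main(string[] args)
    {
        var task = new Task(() => Console.WriteLine("Hello from a task."));
        task.Start();
        Console.WriteLine("Hello from the calling thread.");

        // Wait so that the process does not exit before the task has run.
        task.Wait();
    }
}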

Figure 24.2 shows the output of the simple console application from Listing 24.3.

Figure 24.2. Output of creating tasks.

If the task creation and scheduling do not need to be separated, the preferred method is to use the Task.Factory.StartNew method, as shown in Listing 24.4.

Listing 24.4. Creating Tasks Using Task.Factory

Task<double>[] tasks = new Task<double>[2]
{
    Task.Factory.StartNew(() => Method1()),
    Task.Factory.StartNew(() => Method2())
};

Waiting on Tasks

To wait for a task to complete, the Task class provides the Wait, WaitAll, and WaitAny methods. The Wait method enables you to wait for a single task to complete, whereas the WaitAll and WaitAny methods enable you to wait for all or any, respectively, of an array of tasks to complete.

The most common reasons for waiting on a task to complete are as follows:

• The main thread depends on the result of the work performed by the task.

• You need to handle exceptions that might be thrown from the task. Any exceptions raised by a task will be thrown by a Wait method, even if that method was called after the task completed.

Listing 24.5 shows a simple example of waiting for an array of tasks to complete using the Task.WaitAll method.

Listing 24.5. Waiting on Tasks

Task[] tasks = new Task[2]
{
    Task.Factory.StartNew(() => Method1()),
    Task.Factory.StartNew(() => Method2())
};

Task.WaitAll(tasks);
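
WaitAny follows the same pattern but returns the index of a task that has completed. The sketch below uses two stand-in lambdas in place of Method1 and Method2 (the sleep and the return values are arbitrary assumptions) and reads the Result property of whichever task WaitAny reports.

```csharp
using System.Threading;
using System.Threading.Tasks;

public static class WaitAnyDemo
{
    public static double FirstResult()
    {
        Task<double>[] tasks = new Task<double>[2]
        {
            Task.Factory.StartNew(() => { Thread.Sleep(500); return 1.0; }),
            Task.Factory.StartNew(() => 2.0)
        };

        // Returns the index of a completed task in the array.
        int first = Task.WaitAny(tasks);
        double result = tasks[first].Result;

        // Wait for the remaining task so that any exception is observed.
        Task.WaitAll(tasks);
        return result;
    }
}
```

Which task finishes first depends on scheduling, so the code should not assume a particular index.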

Handling Exceptions

When a task raises exceptions, they are wrapped in an AggregateException and propagated back to the thread that joins with the task. The calling code (that is, the code that waits on the task or attempts to access the task’s Result property) handles the exceptions by placing the call to Wait, WaitAll, or WaitAny, or the access to the Result property, inside a try-catch block. Listing 24.6 shows one way in which you might handle exceptions in a task.

Listing 24.6. Handling Exceptions in a Task

var task1 = Task.Factory.StartNew(() =>
{
    throw new InvalidOperationException();
});

try
{
    task1.Wait();
}
catch (AggregateException ae)
{
    foreach (var e in ae.InnerExceptions)
    {
        if (e is InvalidOperationException)
        {
            Console.WriteLine(e.Message);
        }
        else
        {
            // Rethrows the AggregateException that was caught.
            throw;
        }
    }
}

If you don’t use the TPL for your multithreaded code, you should handle exceptions in your worker threads. In most cases, exceptions occurring within a worker thread that are not handled can cause the application to terminate. However, if a ThreadAbortException or an AppDomainUnloadedException is unhandled in a worker thread, only that thread terminates.

Note: AggregateException and InnerExceptions

It is recommended that you catch an AggregateException and enumerate the InnerExceptions property to examine all the original exceptions thrown. Not doing so is equivalent to catching the base Exception type in nonparallel code.
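
The AggregateException class also provides the Flatten and Handle methods, which can shorten the enumeration loop. The following sketch (HandleDemo is a hypothetical class, and both tasks throw deliberately) counts the exceptions it handles; any exception for which the callback returns false is rethrown by Handle in a new AggregateException.

```csharp
using System;
using System.Threading.Tasks;

public static class HandleDemo
{
    public static int CountHandled()
    {
        int handled = 0;

        Task[] tasks =
        {
            Task.Factory.StartNew(() => { throw new InvalidOperationException("first"); }),
            Task.Factory.StartNew(() => { throw new InvalidOperationException("second"); })
        };

        try
        {
            Task.WaitAll(tasks);
        }
        catch (AggregateException ae)
        {
            // Flatten unwraps nested AggregateException instances;
            // Handle invokes the callback once per inner exception.
            ae.Flatten().Handle(e =>
            {
                if (e is InvalidOperationException)
                {
                    handled++;
                    return true; // Mark this exception as handled.
                }

                return false; // Unhandled exceptions are rethrown.
            });
        }

        return handled;
    }
}
```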

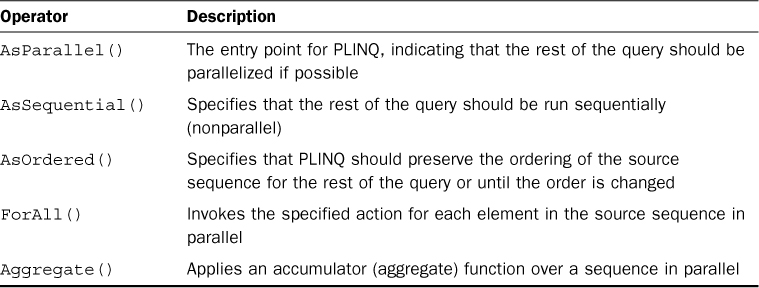

Working with Parallel LINQ (PLINQ)

Parallel LINQ is a parallel implementation of LINQ to Objects with additional operators for parallel operations. By utilizing the Task Parallel Library, PLINQ queries can scale in the degree of concurrency and can increase the speed of LINQ to Objects queries by more efficiently using the available processor cores.

The System.Linq.ParallelEnumerable class provides almost all the functionality for PLINQ. Table 24.2 shows the common PLINQ operators.

Table 24.2. Common ParallelEnumerable Operators

To create a PLINQ query, you invoke the AsParallel() extension method on the data source, as shown in Listing 24.7.

Listing 24.7. A Simple PLINQ Query

var source = Enumerable.Range(1, 10000);

var evenNums = from num in source.AsParallel()
               where Compute(num) > 0
               select num;
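
PLINQ queries can also be written in method syntax and tuned with operators such as AsOrdered and WithDegreeOfParallelism. The sketch below is illustrative only: PlinqDemo is a made-up class, and the degree of parallelism of 2 is an arbitrary choice.

```csharp
using System.Linq;

public static class PlinqDemo
{
    // Returns the squares of the even numbers from 1 to count, in source order.
    public static int[] EvenSquares(int count)
    {
        return Enumerable.Range(1, count)
            .AsParallel()
            .AsOrdered()                 // Preserve the source ordering in the results.
            .WithDegreeOfParallelism(2)  // Use at most two concurrent tasks.
            .Where(n => n % 2 == 0)
            .Select(n => n * n)
            .ToArray();
    }
}
```

Without AsOrdered, the same query could return its results in any order, which is usually faster when ordering does not matter.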

Potential Pitfalls

At this point, you might be tempted to take full advantage of the TPL and parallelize all your for loops, foreach loops, and LINQ to Objects queries; but don’t. Parallelizing query and loop execution introduces complexity that can lead to problems that aren’t common (or even possible) in sequential code. As a result, you want to carefully evaluate each loop and query to ensure that it is a good candidate for parallelization.

When deciding whether to use parallelization, you should keep the following guidelines in mind:

• Don’t assume parallel is always faster. It is recommended that you always measure actual performance results before deciding to use PLINQ. A basic rule of thumb is that queries having few source elements and fast user delegates are unlikely to speed up.

• Don’t overparallelize the query by making too many data sources parallel. This is most common in nested queries, where it is typically best to parallelize only the outer data source.

• Don’t make calls to non-thread-safe methods and limit calls to thread-safe methods. Calling non-thread-safe methods can lead to data corruption, which might or might not go undetected. Calling many thread-safe methods (including static thread-safe methods) can lead to a significant slowdown in the query. (This includes calls to Console.WriteLine. The examples use this method for demonstration purposes, but you shouldn’t use it in your own PLINQ queries.)

• Do use the PLINQ ForAll operator when possible instead of foreach or Parallel.ForEach, which must merge the query results back into one thread to be accessed serially by the enumerator.

• Don’t assume that iterations of ForAll, Parallel.ForEach, and Parallel.For will actually execute in parallel. As a result, you shouldn’t write code that depends on parallel execution of iterations for correctness.

• Don’t write to shared memory locations, such as static variables or class fields. Although this is common in sequential code, doing so from multiple threads can lead to race conditions. You can help prevent this by using lock statements, but the cost of synchronization can actually hurt performance.

• Don’t execute parallel loops on the UI thread because doing so can make your application’s user interface nonresponsive. If the operation is complex enough to require parallelization, it should off-load that operation to be run on a background thread using either the BackgroundWorker component or by running the loop inside a task instance (commonly started by calling Task.Factory.StartNew).
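
One way to follow the shared-memory guideline without paying for a lock on every iteration is the thread-local overload of Parallel.For, sketched below. Each worker accumulates into its own local value, and the shared total is touched only once per worker. LocalStateDemo is an illustrative name.

```csharp
using System.Threading;
using System.Threading.Tasks;

public static class LocalStateDemo
{
    public static long Sum(int[] values)
    {
        long total = 0;

        Parallel.For<long>(0, values.Length,
            // Initial value of each worker's private subtotal.
            () => 0L,
            // Loop body: reads and writes only the private subtotal.
            (i, state, local) => local + values[i],
            // Runs once per worker: merge the subtotal into the shared total.
            local => Interlocked.Add(ref total, local));

        return total;
    }
}
```

As with any parallel loop, it is worth measuring; for small arrays a plain sequential loop is likely to be faster.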

Summary

Creating applications that efficiently scale to multiple processors can be quite challenging, can add complexity to your application logic, and can introduce bugs, in the form of deadlocks or race conditions, which can be difficult to find.

The Task Parallel Library in the .NET Framework provides an easy way to handle the low-level details of thread management and provides a high level of abstraction for working with tasks and queries in a parallel manner. The managed thread pool used by the .NET Framework for many tasks (such as asynchronous I/O completion, timer callbacks, System.Net socket connections, and asynchronous delegate calls) uses the task and threading capabilities provided by the Task Parallel Library.

Through the course of this book, you have learned the fundamentals of the C# programming language. From those fundamentals, you learned advanced concepts such as working with files, streams, and XML data and learned how to query databases. You then used those skills to create a Windows desktop, Windows Store, and web application. After that, you were introduced to parallel programming with the Task Parallel Library, how to interact with dynamic languages, and how to interoperate with other languages and technologies, such as the component object model (COM) and the Windows application programming interfaces (APIs).

Although you have reached the end of this book, your career as a C# developer is just beginning. I encourage you to continue learning and expanding your knowledge just as the .NET Framework and the C# programming language continue to evolve.

Q&A

Q. What is the benefit of the Task Parallel Library?

A. The Task Parallel Library simplifies the process of adding parallelism and concurrency to your application, enabling you to be more productive by focusing on your application logic rather than requiring you to understand the complexities of scaling processes to most efficiently use multiple processors.

Q. What is an application domain?

A. An application domain is a lightweight managed subprocess.

Q. What are some common reasons for using multiple threads?

A. Some common reasons for using multiple threads follow:

a. Communicate to a web server or database.

b. Perform long-running or complex operations.

c. Allow the user interface to remain responsive while performing other tasks in the background.

Q. What is concurrency?

A. Concurrency is simultaneously performing multiple tasks that can potentially interact with each other.

Q. What are locks?

A. Exclusive locks protect a resource by giving control to one thread at a time. Nonexclusive locks enable access to a limited number of threads at a time.

Q. What is the benefit of using one of the collections provided in the System.Collections.Concurrent namespace?

A. The collections provided in the System.Collections.Concurrent namespace are specially designed thread-safe collection classes and should be used in favor of their generic counterparts when writing multithreaded applications.

Q. What is Parallel LINQ (PLINQ)?

A. PLINQ is a parallel implementation of LINQ to Objects with additional operators for parallel operations.

Workshop

Quiz

1. What is a deadlock?

2. What are the three categories of synchronization primitives provided by the .NET Framework?

3. How is the lock statement in C# expanded by the compiler?

4. Why should you not lock on a string literal?

5. What are the three primary methods provided by the Parallel class?

Answers

1. A deadlock refers to the condition when two or more threads are waiting for each other to release a resource, or more than two threads are waiting for a resource in a circular chain.

2. The synchronization primitives provided by the .NET Framework can be loosely divided into the following categories:

a. Locks

b. Signals

c. Interlocked operations

3. The lock statement is expanded by the compiler to the following code:

bool needsExit = false;
try
{
    System.Threading.Monitor.Enter(syncLock, ref needsExit);
    return this.counter++;
}
finally
{
    if (needsExit)
    {
        System.Threading.Monitor.Exit(syncLock);
    }
}

4. Locking on string literals is problematic because of the string interning performed by the CLR. Because a single instance of each literal is shared across the entire application, a lock on a string literal contends with every other piece of code that locks on the same literal.

5. The Parallel class provides the For and ForEach methods for executing parallel loops and the Invoke method for executing tasks in parallel.

Exercises

There are no exercises for this hour.