Appendix A. Exploring the HBase system

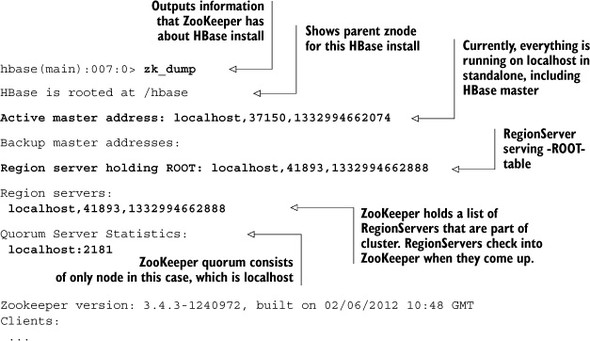

Over the course of the book, you’ve learned a bit of theory about how HBase is designed and how it distributes the load across different servers. Let’s poke around the system a little and get familiar with how these things work in practice. First, we’ll look at what ZooKeeper says about your HBase instance. When running HBase in standalone mode, ZooKeeper runs in the same JVM as the HBase Master. In chapter 9, you learned about fully distributed deployments, where ZooKeeper runs as a separate service. For now, let’s examine the commands and see what ZooKeeper has to tell you about your deployment.

A.1. Exploring ZooKeeper

Your main interface to interact directly with ZooKeeper is through the HBase shell. Launch the shell, and issue the zk_dump command.

Let’s look at a zk_dump from a fully distributed HBase instance. We’ve included the output from a running cluster and masked the server names. Look for the Master address, the RegionServer holding -ROOT-, the list of RegionServers, and the ZooKeeper quorum members:

hbase(main):030:0> zk_dump

HBase is rooted at /hbase

Master address: 01.mydomain.com:60000

Region server holding ROOT: 06.mydomain.com:60020

Region servers:

06.mydomain.com:60020

04.mydomain.com:60020

02.mydomain.com:60020

05.mydomain.com:60020

03.mydomain.com:60020

Quorum Server Statistics:

03.mydomain.com:2181

Zookeeper version: 3.3.4-cdh3u3--1, built on 01/26/2012 20:09 GMT

Clients:

...

02.mydomain.com:2181

Zookeeper version: 3.3.4-cdh3u3--1, built on 01/26/2012 20:09 GMT

Clients:

...

01.mydomain.com:2181

Zookeeper version: 3.3.4-cdh3u3--1, built on 01/26/2012 20:09 GMT

Clients:

...

If you have access to a fully distributed cluster, run the command in the shell and see what it tells you about the system. This is useful information when you’re trying to understand the state of the system: which hosts are participating in the cluster, which host is playing which role, and, most important, which host is serving the -ROOT- table. The HBase client needs all this information to perform reads and writes on your application’s behalf. Note that your application code isn’t involved in this process; the client library handles all of this for you.
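If you want to feed this information to a script, the dump is easy to pick apart. The following is a minimal sketch, assuming the output keeps the layout shown above; the function name and dictionary keys are illustrative, and other HBase versions may print the dump differently.

```python
def parse_zk_dump(text):
    """Pull the master, the -ROOT- host, and the RegionServers out of zk_dump text."""
    info = {"master": None, "root_server": None, "region_servers": []}
    in_region_servers = False
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("Master address:"):
            info["master"] = line.split(":", 1)[1].strip()
            in_region_servers = False
        elif line.startswith("Region server holding ROOT:"):
            info["root_server"] = line.split(":", 1)[1].strip()
            in_region_servers = False
        elif line == "Region servers:":
            in_region_servers = True       # following lines are host:port entries
        elif line.startswith("Quorum Server Statistics:"):
            in_region_servers = False      # quorum details are ignored in this sketch
        elif in_region_servers:
            info["region_servers"].append(line)
    return info


# A shortened copy of the dump shown above.
SAMPLE = """\
HBase is rooted at /hbase
Master address: 01.mydomain.com:60000
Region server holding ROOT: 06.mydomain.com:60020
Region servers:
 06.mydomain.com:60020
 04.mydomain.com:60020
Quorum Server Statistics:
 03.mydomain.com:2181
"""

cluster = parse_zk_dump(SAMPLE)
print(cluster["master"], cluster["root_server"], cluster["region_servers"])
```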

The client automatically handles communicating with ZooKeeper and finding the relevant RegionServer with which to interact. Let’s still examine the -ROOT- and .META. tables to get a better understanding of what information they contain and how the client uses that information.

A.2. Exploring -ROOT-

Let’s look at the standalone instance we’ve used so far for TwitBase. Here’s -ROOT- for that instance:

hbase(main):030:0> scan '-ROOT-'

ROW COLUMN+CELL

.META.,, column=info:regioninfo, timestamp=1335465653682,

1 value={NAME => '.META.,,1', STARTKEY => '', ENDKEY

=> '', ENCODED => 1028785192,}

.META.,, column=info:server, timestamp=1335465662307,

1 value=localhost:58269

.META.,, column=info:serverstartcode,

1 timestamp=1335465662307, value=1335465653436

.META.,, column=info:v, timestamp=1335465653682,

1 value=\x00\x00

1 row(s) in 5.4620 seconds

Let’s examine the contents of the -ROOT- table. The -ROOT- table stores information about the .META. table. It’s the first place a client application looks when it needs to locate data stored in HBase. In this example, there is only one row in -ROOT-, and it corresponds to the entire .META. table. Just like a user-defined table, .META. will split once it has more data to hold than can go into a single region. The difference between a user-defined table and .META. is that .META. stores information about the regions in HBase, which means it’s managed entirely by the system. In the current example, a single region in .META. can hold everything. The corresponding entry in -ROOT- has the rowkey defined by the table name and the start key for that region. Because there is only one region, the start key and end key for that region are empty. This indicates the entire key range is hosted by that region.
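To make the meaning of empty start and end keys concrete, here is a small sketch of the containment check. It assumes the usual convention that a region covers rows from its start key (inclusive) up to its end key (exclusive); the function name is illustrative and not part of the HBase API.

```python
def region_contains(start_key: bytes, end_key: bytes, row: bytes) -> bool:
    """True if row falls in [start_key, end_key); an empty key means unbounded."""
    after_start = start_key == b"" or row >= start_key
    before_end = end_key == b"" or row < end_key
    return after_start and before_end


# A region with empty start and end keys hosts every possible rowkey.
print(region_contains(b"", b"", b"any-row"))
# A bounded region includes its start key but not its end key.
print(region_contains(b"a", b"m", b"a"), region_contains(b"a", b"m", b"m"))
```

Python compares bytes objects lexicographically by unsigned byte value, which matches how rowkeys are ordered.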

A.3. Exploring .META.

The previous entry in the -ROOT- table also tells you which server the .META. region is hosted on. In this case, because it’s a standalone instance and everything is on localhost, the info:server column contains the value localhost:port. There is also a column for regioninfo that contains the name of the region, start key, end key, and encoded name. (The encoded name is used internally in the system and isn’t of any consequence to you.) The HBase client library uses all this information to locate the correct region to talk to while performing the operations in your application code. When no other table exists in the system, .META. looks like the following:
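The region names in these listings follow a visible pattern: table name, start key, a numeric ID, and (for user tables) the encoded name, as in 'users,,1335466383956.4a15eba38d58db711e1c7693581af7f1.'. The sketch below pulls those parts apart. It is inferred from the names printed in this appendix rather than taken from the HBase source, and the field names are illustrative.

```python
def parse_region_name(name: str) -> dict:
    """Split a region name of the form table,startkey,id[.encoded.] into its parts."""
    table, rest = name.split(",", 1)        # the table name ends at the first comma
    start_key, tail = rest.rsplit(",", 1)   # the start key may itself contain commas
    region_id, _, encoded = tail.partition(".")
    return {
        "table": table,
        "start_key": start_key,
        "region_id": region_id,
        "encoded": encoded.rstrip(".") or None,   # None when no encoded suffix is present
    }


print(parse_region_name("users,,1335466383956.4a15eba38d58db711e1c7693581af7f1."))
print(parse_region_name(".META.,,1"))
```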

hbase(main):030:0> scan '.META.'

ROW COLUMN+CELL

0 row(s) in 5.4180 seconds

Notice that there are no entries in the .META. table. This is because there are no user-defined tables in this HBase instance. On instantiating the users table, .META. looks like the following:

hbase(main):030:0> scan '.META.'

ROW COLUMN+CELL

users,,1335466383956.4a1 column=info:regioninfo,

5eba38d58db711e1c7693581 timestamp=1335466384006, value={NAME =>

af7f1. 'users,,1335466383956.4a15eba38d58

db711e1c7693581af7f1.', STARTKEY => '',

ENDKEY => '', ENCODED =>

4a15eba38d58db711e1c7693581af7f1,}

users,,1335466383956.4a1 column=info:server, timestamp=1335466384045,

5eba38d58db711e1c7693581 value=localhost:58269

af7f1.

users,,1335466383956.4a1 column=info:serverstartcode,

5eba38d58db711e1c7693581 timestamp=1335466384045, value=1335465653436

af7f1.

1 row(s) in 0.4540 seconds

In your current setup, that’s probably what it looks like. If you want to play around a bit, you can disable and delete the users table, examine .META., and re-create and repopulate the users table. You can do this from the HBase shell, just as you created the table: run disable 'users', then drop 'users'.

Similar to what you saw in -ROOT-, .META. contains information about the users table and other tables in your system. Information for all the tables you instantiate goes here. The structure of .META. is similar to that of -ROOT-.

Once you’ve examined the .META. table after instantiating the TwitBase users table, populate some users into the system using the LoadUsers command provided in the sample application code. When the users table outgrows a single region, that region will split. You can also split the table manually for experimentation right now:

hbase(main):030:0> split 'users'

0 row(s) in 6.1650 seconds

hbase(main):030:0> scan '.META.'

ROW COLUMN+CELL

users,,1335466383956.4a15eba column=info:regioninfo,

38d58db711e1c7693581af7f1. timestamp=1335466889942, value={NAME =>

'users,,1335466383956.4a15eba38d58db711e

1c7693581af7f1.', STARTKEY => '', ENDKEY

=> '', ENCODED =>

4a15eba38d58db711e1c7693581af7f1,

OFFLINE => true, SPLIT => true,}

users,,1335466383956.4a15eba column=info:server,

38d58db711e1c7693581af7f1. timestamp=1335466384045,

value=localhost:58269

users,,1335466383956.4a15eba column=info:serverstartcode,

38d58db711e1c7693581af7f1. timestamp=1335466384045,

value=1335465653436

users,,1335466383956.4a15eba column=info:splitA,

38d58db711e1c7693581af7f1. timestamp=1335466889942, value=

{NAME =>

'users,,1335466889926.9fd558ed44a63f016

c0a99c4cf141eb5.', STARTKEY => '',

ENDKEY => '}7

\x8E\xC3\xD1\xE3\x0F\x0D\xE9\xFE'fIK\xB7

\xD6', ENCODED =>

9fd558ed44a63f016c0a99c4cf141eb5,}

users,,1335466383956.4a15eba column=info:splitB,

38d58db711e1c7693581af7f1. timestamp=1335466889942, value={NAME =>

'users,}7\x8E\xC3\xD1\xE3\x0F

\x0D\xE9\xFE'fIK\xB7\xD6,1335466889926.a3

c3a9162eeeb8abc0358e9e31b892e6.',

STARTKEY => '}7\x8E

\xC3\xD1\xE3\x0F\x0D\xE9\xFE'fIK\xB7\xD6'

, ENDKEY => '', ENCODED =>

a3c3a9162eeeb8abc0358

e9e31b892e6,}

users,,1335466889926.9fd558e column=info:regioninfo,

d44a63f016c0a99c4cf141eb5. timestamp=1335466889968, value={NAME =>

'users,,1335466889926.9fd558ed44a63f016c

0a99c4cf141eb5.', STARTKEY => '', ENDKEY

=> '}7\x8E\xC3

\xD1\xE3\x0F\x0D\xE9\xFE'fIK\xB7\xD6',

ENCODED =>

9fd558ed44a63f016c0a99c4cf141eb5,}

users,,1335466889926.9fd558e column=info:server,

d44a63f016c0a99c4cf141eb5. timestamp=1335466889968,

value=localhost:58269

users,,1335466889926.9fd558e column=info:serverstartcode,

d44a63f016c0a99c4cf141eb5. timestamp=1335466889968,

value=1335465653436

users,}7\x8E\xC3\xD1\xE3\x0F column=info:regioninfo,

\x0D\xE9\xFE'fIK\xB7\xD6,133 timestamp=1335466889966, value={NAME =>

5466889926.a3c3a9162eeeb8abc 'users,}7\x8E\xC3\xD1\xE3\x0F\x0D\xE9\xF

0358e9e31b892e6. E'fIK\xB7\xD6,1335466889926.a3c3a9162eee

b8abc0358e9e31b892e6.', STARTKEY =>

'}7\x8E\xC3\xD1\xE3\x0F

\x0D\xE9\xFE'fIK\xB7\xD6', ENDKEY => '',

ENCODED =>

a3c3a9162eeeb8abc0358e9e31b892e6,}

users,}7\x8E\xC3\xD1\xE3\x0F column=info:server,

\x0D\xE9\xFE'fIK\xB7\xD6,133 timestamp=1335466889966,

5466889926.a3c3a9162eeeb8abc value=localhost:58269

0358e9e31b892e6.

users,}7\x8E\xC3\xD1\xE3\x0F column=info:serverstartcode,

\x0D\xE9\xFE'fIK\xB7\xD6,133 timestamp=1335466889966,

5466889926.a3c3a9162eeeb8abc value=1335465653436

0358e9e31b892e6.

3 row(s) in 0.5660 seconds

After you split users, new entries are made in the .META. table for the daughter regions. These daughter regions replace the parent region that was split. The entry for the parent region records the split (note OFFLINE => true, SPLIT => true, and the splitA and splitB columns), and the parent region no longer serves requests. For a brief time, the parent’s entry remains in .META. Once the hosting RegionServer has completed the split and cleaned up the parent region, the entry is deleted from the .META. table. After the split is complete, .META. looks like this:
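The bookkeeping behind a split can be modeled in a few lines. This is a toy sketch, not HBase’s implementation: the dictionary stands in for .META., the region names and the split key b"m" are hypothetical, and the flags mirror the OFFLINE and SPLIT attributes in the listing above.

```python
def split_region(meta, parent, split_key):
    """Mark the parent as split and add two daughter rows covering its key range."""
    start, end = meta[parent]["start"], meta[parent]["end"]
    daughter_a = f"{parent}-A"   # hypothetical names; real region names differ
    daughter_b = f"{parent}-B"
    meta[parent].update(offline=True, split=True,
                        splitA=daughter_a, splitB=daughter_b)
    meta[daughter_a] = {"start": start, "end": split_key, "offline": False, "split": False}
    meta[daughter_b] = {"start": split_key, "end": end, "offline": False, "split": False}
    return daughter_a, daughter_b


def clean_up_parent(meta, parent):
    """Drop the parent's row once the split has completed."""
    del meta[parent]


def serving_regions(meta):
    """Names of the regions that still serve requests, in sorted order."""
    return sorted(name for name, row in meta.items() if not row["offline"])


meta = {"users-parent": {"start": b"", "end": b"", "offline": False, "split": False}}
split_region(meta, "users-parent", b"m")
print(len(meta), serving_regions(meta))   # three rows, two of them serving
clean_up_parent(meta, "users-parent")
print(len(meta), serving_regions(meta))   # two rows, both serving
```

The row counts track the two scans in this section: three rows while the parent’s entry lingers, two once it’s removed.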

hbase(main):030:0> scan '.META.'

ROW COLUMN+CELL

users,,1335466889926.9fd558e column=info:regioninfo,

d44a63f016c0a99c4cf141eb5. timestamp=1335466889968, value={NAME =>

'users,,1335466889926.9fd558ed44a63f016c

0a99c4cf141eb5.', STARTKEY => '', ENDKEY

=> '}7\x8E\xC3

\xD1\xE3\x0F\x0D\xE9\xFE'fIK\xB7\xD6',

ENCODED =>

9fd558ed44a63f016c0a99c4cf141eb5,}

users,,1335466889926.9fd558e column=info:server,

d44a63f016c0a99c4cf141eb5. timestamp=1335466889968,

value=localhost:58269

users,,1335466889926.9fd558e column=info:serverstartcode,

d44a63f016c0a99c4cf141eb5. timestamp=1335466889968,

value=1335465653436

users,}7\x8E\xC3\xD1\xE3\x0F column=info:regioninfo,

\x0D\xE9\xFE'fIK\xB7\xD6,133 timestamp=1335466889966, value={NAME =>

5466889926.a3c3a9162eeeb8abc 'users,}7\x8E\xC3\xD1\xE3\x0F\x0D\xE9\xF

0358e9e31b892e6. E'fIK\xB7\xD6,1335466889926.a3c3a9162eee

b8abc0358e9e31b892e6.', STARTKEY =>

'}7\x8E\xC3\xD1\xE3

\x0F\x0D\xE9\xFE'fIK\xB7\xD6', ENDKEY =>

'', ENCODED =>

a3c3a9162eeeb8abc0358e9e31b892e6,}

users,}7\x8E\xC3\xD1\xE3\x0F column=info:server,

\x0D\xE9\xFE'fIK\xB7\xD6,133 timestamp=1335466889966,

5466889926.a3c3a9162eeeb8abc value=localhost:58269

0358e9e31b892e6.

users,}7\x8E\xC3\xD1\xE3\x0F column=info:serverstartcode,

\x0D\xE9\xFE'fIK\xB7\xD6,133 timestamp=1335466889966,

5466889926.a3c3a9162eeeb8abc value=1335465653436

0358e9e31b892e6.

2 row(s) in 0.4890 seconds

You may wonder why the start and end keys contain such long, funny values that don’t look anything like the entries you put in. This is because the values you see are byte-encoded versions of the strings you entered.
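The shell prints keys as raw bytes, showing printable characters as themselves and everything else as a \xNN hex escape. The sketch below imitates that rendering. It is a simplified approximation (printable ASCII other than the backslash is shown as is), not the shell’s exact rule, and the byte values are reconstructed from the split key in the scan output on the assumption that runs such as x8E stand for \x8E.

```python
def to_string_binary(data: bytes) -> str:
    """Render bytes the way the listings do: printable ASCII as is, the rest as \\xNN."""
    out = []
    for b in data:
        if 32 <= b < 127 and b != 0x5C:   # printable ASCII, except the backslash itself
            out.append(chr(b))
        else:
            out.append(f"\\x{b:02X}")
    return "".join(out)


# The split key from the scan output, written out byte by byte (an assumption, see above).
split_key = bytes([0x7D, 0x37, 0x8E, 0xC3, 0xD1, 0xE3, 0x0F, 0x0D,
                   0xE9, 0xFE, 0x27, 0x66, 0x49, 0x4B, 0xB7, 0xD6])

print(to_string_binary(split_key))   # }7\x8E\xC3\xD1\xE3\x0F\x0D\xE9\xFE'fIK\xB7\xD6
print(to_string_binary(b"users"))    # users
```

A key made only of printable characters comes out unchanged, which is why the table name reads normally while the split key does not.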

Let’s tie this back to how the client application interacts with an HBase instance. The client application does a get(), put(), or scan(). To perform any of these operations, the HBase client library that you’re using has to find the correct region(s) that will serve these requests. It starts with ZooKeeper, from which it finds the location of -ROOT-. It contacts the server hosting -ROOT- and reads the records that point to the .META. region covering the table it needs. It then reads that .META. region to find the region of the user table that holds the requested rows, along with the RegionServer hosting it. Once the client library has the server location and the name of the region, it contacts that RegionServer with the region information and asks it to serve the requests.
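The lookup chain can be sketched as three hops over sorted start keys. This is a toy model under stated assumptions: the host names, the Catalog class, and the single split at b"m" are all hypothetical, and real catalog rowkeys also carry the table name and region ID, which this sketch leaves out.

```python
import bisect


class Catalog:
    """A sorted list of (start_key, location) pairs standing in for a catalog table."""

    def __init__(self, entries):
        self.entries = sorted(entries)
        self.starts = [start for start, _ in self.entries]

    def locate(self, row: bytes) -> str:
        # The hosting region is the one with the greatest start key <= row.
        idx = bisect.bisect_right(self.starts, row) - 1
        return self.entries[idx][1]


ZOOKEEPER = {"root_location": "rs6:60020"}        # where -ROOT- is served
ROOT = Catalog([(b"", "rs4:60020")])              # one .META. region, served by rs4
META = {"rs4:60020": Catalog([(b"", "rs2:60020"),     # users region with keys below b"m"
                              (b"m", "rs5:60020")])}  # users region from b"m" onward


def find_region_server(row: bytes) -> list:
    """Return the servers contacted in order: -ROOT- host, .META. host, data host."""
    root_server = ZOOKEEPER["root_location"]        # 1. ask ZooKeeper for -ROOT-
    meta_server = ROOT.locate(row)                  # 2. read -ROOT- to find .META.
    region_server = META[meta_server].locate(row)   # 3. read .META. to find the region
    return [root_server, meta_server, region_server]


print(find_region_server(b"alice"))
print(find_region_server(b"zed"))
```

Keeping the start keys sorted means each hop is a binary search, which mirrors how rows in the catalog tables are ordered by rowkey.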

These are the various steps at play that allow HBase to distribute data across a cluster of machines and find the relevant portion of the system that serves the requests for any given client.