3

Parametric Univariate Variables Control Charts

Chapter Overview

In this chapter, we study parametric variables control charts. These are charts that are based on an assumption about the underlying process distribution (such as normality) or charts that are based on some approximation (such as normality, via the central limit theorem) about the distribution of the charting statistic. There are three main classes of parametric variables control charts: the Shewhart chart, the cumulative sum (CUSUM) chart and the exponentially weighted moving average (EWMA) chart, each of which is generally used for a specific type of shift detection purpose in mind. We describe some of the charts in detail and the relative advantages and disadvantages of these charts, that are well documented in the literature and are touched on later. This background is useful since analogs of many of these parametric variables charts are considered in Chapter 4 for the nonparametric setting.

Variables charts are based on charting statistics that have a continuous distribution and we focus on these charts here. In addition to the variables charts, there are also attributes charts based on charting statistics with a discrete distribution. These include, for example, the fraction nonconforming chart (called the ![]() chart) and the control chart for nonconformities (called the

chart) and the control chart for nonconformities (called the ![]() chart), as described in Montgomery (2009, pp. 290, 308). These control charts, although quite useful in practice, are not considered in this book. This chapter also considers the various ways to sensitize a control chart (such as runs-rules or sensitivity rules, warning limits, interpreting patterns on a control chart, etc.) and ends off with a discussion on the robustness of parametric control charts.

chart), as described in Montgomery (2009, pp. 290, 308). These control charts, although quite useful in practice, are not considered in this book. This chapter also considers the various ways to sensitize a control chart (such as runs-rules or sensitivity rules, warning limits, interpreting patterns on a control chart, etc.) and ends off with a discussion on the robustness of parametric control charts.

3.1 Introduction

Let ![]() denote a random sample (measurements on some quantitative continuous quality characteristic or variable, such as temperature, diameter, etc.) of size

denote a random sample (measurements on some quantitative continuous quality characteristic or variable, such as temperature, diameter, etc.) of size ![]() , taken from a process at time

, taken from a process at time ![]() 1, 2, 3,… For parametric variables control charts, the (form of the) underlying process distribution is assumed to be known and the most common assumption in practice is that the distribution is normal, although other distributions, such as the exponential, Weibull, etc., are possible and have been studied. However, since most control charts in the literature are for the normal distribution, we only consider them here and refer to these charts as parametric charts or normal theory charts from this point on. Suppose that a process follows a normal distribution with mean

1, 2, 3,… For parametric variables control charts, the (form of the) underlying process distribution is assumed to be known and the most common assumption in practice is that the distribution is normal, although other distributions, such as the exponential, Weibull, etc., are possible and have been studied. However, since most control charts in the literature are for the normal distribution, we only consider them here and refer to these charts as parametric charts or normal theory charts from this point on. Suppose that a process follows a normal distribution with mean ![]() and variance

and variance ![]() , which may be known (specified) or unknown. If the in‐control (IC) process parameters are known or specified, this situation is referred to as Case K and is typically indicated by adding a subscript zero to the parameter symbols (e.g.,

, which may be known (specified) or unknown. If the in‐control (IC) process parameters are known or specified, this situation is referred to as Case K and is typically indicated by adding a subscript zero to the parameter symbols (e.g., ![]() and

and ![]() ) to the mean and the variance, respectively. If the IC process parameters are unknown, they are to be estimated before monitoring can begin. This is typically done in a retrospective analysis of the available data, called a Phase I study, where a process is stabilized or brought under control and thus a set of reference data is generated before prospective process monitoring can start in Phase II (see Section 2.1.12 for more details on Phase I and Phase II). This situation where process parameters are unknown, is referred to as Case U, and the process mean and the process variance, estimated from the Phase I reference data, are typically denoted by

) to the mean and the variance, respectively. If the IC process parameters are unknown, they are to be estimated before monitoring can begin. This is typically done in a retrospective analysis of the available data, called a Phase I study, where a process is stabilized or brought under control and thus a set of reference data is generated before prospective process monitoring can start in Phase II (see Section 2.1.12 for more details on Phase I and Phase II). This situation where process parameters are unknown, is referred to as Case U, and the process mean and the process variance, estimated from the Phase I reference data, are typically denoted by ![]() and

and ![]() , respectively.

, respectively.

First, we discuss the three basic parametric variables control charts for the known parameter case. The unknown parameter case is discussed later, in Section 3.8.

3.2 Parametric Variables Control Charts in Case K

Recall that there are three main classes of parametric variables control charts: the Shewhart chart, the CUSUM chart, and the EWMA chart, each of which is generally used for a specific type of shift detection purpose in mind. We describe these charts in more detail in each of the three sections that follow.

3.2.1 Shewhart Control Charts

Among the many control charts used in practice, the Shewhart charts are the most popular because of their simplicity, ease of application, and the fact that these versatile charts are quite efficient in detecting moderate to large shifts. These charts were originally proposed by Walter Shewhart in 1926. To describe the Shewhart chart in general, suppose that a process location parameter ![]() , such as the mean, is to be monitored using a charting statistic

, such as the mean, is to be monitored using a charting statistic ![]() , which is a good point estimator of

, which is a good point estimator of ![]() , statistically speaking. Further suppose that the expected value and the standard deviation of

, statistically speaking. Further suppose that the expected value and the standard deviation of ![]() are

are ![]() and

and ![]() , respectively. Statistical considerations often lead us to take

, respectively. Statistical considerations often lead us to take ![]() to be an unbiased estimator of

to be an unbiased estimator of ![]() so that

so that ![]() . Then, a general formula for the center line (CL) and the control limits of a Shewhart control chart are

. Then, a general formula for the center line (CL) and the control limits of a Shewhart control chart are

where ![]() > 0 is the charting constant, which is a chart design parameter that determines the “distance” of the control limits from the CL, expressed in terms of the standard deviation. Hence, these control limits are often called k‐sigma limits. A Shewhart

> 0 is the charting constant, which is a chart design parameter that determines the “distance” of the control limits from the CL, expressed in terms of the standard deviation. Hence, these control limits are often called k‐sigma limits. A Shewhart ![]() ‐sigma control chart is the graphic that displays these three limits as straight lines along with the realized (calculated) values of the charting statistic

‐sigma control chart is the graphic that displays these three limits as straight lines along with the realized (calculated) values of the charting statistic ![]() for a number of samples or over time. Note that, in a Shewhart chart, the upper and the lower control limits are symmetrically placed around the CL. Such control limits are more meaningful when the distribution of

for a number of samples or over time. Note that, in a Shewhart chart, the upper and the lower control limits are symmetrically placed around the CL. Such control limits are more meaningful when the distribution of ![]() is symmetric or approximately so, which goes well with the assumption in a Shewhart chart that either the process distribution is normal or that

is symmetric or approximately so, which goes well with the assumption in a Shewhart chart that either the process distribution is normal or that ![]() has a distribution that is approximately normal with mean

has a distribution that is approximately normal with mean ![]() . Suppose, for example, that

. Suppose, for example, that ![]() is the process mean

is the process mean ![]() to be monitored and the IC value of

to be monitored and the IC value of ![]() is

is ![]() . In this case,

. In this case, ![]() is taken to be the mean

is taken to be the mean ![]() of the sample and then the

of the sample and then the ![]() ‐sigma limits are given by

‐sigma limits are given by ![]() , where

, where ![]() is the known process standard deviation, since

is the known process standard deviation, since ![]() . The rationale behind the

. The rationale behind the ![]() ‐sigma limits is that

‐sigma limits is that ![]() is exactly (or approximately) normally distributed when the process distribution is normal (or by virtue of the central limit theorem). When the charting statistic plots on or outside of either the upper or the lower control limits, we say that a signal has been observed or that a signaling event has taken place and the process is declared to be out‐of‐control (OOC).

is exactly (or approximately) normally distributed when the process distribution is normal (or by virtue of the central limit theorem). When the charting statistic plots on or outside of either the upper or the lower control limits, we say that a signal has been observed or that a signaling event has taken place and the process is declared to be out‐of‐control (OOC).

According to the objective of detecting increases and/or decreases in the process parameter ![]() , control charts are often designed in a one‐sided or two‐sided form. A one‐sided upper (or lower) chart is used to detect upward (or downward) changes, while a two‐sided control chart is used to detect some change in the process, which could be either upward or downward. Thus, the use of a one‐sided control implies that one is interested in the shift or change in a known direction, that is, an increase or a decrease. A lower one‐sided Shewhart chart signals that a downward shift occurred if

, control charts are often designed in a one‐sided or two‐sided form. A one‐sided upper (or lower) chart is used to detect upward (or downward) changes, while a two‐sided control chart is used to detect some change in the process, which could be either upward or downward. Thus, the use of a one‐sided control implies that one is interested in the shift or change in a known direction, that is, an increase or a decrease. A lower one‐sided Shewhart chart signals that a downward shift occurred if ![]() (the chart only has an LCL), whereas an upper one‐sided Shewhart chart signals that an upward shift occurred if

(the chart only has an LCL), whereas an upper one‐sided Shewhart chart signals that an upward shift occurred if ![]() (the chart only has a UCL). From this point forward, we consider two‐sided charts since they are more general (as the practitioner often may not know the direction of the shift) and since these have received the most attention in the literature. Moreover, two‐sided charts can easily be adapted to yield one‐sided charts.

(the chart only has a UCL). From this point forward, we consider two‐sided charts since they are more general (as the practitioner often may not know the direction of the shift) and since these have received the most attention in the literature. Moreover, two‐sided charts can easily be adapted to yield one‐sided charts.

Various refinements and enhancements of the Shewhart chart have been considered in the literature; we discuss some of these in the later sections. An illustrative example of the Shewhart control chart is provided later as well.

Figure 3.1 The 3‐sigma principle.

3.2.2 CUSUM Control Charts

While the Shewhart charts are widely known and often used in practice because of their simplicity and effectiveness in detecting moderate to large shifts, other charts, such as CUSUM charts, may be more useful in certain situations for detecting smaller, persistent kind of shifts. These charts, sometimes labeled time‐weighted charts, are more naturally appropriate in the process control environment in view of the sequential nature of data collection. The CUSUM control charts were first introduced by Page (1954) (although not in its present form) and have been studied by many authors over the last 60 years. See, for example, Barnard (1959), Ewan and Kemp (1960), Johnson (1961), Goldsmith and Whitfield (1961), Page (1961), Ewan (1963), Hawkins (1992, 1993), and Hawkins and Olwell (1998). These are some examples, and since the introduction of CUSUM charts in 1954 by Page, there has been an incredible amount of work on CUSUM charts (see the overview in the Encyclopaedia of Statistics in Quality and Reliability by Ruggeri, Kenett, and Faltin (2007a) and the citations therein, for example). The CUSUM charts are typically based on the CUSUMs of a statistic or of differences of a statistic from its IC expected value, and are calculated progressively as the data accumulate over time. For example, the CUSUM chart for the mean is typically based on the CUSUM of the deviations of the individual observations (or the subgroup means) from the specified value of the IC target mean.

To describe the CUSUM chart in more detail, assume as before that ![]() denote the ith sample or a sample (subgroup) of size

denote the ith sample or a sample (subgroup) of size ![]() at sampling instance (time)

at sampling instance (time) ![]() ,

, ![]() 1,2,…, from a process with a known or specified IC process mean

1,2,…, from a process with a known or specified IC process mean ![]() and a known or specified IC process standard deviation

and a known or specified IC process standard deviation ![]() . Let

. Let

be a statistic constructed using the data in the ith sample, ![]() 1,2,… where ψ is some function. The statistic in Equation 3.2 is referred to as the basic (pivot) statistic, which would be an estimator of the process mean; for example,

1,2,… where ψ is some function. The statistic in Equation 3.2 is referred to as the basic (pivot) statistic, which would be an estimator of the process mean; for example, ![]() could be the sample mean. As we noted earlier, one can form either a CUSUM of the sample means (for subgrouped data) or of the individual observations (

could be the sample mean. As we noted earlier, one can form either a CUSUM of the sample means (for subgrouped data) or of the individual observations (![]() ) or of the differences of the sample means or the individual observations from

) or of the differences of the sample means or the individual observations from ![]() . The CUSUM chart for individual observations is described below. Here

. The CUSUM chart for individual observations is described below. Here ![]() and

and ![]() . The adaptation to sample means is straightforward and will be commented on later. The CUSUM chart is formed by plotting

. The adaptation to sample means is straightforward and will be commented on later. The CUSUM chart is formed by plotting ![]() where

where

which can be written as

There are two ways to represent CUSUMs, namely, (i) the tabular (or algorithmic) CUSUM and (ii) the V‐mask form of the CUSUM. We only consider the tabular CUSUM, since the V‐mask form of the CUSUM is no longer used in practice. Montgomery (2009, p. 403) states, “Of the two representations, the tabular CUSUM is preferable.” Since we only consider the tabular CUSUM, the word “tabular” will be dropped from this point forward and we will only refer to the CUSUM chart.

The upper one‐sided CUSUM chart is used to detect increases in the mean and it works by accumulating differences between ![]() and

and ![]() . Hence, for the upper one‐sided CUSUM chart, we use the charting statistic

. Hence, for the upper one‐sided CUSUM chart, we use the charting statistic

to detect positive shifts (increases) in the mean from ![]() , with starting value

, with starting value ![]() 0 and where

0 and where ![]() is called a reference or a slack value. It is common to express

is called a reference or a slack value. It is common to express ![]() in terms of the process standard deviation, so that

in terms of the process standard deviation, so that ![]() . The control chart issues a signal for the first i such that

. The control chart issues a signal for the first i such that ![]() , where the constant

, where the constant ![]() is called the decision interval.

is called the decision interval.

Similarly, the lower one‐sided CUSUM is of interest for detecting decreases in the mean and it works by accumulating differences from ![]() that are below target

that are below target

or

and is used to detect negative shifts or deviations from ![]() with starting value

with starting value ![]() 0. Here, a signaling event occurs for the first i such that

0. Here, a signaling event occurs for the first i such that ![]() (if Equation 3.4 is used) or

(if Equation 3.4 is used) or ![]() (if Equation 3.5 is used). For a more visually appealing chart, Equation 3.4

will be used in this book to construct the lower one‐sided CUSUM from this point forward. The two‐sided CUSUM chart is of interest when both increases and decreases in the mean are of interest. This chart signals for the first i at which either one of the two inequalities,

(if Equation 3.5 is used). For a more visually appealing chart, Equation 3.4

will be used in this book to construct the lower one‐sided CUSUM from this point forward. The two‐sided CUSUM chart is of interest when both increases and decreases in the mean are of interest. This chart signals for the first i at which either one of the two inequalities, ![]() or

or ![]() is satisfied. Note that for the CUSUM chart, there are some counters,

is satisfied. Note that for the CUSUM chart, there are some counters, ![]() and

and ![]() , which indicate the number of consecutive periods that the CUSUM's

, which indicate the number of consecutive periods that the CUSUM's ![]() and

and ![]() have been non‐zero before signaling, which helps in identifying at what point in time the shift may have taken place. If a signal is given by the upper CUSUM (i.e.,

have been non‐zero before signaling, which helps in identifying at what point in time the shift may have taken place. If a signal is given by the upper CUSUM (i.e., ![]() ) at sample number 29 and, say, the corresponding counter is equal to 7 (i.e.,

) at sample number 29 and, say, the corresponding counter is equal to 7 (i.e., ![]() ), then we would conclude that the process was last IC at sample number 29 – 7 = 22, so the shift likely occurred between sample numbers 22 and 23. The CUSUM charts have the added advantage that one can obtain an estimate of the new process mean,

), then we would conclude that the process was last IC at sample number 29 – 7 = 22, so the shift likely occurred between sample numbers 22 and 23. The CUSUM charts have the added advantage that one can obtain an estimate of the new process mean, ![]() , following a shift. The quantity

, following a shift. The quantity ![]() is an estimate of the amount by which the current mean, after a shift, is above

is an estimate of the amount by which the current mean, after a shift, is above ![]() when a signal occurs with

when a signal occurs with ![]() . The quantity

. The quantity ![]() is an estimate of the amount by which the current mean, after a shift, is below

is an estimate of the amount by which the current mean, after a shift, is below ![]() when a signal occurs with

when a signal occurs with ![]() . Thus, if the upper CUSUM signaled, we would estimate the new process average as

. Thus, if the upper CUSUM signaled, we would estimate the new process average as

whereas, if the lower CUSUM signaled, we would estimate the new process average as

The constants ![]() and

and ![]() are needed in order to implement the CUSUM chart; this is discussed next.

are needed in order to implement the CUSUM chart; this is discussed next.

The constants ![]() and

and ![]() are referred to as the design parameters of the CUSUM chart and are typically chosen so that the chart has a specified nominal ARLIC (such as 370) and is capable of detecting a shift in the mean, especially a small shift, as soon as possible. The first step in this direction is to choose

are referred to as the design parameters of the CUSUM chart and are typically chosen so that the chart has a specified nominal ARLIC (such as 370) and is capable of detecting a shift in the mean, especially a small shift, as soon as possible. The first step in this direction is to choose ![]() , which is called the reference value. Since

, which is called the reference value. Since ![]() , and

, and ![]() is assumed to be known, by specifying

is assumed to be known, by specifying ![]() we are, in fact, specifying

we are, in fact, specifying ![]() . Accordingly, from this point forward, we only consider the choice of

. Accordingly, from this point forward, we only consider the choice of ![]() . Note that the latter comment also holds for the design parameter

. Note that the latter comment also holds for the design parameter ![]() because

because ![]() is also typically defined as

is also typically defined as ![]() with

with ![]() = 4 or 5 providing good ARL properties (see Montgomery, 2009, p. 408); so, by specifying

= 4 or 5 providing good ARL properties (see Montgomery, 2009, p. 408); so, by specifying ![]() we are, in fact, specifying

we are, in fact, specifying ![]() . For the design parameter

. For the design parameter ![]() , let us consider the parametric CUSUM chart for monitoring the known mean of a normal distribution (

, let us consider the parametric CUSUM chart for monitoring the known mean of a normal distribution (![]() 0, without loss of generality) with standard deviation

0, without loss of generality) with standard deviation ![]() (again, without loss of generality), on the basis of individual data (

(again, without loss of generality), on the basis of individual data (![]() = 1). In this case, the IC distribution of

= 1). In this case, the IC distribution of ![]() follows a normal distribution with mean

follows a normal distribution with mean ![]() 0 and standard deviation

0 and standard deviation ![]() 1. To examine the impact of the choice of

1. To examine the impact of the choice of ![]() , we examine a graph of some ARLOOC values calculated for the normal distribution, calculated by simulation, in Figure 3.2, setting the nominal ARLIC = 500, for

, we examine a graph of some ARLOOC values calculated for the normal distribution, calculated by simulation, in Figure 3.2, setting the nominal ARLIC = 500, for ![]() = 0.1, 0.25, 0.5, and 1.0. Note that

= 0.1, 0.25, 0.5, and 1.0. Note that ![]() represents the increased values of

represents the increased values of ![]() to be detected “quickly” from

to be detected “quickly” from ![]() ; hence,

; hence, ![]() represents the true shift in the mean, where

represents the true shift in the mean, where ![]() with

with ![]() = 0.1, 0.25, 0.5, and 1.0, respectively.

= 0.1, 0.25, 0.5, and 1.0, respectively.

Figure 3.2 ARLOOC values of the traditional CUSUM chart with the nominal ARLIC = 500 for different values of  and

and  = 0.1, 0.25, 0.5, and 1.0.

= 0.1, 0.25, 0.5, and 1.0.

From Figure 3.2

, several interesting observations can be made. On the one hand, when the shift is small (see Panels (a) and (b)) and a larger value of ![]() is chosen, the ARLOOC values become unacceptably high. On the other hand, if the shift is large (see Panels (c) and (d)) and a smaller value of

is chosen, the ARLOOC values become unacceptably high. On the other hand, if the shift is large (see Panels (c) and (d)) and a smaller value of ![]() is chosen, the ARLOOC values are also high, but not as high as in the latter case. This suggests that when there is little or no a priori information regarding the size of the shift, a small value of

is chosen, the ARLOOC values are also high, but not as high as in the latter case. This suggests that when there is little or no a priori information regarding the size of the shift, a small value of ![]() is a safer choice (to protect against any unnecessary delay in detection).

is a safer choice (to protect against any unnecessary delay in detection).

The choice of ![]() for the parametric CUSUM chart for the normal mean has been discussed by many authors; see, for example, Lucas (1985), Hawkins and Olwell (1998), Kim et al. (2007), and Montgomery (2009). Lucas (1985) states, “The CUSUM parameter

for the parametric CUSUM chart for the normal mean has been discussed by many authors; see, for example, Lucas (1985), Hawkins and Olwell (1998), Kim et al. (2007), and Montgomery (2009). Lucas (1985) states, “The CUSUM parameter ![]() is determined by the acceptable mean level (

is determined by the acceptable mean level (![]() ) and by the unacceptable mean (

) and by the unacceptable mean (![]() ) level which the CUSUM scheme is to detect quickly. For normally distributed variables the

) level which the CUSUM scheme is to detect quickly. For normally distributed variables the ![]() value is chosen half way between the acceptable mean level and the unacceptable mean level.” In the more recent literature, see, for example, Montgomery (2009), it is agreed that, in the normal theory setting,

value is chosen half way between the acceptable mean level and the unacceptable mean level.” In the more recent literature, see, for example, Montgomery (2009), it is agreed that, in the normal theory setting, ![]() is typically chosen relative to the size of the shift that we want to detect, that is,

is typically chosen relative to the size of the shift that we want to detect, that is, ![]() , where

, where ![]() is the size of the shift in the mean expressed in standard deviation units.

is the size of the shift in the mean expressed in standard deviation units.

After choosing a value of ![]() , the next step is to find the decision interval

, the next step is to find the decision interval ![]() in conjunction with the chosen

in conjunction with the chosen ![]() so that a specified nominal ARLIC is attained. Note, however, that for a discrete random variable, the chances are that

so that a specified nominal ARLIC is attained. Note, however, that for a discrete random variable, the chances are that ![]() cannot always be found such that the specified nominal ARLIC is attained exactly and hence, using a conservative approach,

cannot always be found such that the specified nominal ARLIC is attained exactly and hence, using a conservative approach, ![]() is found so that the attained ARLIC is less than or equal to the specified nominal ARLIC. The decision interval,

is found so that the attained ARLIC is less than or equal to the specified nominal ARLIC. The decision interval, ![]() , can be found using a grid search algorithm using, say, 100 000 Monte Carlo simulations using some statistical software such as SAS®1 or R®.2

, can be found using a grid search algorithm using, say, 100 000 Monte Carlo simulations using some statistical software such as SAS®1 or R®.2

At this point, it should be noted that the detection capability of the CUSUM chart depends on the proper design (tuning) of the chart. The proper design of a CUSUM chart involves obtaining the optimal combination of the CUSUM chart parameters (![]() and

and ![]() ) by minimizing the OOC average run‐length (denoted by

) by minimizing the OOC average run‐length (denoted by ![]() ) for a specified value of the shift size

) for a specified value of the shift size ![]() and a nominal IC average run‐length (denoted by

and a nominal IC average run‐length (denoted by ![]() ). In other words, one may obtain many

). In other words, one may obtain many ![]() and

and ![]() combinations that attain a specified nominal

combinations that attain a specified nominal ![]() , but the optimal pair is that for which the

, but the optimal pair is that for which the ![]() is the lowest.

is the lowest.

Note that this general discussion regarding the CUSUM chart is for Case K, that is, when the process parameters are known. In Case U, the process parameters are unknown and need to be estimated. Naturally, this will impact the choice of k and h. The reader is referred to Jones, Champ, and Rigdon (2004), who evaluated the performance of the CUSUM chart with estimated parameters. The authors also provide a method for approximating the run‐length distribution and its moments.

In this section, we have discussed the CUSUM chart for individual measurements. However, if rational subgroups of size n > 1 are taken, then simply replace ![]() with

with ![]() and

and ![]() with

with ![]() in the equations above.

in the equations above.

The reader is referred to Hawkins and Olwell (1998) for a detailed overview on parametric CUSUM charts. They also provide software and some very useful tables with ARLIC values for various values of ![]() and

and ![]() ; see their Tables 3.1 and 3.2 on pages 48 and 49, respectively. Hawkins (1993) further provides a table of (

; see their Tables 3.1 and 3.2 on pages 48 and 49, respectively. Hawkins (1993) further provides a table of (![]() ) values for a nominal ARLIC value of 370. Using these tables, we provide the (

) values for a nominal ARLIC value of 370. Using these tables, we provide the (![]() ) values for a two‐sided CUSUM chart in Table 3.1

for a nominal ARLIC value of 370 and 500, respectively. For example, for a shift of about

) values for a two‐sided CUSUM chart in Table 3.1

for a nominal ARLIC value of 370 and 500, respectively. For example, for a shift of about ![]() in the process mean, taking

in the process mean, taking ![]() = 0.5 and

= 0.5 and ![]() = 4.77 gives an ARLIC = 370.

= 4.77 gives an ARLIC = 370.

Table 3.1 CUSUM chart parameters: (h, k) values for a nominal ARLIC value of 370 and 500, respectively.

| 370 | 0.25 | 0.50 | 0.75 | 1.00 | 1.25 | 1.50 | |

| 8.01 | 4.77 | 3.34 | 2.52 | 1.99 | 1.61 | ||

| 500 | 0.25 | 0.50 | 0.75 | 1.00 | 1.25 | 1.50 | |

| 7.27 | 4.39 | 3.08 | 2.32 | 1.83 | 1.47 |

Table 3.2 (λ,L) combinations that give ARLIC values close to the desired nominal values of 370 and 500.

| Shift size | Nominal |

Nominal |

| Small | (0.05, 2.492) | (0.05, 2.615) |

| Moderate | (0.10, 2.703) | (0.10, 2.814) |

| Large | (0.20, 2.860) | (0.20, 2.962) |

| Not provided for λ = 0.25 | (0.25, 2.998) | |

| Not provided for λ = 0.40 | (0.40, 3.054) |

It should be noted that as with the Shewhart chart, a lot of work has been done on enhancing the performance of the CUSUM chart. One of these is referred to as the fast initial response (FIR) feature. The FIR enhancement feature was originally proposed in Lucas and Crosier (1982) for the parametric CUSUM chart. The FIR, or head‐start (HS), feature is used when one wants to improve the sensitivity of a CUSUM at process start‐up. This is done by setting the starting values ![]() and

and ![]() equal to some non‐zero value, typically we set

equal to some non‐zero value, typically we set ![]() and

and ![]() . This is called a 50% HS.

. This is called a 50% HS.

With so much work done with the parametric control charts, it is natural to consider analogs using nonparametric charting statistics. This approach has led to nonparametric CUSUM charts (denoted by NPCUSUM), which are discussed in the next chapter.

3.2.3 EWMA Control Charts

Another popular class of control charts is the EWMA charts. The EWMA charts also take advantage of the sequentially (time‐ordered) accumulating nature of the data, arising in a typical SPC environment, and are known to be efficient in detecting smaller shifts, but may be easier to implement than the CUSUM charts (see, for example, Montgomery, 2009, p. 419). The classical EWMA charts for the mean were introduced by Roberts (1959) and they contain the Shewhart charts as a special case. The literature on EWMA charts is enormous and continues to grow at a substantial pace (see, for example, the overview in the Encyclopaedia of Statistics in Quality and Reliability by Ruggeri, Kenett, and Faltin (2007b) and the references therein). Some more recent references include Capizzi and Masarotto (2012) and Ross et al. (2012).

To describe the EWMA chart in more detail, assume, as before, that ![]() denote a random sample (subgroup) of size

denote a random sample (subgroup) of size ![]() on the process output at each sampling instance

on the process output at each sampling instance ![]() 1,2,…, from a process with a known IC process mean

1,2,…, from a process with a known IC process mean ![]() and a known IC process standard deviation

and a known IC process standard deviation ![]() . As in the case of the CUSUM chart, we consider the individual observations case so that

. As in the case of the CUSUM chart, we consider the individual observations case so that ![]() and we monitor

and we monitor ![]() with

with ![]() for

for ![]() 1,2,… The adaptation to sample means is straightforward and is commented on later.

1,2,… The adaptation to sample means is straightforward and is commented on later.

The charting statistic for the EWMA control chart is defined as

where ![]() is a constant called the smoothing parameter. The starting value

is a constant called the smoothing parameter. The starting value ![]() is typically taken to be the IC value of the process mean, that is,

is typically taken to be the IC value of the process mean, that is, ![]() .

.

To illustrate that the charting statistic ![]() is a weighted average of all the previous statistics,

is a weighted average of all the previous statistics, ![]() may be substituted by

may be substituted by ![]() into Equation 3.8 to obtain

into Equation 3.8 to obtain

This method of substitution is called recursive substitution. By continuing the process of recursive substitution for ![]() , j = 2,3,…, we obtain

, j = 2,3,…, we obtain

The expected value and the variance of the charting statistic ![]() are given by

are given by

and

respectively (see Appendix 3.1 for the derivations). Hence, the exact control limits and the CL of the EWMA control chart are given by

where L > 0 is a charting constant. Note that the EWMA control limits are set at ![]() standard deviations away from the IC mean (the IC expected value of the charting statistic). When

standard deviations away from the IC mean (the IC expected value of the charting statistic). When ![]() , the control limits reduce to the Shewhart chart limits

, the control limits reduce to the Shewhart chart limits ![]() and the EWMA chart reduces to the Shewhart chart.

and the EWMA chart reduces to the Shewhart chart.

Calculating and implementing the exact EWMA control limits may be somewhat cumbersome. Alternatively, one can use the so‐called steady‐state control limits (which are typically used when the EWMA chart has been running for several time periods so that the term ![]() in Equation 3.11 approaches unity) are given by

in Equation 3.11 approaches unity) are given by

The two‐sided EWMA chart is constructed by plotting ![]() against the sample number

against the sample number ![]() (or time). If the charting statistic

(or time). If the charting statistic ![]() falls between the two control limits, that is,

falls between the two control limits, that is, ![]() , the process is considered IC. If the charting statistic

, the process is considered IC. If the charting statistic ![]() falls on or outside one of the control limits, that is,

falls on or outside one of the control limits, that is, ![]() or

or ![]() , the process is considered OOC and a search for assignable causes is necessary.

, the process is considered OOC and a search for assignable causes is necessary.

The two‐sided EWMA chart can be easily modified to construct a one‐sided EWMA chart in much the same way as was done for a CUSUM chart. For example, an upper one‐sided EWMA charting statistic is given by ![]() for

for ![]() = 1, 2, 3,… with starting value

= 1, 2, 3,… with starting value ![]() . If the

. If the ![]() plots on or above the

plots on or above the ![]() , the process is declared OOC and a search for assignable causes is necessary.

, the process is declared OOC and a search for assignable causes is necessary.

Also, like the CUSUM chart, the design parameters of the EWMA chart, ![]() and

and ![]() , are chosen so that the chart has a specified nominal ARLIC and is capable of detecting a specified amount of shift, specially a small shift, as soon as possible, in terms of the shortest ARLOOC. Montgomery (2009, p. 422) states that, “The optimal design procedure would consist of specifying the desired IC and OOC average run‐lengths and the magnitude of the process shift that is anticipated, and then to select the combination of λ and L that provide the desired ARL performance.” The constant λ

, are chosen so that the chart has a specified nominal ARLIC and is capable of detecting a specified amount of shift, specially a small shift, as soon as possible, in terms of the shortest ARLOOC. Montgomery (2009, p. 422) states that, “The optimal design procedure would consist of specifying the desired IC and OOC average run‐lengths and the magnitude of the process shift that is anticipated, and then to select the combination of λ and L that provide the desired ARL performance.” The constant λ ![]() is the smoothing parameter and is selected depending on the magnitude of the shift to be detected. The constant L > 0 is the distance of the control limits from the CL (the larger the value of L, the wider the control limits and vice versa) and is selected in combination with the value of the smoothing parameter λ. With regard to the implementation of the EWMA chart, the first step is to choose λ. The recommendation is to choose a small λ, say, equal to 0.05, when small shifts are of interest. If moderate shifts are of greater concern, choose λ = 0.10, whereas λ = 0.20 should be chosen if larger shifts are of interest (see, for example, Montgomery, 2009, p. 423). After λ is chosen, the second step involves choosing L so that a desired ARLIC is attained. This can be done using a grid search algorithm using, say, 100 000 Monte Carlo simulations using some statistical software such as SAS® or R®.

is the smoothing parameter and is selected depending on the magnitude of the shift to be detected. The constant L > 0 is the distance of the control limits from the CL (the larger the value of L, the wider the control limits and vice versa) and is selected in combination with the value of the smoothing parameter λ. With regard to the implementation of the EWMA chart, the first step is to choose λ. The recommendation is to choose a small λ, say, equal to 0.05, when small shifts are of interest. If moderate shifts are of greater concern, choose λ = 0.10, whereas λ = 0.20 should be chosen if larger shifts are of interest (see, for example, Montgomery, 2009, p. 423). After λ is chosen, the second step involves choosing L so that a desired ARLIC is attained. This can be done using a grid search algorithm using, say, 100 000 Monte Carlo simulations using some statistical software such as SAS® or R®.

Table 3.2

provides some (λ, L) combinations that give ![]() values close to the desired values of 370 and 500, respectively, for individual data. These were found using Monte Carlo simulations, as described above.

values close to the desired values of 370 and 500, respectively, for individual data. These were found using Monte Carlo simulations, as described above.

The shift detection capability of the EWMA chart, like that of the CUSUM chart, depends on proper designing (tuning) of the chart. A proper design of an EWMA chart involves obtaining the (optimal) combination of the EWMA chart parameters (λ and L), by minimizing the ![]() for a specified value of the shift size

for a specified value of the shift size ![]() and for a given nominal

and for a given nominal ![]() . In other words, one may obtain many λ and L combinations that yield a specified nominal

. In other words, one may obtain many λ and L combinations that yield a specified nominal ![]() . The optimal pair, out of these pairs, is that one for which the

. The optimal pair, out of these pairs, is that one for which the ![]() is the lowest.

is the lowest.

Note that this general discussion regarding the EWMA chart is for Case K, that is, when the IC values of the process parameters are known. In Case U, the process parameters are unknown and need to be estimated. The process of estimation impacts chart performance and the chart design parameters, and therefore the optimal choice needs to be re‐evaluated in this case. The reader is referred to Jones, Champ, and Rigdon (2001), who evaluated the performance of the EWMA chart with estimated parameters. We take up the issue of parameter estimation later in this chapter.

In this section, we have discussed the EWMA chart for individual measurements. However, if rational subgroups of size n > 1 are taken, then simply replace ![]() with

with ![]() and

and ![]() with

with ![]() in the equations above.

in the equations above.

A lot of research has been done to improve the performance of the EWMA chart by adding enhancements. One of these is the FIR feature. The FIR enhancement feature was originally proposed in Lucas and Crosier (1982) for the parametric CUSUM chart. Then, Lucas and Saccucci (1990) proposed a similar feature for the parametric EWMA chart. An FIR feature is used as an antidote to start‐up problems and those processes that lack corrective action after the previous OOC signal. Lucas and Saccucci (1990) stated that, for the EWMA chart, the FIR feature is most useful when ![]() is small. In Table 3.3, we give the different types of FIR features for the EWMA chart found in the literature so far.

is small. In Table 3.3, we give the different types of FIR features for the EWMA chart found in the literature so far.

Table 3.3 Different types of FIR enhancement for EWMA charts and the corresponding articles.

| Type of FIR enhancement | Description | Article |

| (i) Fixed control limits with head‐start (HS) | 0% |

Lucas and Saccucci (1990) |

| (ii) Time‐varying (TV) control limits with HS | 0% |

Rhoads, Montgomery, and Mastrangelo (1996) |

| (iii) TV control limits with exponential‐type FIR | Steiner (1999) | |

| (iv) TV control limits with modified exponential‐type FIR | Haq, Brown, and Moltchanova (2014) |

With so much work available on parametric control charts, it is clearly natural to consider analogs of these charts using nonparametric charting statistics. This line of thinking has led to several nonparametric EWMA (NPEWMA) charts, which are discussed in the next chapter.

Before going into more detail and giving illustrative examples on the parametric CUSUM and EWMA charts, we first focus on the parametric Shewhart control charts.

3.3 Types of Parametric Variables Charts in Case K: Illustrative Examples

3.3.1 Shewhart Control Charts

3.3.1.1 Shewhart Control Charts for Monitoring Process Mean

For monitoring the mean of a process, we typically use the sample mean (![]() ). An example follows.

). An example follows.

3.3.1.2 Shewhart Control Charts for Monitoring Process Variation

Variation is an important aspect of any analysis and thus it is necessary to monitor the process variation or spread and ensure that it is IC. Moreover, as we see in Equation 3.1 , the Shewhart control limits for the process mean depend on the process standard deviation. Thus, unless the standard deviation remains IC, the control chart for the mean will not be very informative. So, we need to monitor the variance or the standard deviation using a control chart.

There are several possible statistics that can be used to monitor variation. The most popular choices are the sample range (![]() ), the sample standard deviation (

), the sample standard deviation (![]() ), and the sample variance (

), and the sample variance (![]() ).

).

Typically, we use a control chart to monitor the process mean together with a control chart to monitor the process variation. If the variation is IC, we go ahead and examine the control chart for the mean. For example, a Shewhart ![]() chart for the mean is often used together with a Shewhart

chart for the mean is often used together with a Shewhart ![]() chart for the spread. Note that, for illustration, we consider the Shewhart

chart for the spread. Note that, for illustration, we consider the Shewhart ![]() chart even though recent literature recommends using a different spread chart, such as the Shewhart

chart even though recent literature recommends using a different spread chart, such as the Shewhart ![]() chart; see, for instance, Mahmoud et al. (2010). We do this because the Shewhart

chart; see, for instance, Mahmoud et al. (2010). We do this because the Shewhart ![]() chart is simple and continues to be used in the industry.

chart is simple and continues to be used in the industry.

In Case K, the values of ![]() and

and ![]() are known or are specified so that they can be used to construct the respective control charts. We illustrate the Shewhart R and S charts for the known standard deviation

are known or are specified so that they can be used to construct the respective control charts. We illustrate the Shewhart R and S charts for the known standard deviation ![]() .

.

3.3.2 CUSUM Control Charts

An example for the parametric CUSUM control chart for individual data follows.

3.3.3 EWMA Control Charts

An example for the parametric EWMA control chart for individual data follows.

3.4 Shewhart, EWMA, and CUSUM Charts: Which to Use When

As we have noted, the Shewhart charts are the most popular in practice because of their simplicity, ease of application, and the fact that these versatile charts are quite efficient in detecting moderate‐to‐large shifts. While the Shewhart charts are the most widely known and used control charts in practice because of their simplicity and global performance, other classes of charts, such as the CUSUM and EWMA charts, are useful and sometimes more naturally appropriate in the process control environment in view of the sequential nature of data collection. These charts, typically based on the cumulative totals of a charting statistic, obtained as data accumulate, are known to be more efficient for detecting small to moderate magnitudes of shifts in the process. Thus, the CUSUM and the EWMA control charts have been developed as alternatives to the Shewhart chart to detect small, persistent shifts in mean. A natural question to ask is, “Between the CUSUM and the EWMA chart, which control chart is the most effective and should be used when?” Most of the available literature suggests that the performance of the CUSUM and the EWMA charts are very similar. However, each has its advantages and disadvantages.

The EWMA chart has what is known as the inertia problem which may be a practical issue. Before the concept of inertia is explained, we first clarify that both the EWMA and the CUSUM charts have the problem of inertia. The CUSUM chart doesn't have such a significant inertia problem since it uses resets (see Yashchin, 1987, 1993). A more detailed explanation of this follows after the term “inertia” has been defined. The term “inertia” refers to a measure of the resistance of a chart to signaling a particular process shift, for example, if the EWMA charting statistic happens to be closer to the LCL at the time when an upward shift occurs, the time required to reach the UCL will be longer than if the EWMA statistic was closer to the CL. It has been shown that EWMA charts have more of an inertia problem than the CUSUM charts (see, for example, Woodall and Mahmoud, 2005). This is particularly the case when we are interested in both upward and downward shifts (i.e., two‐sided control charts) as the EWMA is implemented by means of a single charting statistic, as opposed to a CUSUM procedure, which uses two separate (upper and lower) charting statistics. Therefore, although the EWMA chart is easier to implement in practice, its “worst‐case” OOC performance is worse than that of a CUSUM chart. Although there have been some recommendations in the literature on how to overcome the problem of inertia, such as using a one‐sided EWMA procedure with resetting (see Spliid, 2010), using EWMA charts in conjunction with Shewhart limits (see Woodall and Mahmoud, 2005), or using an adaptive EWMA (AEWMA) approach (see Capizzi and Masarotto, 2003), these refinements are not typically used in practice. Woodall and Mahmoud (2005) proposed a measure of inertia, referred to as the signal resistance, to be the largest standard deviation from target not leading to an immediate OOC signal. It is highly recommended that the inertia properties of control charts be considered important in control chart selection and that the signal resistance be calculated for that reason. By calculating the signal resistance for EWMA and CUSUM charts, Woodall and Mahmoud (2005) concluded that the EWMA chart has worse inertial properties than the CUSUM chart, in the sense that the signal resistance values can be considerably higher. Another advantage of the CUSUM chart is that the time of the shift can be pinpointed by making use of the quantities ![]() and

and ![]() , which indicate the number of consecutive periods that the CUSUM's

, which indicate the number of consecutive periods that the CUSUM's ![]() and

and ![]() have been nonzero. For example, if a signaling event occurred on sample number 29 with the corresponding quantity being

have been nonzero. For example, if a signaling event occurred on sample number 29 with the corresponding quantity being ![]() at sample number 29, we would conclude that the process was last IC at sample number 29 – 7 = 22, so the shift likely occurred between sample numbers 22 and 23. Another advantage to using the CUSUM chart is that one can obtain an estimate of the new process mean following the shift, for example, continuing with the previous example, if the IC process mean equals 10 (

at sample number 29, we would conclude that the process was last IC at sample number 29 – 7 = 22, so the shift likely occurred between sample numbers 22 and 23. Another advantage to using the CUSUM chart is that one can obtain an estimate of the new process mean following the shift, for example, continuing with the previous example, if the IC process mean equals 10 (![]() ), the reference value is taken to be 0.5 (

), the reference value is taken to be 0.5 (![]() ) and the value of

) and the value of ![]() , then the new process mean is estimated using

, then the new process mean is estimated using ![]() . Thus, the IC process mean of 10 has shifted upward to the value of 11.25 sometime between sample numbers 22 and 23. With the CUSUM chart having all of these advantages, why would one use the EWMA chart? Hawkins and Wu (2014) perhaps stated it best and we quote their findings here, “One thing is clear – if the shift occurs at or near the beginning of the process (the ‘initial‐state’) then the EWMA is a better choice than the CUSUM chart. No matter what size of shift is monitoring process is designed for or that actually happened, the EWMA always responds faster than the CUSUM.”

. Thus, the IC process mean of 10 has shifted upward to the value of 11.25 sometime between sample numbers 22 and 23. With the CUSUM chart having all of these advantages, why would one use the EWMA chart? Hawkins and Wu (2014) perhaps stated it best and we quote their findings here, “One thing is clear – if the shift occurs at or near the beginning of the process (the ‘initial‐state’) then the EWMA is a better choice than the CUSUM chart. No matter what size of shift is monitoring process is designed for or that actually happened, the EWMA always responds faster than the CUSUM.”

3.5 Control Chart Enhancements

3.5.1 Sensitivity Rules

While the Shewhart control chart is effective in detecting large process shifts, it has been shown that it lacks sensitivity in detecting small process shifts. The papers by Koutras, Bersimis, and Maravelakis, (2007) and Park and Seo (2012) present literature reviews on Shewhart charts with supplementary sensitizing rules based on runs and scans to improve the effectiveness of Shewhart control charts for detecting small shifts. Sensitizing rules are signaling rules designed to detect some improbable and/or non‐random pattern of the charting statistics on a control chart. We start by discussing the well‐known Western Electric rules and, following this, we discuss other supplementary sensitizing rules based on runs and scans.

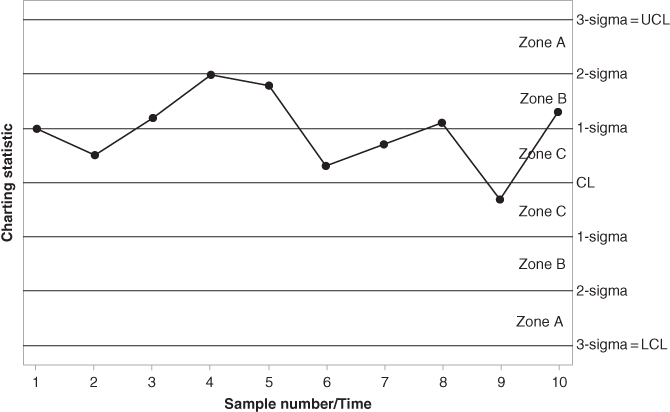

The Western Electric rules make use of warning limits, typically set at a distance of 1‐sigma or 2‐sigma from the CL to determine whether the process is IC or OOC. The Western Electric rules (Western Electric Company, 1956) are listed below. The chart will signal if any one of these conditions is met.

- One or more points on or outside the control limits.

- Two of three consecutive points plot outside the 2‐sigma warning limits but still inside the control limits.

- Four of five consecutive points above the 1‐sigma limits.

- A run of eight consecutive points on one side of the CL.

The Western Electric rules are illustrated in Figures 3.8–3.11. The question may arise about how these rules are set up. For example, why do we consider a run of eight consecutive points on one side of the CL in Western Electric Rule number 4 instead of nine consecutive points on one side of the CL? All of these rules are designed so that they will approximately have the same probability of a false alarm, which is also close to the nominal value that is typically taken to be 0.0027. For Western Electric Rule number 4, for example, if we make the assumption that the CL of a control chart is equal to the IC median of the distribution, then the probability of a charting statistic to plot above and below the CL are equal, thus ![]() (Charting statistic plots above the CL) =

(Charting statistic plots above the CL) = ![]() (Charting statistic plots below the CL) =

(Charting statistic plots below the CL) = ![]() . Thus,

. Thus,

which is close to the typically desired nominal value of 0.0027.

Figure 3.8 Western Electric rule number 1.

Figure 3.9 Western Electric rule number 2.

Figure 3.10 Western Electric rule number 3.

Figure 3.11 Western Electric rule number 4.

Western Electric rule number 1 is illustrated in Figure 3.8 . This rule gives a signal if one or more points plot on or outside the LCL or UCL. The control chart in Figure 3.8 signals at sample number 9. Although this rule is illustrated for a possible upward shift in the process, it also gives a signal if the charting statistics had a perfect mirror‐image copy around the CL, indicating a possible downward shift in the process.

Western Electric rule number 2 is illustrated in Figure 3.9. This rule gives a signal if two of three consecutive points plot outside the 2‐sigma warning limits but still inside the control limits. The control chart in Figure 3.9 signals at sample number 9. Although this rule is illustrated for a possible upward shift in the process, it also gives a signal if the charting statistics had a perfect mirror‐image copy around the CL, indicating a possible downward shift in the process.

Western Electric rule number 3 is illustrated in Figure 3.10. This rule gives a signal if four of five consecutive points plot outside the 1‐sigma warning limits but still inside the control limits. The control chart in Figure 3.10 signals at sample number 10. Although this rule is illustrated for a possible upward shift in the process, it also gives a signal if the charting statistics had a perfect mirror‐image copy around the CL, indicating a possible downward shift in the process.

Western Electric rule number 4 is illustrated in Figure 3.11 . This rule gives a signal if a run of eight consecutive points on one side of the CL occurs. The control chart in Figure 3.11 signals at sample number 8. Although this rule is illustrated for a possible upward shift in the process, it also gives a signal if the charting statistics had a perfect mirror‐image copy around the CL, indicating a possible downward shift in the process.

Some other sensitizing rules have been suggested in the literature and these include:

- Six points in a row steadily increasing or decreasing.

- Fifteen points in a row in Zone C (both above and below the CL); see Figures 3.8 –3.11 for an illustration of the different zones.

- Fourteen points in a row alternatively up and down.

- Eight points in a row on both sides of the CL with none in Zone C; see Figures 3.8 –3.11 for an illustration of the different zones.

- An unusual or non‐random pattern in the data.

- One or more points near a warning or control limit.

In Table 3.8, we list some possible causes of control chart signals.

Table 3.8 Possible causes of control chart signals.

| Signal | Possible Cause |

| One point outside the control limits followed by no anomalous patterns |

Measurement of recording error Isolated/temporary event such as substitute staff |

| Fifteen points in a row in Zone C (both above and below the CL); see Figures 3.8 –3.11 for an illustration of the different zones | Data have been manipulated, i.e., extreme values are not being recorded or are being recorded incorrectly |

| Trends, e.g., six points in a row steadily increasing or decreasing | The gradual drifting of data can indicate wear and tear on measuring equipment or on machinery. It may also result from machine warm‐up and cool‐down or inadequate maintenance |

| Cyclical pattern; see Figure 2.3 |

This may result from environmental changes such as changes in temperature It can also be a result of operator fatigue, or fluctuation in voltage or pressure or some other variable in the machinery Chemical properties of raw material can also play a role |

| Stratification; see Figure 2.4 |

Stratification may result from the incorrect calculation of control limits or from incorrect subgrouping It may also be a result of not recalculating the control limits after process improvement |

| A sudden jump | This could be a result of damaged equipment, new staff, etc. |

3.5.2 Runs‐type Signaling Rules

Runs‐type signaling rules are used to improve the Shewhart chart's sensitivity for detecting smaller process shifts, along with preserving the simplicity of the Shewhart charts. These are also called supplementary rules as they are used to supplement an original chart to make it more sensitive to detecting process changes. The original Shewhart chart uses the charting statistic from the current sample to check if there is a signal or not in order to determine the state of the process; thus, this chart is also known as the 1‐of‐1 chart (based on the 1‐of‐1 rule). While this is reasonable, it might be worthwhile to consider the charting statistics from a few of the previous samples to see if there is a pattern in the signals. For example, if we have signals from two back to back samples (a run of two), a stronger evidence of change would emerge. Charts using such signaling rules are called runs‐rules enhanced charts. Two of the popular signaling rules are the 2‐of‐2 and 2‐of‐3 runs‐type signaling rules and these runs‐rules enhanced charts are labeled the 2‐of‐2 and 2‐of‐3 charts.

Note that the k‐of‐k ![]() runs‐type signaling rule is the general case of the 2‐of‐2 runs‐type signaling rule. The k‐of‐k runs‐type signaling rule signals where k consecutive charting statistics all plot on or outside the control limit(s). A generalization of the k‐of‐k runs‐type signaling rule is the k‐of‐w

runs‐type signaling rule is the general case of the 2‐of‐2 runs‐type signaling rule. The k‐of‐k runs‐type signaling rule signals where k consecutive charting statistics all plot on or outside the control limit(s). A generalization of the k‐of‐k runs‐type signaling rule is the k‐of‐w ![]() runs‐type signaling rule, which signals where k of the last w charting statistics plots on or outside the control limit(s).

runs‐type signaling rule, which signals where k of the last w charting statistics plots on or outside the control limit(s).

While the runs‐rules enhanced charts can make the Shewhart chart more sensitive to a change, there may be some potential costs. The FAR may be increased and the resulting chart might lack the ability to immediately detect a large process shift. The 2‐of‐3 signaling rules, for example, require at least the last two or three charting statistics to signal, which may be potentially costly to the practitioner since the chart's ability to detect a large shift in the process is delayed until at least three samples are collected. Thus, the cost and the benefit need to be carefully weighed before one decides to use a supplementary runs‐rules enhanced chart.

Many parametric control charts with runs‐type signaling rules have been proposed in the literature. The interested reader can take a look at, for example, Roberts (1958), Champ and Woodall (1987), Champ (1992), Klein (2000), Shmueli and Cohen (2003), Acosta‐Mejia (2007), Khoo and Ariffin (2006), Lim and Cho (2009), Cheng and Chen (2011), Santiago and Smith (2013) and others. However, the focus of our discussion is on nonparametric control charts and, accordingly, we focus on nonparametric control charts to monitor location and/or scale with runs‐type signaling rules. Overall, to learn about the advantages and disadvantages of Shewhart‐type control charts supplemented by runs‐type signaling rules, refer to Nelson (1985). Koutras et al. (2007) show that the overall probability of a false alarm when h decision rules are used is

provided that all ![]() decision rules are independent, with rule

decision rules are independent, with rule ![]() having probability

having probability ![]() such that the charting statistic plots on or outside the control limits when the process is IC. Note that Equation 3.16 is not valid in the case of the typical runs‐rules; see Nelson (1985) and Montgomery (2009).

such that the charting statistic plots on or outside the control limits when the process is IC. Note that Equation 3.16 is not valid in the case of the typical runs‐rules; see Nelson (1985) and Montgomery (2009).

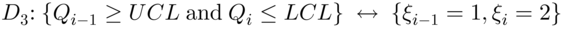

3.5.2.1 Signaling Indicators

Let the charting statistic for the ![]() subgroup be denoted by

subgroup be denoted by ![]() for

for ![]() 1, 2, 3, … Let the indicator functions for the one‐sided control charts be denoted by

1, 2, 3, … Let the indicator functions for the one‐sided control charts be denoted by

and

respectively. Thus, ![]() equals 1 and indicates a signal for an upper one‐sided chart when

equals 1 and indicates a signal for an upper one‐sided chart when ![]() plots on or above the UCL. Similarly,

plots on or above the UCL. Similarly, ![]() takes a value 1 and indicates a signal for a lower one‐sided chart when

takes a value 1 and indicates a signal for a lower one‐sided chart when ![]() plots on or below the LCL. Thus, these binary variables are called signaling indicators. For a two‐sided control chart, the signaling indicator may be denoted by

plots on or below the LCL. Thus, these binary variables are called signaling indicators. For a two‐sided control chart, the signaling indicator may be denoted by

so that ![]() indicates whether the charting statistic of

indicates whether the charting statistic of ![]() plots on or above the UCL (

plots on or above the UCL (![]() ), between the two limits (

), between the two limits (![]() ), or on or below the LCL (

), or on or below the LCL (![]() ). The values 1 and 2 indicate that there is a signal, on the high or the low side, respectively, whereas the value 0 indicates there is not a signal and that the process is IC.

). The values 1 and 2 indicate that there is a signal, on the high or the low side, respectively, whereas the value 0 indicates there is not a signal and that the process is IC.

3.5.2.1.1 The 1‐of‐1 Runs‐type Signaling Rule

The most commonly used and least complex charts are the usual Shewhart charts, called 1‐of‐1 charts, which are not supplemented by any runs‐rules. However, they are known to be insensitive in detecting small process shifts. These charts signal when the event ![]() or

or ![]() , or

, or ![]() occurs, respectively, where

occurs, respectively, where

- The 1‐of‐1 runs‐type signaling rule for an upper one‐sided chart:

.

. - The 1‐of‐1 runs‐type signaling rule for a lower one‐sided chart:

.

. - The 1‐of‐1 runs‐type signaling rule for a two‐sided chart:

.

.

Figure 3.12 illustrates points (i) and (ii) of the 1‐of‐1 runs‐type signaling rule for an upper one‐sided and a lower one‐sided chart, respectively. Panel (a) detects an upward shift at time i = 7 when ![]() plots above the UCL, whereas Panel (b) detects a downward shift at time i = 7 when

plots above the UCL, whereas Panel (b) detects a downward shift at time i = 7 when ![]() plots below the LCL. For each chart the process is declared OOC and a search for assignable causes can be started.

plots below the LCL. For each chart the process is declared OOC and a search for assignable causes can be started.

Figure 3.12 The 1‐of‐1 runs‐type signaling rule for an upper one‐sided and a lower one‐sided chart.

Figure 3.13 illustrates point (3) of the 1‐of‐1 runs‐type signaling rule for a two‐sided chart. Both charts signal at time i = 7; at first an upward shift is detected when ![]() plots above the UCL, and then a downward shift is detected when

plots above the UCL, and then a downward shift is detected when ![]() plots below the LCL.

plots below the LCL.

Figure 3.13 The 1‐of‐1 runs‐type signaling rule for a two‐sided chart.

3.5.2.1.2 The k‐of‐k and k‐of‐w Runs‐type Signaling Rules

Several runs‐type signaling rules, such as the 2‐of‐2 and 2‐of‐3 runs‐rules, have been investigated by many authors and proved that their “runs‐rules enhanced” charts outperform the 1‐of‐1 chart.

One‐sided k‐of‐k and k‐of‐w Runs‐type Signaling Rules

To illustrate, we start with the 2‐of‐2 runs‐type signaling rule. This rule uses the last two charting statistics, ![]() and

and ![]() , to determine whether the process is IC or OOC. For the 2‐of‐2 runs‐type signaling rule, an upper one‐sided chart signals when event

, to determine whether the process is IC or OOC. For the 2‐of‐2 runs‐type signaling rule, an upper one‐sided chart signals when event ![]() occurs and a lower one‐sided chart signals when event

occurs and a lower one‐sided chart signals when event ![]() occurs where

occurs where

- an upper one‐sided chart supplemented by a 2‐of‐2 runs‐type signaling rule:

- a lower one‐sided chart supplemented by a 2‐of‐2 runs‐type signaling rule:

.

.

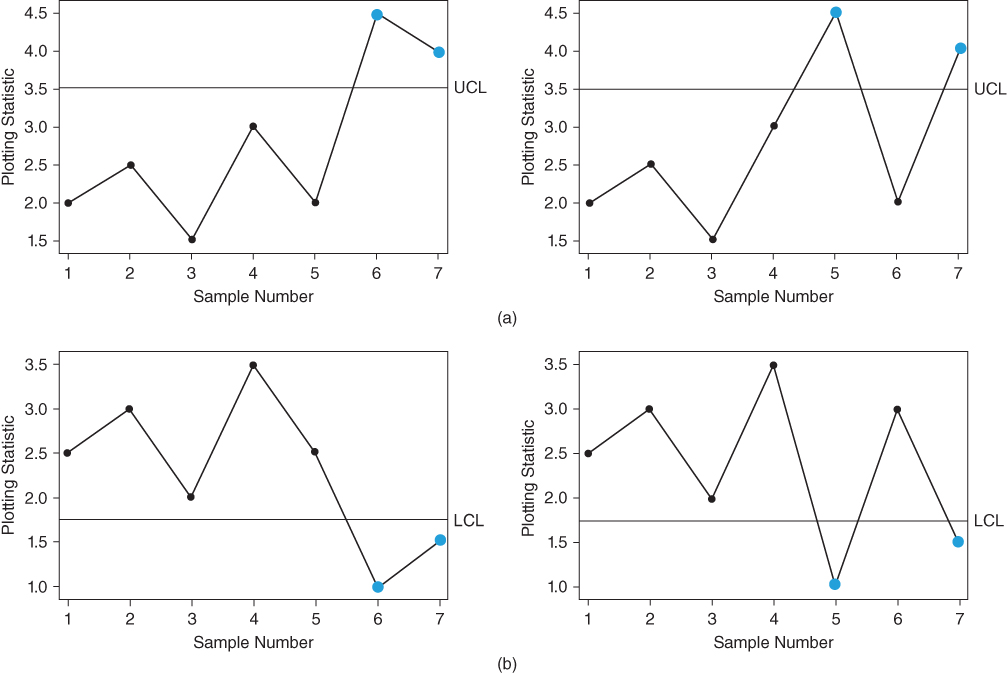

Panels (a) and (b) in Figure 3.14 illustrate these signaling events ![]() and

and ![]() , and both signal at time i = 7 where two consecutive points plots above (below) the UCL (LCL). For each chart, the process is declared OOC and a search for assignable causes can be started.

, and both signal at time i = 7 where two consecutive points plots above (below) the UCL (LCL). For each chart, the process is declared OOC and a search for assignable causes can be started.

Figure 3.14 The 2‐of‐2 runs‐type signaling rule for an upper one‐sided and a lower one‐sided chart.

Next, we illustrate the 2‐of‐3 runs‐type signaling rule for an upper one‐sided chart and a lower one‐sided chart, respectively. These charts use two of the last three charting statistics ![]() ,

, ![]() , and

, and ![]() to determine whether the process is IC or OOC. Although there are three ways that two of the three charting statistics can result in a signal, only on two of these possibilities does the last charting statistic plot on or above (below) the upper (lower) control limits. The one‐sided charts supplemented by the 2‐of‐3 runs‐type signaling rule signal when event

to determine whether the process is IC or OOC. Although there are three ways that two of the three charting statistics can result in a signal, only on two of these possibilities does the last charting statistic plot on or above (below) the upper (lower) control limits. The one‐sided charts supplemented by the 2‐of‐3 runs‐type signaling rule signal when event ![]() or

or ![]() =

= ![]() occurs where:

occurs where:

| An upper one‐sided chart supplemented by the 2‐of‐3 runs‐type signaling rule |

|

(1) (2) |

| A lower one‐sided chart supplemented by the 2‐of‐3 runs‐type signaling rule |

|

(3) (4) |

Figure 3.15 illustrates these signaling events ![]() ,

, ![]() ,

, ![]() , and

, and ![]() of an upper one‐sided chart and a lower one‐sided chart supplemented by the 2‐of‐3 runs‐type signaling rule, respectively. The upper one‐sided charts in Panel (a) signal at time i = 7 where two of the last three points plot above the UCL. For each chart, the process is declared OOC and a search for assignable causes can be started. Likewise, the lower one‐sided charts in Panel (b) signal at time i = 7 where two of the last three points plot below the LCL. For each chart, the process is declared OOC and a search for assignable causes can be started.

of an upper one‐sided chart and a lower one‐sided chart supplemented by the 2‐of‐3 runs‐type signaling rule, respectively. The upper one‐sided charts in Panel (a) signal at time i = 7 where two of the last three points plot above the UCL. For each chart, the process is declared OOC and a search for assignable causes can be started. Likewise, the lower one‐sided charts in Panel (b) signal at time i = 7 where two of the last three points plot below the LCL. For each chart, the process is declared OOC and a search for assignable causes can be started.

Figure 3.15 The 2‐of‐3 runs‐type signaling rule for an upper one‐sided chart and a lower one‐sided chart.

Some of the patterns of the 2‐of‐3 runs‐type signaling rules are excluded. If the first and the second charting statistics plot outside the control limits, but the third (or last) charting statistic plots between the control limits, the process cannot be declared OOC, because the process has shifted back to being IC and, accordingly, these patterns are excluded from the 2‐of‐3 runs‐type signaling rules. Refer to Figure 3.16, where the upper one‐sided chart supplemented by the 2‐of‐3 runs‐type signaling rule and the lower one‐sided chart supplemented by the 2‐of‐3 runs‐type signaling rule are excluded as signaling events.

Figure 3.16 The 2‐of‐3 runs‐type signaling rules that are excluded for an upper one‐sided and a lower one‐sided chart.

Two‐sided Charts Supplemented by k‐of‐k and k‐of‐w Runs‐type Signaling Rules

A two‐sided chart has both an upper and a lower control limit, thus it can detect either an upward or a downward shift. To illustrate, we start with the 2‐of‐2 runs‐type signaling rule. A two‐sided chart supplemented by a 2‐of‐2 runs‐type signaling rule is much the same as that of the one‐sided charts in the sense that it uses the last two charting statistics, ![]() and

and ![]() , to determine whether the process is IC or OOC, but it is able to detect both an upward or a downward shift. A two‐sided chart supplemented by the 2‐of‐2 runs‐type signaling rule signals when event

, to determine whether the process is IC or OOC, but it is able to detect both an upward or a downward shift. A two‐sided chart supplemented by the 2‐of‐2 runs‐type signaling rule signals when event ![]() or

or ![]() occurs where

occurs where

- both charting statistics plot on or above the UCL:

- both charting statistics plot on or below the LCL:

- the first charting statistic plots on or above the UCL and the second plots on or below the LCL:

- the first charting statistic plots on or below the LCL and the second plots on or above the UCL:

.

.

Figure 3.17 illustrates these signaling events ![]() ,

, ![]() ,

, ![]() , and

, and ![]() for the two‐sided chart supplemented by 2‐of‐2 runs‐type signaling rules, respectively. Panels (a) and (b) illustrate events

for the two‐sided chart supplemented by 2‐of‐2 runs‐type signaling rules, respectively. Panels (a) and (b) illustrate events ![]() and

and ![]() ; both charts signal at time i = 7, where two consecutive points plots above (below) the UCL (LCL). Panels (c) and (d) illustrate events

; both charts signal at time i = 7, where two consecutive points plots above (below) the UCL (LCL). Panels (c) and (d) illustrate events ![]() and

and ![]() when an upward shift is immediately followed by a downward shift, or vice versa.

when an upward shift is immediately followed by a downward shift, or vice versa.

Figure 3.17 The 2‐of‐2 runs‐type signaling rule for a two‐sided chart.

Next, we illustrate a two‐sided chart with 2‐of‐3 runs‐type signaling rules. Similar to one‐sided chart, a two‐sided chart uses two of the last three charting statistics, ![]() ,

, ![]() , and

, and ![]() , to determine whether the process is IC or OOC. There are up to 12 scenarios where exactly two of the three charting statistics can plot outside the control limits; however, we will only focus on four signaling events

, to determine whether the process is IC or OOC. There are up to 12 scenarios where exactly two of the three charting statistics can plot outside the control limits; however, we will only focus on four signaling events ![]() or

or ![]() that may occur where:

that may occur where:

| Two of the three charting statistics plot on or above the UCL |

|

(1) (2) |

| Two of the three charting statistics plot on or below the LCL |

|

(3) (4) |

Figure 3.18 illustrates these signaling events ![]() ,

, ![]() ,

, ![]() , and

, and ![]() for a two‐sided chart supplemented by 2‐of‐3 runs‐type signaling rules. Panel (a) illustrates events

for a two‐sided chart supplemented by 2‐of‐3 runs‐type signaling rules. Panel (a) illustrates events ![]() and

and ![]() , where exactly two out of the three charting statistics plot on or above the UCL, whereas Panel (b) illustrates events

, where exactly two out of the three charting statistics plot on or above the UCL, whereas Panel (b) illustrates events ![]() and

and ![]() , where exactly two out of the three charting statistics plot on or below the LCL.

, where exactly two out of the three charting statistics plot on or below the LCL.

Figure 3.18 The 2‐of‐3 runs‐type signaling rule for a two‐sided chart.

Figure 3.19 illustrates eight of the 12 scenarios that are excluded as signaling events. Panel (a) shows a possible swing detected, whereas Panel (b) illustrates a downward or upward trend in the process. As mentioned in Figure 3.16 , the four events illustrated in Panel (c) will be excluded as signaling events due to the fact that the last point plots between the control limits; therefore, the process cannot be declared OOC, because the process has shifted back to being IC.

Figure 3.19 The 2‐of‐3 runs‐type signaling rules that are excluded for a two‐sided chart.

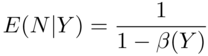

3.6 Run‐length Distribution in the Specified Parameter Case (Case K)

The performance of a control chart is analyzed via the run‐length distribution and associated characteristics such as the various moments and percentiles. We discuss the methods of calculating the run‐length distribution of control charts in this section. The discussion is general and applies to all types of control charts, including parametric and nonparametric control charts and the parameter known (Case K) and unknown (Case U) cases. We deal with Case K first.

3.6.1 Methods of Calculating the Run‐length Distribution

There are four methods to calculate (or at least approximate) the run‐length distribution of a control chart. Specifically, in Case K, these are

- the exact approach (for Shewhart and some Shewhart‐type charts);

- the Markov chain (MC) approach;

- the integral equation approach; and

- the computer simulations (the Monte Carlo) approach.

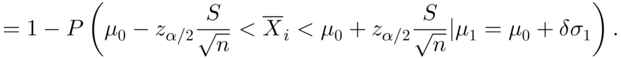

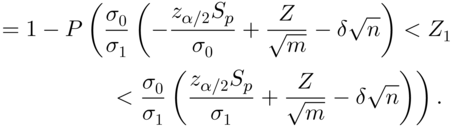

A discussion on each method follows. We consider first the case of Shewhart charts.