Chapter 10: Implementing Agile Threat Intelligence

Advanced security programs use threat-informed defense to power up their incident response and day-to-day defenses. This implies that these programs consume threat intelligence and have integrated threat intelligence with the rest of their security operations. This final chapter will deal with an approach to doing that.

Threat intelligence requires a significant amount of organizational readiness, as well as a mindset that's associated with agile. Threat intelligence (or intelligence proper) involves dealing with uncertainty, being wrong at times, taking calculated risks, and performing assessments that may only have a temporary value.

A credible threat intelligence program, which is a program in which intelligence is not only consumed, but also used, consists of several activities that are best performed in the context of agile security operations, such as curation, threat hunting, tasking, and adversary simulation.

This chapter will cover the following topics, which, when put together, describe the threat intelligence cycle:

- What threat intelligence is and isn't

- A threat intelligence program

- Direction

- Collection and collation

- Interpretation

- Dissemination

You may be wondering, what is agile about threat intelligence? Agile threat intelligence focuses on consuming and developing threat intelligence that is timely, actionable, and open to revision and adaptation when the situation requires it. This requires agility in the threat intelligence program and agility among the people operating it.

What threat intelligence is and isn't

In the previous chapters of this book, we discussed how incident response is at the core of security practices. This is also true for threat intelligence. The closure of the incident response loop, which we discussed in Chapter 3, Engineering for Incident Response, allows us to sketch the placement of a cyber threat intelligence program into security operations.

As we discussed there, the result of this retrospective in incident response is intelligence, TTPs, and the context of the incident that was just resolved. Threat intelligence is the process of gathering structured, actionable information about attackers from our own incidents, as well as from outside sources.

The role of threat intelligence in closing the incident response process is shown in the following diagram:

Figure 10.1 – The context of cyber threat intelligence

From the preceding diagram, it should be clear that threat intelligence is not just a firehose of threat data that's consumed indiscriminately – it requires context and processing to be of use to the organization. Threat intelligence, when done well, is hard work.

Context and threat intelligence processing is best described with the intelligence cycle, in which data is collected, curated, disseminated, and ultimately used. Cyber threat intelligence depends on this cycle, and the steps in the threat intelligence cycle form the components of a threat intelligence program.

The classic intelligence cycle consists of the following steps:

- Direction: The statement of the intelligence requirement. This should take the form of a specific question usually focused on a strategic or business problem.

- Collection: Defining and acquiring the essential information.

- Collation: All the essential elements from all the sources are collated into a readily accessible and searchable collection.

- Interpretation: Information is analyzed and turned into intelligence.

- Dissemination: The intelligence is communicated in written or oral form.

The purpose of a threat intelligence program is to improve the detection and prevention of attacks by using detailed knowledge about the toolset and behavior of the attacker.

The Threat Intelligence Cycle and Intelligence Failures

It should come as no surprise that intelligence failures are, in many cases, attributable to organizations making fatal mistakes in the various steps of the threat intelligence cycle. So, understanding the different steps and their implementation is key to having a better understanding of how threat intelligence may be consumed and processed. Examples of how intelligence failures may be attributable to failures in the intelligence cycle in classical intelligence have been collected in J. Hughes-Wilson, On Intelligence: The History of Espionage and the Secret World, Little, Brown Book Group, London, 2016.

The threat intelligence cycle is depicted in the following diagram. It shows the various stages that organizations must follow to use a cyber threat intelligence program:

Figure 10.2 – The threat intelligence cycle

To this end, we can use the intelligence cycle shown in the preceding diagram while keeping in mind that the dissemination of the information is not entirely in written reports, but in actual inputs to detection and prevention – that is, tasking our infrastructure with that specific detection.

A threat intelligence program

How does the intelligence cycle fit with the entirety of the security program? In the context of what we discussed in Chapter 7, How Secure Are You? – Measuring Security Posture, threat intelligence is a capability that has strategic value, both for the security program as well as for the entire organization.

In the context of implementing threat intelligence, we will focus on acquiring threat intelligence from outside sources, and then also consider what is required to implement a threat intelligence program.

Acquiring threat intelligence

Commercial or community-based threat intelligence is usually provided in the form of feeds, which are formatted into a protocol that can be used to share threat intelligence, such as STIX and TAXII or MISP. Community feeds are usually shared under the traffic light protocol, which we discussed in Chapter 1, How Security Operations Are Changing. Commercial feeds also have commercial terms regarding their use and dissemination throughout the organization.
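To make the idea of a feed entry more concrete, the following is a minimal sketch of what a single indicator might look like when expressed as a STIX 2.1-style object, built with nothing but the Python standard library. The helper function, the domain, and the indicator name are made up for illustration; in practice, such objects would usually arrive through a TAXII server or a MISP instance rather than being built by hand.

```python
import json
import uuid
from datetime import datetime, timezone


def make_domain_indicator(domain: str, name: str) -> dict:
    """Build a minimal STIX 2.1-style indicator for a domain (illustrative helper)."""
    now = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S.000Z")
    return {
        "type": "indicator",
        "spec_version": "2.1",
        "id": f"indicator--{uuid.uuid4()}",
        "created": now,
        "modified": now,
        "name": name,
        # STIX patterning: match any observed domain name with this value.
        "pattern": f"[domain-name:value = '{domain}']",
        "pattern_type": "stix",
        "valid_from": now,
    }


# Hypothetical example values; not taken from any real feed.
indicator = make_domain_indicator("malicious.example", "Hypothetical phishing domain")
print(json.dumps(indicator, indent=2))
```

Even this small object shows why context matters: the pattern tells us what to look for, but not which threat group it belongs to or why it is relevant to our business.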

Threat intelligence can come in various types:

- Strategic: Strategic threat intelligence is intelligence at the level of the business or sector that the organization operates in. It consists of threat landscape surveys, threat reports for specific threat groups, or new and somewhat unknown varieties of compromise for business processes. Strategic threat intelligence lets the business make changes to its strategy and has long-term value.

- Tactical: Tactical threat intelligence focuses on the operations of specific threat groups, as well as the methods that are being used by threat groups to compromise their victims. Tactical threat intelligence is of medium-term value and allows an organization to design and deploy both detections and defenses, as well as enable threat hunting.

- Operational: Operational threat intelligence focuses on distributing indicators of compromise or specific FQDNs that are being used for malicious activities and allows an organization to deploy immediate low-level detections, usually at the bottom end of the pyramid of pain. The pyramid of pain is a classification scheme for indicators (it was also referenced in Chapter 3, Engineering for Incident Response, and Chapter 6, Active Defense).

Running your own function

Apart from accessing threat intelligence through an outside feed, it is also possible to form and operate an internal threat intelligence team, either as a part of incident response or as an entirely separate function in the security team.

A cyber threat intelligence program is based on the threat intelligence cycle but also incorporates some modifications to this cycle. As an example, we have the collection phase, which may involve the sort of activities that people associate with spying in traditional intelligence. In the cyber variety, it involves gathering data from intelligence sources, performing reconnaissance activities on adversaries, and using known patterns of attack from the ATT&CK matrix or similar sources.

In cyber threat intelligence, the collation and interpretation phases primarily focus on how to deal with large and complex data sources and may involve data mining and artificial intelligence to make sense of it all.

A team can start the threat intelligence function in a low-key manner once they close the incident loop in the manner discussed in Chapter 3, Engineering for Incident Response, and consider the threat-based improvements in their infrastructure. A team may then opt to share these findings with an outside group of organizations that they team up with and trust under the traffic light protocol, for instance.

Using threat intelligence

But the biggest modification that is made in cyber threat intelligence is in dissemination, which, in traditional intelligence terms, means writing a report and sharing it with the people that have been cleared to read that report. In cyber threat intelligence, while you may do such reporting, dissemination is also a key element of our old friend detection engineering, but its benefits are not limited to just that.

Specifically, the dissemination component focuses on operationalizing threat intelligence so that it becomes useful to the business. Operationalization can take three forms:

- Detection engineering, where the new intelligence is translated into specific detections that are then deployed.

- Threat hunting, where the new intelligence is used to inform hunt queries. Tactical threat intelligence is particularly useful in this scenario.

- Infrastructure hardening, where operational threat intelligence is deployed in static defenses to harden the infrastructure against those specific threats.

In this last scenario, dissemination involves tasking detection and prevention infrastructure with up-to-date indicators of compromise that have been collected through the intelligence program, so that it can detect or prevent the attack. This is a form of infrastructure hardening that makes it more resilient against attacks.

Note

On the first.org website, you can find a detailed course on threat intelligence: https://www.first.org/education/trainings#FIRST-Threat-intel-Pipelines-Course.

In terms of the intelligence cycle, in cyber threat intelligence, we must consider the following:

- Direction: The direction phase is guided by considering threats and risks to the organization, ranging from the activities of specific threat groups to the risks to the sector the business operates in.

- Collection: In the collection phase, we can consider traditional news sources for reports of attacks in our industry or sector, review the outcome of our current security incidents, monitor leaked enterprise accounts that appear in dumps, become part of a threat sharing group, or monitor exploits for our most frequent vulnerabilities.

- Collation: All the essential elements from all the sources are collated into a readily accessible database, which is commonly called a threat intelligence platform. The collation process describes the process whereby all the elements that make up the intelligence product are put together in a form that allows analysis.

- Interpretation: In this phase, the information is analyzed and enriched by combining several elements. This is often a manual process, which can follow some of the techniques we discussed earlier for analyzing incidents.

- Dissemination: In this phase, the intelligence that's been collected is communicated in written or oral form to an audience. This may include organizational leadership, internal stakeholders, and external parties as required. This phase also involves looking at our defensive infrastructure through detection engineering.

In the remainder of this chapter, we will discuss these steps in more detail and outline operational procedures that describe how they can be implemented.

Direction

Running a threat intelligence program is hard and may be expensive. In Chapter 7, How Secure Are You? – Measuring Security Posture, we discussed the elements of a risk and strategy framework that allows the security posture of the business to be measured. In this section, we will apply that framework to threat intelligence collection.

The critical success factors for direction are as follows:

- Having a robust understanding of how impacts on capabilities and operations translate into business risk

- Realizing that past incidents are a partial, but not complete, guide to the future

- Combining threat modeling and business modeling into attack path modeling to understand the financial underpinnings of risk reduction

Let's now discuss the risk reduction strategies.

Understanding risk reduction

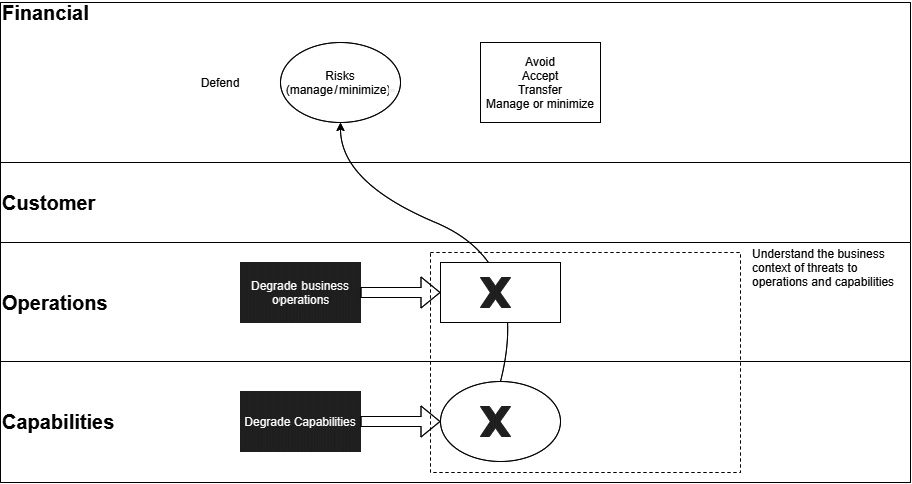

In terms of the risk framework, we are interested in the potential for threats to impact business operations and capabilities.

We discussed the strategy development model in Chapter 7, How Secure Are You? – Measuring Security Posture. This model also gives us a way to translate (qualitatively) the degradation of operations or capabilities into risks to the business by considering how such a degradation affects the various customers and their finances.

The following diagram shows how, in this model, the degradation of processes and capabilities translates into business risks:

Figure 10.3 – Degradation of capabilities flowing into business risk

From this, it is clear that cyber defenders need to have robust insights into the key business processes and how they affect customers to develop a view regarding which threats would lead to the largest risks.

We can combine this understanding with our discussion of use cases from Chapter 6, Active Defense, to develop a good view of which particular use cases would be the most damaging to the business, and then focus on those.

Using past attacks as a guide

Another way to get a handle on direction is to close the incident loop and look at all past attacks, successful or not, as events that are likely to happen again in the future. This is not to say that past events form a complete guide to what may happen in the future. But past events provide a partial guide into the future – one where we may combine past experiences with our lessons learned to get better future outcomes.

Groups that have attacked us in the past, especially in cases where we have decided that they are advanced persistent groups, are likely to be back in the future, with tactics and techniques that are variations on the ones used in the attacks we have already come across and resolved. Closing the incident loop means we are already familiar with those tactics and techniques and are more likely to recognize variations of them in the future.

Scoping prospective groups

In addition to the knowledge about attacks that have already occurred and the groups behind them, we can also scope prospective groups that are, for instance, active in our industry to get a view of who may attack us in the future. In Chapter 6, Active Defense, we discussed how we can use a combination of news feeds, threat intelligence platforms, and even indictments to map out new potential adversaries.

Business capabilities and operational context

Combining all this information should give us a decent overview of the most active threats for our organizations as well as the tactics, techniques, and, to some degree, procedures associated with those threats.

At this point, we must map these threats and their tactics to business capabilities and operations. Threats pose a risk precisely because of their capability to disrupt or destroy capabilities and business processes. In addition to these TTPs, the impact tactic in the last column of the ATT&CK framework (https://attack.mitre.org/tactics/TA0040/) can also help map the impact of threats, both real and perceived, to processes and capabilities.

By considering past attacks as well as prospective attacks, we can build a retrospective and prospective view of business risk.

In the retrospective view, we are fairly certain about the impact that a threat group will have on our infrastructure because we have been here before: the impact of the attacks from that group and their copycats is broadly known. However, we are uncertain whether this group will be back. They may or may not.

In the prospective view, we have additional uncertainty about both the likelihood of the attack as well as its impact. Both retrospective and prospective views work together to establish an estimate of the threat landscape being faced by the organization.

The direction of cyber threat intelligence may also include information about our organization, how well it is organized, and the defenses that are already in place, all of which are evaluated against the assessment of the threat landscape.

The influence on direction

In the direction phase, the organization defines the objectives of its threat intelligence program: the intelligence it wants to collect and what it wants to do with it. The direction question, since it focuses on risk, can take several different forms:

- Which cyber threat adversaries are the most dangerous to our organization? Why?

- How well is our organization prepared to counter these threats? What does the environment look like from the viewpoint of a defender and the viewpoint of an attacker?

- What critical information would be required by the executive team to make decisions about responding to an incident, should the scenario outlined in the threat assessment eventuate?

The direction phase of a threat intelligence program is a strategic phase that is unique to each organization. Organizations need to organically consider threats and their impact on their systems and ask specific, directed, and answerable questions related to their threat intelligence program.

Collection and collation

Once the direction of threat intelligence has been established, we need to consider how the data is collected and treated so that it can answer the directive question(s).

Threat intelligence is usually made available as a feed, which contains information about various artifacts (usually somewhat down on the pyramid of pain) that constitute various degrees of threat to the organization. But feeding data like this is meaningless if it is not combined with a business context.

The data funnel

A concept that is useful for describing the process of generating threat intelligence in the business is shown in the following diagram, which depicts a funnel where data originating in our own organization is progressively collected, put together, and analyzed:

Figure 10.4 – The threat intelligence data funnel

In this model, the environment generates logging and event data, which is collected and collated (put together) in a single place and combined with a threat feed of known (usually lower level) indicators of malicious activity. The question then is whether, at some point, the information available from our logs matches the activity.

As we can also see from this diagram, external feeds are only one part of the possible threat intelligence that we have access to, with the other ones being anomalies and past incidents.

When anomalies are followed up consistently, we get to the practice of threat hunting, which aims to establish whether the observed anomalies are part of an existing threat by following some leads that have been generated by threat intelligence. We discussed threat hunting in Chapter 8, Red, Blue, and Purple Teaming.

We have already argued that past incidents provide a robust guide to the threats faced by our organization since we understand past events from the viewpoint of business impact, not just technical indicators. Closing the incident loop is all about ensuring that we obtain the most intelligence from past incidents and reuse them for future benefit.

External feeds

Threat intelligence vendors work hard to monitor the corners of the internet where we usually don't go, such as underground forums where attackers may go to exchange methods, victim information, or planned attacks. They then translate this information into early warnings for their customers. In addition, commercial threat feed vendors can feed us IOCs that are associated with threat groups.

Commercially available threat feeds can lead to a spigot of data, usually without much business context. As a rule, the feed data sits lower in the pyramid of pain, which we briefly mentioned in Chapter 3, Engineering for Incident Response.

It makes little sense to just go out and buy a threat feed. Some considerations for threat feeds are as follows:

- How specific is the threat feed with regard to our business environment, and does the information supplied by the vendor match with my understanding of the major risks my organization faces?

- Does the threat data usefully extend my understanding of those risks?

- Is the format that the data is supplied in consistent with the platform I am using to store my data?

I hope this model makes it clear that external threat feeds are important, but that they are by no means the only way of getting threat intelligence.

Feeds meeting internal logs

Feeds can be matched to log events to create sightings: positive confirmation of some bad actors on our network. Sightings should be further investigated as part of our incident response processes.
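As an illustration of how such matching could work, here is a minimal sketch that compares a small set of feed indicators against log events and records each match as a sighting. The field names (dest_domain, dest_ip, file_hash), the feed names, and the sample data are assumptions made for this example; a real implementation would run inside the SIEM or threat intelligence platform and would work on normalized log data.

```python
from dataclasses import dataclass


@dataclass
class Sighting:
    indicator: str     # the feed value that was seen in our logs
    source_feed: str   # which feed supplied the indicator
    event: dict        # the log event that matched


def find_sightings(feed: dict[str, str], log_events: list[dict]) -> list[Sighting]:
    """Return a sighting for every log field whose value appears in the feed.

    feed maps an indicator value (domain, IP address, file hash) to its source feed.
    """
    sightings = []
    for event in log_events:
        # Hypothetical field names; adjust these to the local log schema.
        for field in ("dest_domain", "dest_ip", "file_hash"):
            value = event.get(field)
            if value in feed:
                sightings.append(Sighting(value, feed[value], event))
    return sightings


feed = {"malicious.example": "community-feed", "203.0.113.7": "vendor-feed"}
log_events = [
    {"host": "ws-01", "dest_domain": "intranet.example"},
    {"host": "ws-02", "dest_domain": "malicious.example"},
    {"host": "srv-03", "dest_ip": "203.0.113.7"},
]

sightings = find_sightings(feed, log_events)
for s in sightings:
    print(f"{s.event['host']}: {s.indicator} (from {s.source_feed})")
```

Each sighting keeps the full log event, so the analyst who picks it up in incident response has the surrounding context and not just the indicator.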

We also need to ensure that we log data that is meaningful in a threat intelligence context. To that end, we must devise a logging strategy, as discussed in Chapter 3, Engineering for Incident Response, and Chapter 6, Active Defense. Following the TTPs in the ATT&CK framework can assist in determining which events to log and how.

This stage of the threat intelligence program is not particularly different from the security practices we have already discussed in terms of its implementation. This changes once we get to the next stage.

Interpretation

Interpretation focuses on how to make sense of threat intelligence at the level of our IT systems as well as the business, and ultimately the threat groups and risks. Whereas most incident response practices focus on resolving the incidents, generating threat intelligence from incident data involves generalizing the observed data into a set of TTPs and evaluating those against the possible objectives of the attackers.

Using structured analytic techniques

The analytic tools we can use to do this are structured analytic techniques, which we have already mentioned in Chapter 4, Key Concepts in Cyber Defense. These structured analytic techniques can help us generate and test hypotheses that explain the observed data patterns and can assist us with generating the TTPs related to a specific attack.

This is not a simple process as it relies on trial and error, as well as flexibility in revising prior views. To get good threat intelligence, we need to have a good explanation of each event and robust analysis, which is not easy to come by.

Threat groups

Data from multiple attacks, once it's been collected and documented, can lead us to threat groups if we cluster the TTPs that we have observed. The idea behind this is that threat groups have a business model that reflects the things they know how to do best and most easily. What characterizes an attack group is this business model alongside its objectives. Some of the variables that influence attack clustering are as follows:

- The tactics and methodology followed by the group to compromise their victims. Some groups focus on phishing for credentials, while others specialize in malware-laden office documents.

- Imputed objectives: some groups are financially motivated, while others attempt to steal intellectual property or perform extortion.

- The industry segments that are targeted by a group.

- The malware families that are used by the group.

- The exploits that are used by a group.

It usually takes more than one attack to correctly characterize the threat group behind it, but especially for persistent threats, such efforts pay off impressively.
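The variables listed above can be treated as features of an attack, which suggests a very simple way to experiment with clustering. The following sketch, which is only one of many possible approaches and not a production algorithm, describes each attack as a set of features and groups attacks whose sets overlap sufficiently, using Jaccard similarity. The incident names, malware names, and the 0.5 threshold are invented for illustration; the technique IDs are ATT&CK identifiers used as example labels.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Share of features two attacks have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def cluster_attacks(attacks: dict[str, set[str]], threshold: float = 0.5) -> list[set[str]]:
    """Greedy clustering: an attack joins the first cluster holding a similar attack."""
    clusters: list[set[str]] = []
    for name, features in attacks.items():
        for cluster in clusters:
            if any(jaccard(features, attacks[member]) >= threshold for member in cluster):
                cluster.add(name)
                break
        else:
            # No existing cluster is similar enough, so start a new candidate group.
            clusters.append({name})
    return clusters


# Hypothetical incidents described by technique, targeted sector, and malware family.
attacks = {
    "incident-A": {"T1566", "T1059", "sector:finance", "malware:loaderX"},
    "incident-B": {"T1566", "T1059", "sector:finance", "malware:loaderY"},
    "incident-C": {"T1190", "T1486", "sector:health", "malware:lockerZ"},
}
clusters = cluster_attacks(attacks)
print(clusters)
```

In this toy data set, the first two incidents share three of their five distinct features and end up in the same candidate group, while the third stands alone.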

Note

An article discussing a clustering algorithm for threat groups can be found here: https://www.fireeye.com/blog/threat-research/2019/03/clustering-and-associating-attacker-activity-at-scale.html. This is one of the many ways in which you can cluster groups.

Clustering is sometimes made more difficult because of certain threat groups only specializing in some aspects of intrusion, where the results are then sold to other groups. Access brokers, for instance, specialize in gaining access to victims but may sell this access to other groups.

In other cases, common malware is sold to anyone who is considering becoming a bad actor on the internet, so it is not uncommon to see such malware in use across several groups at the same time.

Both trends make clustering harder, which is why it can take multiple years and large amounts of attack data before a trustworthy assignment can be made.

There is no standard terminology for candidate threat groups, with Mandiant using the UNC prefix and Microsoft using the DEV prefix. Once the attack groups have been categorized, however, the common prefixes for the group names are APT for advanced persistent threat and FIN for financially motivated groups. At the time of writing, many ransomware gangs, if they have been classified, are in the FIN category.

Dissemination

Disseminating cyber threat intelligence focuses on how we use the result of the threat intelligence exercise. It can occur in various forms.

The extended data funnel for threat intelligence, as outlined in the following diagram, mentions a few components: risk analysis, alerting, detection engineering, and tasking. In the following diagram, we are not representing the external threat feeds as a specific input:

Figure 10.5 – Closing the threat intelligence loop

These elements play out at different levels of the organization. Risk analysis focuses on the strategic aspect of security operations and considers the impact on the business. Alerting, detection engineering, and tasking play out at the tactical level of security operations.

Risk analysis

Intelligence about a threat group can be used to assess the cost to the business of the typical impacts that result from that group's attacks. Combined with the group's TTPs, this establishes the risk the group poses to our organization.

From our discussion in Chapter 1, How Security Operations Are Changing, we know that risk is the combination of a threat event, which we can get a handle on with cyber threat intelligence, our vulnerability, and the impact caused by the event (Figure 1.1 and the surrounding discussion). A cyber threat intelligence program allows us to correctly estimate the risks that are posed by the threat groups that are active in our sector.
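A simple way to experiment with this combination is to score each factor on an ordinal scale and rank threat groups by the result. The sketch below does exactly that. Note that the 1 to 5 scales, the multiplication of the three factors, and the group names are assumptions made for illustration only; they are not a standard formula, and a real risk assessment would be grounded in the organization's own risk framework.

```python
from dataclasses import dataclass


@dataclass
class ThreatAssessment:
    group: str
    likelihood: int     # 1 (unlikely) to 5 (expected), informed by threat intelligence
    vulnerability: int  # 1 (well defended) to 5 (exposed)
    impact: int         # 1 (minor) to 5 (severe business impact)

    def risk_score(self) -> int:
        # Multiplying the factors is an illustrative choice, not a standard formula.
        return self.likelihood * self.vulnerability * self.impact


# Hypothetical groups and scores.
assessments = [
    ThreatAssessment("hypothetical-group-B", likelihood=2, vulnerability=4, impact=3),
    ThreatAssessment("hypothetical-group-A", likelihood=4, vulnerability=2, impact=5),
]

ranked = sorted(assessments, key=lambda a: a.risk_score(), reverse=True)
for a in ranked:
    print(a.group, a.risk_score())
```

The value of such a ranking lies less in the numbers themselves than in the discussion it forces about which factor drives the risk for each group.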

Alerting, hunting, and detection

Having a good handle on the threat groups that are active in our environment allows us to design a better alerting and detection framework that takes this knowledge into account.

Detection

We have already discussed the practice of detection engineering, which amounts to treating detections as code that needs to be continuously updated so that the improvements stay relevant to the user. A cyber threat intelligence program provides the direction for that process by outlining which threat actors are relevant, what their TTPs look like, and what these threat actors look like early in the kill chain.

In this way, we can develop and continuously improve detections for these actors that catch them early rather than later.

The threat intelligence input to detection engineering can take different forms:

- Indicators of compromise (IOCs), which consist of specific file hashes, FQDNs, IP addresses, packet signatures, or other low-level threat data that can be put into detection tooling almost directly.

- Collections of this data, such as in a sigma rule, which can be processed programmatically to be deployed into detection infrastructure or the SIEM.

- Tactical threat intelligence, which usually leads to the development of new detections. To go from tactical intelligence to new detections, you need to consider how this tactic or technique may be detected or prevented.

Sigma Rules

Sigma rules are generic rules that describe the signatures of threats and how they can be detected in general, so that the rules become portable between systems. A converter program can be used to translate these sigma rules into the specific formats that are used by a SIEM or log aggregation platform.
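To illustrate the idea of conversion, the following toy sketch translates a heavily simplified, sigma-style rule into a generic search string. It is not the converter from the Sigma project: real rules are written in YAML, support modifiers and richer conditions, and are translated by dedicated tooling. The rule content, the to_query function, and the output syntax are all assumptions made for this example.

```python
def to_query(rule: dict) -> str:
    """Translate a simplified sigma-style rule into a generic search string.

    Supports only a single 'selection' with exact-match fields; lists become OR groups.
    """
    detection = rule["detection"]
    if detection.get("condition") != "selection":
        raise ValueError("this sketch only supports 'condition: selection'")
    clauses = []
    for field, expected in detection["selection"].items():
        values = expected if isinstance(expected, list) else [expected]
        options = " OR ".join(f'{field}="{v}"' for v in values)
        # Parenthesize OR groups so they combine correctly with AND.
        clauses.append(f"({options})" if len(values) > 1 else options)
    return " AND ".join(clauses)


# Hypothetical rule, written as a Python dict instead of YAML for brevity.
rule = {
    "title": "Hypothetical PowerShell started by a document editor",
    "logsource": {"category": "process_creation", "product": "windows"},
    "detection": {
        "selection": {
            "Image": ["powershell.exe", "pwsh.exe"],
            "ParentImage": "winword.exe",
        },
        "condition": "selection",
    },
}
print(to_query(rule))
```

The point of the exercise is portability: the same rule could be fed to a different backend function to produce the query language of another platform.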

Threat hunts

Threat intelligence information can also be used in the case of threat hunts. Threat intelligence information allows us to search our past data and logs with the help of specific intelligence-driven hunt leads: analytic queries to look for evidence of the presence of the group we have received intelligence about.

Hunting is best deployed in combination with tactical threat intelligence, which involves focusing on the tactics of the group that is the hunt target to ensure that its chosen techniques and procedures can be detected and mitigated.

Infrastructure hardening

One of the most interesting results that can flow from a threat intelligence program is the capability to harden our infrastructure with the specific technical indicators that are associated with the threat groups that we want to protect our organization against.

It is a great bonus to be able to be specific about such groups, rather than having to deploy every potential indicator related to all groups. The latter approach is likely to overwhelm our infrastructure and processes, long before it even finishes, as blocklists become longer, data is loaded indiscriminately, and we have a non-existent process for weeding out stale data from the blocklist.

Some considerations and key questions to ask when considering this hardening are as follows:

- Automation is essential but needs to be driven by judicious discrimination in what to load, lest we overwhelm the system.

- Loading data is as important as unloading data. We cannot afford a situation where blocklists just get indiscriminately longer and longer.

- The timeliness of the data. It makes no sense to block URLs and hashes that were relevant, say, 2 weeks ago, but are no longer used by the attackers.
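The considerations above can be sketched as a small maintenance routine that loads fresh indicators and unloads stale ones in a single pass. The 14-day maximum age, the function name, and the sample domains are assumptions for illustration; the right retention period depends on the indicator type and on how quickly the relevant threat groups rotate their infrastructure.

```python
from datetime import datetime, timedelta, timezone


def refresh_blocklist(blocklist, new_indicators, now, max_age=timedelta(days=14)):
    """Load fresh indicators and unload stale ones.

    blocklist maps an indicator to the last time intelligence reported it.
    """
    updated = dict(blocklist)
    for indicator in new_indicators:
        updated[indicator] = now  # loading: add or refresh the last-seen time
    return {
        indicator: last_seen
        for indicator, last_seen in updated.items()
        if now - last_seen <= max_age  # unloading: drop entries not seen recently
    }


now = datetime(2022, 1, 31, tzinfo=timezone.utc)
blocklist = {
    "old.example": now - timedelta(days=30),    # stale, should be unloaded
    "recent.example": now - timedelta(days=3),  # still within the retention window
}
blocklist = refresh_blocklist(blocklist, ["new.example"], now)
print(sorted(blocklist))
```

Because loading and unloading happen in the same routine, the blocklist cannot silently grow without bound.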

Like everything we have discussed in this book, creating, developing, and using threat intelligence is a cycle of continuous improvement that requires agile processes with rapid feedback and incremental improvement.

Summary

In this final chapter, we discussed creating, consuming, and utilizing threat intelligence to strengthen an already existing program so that it can perform threat-informed defense. Threat-informed defense is driven by having a robust program for performing incident management and extracting intelligence from incident data, as well as observations, engineered detections, and actions.

A point we have not discussed in this chapter, but one that is worth mentioning, is that a well-executed threat intelligence program can significantly improve the standing of security operations at an executive level, where people deal with risks. Threat intelligence, when done well, makes such risks not only quantifiable but also visible.