Chapter 14. BGP High Availability

The following topics are covered in this chapter:

![]() BGP Graceful-Restart

BGP Graceful-Restart

![]() BGP SSO and Nonstop Routing

BGP SSO and Nonstop Routing

![]() BFD

BFD

![]() Fast External Failover

Fast External Failover

![]() Route Dampening

Route Dampening

![]() BGP Add-Path

BGP Add-Path

![]() BGP Prefix-Independent Convergence

BGP Prefix-Independent Convergence

BGP Graceful-Restart

The BGP Graceful-Restart (GR) feature allows a BGP speaker to express its ability to preserve forwarding state during Border Gateway Protocol (BGP) restart or Route Processor (RP) switchover. In other words, it is the capability exchanged between the BGP speakers to indicate its ability to perform Nonstop Forwarding (NSF). This helps in minimizing the impact of services caused by BGP restart. Specially in large network deployments, where BGP carries large number of prefixes, a BGP restart, especially by a route-reflector (RR) router, can have a severe performance and service impact and can lead to major outages.

Examine the network topology shown in Figure 14-1. R1 is acting as the RR and its peering with multiple clients. If there is a BGP restart or RP switchover on R1, the peer detects the session flaps and propagate routing updates throughout the network. This can lead to increased CPU utilization if the RR is holding a large BGP table. The traffic destined to the prefixes that were removed are impacted.

RFC 4724 defines the GR mechanism for BGP. The BGP GR was developed with the following motivations:

![]() Avoid widespread routing changes.

Avoid widespread routing changes.

![]() Decrease control plane overhead throughout the network.

Decrease control plane overhead throughout the network.

![]() Enhance overall stability of routing.

Enhance overall stability of routing.

A GR-capable device announces its ability to perform GR for the BGP peer. It also initiates the graceful-restart process when a RP switchover occurs and acts as a GR-aware device. A GR-aware device, also known GR helper mode, is capable of understanding that a peer router is transitioning and takes appropriate actions based on the configuration or default timers.

GR capability should always be enabled for all routing protocols, especially when the routers are running with dual route processors (RP) and perform a switchover in case of any failure instance. Because BGP runs on Transmission Control Protocol (TCP), GR should be enabled on both the peering devices. After GR is configured or enabled on both peering devices, reset the BGP session to exchange the capability and activate the GR feature.

Note

GR is always on by default for non-TCP–based protocols such as Interior Gateway Protocol (IGPs). These protocols start operating in GR mode as soon as the other side is configured with GR capability.

BGP GR is an optional feature and is not enabled by default. BGP peers announce GR capability in the BGP OPEN message. Within the OPEN message, the following information is negotiated:

![]() Restart Flag: This bit indicates if a peer sending the GR capability has just restarted. This is used to prevent deadlocks if both peers restart at the same time.

Restart Flag: This bit indicates if a peer sending the GR capability has just restarted. This is used to prevent deadlocks if both peers restart at the same time.

![]() Restart Time: Indicates the length of time that the sender of the GR capability requires to complete a restart. The restart timer also helps in speeding up convergence in the event the peer never comes back up after a restart.

Restart Time: Indicates the length of time that the sender of the GR capability requires to complete a restart. The restart timer also helps in speeding up convergence in the event the peer never comes back up after a restart.

![]() Address-Family Identifier (AFI)/Subaddress-Family Identifier (SAFI): Address-family for which GR is supported.

Address-Family Identifier (AFI)/Subaddress-Family Identifier (SAFI): Address-family for which GR is supported.

![]() AFI Flags: It contains a Forwarding State bit. This bit indicates whether the peer sending the GR capability has preserved forwarding during the previous restart.

AFI Flags: It contains a Forwarding State bit. This bit indicates whether the peer sending the GR capability has preserved forwarding during the previous restart.

Peers can include GR capability without including any address-families. This implies GR awareness (nonrestarting support for GR) without the ability to perform a GR.

When a BGP restart happens on the peer router or when RP switchover occurs, the routes currently held in the forwarding table; that is, hardware, are marked as stable. This way, the forwarding state is preserved as the control plane and the forwarding plane operate independently. On the restarting peer (where the switchover occurred), BGP on the newly active RP starts to establish sessions with all the configured peers. BGP on the other side, the nonrestarting side, sees new connection requests coming in while BGP already is in established state. Such an event is an indication for the nonrestarting peer that the peer has restarted. At this point, the restarting peer sends the GR capability with Restart State bit set to 1 and Forwarding State bit set to 1 for the AFI/SAFIs.

The nonrestarting peer at this point cleans up old (dead) BGP sessions and marks all the routes in the BGP table that are received from the restarting peer as stale. If the restarting peer never reestablishes the BGP session, the nonrestarting peer purges all stale routes after the Restart Time expires. The nonrestarting peer sends an initial routing table update, followed by an End-of-RIB (EoR) marker. Restarting peer delays best-path calculation for an AFI until after receiving EoR from all peers except for those that are not GR capable or for the ones that have Restart State bit set.

The restarting peer finally generates updates for its peers and sends the EoR marker for each AFI after the initial table is sent. The nonrestarting peers receive the routing updates from the restarting peer and remove stale marking for any refreshed route. It purges any remaining stale routes after EoR is received from the restarting peer or the Stale Path Timer expires.

GR can be configured both globally or on a per neighbor basis. Use the command bgp graceful-restart to enable GR globally. Example 14-1 demonstrates the global configuration of GR on Cisco IOS, IOS XR, and NX-OS platforms. Use the command bgp graceful-restart restart-time value to set the GR restart timer and the command bgp graceful-restart stalepath-time value to set the maximum time for which the router will maintain the stale path entries in case it does not receives an EoR from the restarting peer. In IOS XR, the command bgp graceful-restart stalepath-timer sets the maximum time to wait for restart of GR capable peers and a new command is introduced to take care of purging the stale paths from the peer—bgp graceful-restart purge-time value.

Example 14-1 Global Configuration for Graceful-Restart

! Configuration on Cisco IOS

R1(config)# router bgp 100

R1(config-router)# bgp graceful-restart

R1(config-router)# bgp graceful-restart restart-time 300

R1(config-router)# bgp graceful-restart stalepath-time 400

! Configuration on IOS XR

RP/0/0/CPU0:R2(config-line)# router bgp 100

RP/0/0/CPU0:R2(config-bgp)# bgp graceful-restart

RP/0/0/CPU0:R2(config-bgp)# bgp graceful-restart restart-time 300

RP/0/0/CPU0:R2(config-bgp)# bgp graceful-restart stalepath-time 400

RP/0/0/CPU0:R2(config-bgp)# bgp graceful-restart purge-time 400

RP/0/0/CPU0:R2(config-bgp)# commit

! Configuration on NX-OS

R3(config)# router bgp 100

R3(config-router)# graceful-restart

R3(config-router)# graceful-restart restart-time 300

R3(config-router)# graceful-restart stalepath-time 400

If the BGP session is already in established state before GR configuration, the BGP sessions are required to be reset in order to exchange the GR capability. The GR capability is verified by using the command show bgp afi safi neighbors ip-address. Examine the output of show bgp ipv4 unicast neighbors ip-address in Example 14-2. Notice that in the command output, the GR capability is in advertised and received state. If either the advertised or received state is missing, it means that one of the peers is not having GR configured or the GR was configured after the session came up.

Example 14-2 Verifying GR Capability for BGP Neighbor

! Command Output on Cisco IOS

R1# show bgp ipv4 unicast neighbors 192.168.2.2

BGP neighbor is 192.168.2.2, remote AS 100, internal link

BGP version 4, remote router ID 192.168.2.2

BGP state = Established, up for 01:10:35

Last read 00:00:30, last write 00:00:29, hold time is 180, keepalive interval is

60 seconds

Neighbor sessions:

1 active, is not multisession capable (disabled)

Neighbor capabilities:

Route refresh: advertised and received(new)

Four-octets ASN Capability: advertised and received

Address family IPv4 Unicast: advertised and received

Graceful Restart Capability: advertised and received

Remote Restart timer is 300 seconds

Address families advertised by peer:

IPv4 Unicast (was not preserved

Enhanced Refresh Capability: advertised

! Output omitted for brevity

! Command Output on IOS XR

RP/0/0/CPU0:R2# show bgp ipv4 unicast neighbors 192.168.1.1

BGP neighbor is 192.168.1.1

Remote AS 100, local AS 100, internal link

Remote router ID 192.168.1.1

Cluster ID 192.168.2.2

BGP state = Established, up for 01:11:37

NSR State: None

Last read 00:00:41, Last read before reset 01:11:39

Hold time is 180, keepalive interval is 60 seconds

Configured hold time: 180, keepalive: 60, min acceptable hold time: 3

Last write 00:00:31, attempted 19, written 19

Second last write 00:01:31, attempted 19, written 19

Last write before reset 01:11:39, attempted 82, written 82

Second last write before reset 01:11:46, attempted 19, written 19

Last write pulse rcvd May 12 05:12:40.534 last full not set pulse count 267

Last write pulse rcvd before reset 01:11:39

Socket not armed for io, armed for read, armed for write

Last write thread event before reset 01:11:39, second last 01:11:39

Last KA expiry before reset 00:00:00, second last 00:00:00

Last KA error before reset 00:00:00, KA not sent 00:00:00

Last KA start before reset 00:00:00, second last 00:00:00

Precedence: internet

Non-stop routing is enabled

Graceful restart is enabled

Restart time is 300 seconds

Stale path timeout time is 400 seconds

Multi-protocol capability received

Neighbor capabilities:

Route refresh: advertised (old + new) and received (old + new)

Graceful Restart (GR Awareness): received

4-byte AS: advertised and received

Address family IPv4 Unicast: advertised and received

Received 140 messages, 1 notifications, 0 in queue

Sent 126 messages, 1 notifications, 0 in queue

Minimum time between advertisement runs is 0 secs

Inbound message logging enabled, 3 messages buffered

Outbound message logging enabled, 3 messages buffered

For Address Family: IPv4 Unicast

BGP neighbor version 2

Update group: 0.3 Filter-group: 0.4 No Refresh request being processed

Route-Reflector Client

AF-dependent capabilities:

Graceful Restart capability advertised

Local restart time is 300, RIB purge time is 400 seconds

Maximum stalepath time is 400 seconds

! Output omitted for brevity

! Command Output on NX-OS

R3# show bgp ipv4 unicast neighbors 192.168.2.2

BGP neighbor is 192.168.2.2, remote AS 100, ibgp link, Peer index 1

BGP version 4, remote router ID 192.168.2.2

BGP state = Established, up for 02:03:32

Using loopback0 as update source for this peer

Last read 00:00:22, hold time = 180, keepalive interval is 60 seconds

Last written 00:00:29, keepalive timer expiry due 00:00:30

Received 172 messages, 1 notifications, 0 bytes in queue

Sent 173 messages, 0 notifications, 0 bytes in queue

Connections established 2, dropped 1

Last reset by peer 02:03:43, due to session cleared

Last reset by us never, due to No error

Neighbor capabilities:

Dynamic capability: advertised (mp, refresh, gr)

Dynamic capability (old): advertised

Route refresh capability (new): advertised received

Route refresh capability (old): advertised received

4-Byte AS capability: advertised received

Address family IPv4 Unicast: advertised received

Graceful Restart capability: advertised received

Graceful Restart Parameters:

Address families advertised to peer:

IPv4 Unicast

Address families received from peer:

IPv4 Unicast

Forwarding state preserved by peer for:

Restart time advertised to peer: 300 seconds

Stale time for routes advertised by peer: 400 seconds

Restart time advertised by peer: 300 seconds

! Output omitted for brevity

Sometimes, not all peers are GR capable and are not required to be GR capable as well. GR can also be configured on a per-neighbor basis and having the GR globally disabled. This helps in exchanging GR capability with only those neighbors for which forwarding should not be impacted or be least impacted. GR is enabled for an individual neighbor using the command neighbor ip-address graceful-restart on both Cisco IOS XR and NX-OS and using the command neighbor ip-address ha-mode graceful-restart on Cisco IOS software. Example 14-3 demonstrates the configuration of GR on a per-neighbor basis.

Example 14-3 Per-Neighbor Graceful-Restart Configuration

! Configuration on Cisco IOS

R1(config)# router bgp 100

R1(config-router)# neighbor 192.168.2.2 ha-mode graceful-restart

! Configuration on IOS XR

RP/0/0/CPU0:R2(config)# router bgp 100

RP/0/0/CPU0:R2(config-bgp)# neighbor 192.168.1.1

RP/0/0/CPU0:R2(config-bgp-nbr)# graceful-restart

! Configuration on NX-OS

R3(config)# router bgp 100

R3(config-router)# neighbor 192.168.2.2

R3(config-router-neighbor)# graceful-restart

The NX-OS software also supports for GR-aware feature configuration; that is, the router does not perform full GR functionality but can have peers that are GR capable and is capable of sending EoR to restarting peers. This feature can also be configured on NX-OS either globally or on a per-neighbor basis. To enable GR aware configuration, use the global BGP command graceful-restart-helper or use the neighbor command neighbor ip-address graceful-restart-helper.

Cisco’s implementation of GR assumes NSF is enabled and tells the peers: “If I ever drop this session, it is because I am failing over from primary RP to secondary RP and will keep forwarding packets.” This makes the peer think that it needs to keep sending the packets. This scenario works as long as there is no reload or reboot on the router. If the router goes down, the neighbor router keeps sending the packets to this router, instead of forwarding the traffic to a working path, assuming the router that restarted is performing a switchover and it has its Forwarding Information Base (FIB) updated. This causes the traffic to black hole and causes an outage.

The problem is not with the feature itself but with the understanding between GR and NSF. GR does not mean that NSF is enabled but only assumes that NSF is enabled on the router. NSF is not configurable but is enabled by default when the router is running in Stateful Switchover (SSO) mode. NSF can also be defined as a function to checkpoint the FIB on the standby router.

The GR Restart Timer, which defaults to 120 seconds, takes care of clearing the stale path entries in case the BGP peer does not comes up within this time period.

Note

Before moving to the next topic, it is important to understand routers’ and switches’ different high-availability operating modes with dual RPs.

![]() Stateful Switchover (SSO): Failover from the active RP (crashing or reloading) to the standby RP (which takes over as the active role) where state is preserved and the router was in hot-standby mode before the switchover.

Stateful Switchover (SSO): Failover from the active RP (crashing or reloading) to the standby RP (which takes over as the active role) where state is preserved and the router was in hot-standby mode before the switchover.

![]() RPR+: RP redundancy mode where standby RP is partially initialized, but there is no synchronization of state.

RPR+: RP redundancy mode where standby RP is partially initialized, but there is no synchronization of state.

It is required to have SSO state for features like NSF, Nonstop Routing (NSR), or GR.

BGP Nonstop Routing

High-availability features like GR are really useful in critical network environments, where traffic loss even for few seconds can cost a lot to the organization, whether it is a service provider network or an enterprise. But GR is not really a feasible solution in all deployments. Think about a service provider network. It is easy to deploy a GR feature everywhere in the service provider core and edge, but the service provider cannot expect to have the customers enable GR or be GR capable. There might be customer environments where the customer premises equipment (CPE) might be running a platform or software that does not support GR or might be running the CPE with just a single RP. In such situations, GR is not feasible for the customers.

An RP switchover should be transparent to the customer, and this was the primary motivation behind NSR. NSR is a feature where routing protocols explicitly checkpoint state from active RP to the standby RP to maintain routing information across a switchover. Thus, NSR sessions are in established state on the standby RP prior to switchover and remain established even after the switchover. The main benefit of using NSR is it is transparent to the remote speaker; that is, the remote does not need to be NSR capable for the feature to work.

There are three phases in NSR operation. Each phase performs certain actions, and based on these phases, it becomes easier to identify any problem with BGP NSR.

![]() Synchronization: During this state, the task of session state mirroring happens between the active and the standby RP. The TCP stack is first synchronized, followed by the application stacks—in this case, BGP.

Synchronization: During this state, the task of session state mirroring happens between the active and the standby RP. The TCP stack is first synchronized, followed by the application stacks—in this case, BGP.

![]() NSR-ready: The active and standby stacks operate independently, but the incoming packets or updates are replicated to both the RPs. The outgoing segments or updates are sent out via the standby RP or active RP depending on the underlying platform. On IOS/IOS XE, the active RP sends the update to the peers, but on IOS XR, the update is sent out via the standby RP. Note that the system uses asynchronous inter-process communication (IPC) between the active and standby RPs to replicate the information. In this state, the active RP sends prefix/best-path information to the standby.

NSR-ready: The active and standby stacks operate independently, but the incoming packets or updates are replicated to both the RPs. The outgoing segments or updates are sent out via the standby RP or active RP depending on the underlying platform. On IOS/IOS XE, the active RP sends the update to the peers, but on IOS XR, the update is sent out via the standby RP. Note that the system uses asynchronous inter-process communication (IPC) between the active and standby RPs to replicate the information. In this state, the active RP sends prefix/best-path information to the standby.

![]() Switchover: When the switchover occurs, TCP activates the sockets based on the application trigger and restores keepalive functionality to maintain the session states. In other words, the new active RP (previously acting standby RP) continues from where the active RP left.

Switchover: When the switchover occurs, TCP activates the sockets based on the application trigger and restores keepalive functionality to maintain the session states. In other words, the new active RP (previously acting standby RP) continues from where the active RP left.

Figure 14-2 depicts the BGP NSR architecture with the various functions occurring between the active and the standby RP on Cisco IOS/IOS XE platform.

The BGP NSR feature is supported on IOS/IOS XE and IOS XR platforms. To enable BGP NSR on Cisco IOS, use the command neighbor ip-address ha-mode sso. On IOS XR, NSR is not supported on a per-neighbor basis and can only be enabled globally for all address families using the command nsr under the router bgp configuration mode. Example 14-4 demonstrates the configurations of BGP NSR on both Cisco IOS and IOS XR platforms. NSR is enabled globally on Cisco IOS by using the command bgp sso route-refresh-enable. This command only allows BGP NSR to be enabled to peers that are Route Refresh capable.

Example 14-4 BGP NSR Configuration

! Configuration on Cisco IOS

R1(config)# router bgp 100

R1(config-router)# bgp sso route-refresh-enable

R1(config-router)# neighbor 192.168.2.2 ha-mode sso

! Configuration on IOS XR

RP/0/0/CPU0:R2(config)# router bgp 100

RP/0/0/CPU0:R2(config-bgp)# nsr

RP/0/0/CPU0:R2(config-bgp)# commit

The BGP NSR related information is found for each peer by using the command show bgp afi safi neighbor ip-address. Example 14-5 displays the output of the command show bgp ipv4 unicast neighbors ip-address to verify the BGP NSR status. On IOS XR, another command to verify if NSR is enabled for the BGP process is the command show bgp process. This command displays the information related to the BGP process, such as Router ID, default timers, NSR information, and other generic information.

Example 14-5 BGP NSR Verification

IOS

R1# show bgp ipv4 unicast neighbors 192.168.2.2

BGP neighbor is 192.168.2.2, remote AS 100, internal link

BGP version 4, remote router ID 192.168.2.2

BGP state = Established, up for 08:13:01

Last read 00:00:00, last write 00:00:11, hold time is 180, keepalive interval is

60 seconds

Neighbor sessions:

1 active, is not multisession capable (disabled)

Neighbor capabilities:

Route refresh: advertised and received(new)

Four-octets ASN Capability: advertised and received

Address family IPv4 Unicast: advertised and received

Enhanced Refresh Capability: advertised

Multisession Capability:

Stateful switchover support enabled: NO for session 1

! Output omitted for brevity

IOS XR

RP/0/0/CPU0:R2# show bgp ipv4 unicast neighbors 192.168.1.1

BGP neighbor is 192.168.1.1

Remote AS 100, local AS 100, internal link

Remote router ID 192.168.1.1

Cluster ID 192.168.2.2

BGP state = Established, up for 08:26:48

NSR State: None

Last read 00:00:37, Last read before reset 00:00:00

Hold time is 180, keepalive interval is 60 seconds

Configured hold time: 180, keepalive: 60, min acceptable hold time: 3

Last write 00:00:40, attempted 19, written 19

Second last write 00:01:40, attempted 19, written 19

Last write before reset 00:00:00, attempted 0, written 0

Second last write before reset 00:00:00, attempted 0, written 0

Last write pulse rcvd May 15 11:52:27.695 last full not set pulse count 1074

Last write pulse rcvd before reset 00:00:00

Socket not armed for io, armed for read, armed for write

Last write thread event before reset 00:00:00, second last 00:00:00

Last KA expiry before reset 00:00:00, second last 00:00:00

Last KA error before reset 00:00:00, KA not sent 00:00:00

Last KA start before reset 00:00:00, second last 00:00:00

Precedence: internet

Non-stop routing is enabled

Multi-protocol capability received

Neighbor capabilities:

Route refresh: advertised (old + new) and received (old + new)

! Output omitted for brevity

RP/0/0/CPU0:R2# show bgp process

BGP Process Information:

BGP is operating in STANDALONE mode

Autonomous System number format: ASPLAIN

Autonomous System: 100

Router ID: 192.168.2.2 (manually configured)

Default Cluster ID: 192.168.2.2

Active Cluster IDs: 192.168.2.2

Fast external fallover enabled

Neighbor logging is enabled

Enforce first AS enabled

Default local preference: 100

Default keepalive: 60

Non-stop routing is enabled

Update delay: 120

Generic scan interval: 60

Address family: IPv4 Unicast

Dampening is not enabled

! Output omitted for brevity

In IOS XR, there are instances when a process crashes because of various reasons. So, if a TCP or BGP process starts on the active RP, the system can force the active RP to failover to standby RP as a recovery action in such situations. But this is not done automatically. To enable this behavior, configure the command nsr process-failures switchover. Note that if a process restarts on the standby RP, only the NSR functionality is lost until the time the process comes up again, but there is not any other service impact.

From the command-line perspective, there isn’t much information that can be viewed on the Cisco IOS or IOS XE platforms, but on IOS XR, a lot of information is available for BGP NSR. The BGP NSR goes through various states. Figure 14-3 examines the finite state machine (FSM) that BGP NSR goes through at different stages.

The following describes the different states of the BGP NSR finite state machine:

![]() None: NSR is disabled (not configured).

None: NSR is disabled (not configured).

![]() Initializing: Basic initialization in progress. This is done after the first time NSR is configured.

Initializing: Basic initialization in progress. This is done after the first time NSR is configured.

![]() Connecting: Attempting to connect to peer (ACTV/STDBY) process.

Connecting: Attempting to connect to peer (ACTV/STDBY) process.

![]() TCP Init-Sync: Synchronization of TCP sessions in progress.

TCP Init-Sync: Synchronization of TCP sessions in progress.

![]() BGP Init-Sync: Synchronization of BGP database in progress.

BGP Init-Sync: Synchronization of BGP database in progress.

![]() NSR-Ready: Ready to perform NSR-enabled switchover.

NSR-Ready: Ready to perform NSR-enabled switchover.

Note that in Example 14-5, the NSR state is None. This is because there is not a standby RP present in the system. In an ideal situation with dual RPs, the NSR state should be NSR-Ready. To view the NSR state on a dual RP system, use the command show redundancy. This command displays the active and the standby RP redundancy states.

Example 14-6 displays the output of the command show redundancy from another node running on dual RPs. Also the command show bgp ipv4 unicast neighbor ip-address command displays the NSR state as NSR-Ready.

Example 14-6 Redundancy Status

RP/0/RSP0/CPU0:R2# show redundancy

Redundancy information for node 0/RSP0/CPU0:

==========================================

Node 0/RSP0/CPU0 is in ACTIVE role

Node Redundancy Partner (0/RSP0/CPU0) is in STANDBY role

Standby node in 0/RSP1/CPU0 is ready

Standby node in 0/RSP1/CPU0 is NSR-ready

Node 0/RSP0/CPU0 is in process group PRIMARY role

Process Redundancy Partner (0/RSP1/CPU0) is in BACKUP role

Backup node in 0/RSP1/CPU0 is ready

Backup node in 0/RSP1/CPU0 is NSR-ready

Group Primary Backup Status

--------- --------- --------- ---------

dsc 0/RSP0/CPU0 0/RSP1/CPU0 Ready

dlrsc 0/RSP0/CPU0 0/RSP1/CPU0 Ready

central-services 0/RSP0/CPU0 0/RSP1/CPU0 Ready

v4-routing 0/RSP0/CPU0 0/RSP1/CPU0 Ready

netmgmt 0/RSP0/CPU0 0/RSP1/CPU0 Ready

mcast-routing 0/RSP0/CPU0 0/RSP1/CPU0 Ready

v6-routing 0/RSP0/CPU0 0/RSP1/CPU0 Ready

Group_10_bgp2 0/RSP0/CPU0 0/RSP1/CPU0 Ready

Group_5_bgp3 0/RSP0/CPU0 0/RSP1/CPU0 Ready

RP/0/RSP0/CPU0:R2# show bgp ipv4 unicast neighbors 192.168.1.1

BGP neighbor is 192.168.1.1

Remote AS 100, local AS 100, internal link

Remote router ID 192.168.1.1

Speaker ID 1

BGP state = Established, up for 1d04h

NSR State: NSR Ready

Last read 00:00:03, Last read before reset 1d04h

! Output omitted for brevity

Use the command show bgp afi safi [prefix | summary] [standby] to view the BGP session state and the BGP table for an AFI/SAFI on the standby RP.

Note

If a manual switchover is required for maintenance purposes, ensure that the redundancy state is Standby hot and also the standby is in NSR-Ready state. This ensures seamless activity without any service impact.

After a switchover, the standby RP goes through all the NSR states as previously mentioned. This information is viewed by using the command show bgp summary nsr or show bgp nsr. These commands display all the various modes that the standby goes through after it moves to a standby ready state along with the timeline. It also shows the state of the BGP neighbor along with the NSR state. To view the NSR states and the neighbor state on the standby RP, use the command show bgp summary nsr standby. Example 14-7 displays the command output of the show bgp summary nsr command.

Example 14-7 show bgp summary nsr Command Output

RP/0/RSP0/CPU0:R2# show bgp summary nsr

BGP router identifier 192.168.2.2, local AS number 100

BGP generic scan interval 60 secs

Non-stop routing is enabled

BGP table state: Active

Table ID: 0xe0000000 RD version: 37

BGP main routing table version 37

BGP NSR Initial initsync version 3 (Reached)

BGP scan interval 60 secs

BGP is operating in STANDALONE mode.

node0_RSP0_CPU0 Speaker

Entered mode Standby Ready : May 15 15:35:05

Entered mode TCP NSR Setup : May 15 15:35:05

Entered mode TCP NSR Setup Done : May 15 15:35:05

Entered mode TCP Initial Sync : May 15 15:35:05

Entered mode TCP Initial Sync Phase Two : May 15 15:35:06

Entered mode TCP Initial Sync Done : May 15 15:35:07

Entered mode FPBSN processing done : May 15 15:35:07

Entered mode Update processing done : May 15 15:35:07

Entered mode BGP Initial Sync : May 15 15:35:07

Entered mode BGP Initial Sync done : May 15 15:35:07

Entered mode NSR Ready : May 15 15:35:07

Current BGP NSR state - NSR Ready achieved at: May 15 15:35:07

NSR State READY notified to Rmf at: May 15 15:35:07

Process RcvTblVer bRIB/RIB LabelVer ImportVer SendTblVer StandbyVer

Speaker 37 37 37 37 37 37

Neighbor Spk AS TblVer SyncVer AckVer NBRState NSRState

192.168.1.1 1 100 37 37 37 Established NSR Ready

RP/0/RSP0/CPU0:R2# show bgp summary nsr standby

Mon May 16 06:44:38.868 UTC

BGP router identifier 192.168.2.2, local AS number 100

BGP generic scan interval 60 secs

Non-stop routing is enabled

BGP table state: Active

Table ID: 0xe0000000 RD version: 37

BGP main routing table version 37

BGP NSR Initial initsync version 1 (Not Reached)

BGP tunnel nexthop version 1

BGP scan interval 60 secs

BGP is operating in STANDALONE mode.

node0_RSP1_CPU0 Speaker

Entered mode None : May 15 15:34:05

Entered mode Standby Ready : May 15 15:35:05

Entered mode TCP Replication : May 15 15:35:05

Entered mode TCP Init Sync Done : May 15 15:35:07

Entered mode NSR Ready : May 15 15:35:07

Process RcvTblVer bRIB/RIB LabelVer ImportVer SendTblVer StandbyVer

Speaker 37 1 37 37 1 0

Neighbor Spk AS TblVer SyncVer AckVer NBRState NSRState

192.168.1.1 1 100 37 0 1 Established NSR Ready

A cumulative view of all the session states, that is, Neighbor State and NSR State, is viewed by using the command show bgp sessions. If there are sessions that are not NSR ready, such sessions are viewed by using the command show bgp sessions [not-nsr-ready]. Example 14-8 displays the BGP sessions that are not NSR ready. The output indicates the NSRState field as None because it was captured when the IOS XR router R2 was running on single RP.

Example 14-8 Not-NSR-Ready BGP Sessions

RP/0/RSP0/CPU0:R2# show bgp sessions not-nsr-ready

Neighbor VRF Spk AS InQ OutQ NBRState NSRState

192.168.1.1 default 0 100 0 0 Established None

Because the TCP state is required to be synchronized between the active RP and the standby RP, it is vital to verify how many sessions an application (in this case BGP) ask TCP to synchronize and how many have actually synchronized. To verify this information, use the command show tcp nsr session-set brief. Examine the output of this command in Example 14-9. The IPv4 AFI has total of one session to sync, and the output shows that it has been synced on the standby.

Example 14-9 TCP NSR Sync Information

RP/0/RSP0/CPU0:R2# show tcp nsr session-set brief

--------------------------------------------------------------

Node: 0/RSP0/CPU0

--------------------------------------------------------------

SSCB Client LocalAPP Set-Id Family State Protect-Node Total/Synced

0x10272978 581993 bgp#1 1 IPv4 Ac YN 0/RSP1/CPU0 1/1

0x1017f338 581993 bgp#1 2 IPv6 Ac YN 0/RSP1/CPU0 0/0

While troubleshooting BGP NSR issues, ensure that the TCP session related to BGP is synched with the standby or is NSR ready. This is verified by using the command show tcp nsr brief. In this command, look for the same protocol control block (PCB) value that is achieved from the command show tcp brief and ensure that the NSR state is Up. Example 14-10 illustrates how to verify if the TCP session is NSR ready.

Example 14-10 Verifying TCP NSR State

RP/0/0/CPU0:R2# show tcp brief

PCB VRF-ID Recv-Q Send-Q Local Address Foreign Address State

0x10161660 0x60000000 0 0 192.168.2.2:646 192.168.10.1:25070 ESTAB

0x101698b0 0x60000000 0 0 192.168.2.2:646 192.168.3.3:23158 ESTAB

0x102311b4 0x60000000 0 0 192.168.2.2:179 192.168.1.1:41318 ESTAB

RP/0/RSP0/CPU0:R2# show tcp nsr brief

Tue May 17 05:18:15.908 UTC

--------------------------------------------------------------

Node: 0/RSP0/CPU0

--------------------------------------------------------------

PCB VRF-ID Local Address Foreign Address NSR

0x102311b4 0x60000000 192.168.2.2:179 192.168.1.1:41318 Up

The command show tcp nsr detail pcb pcb-value displays how much time was taken to perform the initial sync for the TCP connection. Example 14-11 shows the output of the command show tcp nsr detail pcb pcb-value of the previously stated TCP connection.

Example 14-11 TCP NSR Session Detail

RP/0/RSP0/CPU0:R2# show tcp nsr detail pcb 0x102311b4

Tue May 17 05:22:34.573 UTC

--------------------------------------------------------------

Node: 0/RSP0/CPU0

--------------------------------------------------------------

==============================================================

PCB 0x102311b4, VRF Id 0x60000000, Client PID: 56177002

Local host: 192.168.2.2, Local port: 179

Foreign host: 192.168.1.1, Foreign port: 41318

SSCB 0x102316d4, Client PID 56177002

Node Role: Active, Protected by: 0/RSP1/CPU0, Cookie: 0x00000000

NSR State: Up

Replicated to standby: Yes

Synchronized with standby: Yes

FSSN: 1823391429, FSSN Offset: 0

ID of the last or current initial sync: 2077858654

Initial sync done in two phases: yes

Initial sync started at: Sun May 15 15:35:05 2016

Initial sync ended at: Sun May 15 15:35:07 2016

Number of incoming packets currently held: 0

Number of iACKS currently held: 0

If there is a delay noticed between the sync, the TCP packet can be traced within the system to examine what action is being taken for a particular packet along with the packet details, such as sequence number, ack, length, window size, and so on. Use the command show tcp packet-trace pcb-value to trace the TCP packet. Example 14-12 examines the packet for the TCP session established by the BGP session between 192.168.2.2 and 192.168.1.1.

Example 14-12 TCP Packet Trace

RP/0/RSP0/CPU0:R2# show tcp packet-trace 0x102311b4

==============================================================

Packet traces for: PCB 0x102311b4, 192.168.2.2:179 <-> 192.168.1.1:41318,

VRF 0x60000000

May 17 04:56:58.757>S (app write)

snduna 3633157372 sndnxt 3633157372 sndmax 3633157372 sndwnd 32198

rcvnxt 1823434377 rcvadv 1823466820 rcvwnd 32443

May 17 04:56:58.757>s --A-P- SEQ 3633157372 ACK 1823434377 LEN 19 WIN 47998 (pak:

0x0, line: 733)

snduna 3633157372 sndnxt 3633157391 sndmax 3633157391 sndwnd 32198

rcvnxt 1823434377 rcvadv 1823466820 rcvwnd 32443

May 17 04:56:58.960>R --A--- SEQ 1823434377 ACK 3633157391 LEN 0 WIN 32179 (pak:

0xb196c50b, line: 3603)

snduna 3633157372 sndnxt 3633157391 sndmax 3633157391 sndwnd 32198

rcvnxt 1823434377 rcvadv 1823466820 rcvwnd 32443

May 17 04:56:58.960>D --A--- SEQ 1823434377 ACK 3633157391 LEN 0 WIN 32179 (pak:

0xb196c50b, line: 893)

snduna 3633157391 sndnxt 3633157391 sndmax 3633157391 sndwnd 32179

rcvnxt 1823434377 rcvadv 1823466820 rcvwnd 32443

May 17 04:57:47.569>R --A-P- SEQ 1823434377 ACK 3633157391 LEN 19 WIN 32179 (pak:

0xb1971453, line: 3603)

snduna 3633157391 sndnxt 3633157391 sndmax 3633157391 sndwnd 32179

rcvnxt 1823434377 rcvadv 1823466820 rcvwnd 32443

May 17 04:57:47.569>R (app read)

snduna 3633157391 sndnxt 3633157391 sndmax 3633157391 sndwnd 32179

rcvnxt 1823434396 rcvadv 1823466820 rcvwnd 32424

! Output omitted for brevity

If the TCP data related to TCP packet flow, the socket state for session that is already closed, and so on is required for investigating what happened to the TCP session, use the command show tcp dump-file filename. The filename for the peer is found using the command show tcp dump-file list ip-address.

The show bgp trace sync command is also very useful to view the timelines of various state changes. This command is useful if there is a delay in the BGP NSR sync. Example 14-13 displays the output of the command show bgp trace sync [reverse]. The reverse keyword is used to view the output in reversed form so that you don’t have to scroll down to the end to view the latest logs. The unfiltered command gives more details on what is happening during the sync process, but filtering the output for just NSR state can help identify where the actual delay occurred, and further logs can be reviewed around the same timeline.

RP/0/RSP0/CPU0:R2# show bgp trace sync reverse | inc "NSR state"

15:35:07.737 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'Stdby NSR ack', state 'BGP Initial Sync done'

-> 'NSR Ready'

15:35:07.734 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'BGP Initial sync done', state 'BGP Initial Sync'

-> 'BGP Initial Sync done'

15:35:07.733 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'Standby ready for BGP sync message', state

'Update processing done' -> 'BGP Initial Sync'

15:35:07.732 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active NSR state

trans, event 'Update Processing Done', state 'FPBSN processing done' -> 'Update

processing done'

15:35:07.732 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'FPBSN Processing done', state 'TCP Initial Sync Done'

-> 'FPBSN processing done'

15:35:07.732 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'TCP initial sync done', state 'TCP Initial Sync' ->

'TCP Initial Sync Done'

15:35:05.725 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'End of Convergence', state 'TCP NSR Setup Done' ->

'TCP Initial Sync'

15:35:05.725 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'TCP NSR setup done', state 'TCP NSR Setup' ->

'TCP NSR Setup Done'

15:35:05.724 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'End of read-only', state 'Standby Ready' ->

'TCP NSR Setup'

15:35:05.724 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t16 [SYNC]:4831: Active

NSR state trans, event 'Standby ready message', state 'None' ->

'Standby Ready'

15:34:04.258 default-bgp/spkr-tr2-sync 0/RSP0/CPU0 t8 [SYNC]:8274: Trigger

to Init rmf with bgp NSR state 0 Client type 0

! Output omitted for brevity

There are a few debug commands that can be used for debugging BGP NSR sync issues:

![]() debug bgp sync: General interaction between active and standby

debug bgp sync: General interaction between active and standby

![]() debug bgp commlib: Details of message encoding or decoding happening between active and standby or speaker and BGP routing information base (RIB)

debug bgp commlib: Details of message encoding or decoding happening between active and standby or speaker and BGP routing information base (RIB)

![]() debug tcp nsr: TCP NSR related debug

debug tcp nsr: TCP NSR related debug

A collective set of commands and traces are found in show tech-support bgp and show tech-support tcp nsr. These commands are useful while investigating an outage event and are really helpful for root cause analysis.

Bidirectional Forwarding Detection

Bidirectional forwarding detection (BFD) is a simple, fixed-length hello protocol that is used for faster detection of failures. BFD provides a low-overhead, short-duration mechanism for detection of failures in the path between adjacent forwarding engines. Defined in RFC 5880 through RFC 5884, BFD supports adaptive detection times and a three-way handshake that ensures both systems are aware of any changes. BFD control packets contains the desired transmit (tx) and receive (rx) intervals by the sender. For example, if a node cannot handle a high rate of BFD packets, you can specify a large desired rx interval. This way its neighbor(s) cannot send packets at a smaller interval. The following features of BFD make it a most desirable protocol for failure detection:

![]() Subsecond failure detection

Subsecond failure detection

![]() Media independent (Ethernet, Packet over Sonet (POS), Serial, and so on).

Media independent (Ethernet, Packet over Sonet (POS), Serial, and so on).

![]() Runs over User Datagram Protocol (UDP), data protocol independent (IPv4, IPv6, LSP).

Runs over User Datagram Protocol (UDP), data protocol independent (IPv4, IPv6, LSP).

![]() Application independent: Interior Gateway Protocol (IGP), Tunnel liveliness, Fast Re-route (FRR) trigger, and so on

Application independent: Interior Gateway Protocol (IGP), Tunnel liveliness, Fast Re-route (FRR) trigger, and so on

When an application (BGP, OSPF, and the like) creates or modifies a BFD session, it provides the following information:

![]() Interface handle (single-hop session)

Interface handle (single-hop session)

![]() Address of the neighbor

Address of the neighbor

![]() Local address

Local address

![]() Desired interval

Desired interval

![]() Multiplier

Multiplier

The product of the desired interval and multiplier indicates the desired failure detection interval. The operational workflow of BFD for BGP or any other application is as follows:

![]() User configured BFD for a BGP neighbor (usually internal BGP (IBGP) / external BGP (EBGP) on physical interface).

User configured BFD for a BGP neighbor (usually internal BGP (IBGP) / external BGP (EBGP) on physical interface).

![]() BGP initiates creation of BFD session.

BGP initiates creation of BFD session.

![]() After the BFD session is created, timers are negotiated.

After the BFD session is created, timers are negotiated.

![]() BFD sends periodic control packets to its peer.

BFD sends periodic control packets to its peer.

![]() If a link failure occurs, BFD detects the failure in the desired failure detection interval (desired interval * multiplier) and informs the peer of the failure as well as informing the local BFD client (for example, BGP).

If a link failure occurs, BFD detects the failure in the desired failure detection interval (desired interval * multiplier) and informs the peer of the failure as well as informing the local BFD client (for example, BGP).

![]() The BGP session goes down immediately rather than waiting for the hold timer to expire.

The BGP session goes down immediately rather than waiting for the hold timer to expire.

BFD runs on two modes:

![]() Asynchronous mode

Asynchronous mode

![]() Demand mode

Demand mode

Note

Demand mode is not supported on Cisco platforms. In demand mode, no control packets are exchanged after the session is established. In this mode, BFD assumes that there is another way to verify connectivity between the two endpoints. Either host may still send control packets if needed, but they are not generally exchanged.

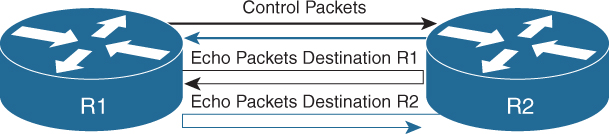

Asynchronous Mode

Asynchronous mode is the primary mode of operation and is mandatory for BFD to function. In this mode, each system periodically sends BFD control packets to one another. For example, packets send by router R1 have a source address of R1 and a destination address of router R2, as shown in Figure 14-4.

Each stream of BFD control packets is independent and does not follow a request response cycle. If a number of packets in a row are not received by the other system, then the session is declared down. An adaptive failure detection time is used to prevent false failures if a neighbor is sending packets slower than what it is advertising.

BFD Async packets are sent on UDP port 3784. The BFD source port must be in the range of 49152 through 65535. The BFD control packets contain the following fields:

![]() Version: Version of BFD control header. XR runs version 1 as default, but legacy sessions can run version 0 as well.

Version: Version of BFD control header. XR runs version 1 as default, but legacy sessions can run version 0 as well.

![]() Diag: A diagnostic code specifying the local system’s reason for the last change in session state, detection time expired, echo failed, and so on.

Diag: A diagnostic code specifying the local system’s reason for the last change in session state, detection time expired, echo failed, and so on.

![]() State: The current BFD session state as seen by the transmitting system.

State: The current BFD session state as seen by the transmitting system.

![]() P: Poll bit, if set, the transmitting system is requesting verification of connectivity, or of a parameter change, and is expecting a packet with the Final (F) bit in reply.

P: Poll bit, if set, the transmitting system is requesting verification of connectivity, or of a parameter change, and is expecting a packet with the Final (F) bit in reply.

![]() F: Final bit, if set, the transmitting system is responding to a received BFD Control packet that had the Poll (P) bit set.

F: Final bit, if set, the transmitting system is responding to a received BFD Control packet that had the Poll (P) bit set.

![]() Detect Multiplier: Detection time multiplier. The negotiated transmit interval, multiplied by this value, provides the detection time for the transmitting system in Asynchronous mode.

Detect Multiplier: Detection time multiplier. The negotiated transmit interval, multiplied by this value, provides the detection time for the transmitting system in Asynchronous mode.

![]() My Discriminator: A unique, nonzero discriminator value generated by the transmitting system, used to de-multiplex multiple BFD sessions between the same pair of systems.

My Discriminator: A unique, nonzero discriminator value generated by the transmitting system, used to de-multiplex multiple BFD sessions between the same pair of systems.

![]() Your Discriminator: The discriminator received from the corresponding remote system. This field reflects back the received value of My Discriminator, or is zero if that value is unknown.

Your Discriminator: The discriminator received from the corresponding remote system. This field reflects back the received value of My Discriminator, or is zero if that value is unknown.

![]() Desired Min TX Interval: This is the minimum interval, in microseconds, that the local system would like to use when transmitting BFD Control packets.

Desired Min TX Interval: This is the minimum interval, in microseconds, that the local system would like to use when transmitting BFD Control packets.

![]() Desired Min RX Interval: This is the minimum interval, in microseconds, between received BFD control packets that this system is capable of supporting.

Desired Min RX Interval: This is the minimum interval, in microseconds, between received BFD control packets that this system is capable of supporting.

![]() Required Min Echo RX Interval: This is the minimum interval, in microseconds, between received BFD Echo packets that this system is capable of supporting.

Required Min Echo RX Interval: This is the minimum interval, in microseconds, between received BFD Echo packets that this system is capable of supporting.

The BFD control packets as defined by IETF is shown in Figure 14-5.

BFD authentication is not supported on all platforms. BFD single-hop authentication is supported on IOS XE and NX-OS platforms.

Asynchronous Mode with Echo Function

Asynchronous mode with echo function is designed to test only the forwarding path and not the host stack on the remote system. It is enabled only after the session is enabled. BFD echo packets are sent in such a way that the other end just loops them back through its forwarding path. For example, a packet sent by router R1 could be sent with both the source and destination address belonging to R1 as shown in Figure 14-6.

Because echo packets do not require application or host stack processing on the remote end, it can be used for aggressive detection timers. Another benefit of using the echo function is that the sender has complete control of the response time. In order for the echo function to work, the remote node should also be capable of echo function. The BFD control packets with echo function enabled are sent as UDP packets with source and destination port 3785. Also, the interfaces running BFD with the echo function should be configured with the command no ip redirects.

Configuration and Verification

BFD is usually configured on a per-interface basis for the routing protocols that support BFD. BFD is enabled using the configuration bfd interval interval min_rx min_rx_interval multiplier multiplier. The variable interval is the transmit interval between BFD packets, whereas min_rx_interval is the minimum receive interval capability.

BFD can be enabled for BGP peer on Cisco IOS using the command neighbor ip-address fall-over bfd. On IOS XR, the command bfd fast-detect is part of the neighbor configuration. BFD for BGP can be enabled on NX-OS using the command bfd under the neighbor configuration. To be able to configure BFD, the feature bfd command should be configured to enable the BFD feature. To understand the BFD feature for BGP, examine the topology as shown in Figure 14-7. Router R1 has an EBGP peering with IOS XR router R2 and NX-OS router R3.

Example 14-14 demonstrates the configuration of BFD for BGP on all three Cisco operating systems. The asynchronous mode configuration is shown without the echo function, which was disabled manually. Some of the platforms have echo function enabled by default and thus require manual configuration to disable the echo function.

Example 14-14 BFD for BGP Configuration

R1

interface GigabitEthernet2/0/3

ip address 10.1.13.1 255.255.255.0

ip ospf 100 area 0

no ip redirects

bfd interval 300 min_rx 300 multiplier 3

no bfd echo

!

interface TenGigabitEthernet2/1/0

ip address 10.1.12.1 255.255.255.0

ip ospf 100 area 0

no ip redirects

bfd interval 300 min_rx 300 multiplier 3

no bfd echo

!

router bgp 100

bgp router-id 192.168.1.1

bgp log-neighbor-changes

no bgp default ipv4-unicast

neighbor 10.1.12.2 remote-as 200

neighbor 10.1.12.2 fall-over bfd

neighbor 10.1.13.3 remote-as 300

neighbor 10.1.13.3 fall-over bfd

!

address-family ipv4

neighbor 10.1.12.2 activate

neighbor 10.1.13.3 activate

exit-address-family

R2

interface TenGigE0/0/2/0

ipv4 address 10.1.12.2 255.255.255.0

!

bfd

interface TenGigE0/0/2/0

!

echo disable

!

router bgp 200

bgp router-id 192.168.2.2

address-family ipv4 unicast

!

neighbor 10.1.12.1

remote-as 100

bfd fast-detect

bfd multiplier 3

bfd minimum-interval 300

address-family ipv4 unicast

R3

feature bfd

feature bgp

!

interface Ethernet3/2

mpls ip

bfd interval 300 min_rx 300 multiplier 3

no bfd echo

no ip redirects

ip address 10.1.13.3/24

ip router ospf 100 area 0.0.0.0

no shutdown

!

router bgp 300

router-id 192.168.3.3

address-family ipv4 unicast

neighbor 10.1.13.2

bfd

remote-as 100

address-family ipv4 unicast

After the BGP session is up, the BFD session is also established. The BFD session is viewed using the command show bfd neighbors [details] on both Cisco IOS and NX-OS platforms. On IOS XR, use the command show bfd session [detail]. The detail command option displays more information on which client applications are using BFD and other details on the packets sent and received, and so on.

Example 14-15 examines the output of the command show bfd neighbors [detail] and show bfd session [detail]. In the output, notice that the BFD client is BGP. The BFD on all three platforms runs on version 1 by default. The BFD command output with detail keyword displays all the fields that are part of the BFD control packet. These fields can be very useful for debugging purposes and to understand whether there is a mismatch between the peers that could possibly cause BFD session to flap. Ensure that the State bit is set to Up rather than AdminDown. The output also shows that the echo function has been disabled, and the echo function interval value is 0.

Example 14-15 Verifying BFD Session

IOS

R1# show bfd neighbors

IPv4 Sessions

NeighAddr LD/RD RH/RS State Int

10.1.12.2 4097/2148073473 Up Up Te2/1/0

10.1.13.3 4098/1090519041 Up Up Gi2/0/3

R1# show bfd neighbors details

IPv4 Sessions

NeighAddr LD/RD RH/RS State Int

10.1.12.2 4097/2148073473 Up Up Te2/1/0

Session state is UP and not using echo function.

Session Host: Hardware

OurAddr: 10.1.12.1

Handle: 1

Local Diag: 0, Demand mode: 0, Poll bit: 0

MinTxInt: 300000, MinRxInt: 300000, Multiplier: 3

Received MinRxInt: 300000, Received Multiplier: 3

Holddown (hits): 677(0), Hello (hits): 300(62318)

Rx Count: 59338, Rx Interval (ms) min/max/avg: 5/312/277 last: 223 ms ago

Tx Count: 62317, Tx Interval (ms) min/max/avg: 5/304/264 last: 30 ms ago

Elapsed time watermarks: 0 0 (last: 0)

Registered protocols: BGP CEF

Uptime: 04:33:56

Last packet: Version: 1 - Diagnostic: 0

State bit: Up - Demand bit: 0

Poll bit: 0 - Final bit: 0

C bit: 1

Multiplier: 3 - Length: 24

My Discr.: 2148073473 - Your Discr.: 4097

Min tx interval: 300000 - Min rx interval: 300000

Min Echo interval: 0

IPv4 Sessions

NeighAddr LD/RD RH/RS State Int

10.1.13.3 4098/1090519041 Up Up Gi2/0/3

Session state is UP and not using echo function.

Session Host: Hardware

OurAddr: 10.1.13.1

Handle: 2

Local Diag: 0, Demand mode: 0, Poll bit: 0

MinTxInt: 300000, MinRxInt: 300000, Multiplier: 3

Received MinRxInt: 300000, Received Multiplier: 3

Holddown (hits): 891(0), Hello (hits): 300(3452)

Rx Count: 3029, Rx Interval (ms) min/max/avg: 296/304/300 last: 9 ms ago

Tx Count: 3451, Tx Interval (ms) min/max/avg: 1/302/264 last: 192 ms ago

Elapsed time watermarks: 0 0 (last: 0)

Registered protocols: BGP CEF

Uptime: 00:15:11

Last packet: Version: 1 - Diagnostic: 0

State bit: Up - Demand bit: 0

Poll bit: 0 - Final bit: 0

C bit: 0

Multiplier: 3 - Length: 24

My Discr.: 1090519041 - Your Discr.: 4098

Min tx interval: 300000 - Min rx interval: 300000

Min Echo interval: 50000

IOS XR

RP/0/RSP0/CPU0:R2# show bfd session

Interface Dest Addr Local det time(int*mult) State

Echo Async H/W NPU

------------------- --------------- ---------------- ---------------- ----------

Te0/0/2/0 10.1.12.1 0s(0s*0) 900ms(300ms*3) UP

No n/a

RP/0/RSP0/CPU0:R2# show bfd session detail

I/f: TenGigE0/0/2/0, Location: 0/0/CPU0

Dest: 10.1.12.1

Src: 10.1.12.2

State: UP for 0d:4h:41m:27s, number of times UP: 1

Session type: PR/V4/SH

Received parameters:

Version: 1, desired tx interval: 300 ms, required rx interval: 300 ms

Required echo rx interval: 300 ms, multiplier: 3, diag: None

My discr: 4097, your discr: 2148073473, state UP, D/F/P/C/A: 0/0/0/0/0

Transmitted parameters:

Version: 1, desired tx interval: 300 ms, required rx interval: 300 ms

Required echo rx interval: 0 ms, multiplier: 3, diag: None

My discr: 2148073473, your discr: 4097, state UP, D/F/P/C/A: 0/0/0/1/0

Timer Values:

Local negotiated async tx interval: 300 ms

Remote negotiated async tx interval: 300 ms

Desired echo tx interval: 0 s, local negotiated echo tx interval: 0 ms

Echo detection time: 0 ms(0 ms*3), async detection time: 900 ms(300 ms*3)

Local Stats:

Intervals between async packets:

Tx: Number of intervals=100, min=1 ms, max=302 ms, avg=139 ms

Last packet transmitted 103 ms ago

Rx: Number of intervals=100, min=225 ms, max=300 ms, avg=264 ms

Last packet received 61 ms ago

Intervals between echo packets:

Tx: Number of intervals=0, min=0 s, max=0 s, avg=0 s

Last packet transmitted 0 s ago

Rx: Number of intervals=0, min=0 s, max=0 s, avg=0 s

Last packet received 0 s ago

Latency of echo packets (time between tx and rx):

Number of packets: 0, min=0 ms, max=0 ms, avg=0 ms

Session owner information:

Desired Adjusted

Client Interval Multiplier Interval Multiplier

-------------------- --------------------- ---------------------

bgp-default 300 ms 3 300 ms 3

NX-OS

R3# show bfd neighbors

OurAddr NeighAddr LD/RD RH/RS Holdown(mult) State Int Vrf

10.1.13.3 10.1.13.1 1090519041/4098 Up 689(3) Up Eth3/2 default

R3# show bfd neighbors details

OurAddr NeighAddr LD/RD RH/RS Holdown(mult) State Int Vrf

10.1.13.3 10.1.13.1 1090519041/4098 Up 689(3) Up Eth3/2 default

Session state is Up and not using echo function

Local Diag: 0, Demand mode: 0, Poll bit: 0, Authentication: None

MinTxInt: 300000 us, MinRxInt: 300000 us, Multiplier: 3

Received MinRxInt: 300000 us, Received Multiplier: 3

Holdown (hits): 900 ms (0), Hello (hits): 300 ms (12449)

Rx Count: 14153, Rx Interval (ms) min/max/avg: 0/21232/265 last: 200 ms ago

Tx Count: 12449, Tx Interval (ms) min/max/avg: 296/296/296 last: 234 ms ago

Registered protocols: bgp

Uptime: 0 days 1 hrs 2 mins 12 secs

Last packet: Version: 1 - Diagnostic: 0

State bit: Up - Demand bit: 0

Poll bit: 0 - Final bit: 0

Multiplier: 3 - Length: 24

My Discr.: 4098 - Your Discr.: 1090519041

Min tx interval: 300000 - Min rx interval: 300000

Min Echo interval: 0 - Authentication bit: 0

Hosting LC: 3, Down reason: None, Reason not-hosted: None, Offloaded: No

Note

Although BFD can be enabled for IBGP sessions as well, it is better to have BFD implemented for IGP than for IBGP sessions. This is because the IBGP is typically established using routes learned from the IGP and is not typically configured between the directly connected neighbors.

An important thing to notice in R1’s BFD neighbors’ detail output is that the session host is Hardware. When the echo function is disabled, BFD is offloaded to hardware. Because BFD is a forwarding path failure detection protocol, it requires sending the BFD echo packets as low as 50 ms in order to reduce overall network convergence time. With multiple BFD sessions, it is hard to process such aggressive timers by the software. Thus, the BFD session gets offloaded to hardware and manages aggressive timers as low as 50ms. It is important to note that the echo function should be disabled in order to offload BFD into hardware. On Cisco IOS and IOS XE platforms, hardware offloaded BFD sessions are verified by using the show bfd neighbors details command or by using the show bfd neighbors hardware details command. If the echo function is enabled, BFD is not hardware offloaded and is processed by CPU (software).

On IOS XR, when the BFD hardware offload is enabled, the async control packets are not generated and received by the line card (LC) CPU but by the Network Processor (NP) on the line card, thus increasing the BFD scale. To enable hardware offload for BFD on ASR9k, use the command hw-module bfd-hw-offload enable location rack/slot/cpu from the admin config mode. After the command is configured, the line card previously mentioned is required to be reloaded before BFD hardware offload is enabled.

Note

BFD is offloaded onto LC by default on NX-OS platforms.

The BFD echo function is enabled by default on most of the Cisco platforms. To enable the echo function (if its disabled), use the command bfd echo on both Cisco IOS and NX-OS software under the interface configuration mode and use the command no echo disable to enable the echo mode globally or under the interface on IOS XR. When the session is configured with the echo function, the BFD session starts off in asynchronous mode using a slow interval of 2 seconds. After the session is up, and if the interval specified by the client is less than 2 seconds, the echo function gets activated (assuming the echo function is enabled on the remote peer as well).

Example 14-16 shows the command output for BFD neighbors when the echo function is enabled. In the output on router R1, the minimum echo interval shows the value of 1 ms. This is because this value is hard-coded to 1 ms, and if the echo function is supported on both ends, the actual echo tx interval for a session is maximum of the following:

1. Local desired echo tx interval.

2. Remote required minimum echo rx interval. (This value is obtained from incoming control packets.)

Example 14-16 BFD Neighbors with Echo Function

IOS

R1# show bfd neighbors details

IPv4 Sessions

NeighAddr LD/RD RH/RS State Int

10.1.12.2 4098/2148073473 Up Up Te2/1/0

Session state is UP and using echo function with 300 ms interval.

Session Host: Software

OurAddr: 10.1.12.1

Handle: 2

Local Diag: 0, Demand mode: 0, Poll bit: 0

MinTxInt: 1000000, MinRxInt: 1000000, Multiplier: 3

Received MinRxInt: 2000000, Received Multiplier: 3

Holddown (hits): 0(0), Hello (hits): 2000(4)

Rx Count: 7, Rx Interval (ms) min/max/avg: 5/1951/1293 last: 798 ms ago

Tx Count: 5, Tx Interval (ms) min/max/avg: 1525/1941/1733 last: 1625 ms ago

Elapsed time watermarks: 0 0 (last: 0)

Registered protocols: BGP CEF

Uptime: 00:00:08

Last packet: Version: 1 - Diagnostic: 0

State bit: Up - Demand bit: 0

Poll bit: 0 - Final bit: 0

C bit: 1

Multiplier: 3 - Length: 24

My Discr.: 2148073473 - Your Discr.: 4098

Min tx interval: 2000000 - Min rx interval: 2000000

Min Echo interval: 1000

! Output omitted for brevity

IOS XR

RP/0/RSP0/CPU0:R2# show bfd session detail

I/f: TenGigE0/0/2/0, Location: 0/0/CPU0

Dest: 10.1.12.1

Src: 10.1.12.2

State: UP for 0d:0h:2m:12s, number of times UP: 6

Session type: PR/V4/SH

Received parameters:

Version: 1, desired tx interval: 1 s, required rx interval: 1 s

Required echo rx interval: 300 ms, multiplier: 3, diag: None

My discr: 4098, your discr: 2148073473, state UP, D/F/P/C/A: 0/0/0/0/0

Transmitted parameters:

Version: 1, desired tx interval: 2 s, required rx interval: 2 s

Required echo rx interval: 1 ms, multiplier: 3, diag: None

My discr: 2148073473, your discr: 4098, state UP, D/F/P/C/A: 0/0/0/1/0

Timer Values:

Local negotiated async tx interval: 2 s

Remote negotiated async tx interval: 2 s

Desired echo tx interval: 300 ms, local negotiated echo tx interval: 300 ms

Echo detection time: 900 ms(300 ms*3), async detection time: 6 s(2 s*3)

! Output omitted for brevity

NX-OS

R3# show bfd neighbors details

OurAddr NeighAddr LD/RD RH/RS Holdown(mult) State Int Vrf

10.1.13.3 10.1.13.1 1090519041/4098 Up 689(3) Up Eth3/2 default

Session state is Up and using echo function with 300 ms interval

Local Diag: 0, Demand mode: 0, Poll bit: 0, Authentication: None

MinTxInt: 300000 us, MinRxInt: 2000000 us, Multiplier: 3

Received MinRxInt: 1000000 us, Received Multiplier: 3

Holdown (hits): 6000 ms (5), Hello (hits): 1000 ms (332494)

Rx Count: 320684, Rx Interval (ms) min/max/avg: 0/10634/269 last: 1370 ms ago

Tx Count: 332494, Tx Interval (ms) min/max/avg: 756/756/756 last: 190 ms ago

Registered protocols: bgp

Uptime: 0 days 0 hrs 1 mins 52 secs

Last packet: Version: 1 - Diagnostic: 0

State bit: Up - Demand bit: 0

Poll bit: 0 - Final bit: 0

Multiplier: 3 - Length: 24

My Discr.: 4097 - Your Discr.: 1090519042

Min tx interval: 1000000 - Min rx interval: 1000000

Min Echo interval: 300000 - Authentication bit: 0

Hosting LC: 3, Down reason: None, Reason not-hosted: None, Offloaded: No

IOS XR has support for viewing the packet counters in a detailed manner at the line card level using the command show bfd counters packet private detail location rack/slot/cpu. Example 14-17 displays the counters for BFD control packets on IOS XR.

Example 14-17 BFD Packet Counters

IOS XR

RP/0/RSP0/CPU0:R2# show bfd counters packet private detail location 0/0/CPU0

TenGigE0/0/2/0 Recv Rx Invalid Xmit Delta

Async: 406384 0 387357

Echo: 15030 0 15030 0

Troubleshooting BFD Issues

Issues with BFD can cause convergence issues; thus, this section discusses some of the most common issues seen with BFD.

BFD Session Not Coming Up

Perform the following steps to verify why the BFD session is not coming up:

Step 1. Verify the application that created the BFD. If the application is BGP, ensure that BFD is properly configured with the same interval and multiplier value on both sides.

Step 2. Verify there is reachability to the remote with which the BFD session is being established. Ensure there is proper adjacency and reachability between the two peering devices.

Step 3. If the reachability is there, but the BFD session is not coming up, verify the received and sent counters on each side of the BFD neighbors and continue with the following:

![]() Ensure there is no ACL that is blocking the BFD packets, that is, UDP ports 3784 and 3785.

Ensure there is no ACL that is blocking the BFD packets, that is, UDP ports 3784 and 3785.

![]() Verify if the line card supports the aggressive timers (if configured) and also that the line card and the RP are not hitting any resource limitation. For this, refer to the hardware data sheet on Cisco.com.

Verify if the line card supports the aggressive timers (if configured) and also that the line card and the RP are not hitting any resource limitation. For this, refer to the hardware data sheet on Cisco.com.

![]() On IOS XR, check which NP corresponds to which interface and if the NP is receiving BFD packets or not. This can be done using the following commands:

On IOS XR, check which NP corresponds to which interface and if the NP is receiving BFD packets or not. This can be done using the following commands:

show controllers np ports all location rack/slot/cpu

show controllers np counters np location rack/slot/cpu | include

“Rate|BFD”

show uidb data location rack/slot/cpu interface ingress

show uidb location rack/slot/cpu interface ing-extension

![]() On NX-OS, verify the event-history for any events or errors.

On NX-OS, verify the event-history for any events or errors.

show system internal bfd event-history [all | error | session]

![]() On IOS XR, verify the BFD traces for any errors or events.

On IOS XR, verify the BFD traces for any errors or events.

show bfd trace [event | error]

![]() Verify if there is any CoPP policy dropping BFD packets. Ensure that BFD packets are treated in a separate class-map under the CoPP policy.

Verify if there is any CoPP policy dropping BFD packets. Ensure that BFD packets are treated in a separate class-map under the CoPP policy.

![]() On IOS XR, verify that the BFD packets are not exceeding the LPTS limit for BFD control packets.

On IOS XR, verify that the BFD packets are not exceeding the LPTS limit for BFD control packets.

BFD Session Flapping

Perform the following steps to troubleshoot BFD issues if the BFD session is flapping:

Step 1. Ensure that the link is not getting congested or oversubscribed.

Step 2. Ensure that BFD is part of the priority queue in QoS configs, and proper resource allocation is given to the BFD class.

Step 3. Ensure that the BFD adjacency is stable. This is usually seen in scenarios after RP switchovers.

Step 4. On IOS XR, ensure that the bfd_agent process is not respawning.

Step 5. Ensure the BFD packets are hardware switched on NX-OS—not software switched and thus getting delayed or dropped. This can be due to hardware misprogramming as well. Also, ensure no ip redirects command is configured under the interfaces.

Step 6. Ensure there is no control plane congestion and there is no configuration that remarks BFD packets from the default IP precedence value of 6, because this will affect the Rx handling of control packets. Verify the queueing policies on the egress to ensure that BFD is not delayed or dropped.

For BFD-related issues, the following outputs can be collected during the problematic state:

![]() On IOS XR

On IOS XR

![]() show tech routing bfd

show tech routing bfd

![]() On NX-OS

On NX-OS

![]() show tech bfd

show tech bfd

BGP Fast-External-Fallover

Historically, when the fast-external-fallover feature was not available and a link went down, the EBGP session remained up until the hold-down timer expired. This situation used to cause a traffic black hole situation and service impact. To overcome this problem, bgp fast-external-fallover command was introduced. With this command configured, the EBGP session terminates immediately if the link goes down. This command is enabled by default on recent IOS releases, and IOS XR and NX-OS releases.

This feature is enabled by default for EBGP sessions but disabled for IBGP sessions. The feature can also be enabled at the interface level using the command ip bgp fast-external-fallover on Cisco IOS software.

Although the command bgp fast-external-fallover improves on convergence time, it is good to disable the command if the EBGP link is flapping continuously. By disabling fast-fallover, the instability caused by neighbors continually transitioning between idle and established states and the routing churn caused by the flood of ADVERTISE and WITHDRAW messages can be avoided. Use the no bgp fast-external-fallover command to disable this feature on both Cisco IOS and NX-OS, and use the command bgp fast-external-fallover disable command to disable this feature on IOS XR.

BGP Add-Path

In BGP, only one best path is advertised by a BGP router or a BGP RR. The BGP speaker accepts only one path for a given prefix from a given peer. If a BGP speaker receives multiple paths for the same prefix, then because of BGP’s implicit withdraw semantics, the latest announcement of the prefix replaces the previous announcements. Even when multipath is configured, BGP RR does not advertise multiple paths but only the best path. This prevents the efficient use of the BGP multipath feature. Also, because of this behavior there could be other side effects, such as Multi-Exit Discriminator (MED) oscillations, suboptimal hot potato routing, and the like.

To understand the default behavior of BGP with multiple paths, examine the topology shown in Figure 14-8. It will be used for all future examples. RR1 is an RR running Cisco IOS, RR2 is running IOS XR, and RR3 is running NX-OS. All the other routers are running Cisco IOS software.

In Figure 14-8, the prefix 172.16.4.4/32 is being advertised by CE2, which is in AS-300. The prefix is learned in AS-100 via two paths: one via PE2 and the other via PE3. Although there are two paths for the prefix, only the best path is advertised to the RR. Even if the RR has multiple paths, it hides all but the best path. Thus the ingress routers most often know about one exit point. When that path fails, traffic loss is proportional to control-plane convergence.

The solution to such issues is having a diverse path available to the ingress router, so that the convergence time is not high. Some of the BGP diverse path features were discussed in Chapter 6, “Troubleshooting Platform Issues Due to BGP.” One of the other features to achieve the diverse path is the BGP add-path feature. The BGP add-path feature signals not only the primary and backup path but the diverse paths ranging from 2 to n or all paths available for the prefix. To implement BGP add-path feature, both the RRs and the edge BGP router should have add-path feature support.

The BGP add-path features provides a lot of benefits to the network as a whole. A few of the benefits are as follows:

![]() Fast Convergence: Because the ingress routers now have visibility to more paths, they can switch to backup paths faster after the primary path fails.

Fast Convergence: Because the ingress routers now have visibility to more paths, they can switch to backup paths faster after the primary path fails.

![]() Load Balancing: Because there is more visibility for the paths to the ingress routers, they can do equal cost multipath (ECMP) on multiple paths to achieve load balancing. This requires either the advertisement of backup paths or all paths to be advertised.

Load Balancing: Because there is more visibility for the paths to the ingress routers, they can do equal cost multipath (ECMP) on multiple paths to achieve load balancing. This requires either the advertisement of backup paths or all paths to be advertised.

![]() Churn Reduction: Withdraws can be suppressed because of available alternate paths.

Churn Reduction: Withdraws can be suppressed because of available alternate paths.

![]() Route Oscillation Prevention: Route oscillation scenarios are covered in RFC 3345. The scenarios presented in the RFC can be overcome by advertising group best paths (in some cases all paths).

Route Oscillation Prevention: Route oscillation scenarios are covered in RFC 3345. The scenarios presented in the RFC can be overcome by advertising group best paths (in some cases all paths).

The BGP add-path feature is defined in RFC 7911. The RFC proposes an extension to the Network Layer Reachability Information (NLRI) by including path-ID, so that multiple paths for the same prefix can be advertised. Path-IDs are unique to a peering session and are generated for each network. The encodings specified in RFC 4271 and RFC 4760 are extended, as shown in Figure 14-9.

For carrying labeled prefixes, the encoding specified in RFC 3107 is modified for the add-path feature, as shown in Figure 14-10.

The add-path feature is negotiated as a capability on a per AFI/SAFI basis and done separately for both Send and Receive direction. The per AFI and per neighbor configuration triggers capability exchange with the peers. For exchanging add-path capability between two routers—for instance, router A and router B, both A and B should configure the add-path capability to send, receive, or both.

For router A to send add-paths to router B, router A should enable send capability and router B should enable receive capability. Similarly, for router A to receive add-paths from router B, router A should be configured with receive capability and router B with the send capability. Any configuration changes will take effect only during the next session establishment.

The add-path capability is configured in two ways. It can either be configured globally under the address-family or on a per-neighbor basis. To enable the BGP add-path capability, use the command bgp additional-paths [send | receive] under the address-family. Cisco IOS routers reset the session as soon as the command is configured, but it is manually required on IOS XR and NX-OS to clear the BGP session to exchange add-path capability.