Appendix B

Linear Algebra Review

B.1 Euclidean Spaces

B.1.1 Inner Product and the Norm

A Euclidean space E is a finite-dimensional vector space for which an inner product ![]() is defined. The inner product, sometimes called dot product and denoted x · y, must satisfy the following three axioms:

is defined. The inner product, sometimes called dot product and denoted x · y, must satisfy the following three axioms:

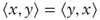

- Symmetry:

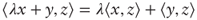

- Bilinearity:

for any λ ∈

for any λ ∈

- Positive-definiteness:

and

and  if and only if x = 0

if and only if x = 0

For example:

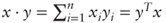

- E =

n with the canonical dot product

n with the canonical dot product

- The space of continuous functions over the interval [a, b] with the inner product:

The inner product induces a distance or norm ![]() with the following standard properties:

with the following standard properties:

- Positive scalability:

for any λ ∈

for any λ ∈

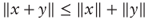

- Triangle inequality:
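As a quick numerical illustration, the sketch below computes the canonical dot product and its induced norm in pure Python, then checks positive scalability and the triangle inequality on one pair of vectors. The helper names `dot` and `norm` and the sample values are illustrative choices, not part of the text.

```python
import math

def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def norm(x):
    """Norm induced by the inner product: sqrt(<x, x>)."""
    return math.sqrt(dot(x, x))

# Arbitrary sample data (assumed for illustration only)
x = [3.0, 4.0]
y = [1.0, -2.0]
lam = -2.5

scaled = [lam * xi for xi in x]
summed = [xi + yi for xi, yi in zip(x, y)]

print(norm(x))                                          # 5.0
print(math.isclose(norm(scaled), abs(lam) * norm(x)))   # positive scalability holds
print(norm(summed) <= norm(x) + norm(y))                # triangle inequality holds
```

A single example does not prove the properties; it only shows how the definitions translate into computation.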

B.1.2 Cauchy-Schwarz Inequality and Angles

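The Cauchy-Schwarz inequality is one of the most important inequalities in all of mathematics and states that:

$$|\langle x, y \rangle| \le \|x\|\, \|y\|$$

which allows us to define the absolute angle in [0, π] between two nonzero vectors x and y as:

$$\theta(x, y) = \arccos \frac{\langle x, y \rangle}{\|x\|\, \|y\|}$$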
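The following sketch, assuming the canonical inner product on $\mathbb{R}^n$, computes the angle as the arccosine of $\langle x, y \rangle / (\|x\|\,\|y\|)$. The Cauchy-Schwarz inequality guarantees that this ratio lies in [-1, 1]; the clamp only guards against floating-point round-off. The function names are illustrative.

```python
import math

def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def norm(x):
    """Norm induced by the canonical inner product."""
    return math.sqrt(dot(x, x))

def angle(x, y):
    """Angle in [0, pi] between two nonzero vectors."""
    c = dot(x, y) / (norm(x) * norm(y))
    # Clamp to [-1, 1] to absorb round-off before calling acos
    return math.acos(max(-1.0, min(1.0, c)))

x = [1.0, 0.0]
y = [1.0, 1.0]

print(abs(dot(x, y)) <= norm(x) * norm(y))  # Cauchy-Schwarz holds for this pair
print(angle(x, y))                          # close to pi / 4
```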

B.1.3 Orthogonality

Two vectors are said to be orthogonal whenever their inner product is zero.

A system of vectors is said to be orthogonal whenever they are pairwise orthogonal. There can be at most n nonzero vectors in an orthogonal system, where n is the dimension of E.

A system of vectors is said to be orthonormal when it is orthogonal and the norm of each vector is 1.

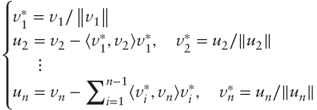

Every Euclidean space has infinitely many orthonormal bases. In practice, given an arbitrary basis $(v_1, \dots, v_n)$, we can build an orthonormal basis $(e_1, \dots, e_n)$ by following the Gram-Schmidt orthonormalization process:

$$e_1 = \frac{v_1}{\|v_1\|}, \qquad u_k = v_k - \sum_{j=1}^{k-1} \langle v_k, e_j \rangle\, e_j, \qquad e_k = \frac{u_k}{\|u_k\|} \quad (k = 2, \dots, n)$$
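A minimal sketch of the Gram-Schmidt process in pure Python follows, assuming the canonical inner product on $\mathbb{R}^n$ and linearly independent input vectors. Each new vector has its components along the previously built vectors subtracted, and the remainder is normalized. The function name `gram_schmidt` and the sample basis are illustrative.

```python
import math

def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def gram_schmidt(basis):
    """Return an orthonormal basis spanning the same space as `basis`.

    Assumes the input vectors are linearly independent.
    """
    ortho = []
    for v in basis:
        u = list(v)
        # Remove the component of v along each vector already built
        for e in ortho:
            c = dot(v, e)
            u = [ui - c * ei for ui, ei in zip(u, e)]
        length = math.sqrt(dot(u, u))
        ortho.append([ui / length for ui in u])
    return ortho

# Arbitrary basis of R^3 (assumed for illustration)
E = gram_schmidt([[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]])
for e in E:
    print(e)
```

This classical variant can lose orthogonality on ill-conditioned inputs; production code would typically rely on a QR factorization from a numerical library instead.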

The orthogonal projection of a given vector x onto the line Span(v) is simply $\frac{\langle x, v \rangle}{\langle v, v \rangle}\, v$. In $\mathbb{R}^n$ with canonical inner product, the projection operator is then $\frac{v v^\top}{v^\top v}$.
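To make the projection concrete, the sketch below computes $\frac{\langle x, v \rangle}{\langle v, v \rangle} v$ directly and also builds the matrix $v v^\top / (v^\top v)$, assuming the canonical inner product and a nonzero v. The helper names are illustrative.

```python
def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def project_onto_line(x, v):
    """Orthogonal projection of x onto Span(v); v must be nonzero."""
    c = dot(x, v) / dot(v, v)
    return [c * vi for vi in v]

def projection_matrix(v):
    """Matrix v v^T / (v^T v) of the projection onto Span(v)."""
    vv = dot(v, v)
    return [[vi * vj / vv for vj in v] for vi in v]

x = [2.0, 3.0]
v = [1.0, 1.0]

p = project_onto_line(x, v)
residual = [xi - p_i for xi, p_i in zip(x, p)]

print(p)                 # [2.5, 2.5]
print(dot(residual, v))  # 0.0, the residual is orthogonal to v
print(projection_matrix(v))
```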

Given an orthonormal basis $(e_1, \dots, e_n)$ the coordinates of a given vector x are $x_i = \langle x, e_i \rangle$ and we have $x = \sum_{i=1}^n \langle x, e_i \rangle\, e_i$.

An orthogonal matrix O is a square matrix whose columns and rows are orthonormal vectors of $\mathbb{R}^n$; equivalently $O O^\top = O^\top O = I$, where I is the identity matrix.

Parseval's identity states that the norm of a vector does not depend on the orthonormal basis in which its coordinates are calculated:

$$\|x\|^2 = \sum_{i=1}^n \langle x, e_i \rangle^2$$

where $(e_1, \dots, e_n)$ is an arbitrary orthonormal basis of E.
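Parseval's identity can be checked numerically. The sketch below, restricted to $\mathbb{R}^2$ for simplicity, computes the coordinates $\langle x, e_i \rangle$ of a fixed vector in several rotated orthonormal bases and shows that the sum of their squares stays equal to $\|x\|^2$ up to round-off. The rotation angles and helper names are arbitrary illustrative choices.

```python
import math

def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def coordinates(x, basis):
    """Coordinates <x, e_i> of x in an orthonormal basis."""
    return [dot(x, e) for e in basis]

def rotated_basis(theta):
    """Orthonormal basis of R^2 obtained by rotating the canonical one."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s], [-s, c]]

x = [3.0, 4.0]  # ||x||^2 = 25
for theta in (0.0, 0.7, 2.0):
    coords = coordinates(x, rotated_basis(theta))
    # The sum of squared coordinates is the same in every basis
    print(theta, sum(c * c for c in coords))
```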

B.2 Square Matrix Decompositions

Given a basis $\mathcal{B} = (v_1, \dots, v_n)$ we may represent any linear transformation f: E → E as an n × n square matrix A whose columns are the coordinates of $f(v_1), \dots, f(v_n)$ in $\mathcal{B}$. Then $x \mapsto Ax$, acting on coordinate vectors in $\mathcal{B}$, is an equivalent representation of f.
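As an illustration of the matrix representation, the sketch below builds the matrix of a linear map column by column from the images of the basis vectors. For simplicity it assumes the canonical basis of $\mathbb{R}^n$, so coordinates coincide with components. The map `f` and the helper names are hypothetical examples.

```python
def matrix_of(f, n):
    """Matrix of a linear map f: R^n -> R^n in the canonical basis.

    Column j holds the coordinates of f(e_j).
    """
    cols = [f([1.0 if i == j else 0.0 for i in range(n)]) for j in range(n)]
    # Transpose the list of columns into a list of rows
    return [[cols[j][i] for j in range(n)] for i in range(n)]

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def f(x):
    """A sample linear map (chosen only for illustration)."""
    return [x[0] + 2.0 * x[1], 3.0 * x[1]]

A = matrix_of(f, 2)
print(A)                       # [[1.0, 2.0], [0.0, 3.0]]
print(matvec(A, [5.0, -1.0]))  # [3.0, -3.0], same as f([5.0, -1.0])
```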

It is often useful to rewrite a given matrix as a product or sum of simpler components. For example, some matrices may be written $A = P D P^{-1}$ where P is an invertible square matrix whose columns are eigenvectors and D is a diagonal matrix of eigenvalues. By the spectral theorem, this is true of every symmetric matrix, in which case P can be chosen to be orthogonal and we may write $A = P D P^\top = \sum_{i=1}^n \lambda_i v_i v_i^\top$ where the $v_i$ are the columns of P. When all $\lambda_i$ are positive, A is said to be positive-definite, and when all $\lambda_i$ are nonnegative, A is said to be positive-semidefinite.
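The spectral sum can be verified by hand on a small case. The sketch below takes the symmetric matrix with rows (2, 1) and (1, 2), whose eigenvalues 3 and 1 have orthonormal eigenvectors $(1, 1)/\sqrt{2}$ and $(1, -1)/\sqrt{2}$, and rebuilds it as $\sum_i \lambda_i v_i v_i^\top$. The eigenpairs are supplied directly rather than computed, and the function names are illustrative.

```python
import math

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def spectral_sum(eigenpairs):
    """Rebuild a symmetric matrix as sum_i lambda_i v_i v_i^T."""
    n = len(eigenpairs[0][1])
    S = [[0.0] * n for _ in range(n)]
    for lam, v in eigenpairs:
        for i in range(n):
            for j in range(n):
                S[i][j] += lam * v[i] * v[j]
    return S

A = [[2.0, 1.0], [1.0, 2.0]]
s = 1.0 / math.sqrt(2.0)
# Known eigenvalue / unit eigenvector pairs of A
eigenpairs = [(3.0, [s, s]), (1.0, [s, -s])]

S = spectral_sum(eigenpairs)
print(S)  # equal to A up to round-off

# All eigenvalues are positive, so A is positive-definite
is_positive_definite = all(lam > 0 for lam, _ in eigenpairs)
print(is_positive_definite)  # True
```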

Another type of decomposition is A = LU where L is lower triangular and U is upper triangular. If A is symmetric positive-definite then we can find L, U such that $U = L^\top$ and the decomposition $A = L L^\top$ is called a Cholesky decomposition.
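A minimal sketch of a Cholesky factorization in pure Python is given below, using the standard row-by-row recurrence. It assumes its input is symmetric and raises an error when a nonpositive pivot reveals that the matrix is not positive-definite. The function name `cholesky` and the sample matrix are illustrative; numerical libraries provide tuned equivalents.

```python
import math

def cholesky(A):
    """Lower-triangular L with A = L L^T, for symmetric positive-definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            # Contribution of the columns of L already computed
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = A[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive-definite")
                L[i][j] = math.sqrt(d)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L

A = [[4.0, 2.0], [2.0, 3.0]]
L = cholesky(A)
print(L)  # rows [2.0, 0.0] and [1.0, sqrt(2)]
```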

The bilinear form $(x, y) \mapsto x^\top A y$ defines an inner product on $\mathbb{R}^n$ if and only if A is symmetric positive-definite, in which case we may rewrite:

$$x^\top A y = (L^\top x)^\top (L^\top y) = \|L^\top x\|\, \|L^\top y\| \cos \theta$$

where $A = L L^\top$ is a Cholesky decomposition, $\|\cdot\|$ is the canonical norm and $\theta$ is the angle between $L^\top x$ and $L^\top y$, measured canonically. The associated quantity $Q(x) = x^\top A x$ is then known as a quadratic form.
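The link between the inner product $x^\top A y$ and the canonical one can be checked numerically. The sketch below uses a small symmetric positive-definite matrix together with its Cholesky factor L (hard-coded here, with $A = L L^\top$) and compares $x^\top A y$ with the canonical dot product of $L^\top x$ and $L^\top y$. The matrix, the vectors and the helper names are illustrative assumptions.

```python
import math

def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def transpose(M):
    """Transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*M)]

A = [[4.0, 2.0], [2.0, 3.0]]
L = [[2.0, 0.0], [1.0, math.sqrt(2.0)]]  # Cholesky factor: A = L L^T
Lt = transpose(L)

x = [1.0, 2.0]
y = [-1.0, 0.5]

lhs = dot(x, matvec(A, y))               # x^T A y
rhs = dot(matvec(Lt, x), matvec(Lt, y))  # canonical dot product of L^T x and L^T y
print(lhs, rhs)                          # both close to -4

Q = dot(x, matvec(A, x))                 # quadratic form Q(x) = x^T A x
print(Q)                                 # 24.0
```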

The Rayleigh quotient $R(x) = \frac{x^\top A x}{x^\top x}$ measures the squared scaling factor between the canonical norm and the Q-norm $\sqrt{x^\top A x}$; it can be shown to lie between $\min_i \lambda_i$ and $\max_i \lambda_i$. Since the eigenvalues are nonnegative here, the maximum eigenvalue of A is also its spectral radius ρ(A), and we have $x^\top A x \le \rho(A)\, \|x\|^2$.
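The bounds on the Rayleigh quotient can be observed on a small example. The sketch below evaluates it for the symmetric matrix with rows (2, 1) and (1, 2), whose eigenvalues are 1 and 3, over a set of directions on the unit circle. The sampling scheme and the function name `rayleigh` are illustrative choices.

```python
import math

def dot(x, y):
    """Canonical inner product on R^n."""
    return sum(xi * yi for xi, yi in zip(x, y))

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def rayleigh(A, x):
    """Rayleigh quotient x^T A x / x^T x for a nonzero vector x."""
    return dot(x, matvec(A, x)) / dot(x, x)

A = [[2.0, 1.0], [1.0, 2.0]]  # eigenvalues 1 and 3

# Sample directions on the unit circle
values = [
    rayleigh(A, [math.cos(k * math.pi / 12), math.sin(k * math.pi / 12)])
    for k in range(12)
]
print(min(values), max(values))  # stays within [1, 3] up to round-off

# The bounds are attained along the eigenvectors
print(rayleigh(A, [1.0, 1.0]))   # 3.0
print(rayleigh(A, [1.0, -1.0]))  # 1.0
```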