12.5. The DB2 LOAD Utility

The load utility is another tool you can use to insert data into a table. Note that you cannot run the load tool against a view; the target must be a table that already exists. The major difference between a load and an import is that a load is much faster. Unlike the import tool, data is not written to the database using normal insert operations. Instead, the load utility reads the input data, formats data pages, and writes directly to the database. Database changes are not logged and constraint validations are not performed during a load operation.

12.5.1. The Load Process

Basically, a complete load process consists of four phases.

1. | During the load phase, the load utility scans the input file for any invalid data rows that do not comply with the table definition; for example, if a table column is defined as INTEGER but the input data is stored as “abcd”. Invalid data will not be loaded into the table. The rejected rows and warnings will be written to a dump file specified by the dumpfile modifier. Valid data is then written into the table. At the same time, table statistics (if the statistics option was specified) and index keys are also collected. If the savecount option is specified in the load command, points of consistency are recorded in the message file. Consistency points are established by the load utility. They are very useful when it comes to restarting the load operation. You can restart the load from the last successful consistency point. |

2. | During the build phase, indexes are produced based on the index keys collected during the load phase. The index keys are sorted during the load phase, and index statistics are collected (if the statistic option was specified). |

3. | In the load phase, the utility only rejects rows that do not comply with the column definitions. Rows that violated any unique constraint will be deleted in the delete phase. Note that only unique constraint violated rows are deleted. Other constraints are not checked during this phase or during any load phase. You have to manually check it after the load operation is complete. Refer to Section 12.5.7, Validating Data Against Constraints, for more information. |

4. | During the index copy phase, index data is copied from a system temporary table space to the original table space. This will only occur if a system temporary table space was specified for index creation during a load operation with the read access option specified (see section 12.5.2.5, Locking Considerations During a Load). |

12.5.2. The LOAD Command

The load utility is so powerful that its command can be executed with many different options. Figure 12.16 presents a simplified version of the load command syntax diagram.

Figure 12.16. Simplified syntax diagram of the load command

.-,--------------.

V |

>>-LOAD--+--------+--FROM----+-filename---+-+--OF--filetype----->

'-CLIENT-' +-pipename---+

+-device-----+

'-cursorname-'

>--+-------------------------+---------------------------------->

| .-,--------. |

| V | |

'-LOBS FROM----lob-path-+-'

>--+-------------------------------+---------------------------->

| .--------------. |

| V | |

'-MODIFIED BY----filetype-mod-+-'

>--+--------------+--+-------------+--+-----------------+------->

'-SAVECOUNT--n-' '-ROWCOUNT--n-' '-WARNINGCOUNT--n-'

>--+------------------------+----------------------------------->

'-MESSAGES--message-file-'

>--+-------------------------------+--+-INSERT----+------------->

'-TEMPFILES PATH--temp-pathname-' +-REPLACE---+

+-RESTART---+

'-TERMINATE-'

>--INTO--table-name--+-------------------------+---------------->

| .-,-------------. |

| V | |

'-(----insert-column-+--)-'

>--+---------------------------+-------------------------------->

'-FOR EXCEPTION--table-name-'

>--+-----------------------------+------------------------------>

'-STATISTICS--+-USE PROFILE-+-'

'-NO----------'

>--+-----------------------------------------------------------------+-->

| .-NO----------------------------------------------------. |

+-COPY--+-YES--+-USE TSM--+--------------------------+--------+-+-+

'-NONRECOVERABLE--------------------------------------------------'

>--+-------------------+--+--------------------------+---------->

'-WITHOUT PROMPTING-' '-DATA BUFFER--buffer-size-'

>--+--------------------------+--+--------------------+--------->

'-SORT BUFFER--buffer-size-' '-CPU_PARALLELISM--n-'

>--+---------------------+-------------------------------------->

'-DISK_PARALLELISM--n-'

>--+--------------------------------+--------------------------->

'-INDEXING MODE--+-AUTOSELECT--+-'

+-REBUILD-----+

+-INCREMENTAL-+

'-DEFERRED----'

.-ALLOW NO ACCESS-----------------------------.

>--+---------------------------------------------+-------------->

'-ALLOW READ ACCESS--+----------------------+-'

'-USE--tablespace-name-'

>--+--------------------------------------+--------------------->

'-CHECK PENDING CASCADE--+-IMMEDIATE-+-'

'-DEFERRED--'

>--+-----------------+------------------------------------------>

'-LOCK WITH FORCE-'

|

As you can see, there are many options available to customize your load operation. The following examples illustrate how to use some of them.

12.5.2.1. The MESSAGES, SAVECOUNT, and WARNINGCOUNT Options

In Figure 12.17, data in a DEL input file is loaded into a list of columns in table stock. The messages option is used to record warnings and errors encountered during the load operation. This particular load will stop when the threshold of warnings (in this case, 10) is encountered. You can check the output file for warnings and errors.

The savecount option establishes consistency points after every 1,000 rows are loaded. Because a message is issued at each consistency point, ensure that the savecount value is sufficiently high to minimize performance impact.

Figure 12.17. Example 1: load command

load from stock.del of del savecount 1000 warningcount 10 messages stock.out insert into stock(itemid, itemdesc, cost, inventory) |

Consistency points are established during the load phase. You can use these to restart a failed or terminated load operation. By specifying the same load command but replacing insert with the restart option, the load operation will automatically continue from the last consistency point.

To terminate a load, issue the same load command but use the terminate option in place of insert. For example:

load from stock.del of del savecount 1000 warningcount 10 messages stock.out terminate

12.5.2.2. Loading from a CURSOR

The load utility supports four file formats: IXF, DEL, ASC, and CURSOR (described in Section 12.2, Data Movement File Formats). When using the CURSOR file type as demonstrated in Figure 12.18, the cursor must be already declared but does not need to be opened. The entire result of the query associated with the specified cursor will be processed by the load utility. You must also ensure that the column types of the SQL query are compatible with the corresponding column types in the target table.

Figure 12.18. Example 2: load command

declare cur1 cursor as select * from oldstock; load from cur1 of cursor messages curstock.out insert into stock |

12.5.2.3. MODIFIED BY dumpfile and Exception Table

As mentioned earlier, the load process goes through four phases. During the load phase, data that does not comply with the column definition will not be loaded. Rejected records can be saved in a dump file by using the modified by dumpfile modifier. If dumpfile is not specified, rejected records will not be saved. Since the load utility will not stop unless it reaches the warning threshold if one is specified, it is not easy to identify the rejected records. Hence, it is always a good practice to use the modifier and validate the message file after a load is completed. Figure 12.19 shows how to use modified by dumpfile.

Figure 12.19. Example 3: load command

load from stock.ixf of ixf modified by dumpfile=stockdump.dmp messages stock.out replace into stock for exception stockexp |

Assume that the input file stock.ixf contains the data in Table 12.3.

| itemid | itemdesc | inventory |

|---|---|---|

| 10 | ~~~ | 1 |

| 20 | ~~~ | – |

| 30 | ~~~ | 3 |

| 30 | ~~~ | 4 |

| 40 | ~~~ | X |

| 50 | ~~~ | 6 |

| 50 | ~~~ | 7 |

| 80 | ~~~ | 8 |

The target table stock is defined with three columns using this CREATE TABLE statement:

CREATE TABLE stock ( itemid INTEGER NOT NULL , itemdesc VARCHAR(100) , inventory INTEGER NOT NULL , PRIMARY KEY (itemid) )

Notice that the second and fifth records in stock.ixf do not comply with the NOT NULL and numeric definitions. If the load command shown in Figure 12.19 is executed, a dump file (stockdump.dmp) will be created to save rows that are not loaded due to incompatible data type and the nullability attribute. Table 12.4 shows that the dump file stockdump.dmp contains the rows not loaded.

| itemid | itemdesc | inventory |

|---|---|---|

| 20 | ~~~ | – |

| 40 | ~~~ | X |

Recall that in the third phase the load process deletes rows that violate any unique constraint defined in the target table. You can save the deleted rows in a table called an exception table using the for exception option. If an exception table is not specified, the rows will be discarded.

You need to create an exception table manually before you can use it. The table should have the same number of columns, column types, and nullability attributes as the target table to be loaded. You can create such a table with this command:

CREATE TABLE stockexp LIKE stock

To log when and why rows are rejected, you can add two other optional columns to the end of the exception table. The first column is defined as a TIMESTAMP data type to record when the record was deleted. The second column is defined as CLOB (32K) or larger and tracks the constraint names that the data violates. To add columns to the table, use the ALTER TABLE statement:

ALTER TABLE stockexp ADD COLUMN load_ts TIMESTAMP ADD COLUMN load_msg CLOB(32k)

Like the dumpfile modifier, it is a good practice to also use the exception table, especially if unique violations are possible. The exception table illustrated in Table 12.5 contains rows that violated the unique constraints.

| itemid | itemdesc | inventory |

|---|---|---|

| 30 | ~~~ | 4 |

| 50 | ~~~ | 7 |

Figure 12.20 shows the big picture of the concepts of dumpfile and the exception table.

1. | |

2. | Issue the load command with modified by

dumpfile, messages, and for exception options. |

3. | Rows that do not comply with the table definition (NOT NULL and numeric column) are recorded in the stockdump.dmp file. |

4. | Rows that violated the unique constraint are deleted from the stock table and inserted into the exception table. |

5. | Four rows are successfully loaded into the stock table. |

12.5.2.4. Loading from a Client

In all the examples you have seen so far, the load commands are executed from the database server and the input files are located on the database server. You may sometimes want to invoke a load operation from a remote client as well as using a file that resides at the client. To do so, specify the client keyword in the command as demonstrated in Figure 12.21.

Figure 12.21. Example 4: load command

load client from stock.ixf of ixf modified by dumpfile=stockdump.dmp rowcount 5000 messages stock.out tempfiles path c:loadtemp replace into stock for exception stockexcept lock with force |

You cannot load a CURSOR file type from a client. The dumpfile and lobsinfile modifiers (discussed in the following sections) refer to files on the server even when the command includes the client keyword.

NOTEUse the load client command when the input file resides on the client from which you are issuing the command. Use the dumpfile, tempfile, and lobsinfile modifiers for files located on the DB2 server. |

The rowcount option works exactly the same as the one supported by the import utility. You can control the number of rows to be loaded with this option.

During the load process, the utility uses temporary files. By default, it allocates temporary files from the directory where the load command was issued. To explicitly specify a path for this purpose, use the tempfiles option as shown in Figure 12.21.Notice that the example also uses the replace mode, which replaces the old data in the target table with the new data.

12.5.2.5. Locking Considerations During a Load

The utility acquires various locks during the load process. If you choose to give the load operation a higher priority then other concurrent applications, you can specify the lock with force option (in Figure 12.21) to immediately terminate other applications that are holding conflicting locks so that the load utility does not have to wait for locks.

By default, no other application can access the target table that is being loaded. The utility locks the target table for exclusive access until the load completes. You can set this default behavior with the allow no access option. This is the only valid option for load replace.

You can increase concurrency by locking the target table in share mode and allowing read access. In Figure 12.22, the allow read access option is enabled, which lets readers access data that existed before the load. New data will not be available until the load has completed.

Figure 12.22. Example 5: load command

load from stock.ixf of ixf modified by dumpfile=stockdump.dmp messages stock.out replace into stock for exception stockexcept allow read access indexing mode incremental |

12.5.2.6. The INDEXING MODE Option

The last option in Figure 12.22, indexing mode, indicates whether the load utility is to rebuild indexes or to extend them incrementally. This is done in the build phase. You can use the options in Table 12.6.

| INDEXING MODE option | Description |

|---|---|

| REBUILD | Forces all indexes to be rebuilt. |

| INCREMENTAL | Extends indexes with new data. |

| AUTOSELECT (default) | The load utility will automatically choose between REBUILD or INCREMENTAL mode. |

| DEFERRED | Indexes will not be rebuilt but will be marked as needing a refresh. An index will be rebuilt when it is first accessed or when the database is restarted. |

12.5.3. File Type Modifiers Supported in the Load Utility

The file type modifiers supported in the load utility are as comprehensive as those supported in the export and import utilities. The following section discusses a few of the modifiers. Refer to the DB2 Data Movement Utilities Guide and Reference for a complete list of load utility modifiers.

12.5.3.1. Leaving Free Space in Data and Index Pages

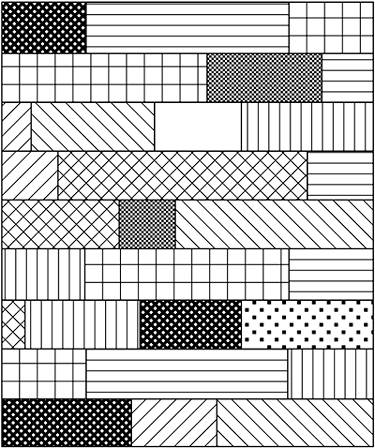

When you insert data into a table with the insert, import, or load operations, DB2 tries to fit as much of the data into the data and index pages as possible. Consider pages tightly packed as shown in Figure 12.23.

Figure 12.23. A tightly packed data or index page

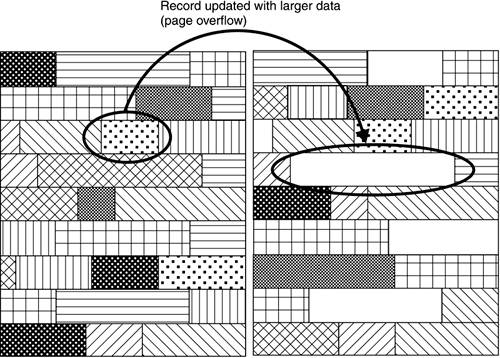

When a certain record is updated with data larger than the original size, new data might not be able to fit into the original data page. DB2 will then search for the next free page to store the updated record. The updated record is referenced from the original page by a pointer. When a request comes in to retrieve the record, DB2 first locates the original data page and then searches for the new data page as referenced by the pointer. This is called page overflow (see Figure 12.24).

Figure 12.24. Page overflow

The higher the number of page overflows, the more time DB2 will spend finding the data or index page. Hence, you want to avoid page overflows as much as possible to improve performance.

To minimize page overflows, you can customize the table definition so that certain free space is reserved so that the pages are not tightly packed. The CREATE TABLE, ALTER TABLE, and CREATE INDEX statements have options for leaving free space in data and/or index pages. The load command also has options to override the default set for the target table. You can specify this using the file type modifiers: indexfreespace, pagefreespace, and totalfreespace.

Modifiers pagefreespace=x and indexfreespace=x can be used to specify the percentage of each data and/or index page that is to be left as free space. For example, Figure 12.25 illustrates leaving 20 percent of free space on each data and index page.

Figure 12.25. Leaving free space for the pagefreespace and indexfreespace file modifiers

The modifier totalfreespace=x specifies the percentage of the total pages in the table that is to be appended to the end of the table as free space. For example, if x = 20, and the table has 100 data pages after the data has been loaded, 20 additional empty pages will be appended. The total number of data pages for the table will be 120 (see Figure 12.26).

Figure 12.26. Using the totalfreespace file modifier

12.5.4. Loading Large Objects

The load utility uses the same option and modifier as the import utility to specify the path where LOBs are stored. For example, the following command lists the directories where LOBs are stored with the lobs from option.

load from stock.ixf of ixf lobs from c:lobs1, c:lobs2, c:lobs3 modified by dumpfile=stockdump.dmp lobsinfile messages stock.out replace into stock for exception stockexcept

12.5.5. Collecting Statistics

During the load phase of the process, the load utility also collects table statistics if you specify statistics. You can either collect statistics using the statistic profile with the statistics use profile option, or specify not to collect statistics with the statistics no option. A statistic profile is a set of options that specify which statistics are to be collected, such as table, index, or distribution statistics.

If you choose not to collect statistics during the load, you should always update the statistics at the earliest convenient time. When large amounts of new data are inserted into a table, you should update the statistics to reflect the changes so that the optimizer can determine the most optimal access plan.

12.5.6. The COPY YES/NO and NONRECOVERABLE Options

Recall that changes made to the target tables during the load are not logged. This is one of the characteristics of the load utility that improves performance. However, it also takes away the ability to perform roll forward recovery for the load operation. DB2 puts the table space where the target table resides in backup pending state when the load operation begins. After the load completes, you must back up the table space or database. This ensures that the table space can be restored to the point where logging is resumed if you ever need to restore the table space restore. This is the behavior of the load option copy no. You can also specify copy yes if archival logging is enabled. With copy yes, a copy of the loaded data will be saved and the table space will not be in backup pending state upon load completion. However, this negatively impacts the performance of the load. Table space status related to load operation will be discussed later in this chapter.

When you cannot afford to have a window to perform a table space backup after the load is complete but you also need the load to complete as fast as possible, neither copy yes nor copy no is a good solution. You may want to consider using the option nonrecoverable if the target table can be recreated and data can be reloaded.

The nonrecoverable option specifies that the target table is marked as nonrecoverable until the associated table space is backed up. In case of failure, such as disk or database failure, the table space needs to be restored and rolled back. The roll forward utility marks the data being loaded as invalid and skips the subsequent transactions for the target table. After the roll forward operation is completed, the target table is not accessible and it can only be dropped. Note that other tables in the same table space are not affected by this option.

12.5.7. Validating Data Against Constraints

The load utility checks for invalid data and unique constraints during the load process. However, other constraints such as referential integrity and check constraints are not validated. DB2 therefore puts target tables defined with these constraints in check pending state. This forces you to manually validate the data before the tables are available for further processing.

The set integrity command gives you the ability to do just that. The command can be as simple as the following, which immediately validates data against the constraints for table stock.

set integrity for stock immediate checked

There are many other options; refer to the DB2 UDB Command Reference for the complete syntax of the command.

12.5.8. Performance Considerations

You can further speed up the load performance by taking advantage of the extra hardware resources you might have on the machine. Table 12.7 lists options and modifiers you can use.

12.5.9. Authorities Required to Perform a Load

To perform a load, you must have SYSADM, DBADM, or LOAD authority. With the LOAD authority, you also need specific privileges on the target tables depending on the mode used in the load command. For example, you need INSERT privileges on the table when the load utility is invoked in INSERT mode. If you use REPLACE mode, you need INSERT and DELETE privileges on the target table.

Note that you also need appropriate access to the exception table if one is specified. In addition, when using the copy yes option, you need SYSADM, SYSCTRL, or SYSMAINT authority because a backup is performed during the load operation.

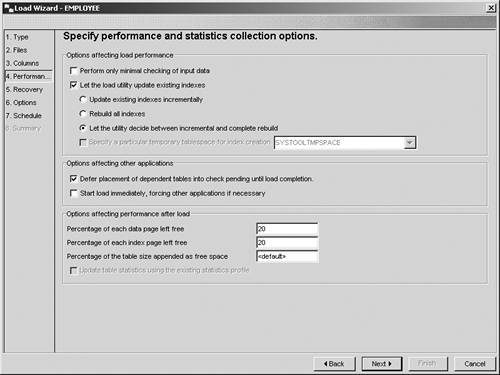

12.5.10. Loading a Table Using the Control Center

The Control Center provides a graphical tool to invoke a load operation. Right-click on the target table and select Load to start the Load Wizard (Figure 12.27). The Load Wizard walks you through the process of loading a table.

12.5.11. Monitoring a Load Operation

During the phases of a load, the target table and its associated table spaces are in different states. By checking the state of the table and table space, you can tell which phase the load operation is currently in. Before introducing the tools to obtain this information, let's first discuss the different table and table space states.

12.5.11.1. Table States

Table 12.8 lists the states in which Tables can be placed by the database manager. You can control some of these; others are caused by the load utility.

12.5.11.2. Table Space States

Table 12.9 lists the states in which table spaces can be placed by the database manager.

| Table State | Description |

|---|---|

| Normal | The table space is in normal state. |

| Quiesced: SHARE | The table space has been quiesced in SHARED mode. |

| Quiesced: UPDATE | The table space has been quiesced in UPDATE mode. |

| Quiesced: EXCLUSIVE | The table space has been quiesced in EXCLUSIVE mode. |

| Load pending | A table space is put in this state if a load operation has been active on one of its associated tables but has been aborted before data could be committed. |

| Delete pending | A table space is put in this state if one of its associated tables is undergoing the delete phase of a load operation but has been aborted or failed. |

| Backup pending | A table space is put in this state after a Point In Time roll forward operation, or after a load operation with the no copy option. You must back up the table space before using it. If it is not backed up, then you cannot update the table space, and only read-only operations are allowed. |

| Roll forward in progress | A table space is put in this state when a roll forward operation on that table space is in progress. Once the roll forward operation completes successfully, the table space is no longer in roll forward-in-progress state. The table space can also be taken out of this state if the roll forward operation is cancelled. |

| Roll forward pending | A table space is put in this state after it is restored or following an I/O error. After it is restored, the table space can be rolled forward to the end of the logs or to a Point In Time. Following an I/O error, the table space must be rolled forward to the end of the logs. |

| Restore pending | A table space is put in this state if a roll forward operation on that table space is cancelled, or if a roll forward operation on that table space encounters an unrecoverable error, in which case the table space must be restored and rolled forward again. |

| Load in progress | A table space is put in this state if it is associated with a load operation. The load in progress state is removed when the load operation is completed or aborted. |

| Reorg in progress | An REORG operation is in progress on one of the tables associated to the table space. |

| Backup in progress | A backup is in progress on the table space. |

| Storage must be defined | For DB2 database manager internal use only. |

| Restore in progress | A restore is in progress on the table space. |

| Offline and not accessible | DB2 failed to access or use one or more containers associated to the table space, so the table space is placed offline. To take the table space out of this state, repair the containers. |

12.5.11.3. Load Querying

DB2 has two utilities that you can use to obtain the table state. Figure 12.28 presents the syntax diagram of one of them, the load query command.

Figure 12.28. Syntax diagram of the load query command

>>-LOAD QUERY--TABLE--table-name--+------------------------+---->

'-TO--local-message-file-'

>--+-------------+--+-----------+------------------------------><

+-NOSUMMARY---+ '-SHOWDELTA-'

'-SUMMARYONLY-'

|

You can specify the following command to check the status of the load operation:

load query table stock to c:/stockstatus.out

The output file stockstatus.out might look similar to Figure 12.29.

Figure 12.29. Sample output of a load query command

SQL3501W The table space(s) in which the table resides will not be placed in backup |

12.5.11.4. The LIST UTILITIES Command |

The list utilities command displays the list of active utilities on the instance. Use the show detail option to also display detailed progress information. Figure 12.30 illustrates sample output.

Figure 12.30. Output of the list utilities command

list utilities show detail ID = 1 Type = LOAD Database Name = SAMPLE Partition Number = 0 Description = OFFLINE LOAD Unknown file type AUTOMATIC INDEXING INSERT |

The report generated in Figure 12.30 indicates that a load was performed on the database sample and includes a brief description of the operation. Progress Monitoring tells you the current phase of the load and the number of rows already loaded and to be loaded.

The table space in which the load target table resides will be placed in backup pending state if COPY NO (the default) option is specified. The utility places the table space in this state at the beginning of the load operation. The table spaces stays in backup pending mode even when the load is complete until you perform a database or table space level backup.

Figure 12.31 shows how to retrieve the table space status.

Figure 12.31. Retrieving the table space status

list tablespaces show detail

Tablespace ID = 2

Name = USERSPACE1

Type = System managed space

Contents = Any data

State = 0x0000

Detailed explanation:

Backup pending

Total pages = 527

Useable pages = 527

Used pages = 527

Free pages = Not applicable

High water mark (pages) = Not applicable

Page size (bytes) = 4096

Extent size (pages) = 32

Prefetch size (pages) = 16

Number of containers = 1

|