Mining Patterns and Violations Using Concept Analysis

Christian Lindig* * Testfabrik AG, Saarbrücken, Germany

Abstract

Large programs develop patterns in their implementation and behavior that can be used for defect mining. Previous work used frequent itemset mining to detect such patterns and their violations, which correlate with defects. However, frequent itemset mining gives much more attention to patterns than to the instances of these patterns. We propose a more general framework to understand and mine purely structural patterns and violations. By combining patterns and their instances into blocks, we gain access to the rich theory of formal concepts. This results in a novel geometric interpretation, which helps to understand previous mining approaches. Blocks form a hierarchy in which each block corresponds to a pattern and neighboring blocks correspond to a violation. Furthermore, blocks may be computed efficiently and searched for violations. Using our open-source tool Colibri/ML, we mined patterns and violations from five open-source projects in less than 1 minute in each case, including the Linux kernel.

Acknowledgments

Discussions with Silvia Breu, David Schuler, and Valentin Dallmeier helped to improve this chapter.

2.1 Introduction

While classifying something as a software defect requires a specification, we can find potential defects without a specification. This is based on the observation that large software systems exhibit patterns in their implementation or behavior and that deviations from these patterns correlate with defects [1]. An automatic analysis of such deviations is practical for large systems and is especially suited to find latent bugs.

Patterns in code and behavior are a consequence of small and orthogonal interfaces. They force clients to combine functions to implement a certain functionality. For example, implementing in C a function with a varying number of arguments (like printf) requires the concerted use of the macros va_start, va_arg, and va_end. Hence, we see many functions that call both va_start and va_end. For example, the source code for the Ruby 1.8.4 interpreter includes 17 such functions. But it also includes one function (vafuncall) that calls va_start but not va_end. This deviation is indeed a bug that was corrected in a later release.

Mining software for structural patterns and their violations was pioneered by Li and Zhou [2] with pr-Miner,1 a tool that mines programming rules from source code and flags violations. Patterns are not limited to a known set of patterns or names, but are purely structural. Li and Zhou demonstrated the effectiveness and efficiency of this approach by reporting 27 previously unknown bugs in the Linux kernel, PostgreSQL, and Apache HTTP Server. pr-Miner uses frequent itemset mining to detect patterns and their violations. Frequent itemset mining discovers implications such as every customer who bought bread and butter also bought milk from itemsets such as shopping carts. Li and Zhou note, however, that “frequent itemset mining algorithms were not designed exactly for this purpose” and developed some ad hoc mechanisms such as applying frequent-item mining twice.

The goal of this chapter is not to improve on the excellent results of pr-Miner, but is to improve the foundation for detecting structural patterns and their violations. Our hope is that this will lead to new applications of the idea that stands behind pr-Miner. In particular, we propose a unified representation for patterns and their instances that uncovers their hierarchical nature and provides an intuitive geometric interpretation.

Our formalism is based on the following insight: any binary relation (such as a call relation) can be represented as a cross table as in Figure 2.1, which shows the call relation of Ruby 1.8.4. A caller f and a callee g are related (marked with a dot) if f calls g. In such a table, rows (callers) and columns (callees) may be permuted without changing the underlying relation. By picking a suitable permutation, we can make a pattern visible as a block. Figure 2.1 shows the block for the pattern {va_start,va_end} as well as the 17 functions that are instances of this pattern. In addition, the violation of this pattern by the function vafuncall becomes visible as an imperfect block: vafuncall calls va_start but not va_end, which leaves a gap in the block.

Mining patterns from a relation can be understood as finding the blocks of the relation. Analogously, detecting violations of patterns can be understood as finding imperfect blocks. Patterns and violations can be mined from any binary relation, not just a call relation. However, for illustration, we shall stick with the call relation as an example for most of this chapter and present another application in Section 2.9.

2.1.1 Contributions

This chapter makes the following contributions:

• Blocks unify patterns and their instances, which were previously treated separately and ad hoc. Furthermore, blocks provide a geometric interpretation of patterns and violations.

• A block hierarchy captures the recursive relation of blocks and violations: patterns correspond to blocks, and violations correspond to neighboring blocks.

• Case studies show the efficiency and practicality of the proposed formalism. Call patterns and their violations can be identified statically for the Python interpreter within 20 seconds, and for the Linux kernel within 1 minute.

• We draw a connection between patterns, their instances and violations, and formal concept analysis [3], which provides a theory to study them.

The remainder of this chapter is organized as follows: Section 2.2 introduces the relation between patterns and blocks, and Section 2.3 shows how to compute them from an input relation. Section 2.4 illustrates the use of Colibri/ML to compute patterns and introduces violations of patterns. Section 2.5 introduces violations of patterns formally, and Section 2.6 shows how to identify them efficiently. Section 2.7 explores the recursive relation of patterns and violations. Section 2.8 reports performance numbers gathered from the analysis of open-source projects. Sections 2.9 and 2.10 demonstrate the versatility of the binary relation in program analysis. The chapter closes with a discussion of related work in Section 2.11 and our conclusions in Section 2.12.

2.2 Patterns and Blocks

A relation associates objects and their features, such as callers and callees in the example above. A pattern is a set of features shared by objects. These objects are called the instances of the pattern. For defect detection, the goal is to find patterns that have many instances because these patterns are likely to capture a universal principle. As we shall see, patterns and instances are unified by blocks.

For example, the Ruby interpreter contains the following pattern: the functions raise and int2inum are called together from 107 different functions. These functions are the instances of the pattern {raise,int2inum}. The number of instances (107) is called the support for the pattern.

Table 2.1 illustrates more patterns and their support that we mined from the call relation of systems implemented in C. Most of them show the familiar pattern of allocation and deallocation of a resource. The interesting fact is not that they exist, but that we were able to find them without knowing the names of these functions in advance.

Table 2.1

Patterns Found in Open-Source Projects

| Project | Support | Pattern |

| Ruby 1.8.4 | 17 | va_start, va_end |

| Apache HTTP 2.2.2 | 20 | va_start, va_end |

| 29 | apr_thread_mutex_lock | |

| apr_thread_mutex_unlock | ||

| Linux 2.6.10 | 28 | add_wait_queue, |

| remove_wait_queue | ||

| 53 | acpi_ut_acquire_mutex, | |

| acpi_ut_release_mutex | ||

| 27 | journal_begin, | |

| journal_end | ||

| Linux 2.6.17 | 31 | kmalloc, copy_from_user, |

| kfree | ||

| Phyton 2006-06-20 | 59 | PyEval_SaveThread, |

| PyEval_RestoreThread |

The column headed “Support” indicates how many instances of that pattern were found in a project.

Formally, the relation ![]() is a set of pairs. Each pair (o,f) relates an object

is a set of pairs. Each pair (o,f) relates an object![]() and a feature

and a feature ![]() . A pattern is a set of features

. A pattern is a set of features ![]() , and its instances are a set of objects

, and its instances are a set of objects ![]() . Given a set of objects O, we can ask what features these objects share; likewise, given a set of features F, we can ask for its instances. Both answers are expressed with the prime operator ′ (which one can think of as the derivative of a set).

. Given a set of objects O, we can ask what features these objects share; likewise, given a set of features F, we can ask for its instances. Both answers are expressed with the prime operator ′ (which one can think of as the derivative of a set).

A pattern (a set of features) corresponds to a block in a cross table—see Figure 2.2. A block is characterized by two sets: a pattern and its instances, which form the sides of the block. The formal definition interlocks a pattern and its instances:

A block as defined above is maximal in the sense that it cannot be extended with an additional object (or feature) without our having to remove a feature (or object).

Note that a block is defined as a pair of two sets, and therefore objects and features are unordered. However, to visualize a block (O,F) in a cross table, we have to put the elements of O and F next to each other. For this reason, typically not all blocks can be made visible in a table at the same time.

Because patterns are sets, a subset relation may hold between them. For example, the Ruby interpreter exhibits the pattern {raise,int2inum}, which has 107 instances. Of these 107 instances, a subset of 81 instances also call the function funcall2. These 81 instances thus form a wider pattern {raise,int2inum,funcall2} with fewer instances.

Patterns in a subset relation correspond to overlapping blocks (see Figure 2.2). The pattern {raise,int2inum} is represented by a tall but slim block, whereas the larger pattern {raise,int2inum,funcall2} is represented by a wider but shorter block. The size |O|×|F| of a block (O,F) can be used as a criterion to find interesting patterns—large blocks are good candidates.

Blocks unify patterns and their instances.

2.3 Computing All Blocks

Finding patterns requires us to identify the blocks of a relation. The crucial question is how to do this efficiently, at least for the blocks that we are most interested in.

The problem of computing all blocks of a relation is solved by formal concept analysis [3]. The definition of a block corresponds to a so-called formal concept. Concepts (and hence blocks) form a hierarchy which is defined by ![]() . Indeed, the hierarchy is a lattice (see Figure 2.4). This means, among other things, that any two blocks have a unique common subblock. and any intersection of two blocks in a table is a block in the hierarchy as well. (The intersection may be empty though.)

. Indeed, the hierarchy is a lattice (see Figure 2.4). This means, among other things, that any two blocks have a unique common subblock. and any intersection of two blocks in a table is a block in the hierarchy as well. (The intersection may be empty though.)

The call relation for the Ruby interpreter has 7280 blocks. Most blocks are small, as can be seen from the frequency distribution for block size (|O|×|F|) in Figure 2.3. A bar in the diagram for size s represents the number of blocks whose size is in an interval of width 10 that is centered at s. There are 6430 blocks of size 20 or less and 88 blocks of size 100 or more. Likewise, 7043 patterns have a support of 20 or less, and 24 patterns have support of 100 or more. We are most interested in large blocks that exceed a minimum support because they are likely to represent a regularity in the Ruby implementation.

The relation ![]() may have up to 2n blocks, where

may have up to 2n blocks, where ![]() . The actual number of blocks strongly depends on the density

. The actual number of blocks strongly depends on the density![]() of the relation (or table). The exponential case holds only for extremely dense tables. The density of the call relation for Ruby (and other systems—see Table 2.2) is below 1%, which is why the number of blocks is typically dominated by O(|R|3).

of the relation (or table). The exponential case holds only for extremely dense tables. The density of the call relation for Ruby (and other systems—see Table 2.2) is below 1%, which is why the number of blocks is typically dominated by O(|R|3).

Table 2.2

Statistics for the Call Relation of Open-Source Projects

| Call Relation | ||||

| Project | Density | Blocks | ||

| Ruby 1.8.4 | 3502 | 1974 | 0.002 | 7280 |

| Linux 2.6.0 | 11,131 | 7176 | <0.001 | 11,308 |

| Python 2.4.3 | 2624 | 1627 | 0.002 | 4870 |

| Lua 5.1 | 766 | 664 | 0.005 | 1523 |

| Apache 2.2.2 | 2256 | 1576 | 0.002 | 3301 |

Since we are most interested in the fraction of patterns (or blocks) with high support and large size, it would be wasteful to compute all blocks of a relation. The key observation for an efficient algorithm is that the blocks highest in the hierarchy exhibit the highest support (see Figure 2.4). In other words, as we move down in the hierarchy, support |O| decreases monotonically, while |F| increases. The size |O|×|F| of blocks maximizes toward the middle of the hierarchy. These are interesting characteristics because they combine wide patterns that still have relatively high support.

2.3.1 Algorithm in a Nutshell

The best-known algorithm for concept analysis is by Ganter and Wille [3]; it computes efficiently the set of all concepts. However, it does not compute the lattice of concepts explicitly, nor does it work breadth-first. Taken together, these facts make it less suitable for the exploration of only the topmost concepts in a lattice. We sketch a better suited, yet simple and efficient, algorithm below. More details can be found in [4].

The top concept (or block) for the relation ![]() is ({}′,{}″) and serves as a starting point. Given any concept (O,F), we can compute a subconcept (Of,Ff) for each feature

is ({}′,{}″) and serves as a starting point. Given any concept (O,F), we can compute a subconcept (Of,Ff) for each feature ![]() that is not already part of (O,F):

that is not already part of (O,F): ![]() . The set of subconcepts contains all lower neighbors of (O,F), but may also contain additional concepts. The following criterion holds only for lower neighbors and is used to identify them: (Of,Ff) is a lower neighbor if and only if for all x ∈ Ff F the following holds:

. The set of subconcepts contains all lower neighbors of (O,F), but may also contain additional concepts. The following criterion holds only for lower neighbors and is used to identify them: (Of,Ff) is a lower neighbor if and only if for all x ∈ Ff F the following holds: ![]() .

.

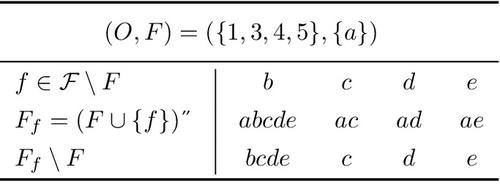

Figure 2.5 shows a small relation R as an example with the corresponding set of concepts shown in Figure 2.6. We look at the concept (O,F) = {1,3,4,5},{a}) as a starting point to explore its lower neighbors. We compute one concept for each attribute that is not already part of F. Hence, we compute concepts (or at least their attribute sets) Fb through Fe. Each of these belongs to a subconcept of (O,F) but is not necessarily also a lower neighbor of (O,F). Applying the test from above we find that Fb does not belong to a lower neighbor, whereas all other attribute sets Fc, Fd, and Fe do. Intuitively, Fb is too large. It contains attributes such as e that, when added to F, result in an attribute set different from Fb (Figure 2.7).

.

.The above algorithm is implemented in Colibri/ML, a command-line tool for concept analysis [5]. It takes a textual representation of a relation and computes all blocks and block violations. As sketched above, Colibri/ML avoids computing all blocks by starting from the top block and then moving to lower blocks breadth-first as long as blocks still exceed a given minimum support.

Colibri/ML worked well for our cases studies (see Section 2.8 for its performance). For very large systems (![]() ) the more advanced algorithm by Stumme et al. [6] could provide an alternative, as it is explicitly designed for extreme scalability.

) the more advanced algorithm by Stumme et al. [6] could provide an alternative, as it is explicitly designed for extreme scalability.

Formal concept analysis computes all blocks from a relation.

2.4 Mining Shopping Carts with Colibri

To demonstrate the mining of rules and exceptions in practice, we analyze some shopping carts—one of the original applications for frequent itemset mining. The data are the first 1000 shopping carts from the data set of Brijs [7]. It contains lines with numbers, where each line represents a shopping cart and each number represents an item the customer bought. The 1000 shopping carts together contain 3182 distinct products. A little massaging brings the original data in a form suitable for Colibri/ML, as shown in Figure 2.8.

As a first task we try to find items that are frequently bought together. Such a set of items is called a rule, and we can control two parameters: the minimum number of items in such a set and the minimum number of customers who need to have bought them together before we report them. Figure 2.9 shows the invocation of Colibri/ML and the result.

Colibri/ML reports 14 itemsets of size 3 and larger and, under support, how often these were bought together. The most popular items bought together are {a48,a41,a39}, which were bought by 106 of 1000 customers. By default, Colibri/ML does not report any itemset that was bought by fewer than 20 customers.

Given this result, the store owner could advertise these items together. He or she might also ask himself or herself whether there are customers who bought some of the items in such a set but not all of them and could advertise the missing item to these customers, assuming that they are likely to be interested. Colibri/ML can also detect such incomplete or “flawed” purchases—see Figure 2.10. In a software engineering context, these could represent software defects.

Forty-one customers bought {a38,a36} together, and three customers bought only a36 but not a38. It thus might pay off to advertise item a38 to them. Likewise, customer o90 might be interested in buying a38, which was bought otherwise always together with a110.

2.5 Violations

When a pattern is represented as a block, a violation of such a pattern is represented by an imperfect block. The initial example in Figure 2.1 shows such an imperfect block formed by the pattern {va_start,va_end}, its instances, and one function that calls only va_start. Adding the missing call to va_end would remove the violation and make the block perfect.

A similar situation is shown more schematically on the left in Figure 2.11. Closer inspection reveals that an imperfect block is really a composition of two blocks. Block A represents a pattern; this pattern is violated by a (small) number of violators belonging to a subset of block B, where the patterns of blocks A and B overlap. This is equivalent to block B being a superblock of block A in the block hierarchy (shown on the right in Figure 2.11). Together they leave a gap in a block as wide as block A and as tall as block B. The width of the gap is the number of corrections necessary in any violator to remove the violation.

Just as not every block constitutes an interesting pattern that captures a universal quality, not every gap constitutes an interesting violation of a pattern. We are interested only in gaps within blocks that we have already found interesting. This typically means that we demand a minimum support for block A before we would consider a gap. In addition, we believe that fewer violations of a pattern make these violations more credible. This is expressed in the confidence for a violation.

Confidence is the probability that any object that exhibits features F1 also exhibits features F2. A rule with a support of 100 instances and two violations yields a confidence of 100/102 = 0.98. In the initial example from Ruby 1.8.4, the rule {va_start,va_end} has support 17 and one violation. This results in a confidence of 17/18 = 0.94. Table 2.3 shows some additional violated patterns from open-source projects.

Table 2.3

Some Pattern Violations; The Underlined Call was Missing

| Project | Support | Confidence | Violated Pattern |

| Linux 2.6.17 | 141 | 0.97 | mutex_lock, |

| mutex_unlock | |||

| Linux 2.6.16 | 48 | 0.98 | down_failed, up_wakeup |

| Linux 2.6.0 | 44 | 0.96 | kmalloc, vmalloc |

| Linux 2.6.0 | 68 | 0.99 | printk, dump_stack |

| Pythona | 59 | 0.98 | PyEval_RestoreThread, |

| PyEval_SaveThread | |||

| Rubyb | 24 | 0.96 | id_each, rb_block_call |

a SVN 2006-06-20.

b CVS 2006-06-20.

A violation is a composition of two blocks.

2.6 Finding Violations

An imperfect block such as that on the left in Figure 2.11 can be constructed from block A and any superblock. In the partial block hierarchy on the right in Figure 2.11, these are blocks B, C, and D, as well as all their superblocks.

The violations of block A with the highest confidence are those represented by the upper neighbors of block A in the block hierarchy: blocks B and C in Figure 2.11. The reason is that as we move up in the hierarchy, blocks become slimmer and taller. Since confidence essentially expresses the height ratio of two blocks and we are looking for blocks of almost equal height, immediate neighbors represent pattern violations with the highest confidence.

Figure 2.12 shows the block hierarchy from the example in Figure 2.1; the number inside each block indicates the support for the pattern represented by that block. Links between blocks represent violations—some are labeled with the confidence of the violation. As we observed above, support decreases monotonically as we move down in the hierarchy. On the other hand, confidence is nonmonotonic. There is no obvious algorithm to identify only the violations with the highest confidence.

A pragmatic approach to identify violations is to consider only those that violate patterns exceeding a minimum support. These are represented by the top blocks in a hierarchy; in Figure 2.12 all blocks with support of at least 3 are shaded. Traversing all edges of the lattice breadth-first, starting from the top element, will find all interesting violations. This is most efficient with an algorithm that computes blocks and their neighbors on demand, rather than all blocks beforehand.

Violations correspond to neighboring blocks in the lattice.

2.7 Two Patterns or One Violation?

The recursive nature of a block hierarchy causes a dilemma: whether a block is a pattern or contributes to the violation of another pattern is a matter of interpretation.

When two blocks A and B with A < B have almost the same support, the confidence for a violation of block B is close to 1. This is the situation presented in Figure 2.11 and in the middle in Figure 2.12. In that case we regard block B as a block that contributes to a violation of block A.

An alternative situation is shown in Figure 2.12 on the right: two blocks A and B with A < B, where block B has about twice the support of block A. Considering block B as violating block A would result in a low confidence. It is thus more sensible to assume that blocks A and B are overlapping but otherwise independent patterns. This would mean that block A and block B both represent a correct usage, even though one pattern (B) is a subset of the other (A).

We analyzed the call relations of the projects in Table 2.2 for independent but overlapping patterns. We considered all patterns with support of at least 20 and a violation confidence below 60%. We found no such patterns in the Linux 2.6.0 kernel, none in Lua 5.1, one in Apache HTTP, but 59 such patterns in Python 2.4.3, and 49 in Ruby 1.8.4. For example, a typical pair of patterns in Python is {PyType_IsSubtype, PyErr_SetString, PyErr_Format} with support 42 and {PyType_IsSubtype, PyErr_SetString} with support 202. We have no immediate explanation why some systems show many overlapping patterns, while others show none at all. Both systems that show them are interpreters, and we suspect that these include a considerable number of functions which call many functions such that overlapping patterns can emerge.

In addition to confidence, we may use a second criterion to classify two blocks as either independent or violating: the width of the gap (see Figure 2.11), which is the number of corrections needed to make an object an instance of the violated pattern. If a pattern has width 5, it is likely that a true error misses one call, rather than two or three calls. We could thus demand that a violation should have a small gap. As a consequence, we would consider only one block violating another if both blocks have about the same height and about the same width.

Using the gap width to identify violations requires patterns of a considerable width. Otherwise the gap width is too bound to be useful as a criterion. This is the case for patterns that we found in C programs, where most patterns have width 2.

Table 2.4 presents some statistics for open-source projects to support this: the columns under the header “Patterns” indicate the number of patterns with support of at least 20 and their average width. The columns under the header “Violated Patterns” indicate how often these were violated, by how many functions (column with header “Violators”), and the average number of missing calls (column with header “Gap”). Because the average gap width is between 1 and 2, it cannot be used as a criterion to classify blocks as violations or patterns.

Table 2.4

Patterns and Violations in the Call Relation of C Programs

| Patternsa | Violated Patternsb | ||||

| Project | No. | Average Width | No. | Violators | Gap |

| Ruby 1.8.4 | 143 | 2.67 | 39 | 1.49 | 2.26 |

| Linux 2.6.0 | 112 | 2.52 | 19 | 1.21 | 1.05 |

| Python 2.4.3 | 163 | 2.32 | 8 | 1.00 | 1.62 |

| Lua 5.1 | 5 | 2.00 | 0 | 0.00 | 0.00 |

| Apache 2.2.2 | 25 | 2.08 | 1 | 1.00 | 1.00 |

a With support of 20 or greater.

b With confidence of 0.95 or greater.

Patterns and violations are recursive.

2.8 Performance

Thinking about patterns and their violations as a hierarchy of blocks is not just a theoretical model, but is also well suited for an implementation. We outlined in Sections 2.3 and 2.5 efficient algorithms to compute all blocks and to find violations of patterns above a minimal support. Here we report some performance numbers gathered with Colibri/ML [5], a command-line tool for concept analysis implemented in OCaml.2

Our test subjects were the open-source applications written in C that we have used throughout the chapter. These range from the small Lua interpreter (12 kloc of C code3 ), over-medium-sized systems like the Apache HTTP Server (207 kloc), the Python and Ruby interpreters (300 kloc, 209 kloc), to the Linux 2.6 kernel (3.6 Mloc). For the Linux kernel the actual size of the system depends strongly on the configuration because drivers can be included into the kernel, compiled into modules, or not used at all. We configured a kernel for a small server where all relevant modules are integrated into the kernel.

From each application we extracted the call relation and analyzed it for violations of patterns. We extracted the call relation by building a binary of each application, disassembling it, and analyzing labels and jumps with a small script. This static analysis is fast but misses computed jumps and calls from function pointers. While these are rare in C applications, this simple technique would not work for C++ or Java because method invocation in C++ and Java depends on dynamic dispatch at runtime. For these languages a simple experiment would instrument the code and record method invocations observed at runtime for later analysis.

Table 2.5 reports wall clock times in seconds for the analysis of pattern violations. The analysis was run on a 2 GHz AMD-64 processor on Linux for several levels of minimum support and confidence. For example, analyzing Python for all violations with support of at least 20 instances and confidence of 0.85 or greater took 17.8 s. The analysis of the Linux kernel took about 1 min, while smaller systems could be analyzed within less than 20 s. The analysis is faster for higher confidence and support levels because it must consider fewer blocks; however, the overall impact of these parameters is not prohibitive in any way. The memory requirement was about 100 MB for the Linux analysis and 20 MB for the other systems.

Table 2.5

Time in Seconds to Analyze Call Relations for Pattern Violations Exceeding a Given Support and Confidence with Colibri/ML on a 2 GHz AMD-64 Processor

| Confidence | 0.80 | 0.85 | 0.90 | 0.95 |

| Support ≥ 20 | ||||

| Ruby 1.8.4 | 10.8 | 10.7 | 9.9 | 10.7 |

| Linux 2.6.0 | 68.9 | 73.4 | 68.7 | 73.4 |

| Python 2.4.3 | 19.3 | 17.8 | 19.3 | 19.4 |

| Lua 5.1 | 0.3 | 0.3 | 0.3 | 0.3 |

| Apache 2.2.2 | 3.1 | 3.1 | 2.8 | 2.9 |

| Support ≥ 30 | ||||

| Ruby 1.8.4 | 9.2 | 8.3 | 9.1 | 8.4 |

| Linux 2.6.0 | 50.7 | 55.1 | 55.1 | 50.7 |

| Python 2.4.3 | 15.7 | 14.3 | 15.7 | 14.3 |

| Lua 5.1 | 0.3 | 0.3 | 0.2 | 0.3 |

| Apache 2.2.2 | 2.8 | 2.5 | 2.8 | 2.5 |

| Support ≥ 40 | ||||

| Ruby 1.8.4 | 8.3 | 7.6 | 8.3 | 7.6 |

| Linux 2.6.0 | 43.4 | 47.6 | 43.4 | 46.8 |

| Python 2.4.3 | 14.2 | 12.9 | 14.3 | 12.9 |

| Lua 5.1 | 0.2 | 0.2 | 0.2 | 0.2 |

| Apache 2.2.2 | 2.7 | 2.4 | 2.7 | 2.7 |

Finding pattern violations is efficient.

2.9 Encoding Order

Our analysis of the call relation is control-flow insensitive: all calls from a function are considered, ignoring their order and whether they are actually possible. This is a good fit for our framework because it is based on sets. However, we wish to demonstrate briefly that a flow-sensitive analysis can be encoded as well by discussing the approach of Wasylkowski [9]. Using our framework, he discovered the previously unknown bug #165631 in the AspectJ compiler.

Wasylkowski observed statically for objects of a Java class C sequences of incoming method calls. The order of these calls is encoded as ![]() , denoting “call C.amay precede call C.b.” Each observation is local to a method that uses an instance of C as a parameter or local variable. The result is a relation R over methods (that use class C) and pairs (

, denoting “call C.amay precede call C.b.” Each observation is local to a method that uses an instance of C as a parameter or local variable. The result is a relation R over methods (that use class C) and pairs (![]() ) of methods from class C. An analysis of this relation reveals methods that use class C in an unusual way: for instance, the bug found in AspectJ was detected because the buggy method never called C.hasNext() after calling C.next(), which was a common observation in other methods. Overall, he analyzed within minutes over 35 000 methods that used almost 3000 classes.

) of methods from class C. An analysis of this relation reveals methods that use class C in an unusual way: for instance, the bug found in AspectJ was detected because the buggy method never called C.hasNext() after calling C.next(), which was a common observation in other methods. Overall, he analyzed within minutes over 35 000 methods that used almost 3000 classes.

The example shows that sequences and graphs, which do not lend themselves to characterization by feature sets, may be analyzed for patterns using an appropriate encoding. This particular encoding, however, may grow exponentially, and is thus best suited for small graphs or sequences. An alternative is an encoding ![]() that considers only nodes or events whose distance is bound by n.

that considers only nodes or events whose distance is bound by n.

Sequences may be encoded as relations to facilitate their analysis.

2.10 Inlining

When a function f calls lock but not unlock, this is not necessarily an error: f may call g, which in turn calls unlock. Hence, f calls unlock indirectly, as shown in Figure 2.13. Both f and g violate the pattern {open,close} but we can avoid this false positive by applying inlining. Inlining works for any relation ![]() where

where ![]() holds, as in a call relation. Li and Zhou [2] explain inlining in terms of data flow analysis; we provide here an alternative explanation that works solely on the input relation.

holds, as in a call relation. Li and Zhou [2] explain inlining in terms of data flow analysis; we provide here an alternative explanation that works solely on the input relation.

Inlining derives from an existing relation ![]() a new relation

a new relation ![]() according to the following rules:

according to the following rules:

In the derived relation R1 a function f is related to the function it calls directly (g), as well as to those that it calls indirectly (h) through one intermediate function. Inlining may be repeated to capture indirect calls through two intermediate functions, and so on, to account for even more indirect calls.

So far we have fixed f by attributing close to it, but have not fixed g yet by attributing open to it. This can be easily expressed using the prime operator:

Object set g′ is the set of all functions calling g, and g″ is the set of all functions called by all callers of g. These are attributed to g in R1.

Inlining can be expressed solely on the input relation.

2.11 Related Work

There are many ways to find software defects. The best way is by checking a program against an independent specification or test. A failing test then can be used to locate the defect automatically [10]. We focus on a scenario without such external references. Instead, we aim to identify intrinsic patterns in a software system’s implementation or behavior and deviations from these patterns. Such deviations are then suggested as potential defects.

2.11.1 Mining Patterns

Mining patterns from programs for program understanding, specification, or documentation has inspired many researchers, especially in the domain of temporal behavior. The following approaches do not develop a notion of deviation and therefore are interesting only insofar as they could provide relations that could be mined for deviations.

Finite automata

Cook and Wolf [11] wrote the seminal work on learning finite-state machines from event sequences. ADABU by Dallmeier et al. [12] dynamically mines automata from Java classes that characterize the state of objects as seen through observer methods provided by their interface. A similar approach is that of Xie and Notkin [13], where the object state is observed through the return values of methods. This leads to more detailed but also less general automata. In contrast to these dynamic approaches, Henzinger et al. [14] learn permissive interfaces by repeatedly generating candidate automata that capture legal method sequences and checking them against an abstract program interpretation. While elegant, it works only for a subset of Java.

Dynamic invariants

Dynamic invariants, as conceived by Ernst et al. [15] and mined with DAIKON, represent logical relations between data that held during test executions. Observed relations such as a < b are suggested as program invariants. DAIKON works by checking a list of fixed relations between pairs of variables and a field and thus cannot infer new invariants. However, by checking a long list of relations between many pairs, a considerable variety of patterns can be mined. A simpler variation of DAIKON was proposed by Hangal and Lam [16].

2.11.2 Mining Violations

The most formal and most well established systems for the notion of consistency in software are type systems, and type inference in particular [17]. Undoubtedly, they prevent the introduction of bugs on a routine basis. However, advances in type theory benefit only future programming languages, and type systems of existing languages are often too weak to express consistency. Hence, there is strong interest in mining patterns and violations in existing software with the goal to identify defects.

Sets of sequences

Hofmeyr et al. [18] observed sequences of system calls for intrusion detection. Normal behavior is characterized by a set of short overlapping sequences. Abnormal behavior is detected when unknown sequences occur. This approach was refined by Dallmeier et al. [19] for defect localization in Java programs: the AMPLE tool compares sequences of method calls issued by objects across passing and failing test cases. The class that shows the largest deviation in behavior between passing and failing test cases is suggested as a culprit. Hence, this violation does not imply a detailed fix, unlike the method proposed here.

Cluster analysis

Dickinson et al. [20] employed cluster analysis to separate normal program traces from traces triggering bugs. While this can capture a very wide range of behavioral patterns, cluster analysis has very little explanatory power, unlike the patterns and violations we propose here.

Mining correlations

Liblit et al. [21] mined violations in an abstract sense. They observed a statistical correlation of program failure with return values of functions (which are then used in control-flow statements) and predicates. This correlation has high explanatory power, but depends on a high number of varying program executions to develop a statistical notion of normal behavior.

Pairs of functions

Weimer and Necula [22] learn pairs of function calls (such as open/close) from program traces. They looked specifically for violations of these patterns in error-handling code that misses the call to the second function. They detected a considerable number of defects. Their paper is also remarkable for its comparison with other defect localization approaches. Conceptually, this approach learns purely structural patterns and does not depend on prior knowledge—like us. Unlike us, patterns are ordered pairs, whereas we consider unordered sets of any size.

Checking known patterns

Engler et al. [1] coined the slogan of bugs as deviant behavior and introduced a tool that searches for bug patterns. Each instance of such a pattern expresses an inconsistent view of the state of a program and hence a likely bug. The difference from our formalism is that the formalism of Engler et al. can detect only known patterns of inconsistency and cannot find new ones. On the other hand, searching for known patterns results in high precision.

Mining version history

Livshits and Zimmermann [23] mined patterns from the development history which they represented as a sequence of transactions that add new function calls. For each transaction, they mined (using frequent itemset mining) usage patterns of method calls being added together. These patterns are presented to the user for confirmation as being correct; on the basis of them, dynamic tests search for violations of these patterns. The static mining step for patterns is similar to our approach (and could have used it), whereas violation detection is done dynamically using program instrumentation and test cases. The detection of test cases is limited to pairs of functions, whereas we can detect violations of any pattern.

2.11.3 PR-Miner

pr-Miner by Li and Zhou [2] inspired us to propose concept analysis as a better foundation for their analysis that identifies purely structural sets of features and their violations. pr-Miner is based on frequent itemset mining, and mines closed feature sets. A violation (called a “rule”) is represented as an implication ![]() , where A and B are closed feature sets.

, where A and B are closed feature sets.

A closed itemset corresponds to a pattern in our formalism and also to a block, which has the additional benefit that it includes the instances of the pattern. A rule corresponds to neighboring blocks in our formalism, again with the benefit of also representing all instances, and thus making theory and implementation more uniform. The notion of confidence in both formalisms is equivalent.

The short characterization of pr-Miner above might suggest that blocks and formal concepts provide no added benefit. However, we argue that a precise understanding of what pr-Miner does is greatly enhanced by the theory of formal concept analysis. This seems evident both from our simpler and shorter explanation of mining, algorithms and from the discussion of the block hierarchy and its size. Combining patterns and instances into blocks gives access to a rich algebra and intuitive geometric interpretation which simply does not exist for closed itemsets in isolation.

Li and Zhou reported impressive performance numbers using an off-the-shelf implementation for frequent itemset mining. They clearly benefited from years of development of these tools in the data-mining community. However, we believe that the performance of Colibri/ML provides a viable alternative for practical problems.

pr-Miner implements some data flow analysis to minimize false positives. It is based on the insight that a pattern {a,b} might be implemented not just by calling a and b directly, but also by calling a and c, which in turn calls b. This analysis is independent of the mining, and can be implemented for either system. Indeed, what is expressed as a data flow analysis by Li and Zhou [2] can also be expressed as an operation on the input relation R as in Section 2.10.

pr-Miner analyzes variable declarations in addition to function calls. Again, this is not inherent to the mining, and can be implemented for any system by making these part of the input relation.

2.12 Conclusions

Formal concept analysis provides a practical and theoretical framework to identify structural patterns and violations in binary relations. The analysis assumes no a priori knowledge such as names or predefined patterns—unlike many previous approaches. Pattern violations have been shown to correlate with bugs in software systems. The main benefit over classical frequent itemset mining [24] is that blocks (or concepts) unify a pattern and its instances. Together they form a rich and well-studied algebra; furthermore, they offer a geometric interpretation which provides intuition: violations correspond to imperfect blocks in a cross table.

A relation (such as a call relation) induces a block hierarchy. Each block corresponds to a pattern, and neighboring blocks correspond either to independent patterns or a violation—depending on the associated confidence. This is the main conceptual result of this chapter.

Formal concept analysis gives us complexity results for pattern mining: the number of blocks (or patterns) induced by a relation may grow exponentially. This happens only for dense relations; call relations, at least, tend to be sparse. In addition, only a small fraction of blocks exceeding a minimum support are of interest and can be computed efficiently using an implementation that we provide [5].

Algorithms for formal concept analysis are practical although they lack the performance tuning that went into algorithms and implementations for frequent itemset mining [25]. We provide an open-source implementation, Colibri/ML, that was able to analyze the call relation of the Linux kernel within 1 min, and smaller systems such as the Python interpreter in under 20 s.

Earlier work on detecting anomalies often had a special focus on pairs of function calls [22, 26], rather than the more general patterns we studied. However, we found that most call patterns in open-source projects implemented in C have width between 2 and 3 (see Table 2.4). This is a posteriori a justification for the special interest in such pairs.

The starting point of our analysis is a binary relation. This implies that we analyze sets of features related to objects. This seeming limitation may be overcome using clever encodings, as demonstrated by Wasylkowski [9]. We are also encouraged by the success of code query languages such as CodeQuest [27], which represent software at their core using relations. By extending them with our analysis, we would be able to mine many more source code relations for patterns and violations.

Future work

Patterns and violations as we mine them do not capture intrinsically the notion of program execution. We wish to take advantage of this to provide better support of nonexecutable code. By this we mean configuration files for services such as e-mail, HTTP, or firewalls. They control security-critical applications, but almost no support for them exists beyond syntax checkers and syntax highlighting. Patterns can capture best practices that can be learned from existing configuration files. For example, a pattern may represent a combination of flags in a firewall rule. A system administrator could be warned before deploying an unusual flag combination in a firewall rule. We believe that this kind of support could be provided by editors on a routine basis as is done today for syntax highlighting.

All in all, we have shown that formal concept analysis provides an appealing theoretical and practical framework to identify structural patterns and their violations.