Data Transfer Patterns

In an ideal world, every time we send data from one place to another, we would include all the information required for the current activity, with no waste and no need for the recipient to come back with questions. Anyone who has worked technical support is familiar with the situation: it’s much easier to have the user explain the entire problem at once, with all the relevant detail, rather than have to ask a series of questions to determine whether the computer is on, if an error message was displayed, if the software was actually installed, and so forth. A single support person can handle more problems with fewer headaches.[1]

In Java terms, this ideal could involve transferring a single object containing a complete bug report, rather than transmitting several individual objects for each aspect of the report. Of course, we still have to deal with the trade-off between performance and flexibility. When objects interact with each other in a local JVM, the overhead of a method call is generally fairly small, while the overhead of additional objects, both in terms of developer time and runtime resources, can be fairly high. When dealing with a class representing a person, it may make more sense to include a set of address fields in the Person class itself, rather than creating a separate Address class to hold address information. The cost in code complexity of the additional fields may be balanced by the need to manage fewer objects at runtime.[2]

Once remote resources enter the picture, however, the rules change quickly. Just as with our technical support analogy, communications overhead becomes the chief bottleneck. If each remote call costs roughly 200 milliseconds, calling five methods on a remote EJB entity bean to retrieve a single address turns a 200-millisecond operation into a 1-second operation. There are real benefits if we can keep the number of calls down, even if it means we aren’t as purely object-oriented as we could be.

Network overhead isn’t the only bottleneck. In an EJB environment, the server might have to instantiate the bean from storage multiple times, either by passivating and activating it or by calling ejbLoad( ) again. This process creates a whole additional layer of activity that must finish before any data can be sent back over the network. While most servers will optimize against this possibility, excessive bean creation can degrade performance even over local interfaces. Web services face the same problem: the overhead of parsing each XML request can be substantial.

What’s the moral of the story? The more complex and distributed an application is, the more opportunities there are for performance to degrade. That’s the problem we try to solve in this chapter. The data transfer patterns in the next sections focus on improving performance by reducing the number of interactions between remote objects and their clients.

Data Transfer Objects

The Data Transfer Object (DTO) pattern is simple: rather than make multiple calls to retrieve a set of related data, we make a single call, retrieving a custom object that includes everything we need for the current transaction. When the presentation tier wants to update the business tier, it can pass the updated object back. The DTO becomes the charged interface between the presentation and business tiers, and between the various components of the business tier. We return to this concept in Chapters 8 and 9.

DTOs are primarily used to improve performance, but they have organizational advantages as well. Code that retrieves a single object representing, say, a customer record, is easier to understand and maintain than code that makes dozens of separate getter calls to retrieve names, addresses, order history, and so forth.

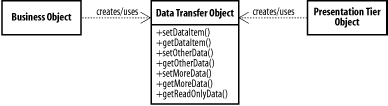

Figure 7-1 shows a potential class diagram for a DTO. The business and presentation tiers can create and manipulate DTOs, and share them with each other. In the class diagram, we’ve formatted the DTO as a JavaBean, but this isn’t a requirement.
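To make the call-count argument concrete, here is a minimal sketch that needs no EJB container. The CustomerService and CustomerDTO classes are hypothetical stand-ins, and the counter only imitates remote round trips; treat it as an illustration of the idea rather than code from the examples in this chapter.

```java
import java.io.Serializable;

// Hypothetical DTO carrying everything the caller needs in one object.
class CustomerDTO implements Serializable {
    String firstName;
    String lastName;
    String city;
}

// Stand-in for a remote business object; the counter mimics round trips.
class CustomerService {
    private int remoteCalls = 0;
    private final String firstName = "Ada";
    private final String lastName = "Lovelace";
    private final String city = "London";

    // Fine-grained accessors: each would be a separate network round trip.
    String getFirstName() { remoteCalls++; return firstName; }
    String getLastName()  { remoteCalls++; return lastName; }
    String getCity()      { remoteCalls++; return city; }

    // Coarse-grained accessor: one round trip returns the whole record.
    CustomerDTO getCustomerDTO() {
        remoteCalls++;
        CustomerDTO dto = new CustomerDTO();
        dto.firstName = firstName;
        dto.lastName = lastName;
        dto.city = city;
        return dto;
    }

    int getRemoteCalls() { return remoteCalls; }
}
```

Reading three fields through the fine-grained getters costs three simulated round trips, while getCustomerDTO() delivers the same data in one.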

Data objects as DTOs

The simplest data transfer objects are simply the data objects used within the business object itself. Let’s start by taking a look at a simple EJB that represents a Patient and associated data. The interface for the Patient bean is listed in Example 7-1. For the examples in this chapter, we’re using the same database schema we introduced in Chapter 6. In this case, we include information from the PATIENT and PATIENT_ADDRESS tables.

    import javax.ejb.EJBObject;
    import java.rmi.RemoteException;

    public interface Patient extends EJBObject {
        public String getFirstName( ) throws RemoteException;
        public void setFirstName(String firstName) throws RemoteException;

        public String getLastName( ) throws RemoteException;
        public void setLastName(String lastName) throws RemoteException;

        // Each address travels as a single Address object
        public Address getHomeAddress( ) throws RemoteException;
        public void setHomeAddress(Address addr) throws RemoteException;

        public Address getWorkAddress( ) throws RemoteException;
        public void setWorkAddress(Address addr) throws RemoteException;
    }

We also make an assumption that the data model doesn’t show explicitly: we’re interested in home and work addresses, specifically. In the database, we’ll identify these via the ADDRESS_LABEL field, which can contain “Home” or “Work” (our bean simply ignores any other values). The Address class itself is listed in Example 7-2. We’ve kept it simple, just using a single serializable object as a holder for a set of public fields.

    import java.io.Serializable;

    public class Address implements Serializable {
        public String addressType = null;
        public String address1 = null;
        public String address2 = null;
        public String city = null;
        public String province = null;
        public String postcode = null;
    }

We could also implement the Address object as a JavaBean, particularly if we need to perform checks before setting values. However, the approach of creating a set of public fields makes it easier to remember that this is merely a transient data object rather than something capable of performing business operations or keeping track of object state. Instead, we store changes to the address by passing a new Address object to the setHomeAddress( ) and setWorkAddress( ) methods.
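For comparison, a JavaBean-style holder that checks values before storing them might look like the following sketch. The AddressBean name, the reduced set of properties, and both validation rules are assumptions made for illustration; they are not part of the chapter's examples.

```java
import java.io.Serializable;

// Hypothetical JavaBean alternative to the public-field Address class.
class AddressBean implements Serializable {
    private String addressType;
    private String postcode;

    public String getAddressType() { return addressType; }

    // A setter is the natural place for a check before the value is stored.
    public void setAddressType(String addressType) {
        if (!"Home".equals(addressType) && !"Work".equals(addressType)) {
            throw new IllegalArgumentException("Unknown address type: " + addressType);
        }
        this.addressType = addressType;
    }

    public String getPostcode() { return postcode; }

    // Assumed rule for illustration: postcodes are stored trimmed, never blank.
    public void setPostcode(String postcode) {
        if (postcode == null || postcode.trim().isEmpty()) {
            throw new IllegalArgumentException("Postcode is required");
        }
        this.postcode = postcode.trim();
    }
}
```

The trade-off is the one described above: the checks add behavior to the object, which makes it look less like a passive carrier of data.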

Since we have the Address object, we’re going to use it internally, as well. There’s no sense reinventing the wheel, and leveraging the object in both places means that if we add another address field, it will automatically be included in both the main bean and the DTO. Sometimes, of course, the requirements of the DTO and the business object differ; in those cases, you should never try to shoehorn the DTO object into the business object’s internals, or vice versa.

Example 7-3 contains a partial listing of the PatientBean object that uses the Address data object, internally as well as for interacting with the outside world.

    import javax.ejb.*;
    import javax.naming.*;
    import java.sql.*;
    import javax.sql.*;

    public class PatientBean implements EntityBean {
        private EntityContext context;

        private Long pat_no = null;
        private String fname = null;
        private String lname = null;

        // The same Address holder is used internally and handed to clients
        private Address workAddress = new Address( );
        private Address homeAddress = new Address( );

        public Long ejbCreate(Long newId) {
            pat_no = newId;
            homeAddress.addressType = "Home";
            workAddress.addressType = "Work";
            return newId;
        }

        public void ejbPostCreate(Long newId) {
        }

        public Address getHomeAddress( ) {
            return homeAddress;
        }

        public void setHomeAddress(Address addr) {
            setAddress(homeAddress, addr);
        }

        public Address getWorkAddress( ) {
            return workAddress;
        }

        public void setWorkAddress(Address addr) {
            setAddress(workAddress, addr);
        }

        // Copy field values rather than keeping a reference to the caller's object
        private void setAddress(Address target, Address source) {
            target.address1 = source.address1;
            target.address2 = source.address2;
            target.city = source.city;
            target.province = source.province;
            target.postcode = source.postcode;
        }

        public Long ejbFindByPrimaryKey(Long primaryKey) throws FinderException {
            Connection con = null;
            PreparedStatement ps = null;
            ResultSet rs = null;

            try {
                con = getConnection( ); // local method for JNDI Lookup
                ps = con.prepareStatement("select pat_no from patient where pat_no = ?");
                ps.setLong(1, primaryKey.longValue( ));
                rs = ps.executeQuery( );
                if(!rs.next( ))
                    throw new ObjectNotFoundException("Patient does not exist");
            } catch (SQLException e) {
                throw new EJBException(e);
            } finally {
                try {
                    if(rs != null) rs.close( );
                    if(ps != null) ps.close( );
                    if(con != null) con.close( );
                } catch (SQLException e) {} // nothing useful to do if cleanup fails
            }

            // We found it, so return it
            return primaryKey;
        }

        public void ejbLoad( ) {
            Long load_pat_no = (Long)context.getPrimaryKey( );

            Connection con = null;
            PreparedStatement ps = null;
            ResultSet rs = null;

            try {
                con = getConnection( ); // local method for JNDI Lookup
                ps = con.prepareStatement("select * from patient where pat_no = ?");
                ps.setLong(1, load_pat_no.longValue( ));
                rs = ps.executeQuery( );

                if(!rs.next( ))
                    throw new EJBException("Unable to load patient information");

                pat_no = new Long(rs.getLong("pat_no"));
                fname = rs.getString("fname");
                lname = rs.getString("lname");

                // Close the result set before the statement that produced it
                rs.close( );
                ps.close( );

                ps = con.prepareStatement(
                    "select * from patient_address where pat_no = ? and address_label in " +
                    "('Home', 'Work')");

                ps.setLong(1, load_pat_no.longValue( ));
                rs = ps.executeQuery( );

                // Load any work or home
                while(rs.next( )) {
                    String addrType = rs.getString("ADDRESS_LABEL");
                    if("Home".equals(addrType)) {
                        // ejbCreate( ) is not called for existing rows, so set the label here too
                        homeAddress.addressType = addrType;
                        homeAddress.address1 = rs.getString("addr1");
                        homeAddress.address2 = rs.getString("addr2");
                        homeAddress.city = rs.getString("city");
                        homeAddress.province = rs.getString("province");
                        homeAddress.postcode = rs.getString("postcode");
                    } else if ("Work".equals(addrType)) {
                        workAddress.addressType = addrType;
                        workAddress.address1 = rs.getString("addr1");
                        workAddress.address2 = rs.getString("addr2");
                        workAddress.city = rs.getString("city");
                        workAddress.province = rs.getString("province");
                        workAddress.postcode = rs.getString("postcode");
                    }
                }
            } catch (SQLException e) {
                throw new EJBException(e);
            } finally {
                try {
                    if(rs != null) rs.close( );
                    if(ps != null) ps.close( );
                    if(con != null) con.close( );
                } catch (SQLException e) {} // nothing useful to do if cleanup fails
            }
        }

        // Remaining EJB methods go here
        ...
    }

The setWorkAddress( ) and setHomeAddress( ) methods explicitly update the currently stored addresses rather than simply storing the addresses passed in as parameters. This prevents any possibility of crossed-over references when dealing with EJB local instances, and also gives us a compile-time alert if the DTO data structure changes dramatically, which would affect the persistence code as well.
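The crossed-over reference problem is easy to reproduce outside a container. The sketch below uses hypothetical Addr and AddressHolder classes (stand-ins, not the chapter's classes) to contrast storing the caller's object with copying its fields, which is what a setter that copies values does.

```java
// Minimal stand-in for an address holder with public fields.
class Addr {
    String city;
}

// Hypothetical holder contrasting the two setter strategies.
class AddressHolder {
    private Addr byReference = new Addr();
    private final Addr byCopy = new Addr();

    // Stores the caller's object: caller and holder now share state.
    void setByReference(Addr addr) { byReference = addr; }

    // Copies field values: the holder keeps its own independent object.
    void setByCopy(Addr addr) { byCopy.city = addr.city; }

    String referenceCity() { return byReference.city; }
    String copyCity() { return byCopy.city; }
}
```

If a caller passes an Addr with city "Boston" to both setters and later changes its own object to "Chicago", referenceCity() reports the change while copyCity() still returns "Boston". With pass-by-reference local interfaces, only the copying version keeps the bean's state under the bean's control.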

Dedicated data transfer objects

In addition to using data objects as DTOs, we can create more complex DTO objects that effectively act as façades for the entity they’re associated with. Example 7-4 shows the PatientDTO object, which can contain a complete set of information regarding a patient, including their unique identifier (the primary key for the EJB entity bean).

    import java.util.ArrayList;
    import java.io.Serializable;

    public class PatientDTO implements Serializable {
        public long pat_no = -1;
        public String fname = null;
        public String lname = null;
        public ArrayList addresses = new ArrayList( ); // holds Address objects
    }

Since this is a dedicated data transfer object, we’ve named it PatientDTO. We didn’t use this convention with the Address object, since that was used for both exchanging data and managing it internally within the EJB. Of course, we could rewrite the EJB to use the PatientDTO object internally; but if we don’t, we achieve some decoupling that allows us to extend the PatientBean later on while maintaining compatibility with presentation tier components that use the original PatientDTO. When adding new features to the Patient object, we might create an ExtendedPatientDTO object, or use the Data Transfer Hash pattern (discussed below).

Adding support for the full DTO is simple, requiring the following method for the PatientBean object, and the corresponding signature in the bean interface definition:

    public PatientDTO getPatientDTO( ) {
        PatientDTO pat = new PatientDTO( );
        pat.pat_no = pat_no.longValue( );
        pat.fname = fname;
        pat.lname = lname;
        pat.addresses.add(homeAddress);
        pat.addresses.add(workAddress);
        return pat;
    }

Data Transfer Hash Pattern

Sometimes the data transferred between tiers isn’t known ahead of time, and as a result, it can’t be stored in a JavaBean, since we need to define the JavaBean ahead of time. Sometimes the server half of a client/server application is upgraded, adding support for new data items, but some of the clients aren’t. And sometimes an application changes frequently in the course of development. In these situations, it’s helpful to have a DTO approach that doesn’t tie the client and the server into using the same version of a DTO class, and doesn’t require changing class definitions to send more (or less) data.

The Data Transfer Hash pattern defines a special type of DTO that addresses these issues. Rather than defining a specific object to hold summary information for a particular entity or action, a data transfer hash uses a Hashtable or HashMap object (generally the latter, to avoid unnecessary thread synchronization overhead) to exchange data with the client tier. This is accomplished by defining a known set of keys to identify each piece of data in the hash. Good practice here is to use a consistent, hierarchical naming scheme. For the Patient object, we might have patient.address.home.addr1, patient.address.work.addr1, patient.firstname, patient.lastname, and so forth.

Using hash maps for transferring data makes it very easy to change data formats, since in some cases you might not even have to recompile (for example, if your hash map generation code builds the map based on dynamic examination of a JDBC result set). It also makes it easier to soft-upgrade an application, adding more data, which can then be safely ignored by clients that don’t support it.
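As a rough illustration of the soft-upgrade idea, the sketch below fills a HashMap with hierarchical keys of the kind suggested above and then reads it the way an older client would. The PatientHashDemo class, its method names, and the sample values are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

class PatientHashDemo {
    // Server side: build the hash using the agreed hierarchical keys.
    static Map<String, Object> buildPatientHash() {
        Map<String, Object> hash = new HashMap<String, Object>();
        hash.put("patient.firstname", "Jane");
        hash.put("patient.lastname", "Doe");
        hash.put("patient.address.home.addr1", "12 Elm Street");
        // Imagined as added in a later server release; older clients never ask for it.
        hash.put("patient.address.work.addr1", "1 Hospital Drive");
        return hash;
    }

    // An older client reads only the keys it knows and ignores the rest.
    static String formatName(Map<String, Object> hash) {
        return hash.get("patient.lastname") + ", " + hash.get("patient.firstname");
    }
}
```

The client code compiles and runs unchanged whether or not the work address key is present, which is the property a fixed DTO class cannot offer without a matching class definition on both sides.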

On the other hand, a data transfer hash makes it difficult to identify what kind of data you’re dealing with. It also makes compile-time type checking impossible, since a HashMap stores undifferentiated objects. This can get messy with nonstring data. And since data structures can’t be verified at compile time, there must be close communication between developers working on the business and presentation tiers.

We can get around the first drawback by encapsulating a HashMap in a container object, rather than using it directly. This step allows us to identify a hash table instance as a data transfer hash, and also lets us tack on some helpful identifying information about the data we’re exchanging. Example 7-5 shows a simple version, which allows us to specify an arbitrary map type along with the data. We call this a named HashMap.
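A container along these lines could be sketched as follows. This is an illustrative sketch under stated assumptions, not the book's listing: the class name NamedHashMap, the type label passed to the constructor, and the getString( ) helper are all hypothetical.

```java
import java.io.Serializable;
import java.util.HashMap;

// Hypothetical container: a HashMap tagged with the kind of data it carries.
class NamedHashMap implements Serializable {
    private final String type;
    private final HashMap<String, Object> data = new HashMap<String, Object>();

    NamedHashMap(String type) { this.type = type; }

    // Lets the receiver tell what kind of data transfer hash it was handed.
    String getType() { return type; }

    void put(String key, Object value) { data.put(key, value); }

    Object get(String key) { return data.get(key); }

    // Typed accessor: fails with a descriptive message rather than a bare ClassCastException.
    String getString(String key) {
        Object value = data.get(key);
        if (value != null && !(value instanceof String)) {
            throw new IllegalStateException(key + " holds a " + value.getClass().getName());
        }
        return (String) value;
    }
}
```

Wrapping the map also gives a place for typed accessors such as getString( ), which recover a little of the checking that a plain HashMap gives up, although only at runtime.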

Row Set DTOs

The DTO types we’ve looked at up to this point have focused on single objects, or single objects with a set of dependent objects. However, many applications frequently need to transfer large sets of relatively simple data. This data may not warrant a specific object wrapper, or the exact data structure may not be known ahead of time. And sometimes it’s simpler to work with a set of rows and columns than with an object, particularly when the task at hand involves presenting the data in rows and columns, anyway—a frequent activity on the Web.

The data for this kind of row-centric activity generally comes out of a database, and the native format of the database is row-column based. In these situations, we can build a DTO directly out of a JDBC result set without paying much attention to the data content itself. The DTO can then be sent to the ultimate consumer.

One approach to building a Row Set DTO is to use an object implementing the JDBC 2.0 RowSet interface. Since row sets in this context need to be disconnected from their original source, this approach means using a RowSet implementation that completely encapsulates all the rows in the result set. Obviously, if there are several thousand rows in the set, this approach can get very expensive.

There are two advantages to building a Row Set DTO using a RowSet interface. First, an implementation (WebRowSet) is readily available from Sun, although it isn’t part of the standard JDBC distribution. Second, there are a lot of presentation tier components, such as grid controls, written to work with RowSets, so if your presentation tier (or one of your presentation tiers) is a rich client, you can plug the results right in.

The problem with the RowSet interface is that it can get a bit heavy. It supports full updating and navigation, as well as field-by-field accessor methods. As a result, the implementation is fairly complicated and resource-intensive. If you don’t need the full capabilities of RowSet, a more lightweight implementation makes sense. Example 7-6 shows a lightweight version of RowSet that preserves the data-independent nature of the original.

    import java.sql.*;
    import java.util.*;

    /**
     * Provide a lightweight wrapper for a set of rows. Preserve types as best
     * as possible.
     */
    public class LightRowSet implements java.io.Serializable {
        ArrayList rows = null;
        int colCount = 0;
        String[] colNames = null;

        public LightRowSet(ResultSet rs) throws SQLException {
            rows = new ArrayList( );

            if (rs == null) {
                throw new SQLException("No ResultSet Provided");
            }

            ResultSetMetaData rsmd = rs.getMetaData( );
            colCount = rsmd.getColumnCount( );
            colNames = new String[colCount];

            for (int i = 0; i < colCount; i++) {
                // getColumnLabel( ) returns the AS alias when the query supplies
                // one, and otherwise the same value as getColumnName( )
                colNames[i] = rsmd.getColumnLabel(i + 1);
            }

            // Copy every row into memory so the set can outlive the connection
            while (rs.next( )) {
                Object[] row = new Object[colCount];
                for (int i = 0; i < colCount; i++)
                    row[i] = rs.getObject(i + 1);
                rows.add(row);
            }

            rs.close( );
        }

        /** Return the column names in this row set, in indexed order */
        public String[] getColumnNames( ) {
            return colNames;
        }

        /**
         * Return an iterator over all of the rows
         */
        public Iterator getRows( ) {
            return rows.iterator( );
        }

        /**
         * Return a particular row, indexed from 1..n. Return null if the row
         * isn't found.
         */
        public Object[] getRow(int index) {
            try {
                return (Object[]) rows.get(index - 1);
            } catch (IndexOutOfBoundsException ioobe) {
                // ArrayList.get( ) reports a bad index with IndexOutOfBoundsException
                return null;
            }
        }

        public int getRowCount( ) {
            return rows.size( );
        }
    }

The implementation of LightRowSet is very simple: it accepts a JDBC ResultSet from an external source and unpacks the data into a set of arrays. It uses the ResultSetMetaData interface to retrieve the column count and column names, and makes the header information, as well as the data, available via a set of getter methods. The getRow( ) method conforms to the SQL style of numbering rows from 1 to n (rather than Java’s 0 to n-1); the Object[] returned for each row is an ordinary Java array, so its columns are indexed from 0.
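On the consuming side, a presentation component only needs the column names and the row iterator. The sketch below is a hypothetical RowRenderer (not part of the chapter's code) that accepts the same shapes a lightweight row set's getters hand out, a String[] of column names and an Iterator over Object[] rows, and formats them as delimited text. It is written against plain arrays so that it can be tried without a database.

```java
import java.util.Iterator;

class RowRenderer {
    // colNames: header labels; rows: one Object[] per row, columns indexed from 0.
    static String toText(String[] colNames, Iterator<Object[]> rows) {
        StringBuilder out = new StringBuilder();
        out.append(String.join(" | ", colNames)).append('\n');
        while (rows.hasNext()) {
            Object[] row = rows.next();
            for (int i = 0; i < row.length; i++) {
                if (i > 0) out.append(" | ");
                out.append(row[i]); // a null column is printed as "null"
            }
            out.append('\n');
        }
        return out.toString();
    }
}
```

Because the renderer never inspects the column types, the same method works for any query result. Passing a raw Iterator, such as one returned by a pre-generics class, compiles with an unchecked warning.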

In practice, using the LightRowSet is easy. Here’s the signature of an EJB session bean that uses it to return staff directory information:

    import javax.ejb.EJBObject;
    import java.rmi.RemoteException;
    import com.oreilly.j2eepatterns.chapter7.LightRowSet;

    public interface StaffDirectory extends EJBObject {
        LightRowSet getDirectory(String department) throws RemoteException;
    }

And here’s a simple implementation of getDirectory( ):

    public LightRowSet getDirectory(String department) {
        // Declared outside the try block so the finally clause can see them
        Connection con = null;
        PreparedStatement ps = null;
        ResultSet rs = null;
        try {
            con = getConnection( );
            // Bind the department as a parameter instead of concatenating it
            // into the SQL string
            ps = con.prepareStatement("SELECT * FROM STAFF WHERE DEPT = ?");
            ps.setString(1, department);
            rs = ps.executeQuery( );
            return new LightRowSet(rs); // copies all rows into memory
        } catch (SQLException e) {
            e.printStackTrace( );
        } finally {
            try { if (rs != null) rs.close( ); } catch (SQLException ignored) {}
            try { if (ps != null) ps.close( ); } catch (SQLException ignored) {}
            try { if (con != null) con.close( ); } catch (SQLException ignored) {}
        }
        return null; // reached only if the query failed
    }

One drawback to the RowSet approach is that it exposes the underlying names of the columns in the table. Database columns aren’t always appropriate for exposure to the outside world; they tend to be cryptic at best, and downright misleading at worst. A simple way around this problem is to assign meaningful column aliases to each column in the query. Rather than selecting everything from the staff table, we might prefer to do this instead:

    SELECT FNAME AS FIRSTNAME, LNAME AS LASTNAME, REZPRIADDR AS ADDRESS,
           TRIM(CITY) AS CITY
    FROM STAFF WHERE DEPT = 'Oncology'

Writing SQL like this ensures that we have meaningful names for each column in the set. It also assigns a reasonable name to a result field that was generated by a database-side operation. If we didn’t do this, the database would have to generate its own name for the trimmed CITY column.