APPENDIX B

SOLUTION OF EQUATIONS BY MATRIX METHODS

B.1 INTRODUCTION

As stated in Appendix A, an advantage offered by matrix algebra is its adaptability to computer usage. Using matrix algebra, large systems of simultaneous linear equations can be programmed for general computer solution using only a few systematic steps. For example, the simplicity of programming matrix addition and multiplication was presented in Section A.9. To solve a system of equations using matrix methods, it is first necessary to define and compute the inverse matrix.

B.2 INVERSE MATRIX

If a square matrix is nonsingular (its determinant is not zero), it possesses an inverse matrix. When a system of simultaneous linear equations consisting of n equations and involving n unknowns is expressed as AX = B, the coefficient matrix (A) is a square matrix of dimensions n × n. Consider this system of linear equations

The inverse of matrix A, symbolized as A−1, is defined as

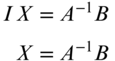

where I is the identity matrix. Premultiplying both sides of matrix Equation (B.1) by A−1 gives

Reducing yields

Thus, the inverse is used to find the matrix of unknowns, X. The following points should be emphasized regarding matrix inversions:

- Square matrices have inverses, with the exception noted below.

- When the determinant of a matrix is zero, the matrix is said to be singular and its inverse cannot be found.

- The inversion of even a small matrix is a tedious and time-consuming operation when done by hand. However, when done by a computer, the inverse can be found quickly and easily.
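Written out, the steps of this section read as follows. This is a sketch that assumes Equation (B.1) is the system AX = B and Equation (B.2) is the definition of the inverse, A−1A = I, as the surrounding text indicates; the equation displays themselves are not reproduced here.

```latex
\begin{align*}
AX &= B \\
A^{-1}AX &= A^{-1}B \\
IX &= A^{-1}B \\
X &= A^{-1}B
\end{align*}
```

The order of the factors matters: both sides are premultiplied by A−1 because matrix multiplication is not commutative.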

B.3 THE INVERSE OF A 2 × 2 MATRIX

Several general methods are available to find a matrix inverse. Two shall be considered herein. However, before proceeding with the general cases, consider the specific case of finding the inverse of a 2 × 2 matrix using elementary matrix operations. Let any 2 × 2 matrix be symbolized as A. Also, let

By applying Equation (B.2) and recalling the definition of an identity matrix I as given in Section A.4, it is possible to calculate the elements w, x, y, and z of A−1 in terms of the elements a, b, c, and d of A. Substituting in the appropriate values gives

By matrix multiplication

The determinant of A is equal to ad − bc.

Thus, for any 2 × 2 matrix composed of the elements a, b, c, and d, its inverse is simply
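Assuming A has first row (a, b) and second row (c, d), which is consistent with the determinant ad − bc, the standard result is that A−1 has first row (d, −b) and second row (−c, a), each divided by ad − bc. The following is a minimal Python sketch of that formula. The function name `inverse_2x2` and the sample values are illustrative assumptions, not taken from the text; exact fractions are used so the check against the identity matrix is not affected by rounding.

```python
from fractions import Fraction


def inverse_2x2(a, b, c, d):
    """Invert the matrix [[a, b], [c, d]] using its determinant ad - bc."""
    det = Fraction(a) * d - Fraction(b) * c
    if det == 0:
        raise ValueError("matrix is singular (ad - bc = 0); no inverse exists")
    # Swap the diagonal elements, negate the off-diagonal ones, divide by det.
    return [[d / det, -b / det], [-c / det, a / det]]


def matmul(p, q):
    """Plain row-by-column matrix product for small lists of lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*q)] for row in p]


# Hypothetical sample matrix, chosen only for illustration.
A = [[4, 3], [3, 4]]
A_inv = inverse_2x2(4, 3, 3, 4)

# Check against the definition of the inverse: A^-1 A must equal I.
check = matmul(A_inv, A)
```

For this sample the determinant is 7, and `check` equals the 2 × 2 identity matrix. A matrix with ad − bc = 0, such as one whose second row is a multiple of the first, raises the error instead.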

B.4 INVERSES BY ADJOINTS

The inverse of A can be found using the method of adjoints with the following equation:

The adjoint of A is obtained by first replacing each matrix element by its signed minor or cofactor, and then transposing the resultant matrix. The cofactor of element aij equals (−1)^(i+j) times the numerical value of the determinant for the remaining elements after row i and column j have been removed from the matrix. This procedure is illustrated in Figure B.1 where the cofactor of a12 is

FIGURE B.1 Cofactor of the a12 element.

Using this procedure, the inverse of the following A matrix is found:

For this A matrix, the cofactors are calculated as follows:

Following the procedure above, the matrix of cofactors is

Transposing this cofactor matrix produces the following adjoint of A:

The determinant of A is the sum of the products of the elements in the first row of the original matrix times their respective cofactors. Since the cofactors were already obtained in the previous step, this simplifies to

The inverse of A is now calculated as

Again, a check on the arithmetical work is obtained by using the definition of an inverse:
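The method of adjoints can be sketched in Python as follows. The matrix displays are not reproduced in this section, so the 3 × 3 matrix `A` below is an assumption: it was chosen to be consistent with the element values quoted in Section B.5 (a11 = 4, a21 = 3, a31 = 2, and the later pivots 4/7 and 7/24). All function names are illustrative. The sketch expects integer elements and is meant for small matrices only, since cofactor expansion becomes very slow as n grows.

```python
from fractions import Fraction


def minor(m, i, j):
    """Return m with row i and column j removed."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(m) if k != i]


def det(m):
    """Determinant by cofactor expansion along the first row."""
    if not m:
        return 1  # determinant of the empty matrix, used as the base case
    return sum((-1) ** j * m[0][j] * det(minor(m, 0, j)) for j in range(len(m)))


def cofactor_matrix(m):
    """Replace each element by its signed minor, (-1)^(i+j) times det(minor)."""
    n = len(m)
    return [[(-1) ** (i + j) * det(minor(m, i, j)) for j in range(n)]
            for i in range(n)]


def adjoint(m):
    """Transpose of the cofactor matrix."""
    return [list(col) for col in zip(*cofactor_matrix(m))]


def inverse_by_adjoint(m):
    """Divide every element of the adjoint by the determinant."""
    d = det(m)
    if d == 0:
        raise ValueError("matrix is singular; its inverse cannot be found")
    return [[Fraction(x, d) for x in row] for row in adjoint(m)]


def matmul(p, q):
    """Plain row-by-column matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*q)] for row in p]


# Assumed example matrix (see the note above).
A = [[4, 3, 2], [3, 4, 1], [2, 3, 4]]
A_inv = inverse_by_adjoint(A)

# Arithmetic check using the definition of an inverse: A^-1 A = I.
identity_check = matmul(A_inv, A)
```

For this assumed matrix the determinant is 24, so every element of the inverse is an element of the adjoint divided by 24, and `identity_check` is the 3 × 3 identity matrix.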

B.5 INVERSES BY ELEMENTARY ROW TRANSFORMATIONS

A system of equations can be modified using the following three steps without changing its solution:

- The multiplication of every element in any row by a nonzero scalar. This simply scales the equation. Thus, if the original equation is 2x = 4 and it is scaled by three, the resultant equation 6x = 12 still has the same solution, x = 2.

- The addition (or subtraction) of the elements in any row to the elements of any other row.

- Combinations of the two operations above.
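The claim that these operations leave the solution unchanged can be checked numerically. The sketch below is illustrative only: the two-equation system (2x + y = 5 and x − y = 1, with solution x = 2, y = 1) and the helper names are assumptions made for this example. Each row of `aug` holds the coefficients of one equation followed by its right-hand side.

```python
def scale_row(aug, i, k):
    """Multiply every element of row i by the nonzero scalar k."""
    if k == 0:
        raise ValueError("scalar must be nonzero")
    aug[i] = [k * v for v in aug[i]]


def add_row(aug, src, dst, k=1):
    """Add k times row src to row dst (k = -1 subtracts row src)."""
    aug[dst] = [d + k * s for s, d in zip(aug[src], aug[dst])]


def satisfies(aug, x):
    """True if x solves every equation in the augmented matrix."""
    return all(sum(c * xi for c, xi in zip(row[:-1], x)) == row[-1]
               for row in aug)


# Hypothetical system: 2x + y = 5 and x - y = 1.
aug = [[2, 1, 5], [1, -1, 1]]
solution = [2, 1]

before = satisfies(aug, solution)
scale_row(aug, 0, 3)      # first equation becomes 6x + 3y = 15
add_row(aug, 0, 1)        # second equation becomes 7x + 2y = 16
after = satisfies(aug, solution)
```

Both `before` and `after` are true: the modified equations look different but are satisfied by the same x and y.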

If elementary row transformations are successively performed on A such that A is transformed into I, and if throughout the procedure the same row transformations are also done to the same rows of the identity matrix I, the I matrix will be transformed into A−1. This procedure is illustrated using the same matrix used to demonstrate the method of adjoints.

Initially, the original matrix and the identity matrix are listed side by side:

With the following three row transformations performed on A and I, they are transformed into matrices A1 and I1, respectively:

- Multiply row 1 of matrices A and I by 1/a11 or 1/4. Place the results in row 1 of A1 and I1, respectively. This converts a11 of matrix A1 to 1, as shown below.

- Multiply row 1 of matrices A1 and I1 by a21 or 3. Subtract the resulting row from row 2 of matrices A and I and place the difference in row 2 of A1 and I1, respectively. This converts a21 of A1 to zero.

- Multiply row 1 of matrices A1 and I1 by a31 or 2. Subtract the resulting row from row 3 of matrices A and I and place the difference in row 3 of A1 and I1, respectively. This changes a31 of A1 to zero.

After doing these operations, the transformed matrices A1 and I1 are

Notice that the first column of A1 is equivalent to the first column of a 3 × 3 identity matrix as a result of these three row transformations. For matrices having more than three rows, this same general procedure would be followed for each row to convert the first element in each row of A1 to zero, with the exception of the first row.

Next, the following three elementary row transformations are done on matrices A1 and I1 to transform them into matrices A2 and I2:

- Multiply row 2 of A1 and I1 by 1/a22 or 4/7 and place the results in row 2 of A2 and I2. This converts a22 to 1, as shown below.

- Multiply row 2 of A2 and I2 by a12 or 3/4. Subtract the resulting row from row 1 of A1 and I1 and place the difference in row 1 of A2 and I2, respectively.

- Multiply row 2 of A2 and I2 by a32, which is 3/2. Subtract the resulting row from row 3 of A1 and I1 and place the difference in row 3 of A2 and I2, respectively.

After doing these operations, the transformed matrices A2 and I2 are:

Notice that after this second series of steps is completed, the second column of A2 conforms to column two of a 3 × 3 identity matrix. Again, for matrices having more than three rows, this same general procedure would be followed for each row, to convert the second element in each row (except the second row) of A2 to zero.

Finally, the following three row transformations are applied to matrices A2 and I2 to transform them into matrices A3 and I3.

- Multiply row 3 of A2 and I2 by 1/a33 or 7/24 and place the results in row 3 of A3 and I3, respectively. This converts a33 to 1, as shown below.

- Multiply row 3 of A3 and I3 by a13 or 5/7. Subtract the results from row 1 of A2 and I2 and place the difference in row 1 of A3 and I3, respectively.

- Multiply row 3 of A3 and I3 by a23 or −2/7. Subtract the results from row 2 of A2 and I2 and place the difference in row 2 of A3 and I3, respectively.

Following these operations, the transformed matrices A3 and I3 are:

Notice that through these nine elementary row transformations, the original A matrix is transformed into the identity matrix and the original identity matrix is transformed into A−1, which can be verified by multiplying it by the A matrix. Also note that A−1 obtained by this method agrees exactly with the inverse obtained by the method of adjoints. This is because any nonsingular matrix has a unique inverse.
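The nine row transformations can be traced with a short Python sketch. Because the matrix displays are not reproduced in this section, the matrix `A` below is an assumption, chosen so that it reproduces the values quoted in the steps above (1/a11 = 1/4, a21 = 3, a31 = 2, 1/a22 = 4/7, 1/a33 = 7/24). The names `reduce_column` and `stages` are illustrative. Exact fractions are used so the intermediate matrices can be compared directly with the hand computation.

```python
from fractions import Fraction


def reduce_column(a, inv, k):
    """Turn column k of a into column k of the identity matrix,
    applying the same row transformations to inv."""
    pivot = a[k][k]
    if pivot == 0:
        raise ValueError("zero pivot; this simple sketch does not reorder rows")
    # Scale row k so that its diagonal element becomes 1.
    a[k] = [v / pivot for v in a[k]]
    inv[k] = [v / pivot for v in inv[k]]
    # Subtract a multiple of row k from every other row to zero out column k.
    for i in range(len(a)):
        if i != k:
            factor = a[i][k]
            a[i] = [v - factor * p for v, p in zip(a[i], a[k])]
            inv[i] = [v - factor * p for v, p in zip(inv[i], inv[k])]


# Assumed example matrix (see the note above) and a matching identity matrix.
A = [[Fraction(v) for v in row] for row in [[4, 3, 2], [3, 4, 1], [2, 3, 4]]]
inv = [[Fraction(int(i == j)) for j in range(3)] for i in range(3)]

# stages[0] holds (A1, I1), stages[1] holds (A2, I2), stages[2] holds (A3, I3).
stages = []
for k in range(3):
    reduce_column(A, inv, k)
    stages.append(([row[:] for row in A], [row[:] for row in inv]))
```

After the loop, `A` is the identity matrix and `inv` holds the inverse. For this assumed matrix, A2 contains 5/7 and −2/7 in its third column and 24/7 on the diagonal, which matches the multipliers quoted in the final three steps.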

The quantity of work involved in inverting matrices increases greatly with matrix size, since the number of necessary row transformations is equal to the square of the number of rows or columns. Because of this, it is not considered practical to invert large matrices by hand. This work is more conveniently done with a computer. Since the procedure of elementary row transformations is systematic, it is easily programmed.

TABLE B.1 Inverse Algorithm in BASIC, C, FORTRAN, and PASCAL

[Code listings: BASIC Language, FORTRAN Language, C Language, Pascal Language]

Table B.1 shows algorithms, written in BASIC, C, FORTRAN, and Pascal programming languages, for calculating the inverse of any n × n nonsingular matrix A. Students should review the code in their preferred language to gain familiarity with the computer procedures.
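For readers working in a language other than those in Table B.1, the same row-transformation procedure can be sketched in Python. This is not a transcription of the Table B.1 listings. It works on the augmented matrix [A | I] in floating point, and it adds one step that the text does not describe: a row interchange (partial pivoting) that moves the largest available element into the pivot position, which also gives a simple way to report a singular matrix. The names `invert` and `solve` and the absolute tolerance `tol` are assumptions made for this example.

```python
def invert(matrix, tol=1e-12):
    """Return the inverse of a square matrix by Gauss-Jordan reduction of [A | I]."""
    n = len(matrix)
    if any(len(row) != n for row in matrix):
        raise ValueError("matrix must be square")
    # Build the augmented matrix [A | I] as floats.
    aug = [[float(v) for v in row] + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for k in range(n):
        # Row interchange: bring the largest remaining element of column k
        # into the pivot position (an addition to the procedure in the text).
        p = max(range(k, n), key=lambda r: abs(aug[r][k]))
        if abs(aug[p][k]) < tol:
            raise ValueError("matrix is singular to working precision")
        aug[k], aug[p] = aug[p], aug[k]
        # Scale the pivot row, then clear column k in every other row.
        pivot = aug[k][k]
        aug[k] = [v / pivot for v in aug[k]]
        for i in range(n):
            if i != k:
                factor = aug[i][k]
                aug[i] = [v - factor * w for v, w in zip(aug[i], aug[k])]
    # The right half of the reduced augmented matrix is the inverse.
    return [row[n:] for row in aug]


def solve(a, b):
    """Solve AX = B as X = A^-1 B for a single right-hand-side vector b."""
    a_inv = invert(a)
    return [sum(r * v for r, v in zip(row, b)) for row in a_inv]


# Hypothetical system whose exact solution is x = (1, 2, 3).
x = solve([[4, 3, 2], [3, 4, 1], [2, 3, 4]], [16, 14, 20])
```

Because the arithmetic is in floating point, `x` agrees with (1, 2, 3) only to within rounding. The tolerance is absolute, so it would need adjusting for matrices whose elements are very large or very small.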

B.6 EXAMPLE PROBLEM

PROBLEMS

- B.1 Explain when a 2 × 2 matrix has no inverse.

- B.2 Find the inverse of A using the method of adjoints

- B.3 Find the inverse of A in Problem B.2 using elementary row transformations.

- B.4 Solve the following system of linear equations using matrix methods.

- B.5 Solve the following system of linear equations using matrix methods.

- B.6 Compute the inverses of the following matrices.

- B.7 Compute the inverses of the following matrices.

- B.8 Compute the inverses of the following matrices.

- B.9 Solve the following matrix system.

Use the MATRIX software to do each problem.

- B.10 Problem B.4.

- B.11 Problem B.5.

- B.12 Problem B.6.

- B.13 Problem B.7.

- B.14 Problem B.8.

- B.15 Problem B.9.

PROGRAMMING PROBLEMS

- B.16 Select one of the coded matrix inverse routines from Table B.1, enter the code into a computer and use it to solve Problem B.7. (Hint: Place the code in Table B.1 in a separate subroutine/function/procedure to be called from the main program.)

- B.17 Add a block of code to the inverse routine in the language of your choice that will inform the user when a matrix is singular.

- B.18 Write a program that reads a file containing a nonsingular matrix, finds its inverse, and writes the results. Use this program to solve Problem B.7. (Hint: Place the reading, writing, and inversing code in separate subroutines/functions/procedures to be called from the main program. Provide a way to identify each matrix in the output file.)

- B.19 Write a program that reads and writes a file containing a system of equations written in matrix form, solves the system using matrix operations, and writes the solution. Use this program to solve Problem B.9. (Hint: Place the reading, writing, and inversing code in separate subroutines/functions/procedures to be called from the main program. Provide a way to identify each matrix in the output file.)