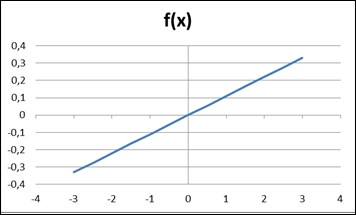

Let's work on a very simple yet illustrative example. Suppose you want a single-neuron neural network to learn how to fit a simple linear function such as the following:

$$f(x) = 0.11x$$

This is quite easy even for those who have little math background, so guess what? It is a nice start for our simplest neural network to prove its learning ability!

We're going to structure the dataset for the neural network to learn using the following code, which you can find in the main method of the file NeuralNetDeltaRuleTest:

Double[][] _neuralDataSet = {

{1.2 , fncTest(1.2)}

, {0.3 , fncTest(0.3)}

, {-0.5 , fncTest(-0.5)}

, {-2.3 , fncTest(-2.3)}

, {1.7 , fncTest(1.7)}

, {-0.1 , fncTest(-0.1)}

, {-2.7 , fncTest(-2.7)} };

int[] inputColumns = {0};

int[] outputColumns = {1};

NeuralDataSet neuralDataSet = new NeuralDataSet(_neuralDataSet,inputColumns,outputColumns);

The fncTest function is defined as the function we mentioned:

public static double fncTest(double x){

return 0.11*x;

}

Note that we're using the class NeuralDataSet to structure all this data so that it is fed into the neural network in the right way. Now let's link this dataset to the neural network. Remember that this network has a single neuron in the output. Let's use a nonlinear activation function such as the hyperbolic tangent at the output, with a coefficient of 0.85:

int numberOfInputs=1;

int numberOfOutputs=1;

HyperTan htAcFnc = new HyperTan(0.85);

NeuralNet nn = new NeuralNet(numberOfInputs,numberOfOutputs,htAcFnc);

Let's now instantiate the DeltaRule object and link it to the neural network we created. Then we'll set the learning parameters, such as the learning rate, minimum overall error, and maximum number of epochs:

DeltaRule deltaRule=new DeltaRule(nn,neuralDataSet,LearningAlgorithm.LearningMode.ONLINE);

deltaRule.printTraining=true;

deltaRule.setLearningRate(0.3);

deltaRule.setMaxEpochs(1000);

deltaRule.setMinOverallError(0.00001);
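To make the role of the learning rate more concrete, here is a minimal sketch of what a single online delta rule update could look like for one neuron. It does not use the DeltaRule or HyperTan classes from this chapter. The class DeltaRuleStep, its method names, the starting weight of 0.5, and the activation form tanh(a * net) are all assumptions made for illustration, and the actual classes may be implemented differently.

```java
class DeltaRuleStep {

    // Assumed activation: tanh(a * net). The HyperTan class used in the
    // text may define the hyperbolic tangent with a different scaling.
    static double activate(double a, double net) {
        return Math.tanh(a * net);
    }

    // Derivative of tanh(a * net) with respect to net.
    static double derivative(double a, double net) {
        double y = Math.tanh(a * net);
        return a * (1.0 - y * y);
    }

    // Applies one online update in place; params holds {weight, bias}.
    // Returns the error (target - output) measured before the update.
    static double update(double[] params, double a, double eta,
                         double x, double target) {
        double net = params[0] * x + params[1];
        double error = target - activate(a, net);
        double delta = eta * error * derivative(a, net);
        params[0] += delta * x; // the weight sees the input x
        params[1] += delta;     // the bias sees a constant input of 1
        return error;
    }

    public static void main(String[] args) {
        double[] params = {0.5, 0.0}; // hypothetical starting weight and bias
        double x = 1.2;
        double target = 0.11 * x;
        double before = update(params, 0.85, 0.3, x, target);
        double after = target - activate(0.85, params[0] * x + params[1]);
        System.out.println("Error before update: " + before);
        System.out.println("Error after update: " + after);
    }
}
```

A larger learning rate makes each correction bigger, which speeds up learning but can overshoot the target. That is why the rate is exposed as a parameter.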

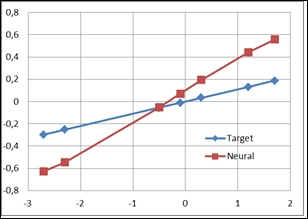

Now let's see the first neural output of the untrained neural network, after calling the method forward() of the deltaRule object:

deltaRule.forward();

neuralDataSet.printNeuralOutput();

Plotting a chart, we find that the output generated by the untrained neural network differs somewhat from the target values.

We will start training the neural network in the online mode. We've set the printTraining attribute to true, so progress updates will be printed to the screen. The following piece of code will produce the subsequent screenshot:

System.out.println("Beginning training");

deltaRule.train();

System.out.println("End of training");

if(deltaRule.getMinOverallError()>=deltaRule.getOverallGeneralError()){

System.out.println("Training successful!");

}

else{

System.out.println("Training was unsuccessful");

}
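The call to train() hides the epoch loop and the stopping test. As a rough, self-contained sketch of how such an online training loop might be organized, consider the code below. It does not reproduce the DeltaRule class. The class OnlineDeltaRuleSketch, the fixed starting values, the activation form tanh(a * net), and the use of the mean squared error as the overall error are all assumptions, so epoch counts and final weights will not necessarily match the ones reported in this section.

```java
class OnlineDeltaRuleSketch {

    static final double A = 0.85; // activation coefficient from the text

    // Target function from the text.
    static double fncTest(double x) {
        return 0.11 * x;
    }

    // Assumed neuron output: tanh(A * (w * x + b)).
    static double output(double w, double b, double x) {
        return Math.tanh(A * (w * x + b));
    }

    // Mean squared error over the dataset (an assumed error measure).
    static double meanSquaredError(double w, double b, double[] xs) {
        double sum = 0.0;
        for (double x : xs) {
            double e = fncTest(x) - output(w, b, x);
            sum += e * e;
        }
        return sum / xs.length;
    }

    // Trains online and returns {weight, bias, epochsRun, finalError}.
    static double[] train(double[] xs, double eta, int maxEpochs,
                          double minError) {
        double w = 0.5; // hypothetical fixed start, for reproducible runs
        double b = 0.1;
        int epoch = 0;
        double error = meanSquaredError(w, b, xs);
        while (epoch < maxEpochs && error > minError) {
            for (double x : xs) {
                // Online mode: weights change after every single sample.
                double y = output(w, b, x);
                double delta = eta * (fncTest(x) - y) * A * (1.0 - y * y);
                w += delta * x;
                b += delta;
            }
            epoch++;
            error = meanSquaredError(w, b, xs);
        }
        return new double[] {w, b, epoch, error};
    }

    public static void main(String[] args) {
        double[] xs = {1.2, 0.3, -0.5, -2.3, 1.7, -0.1, -2.7};
        double[] result = train(xs, 0.3, 1000, 0.00001);
        System.out.println("Epochs run: " + (int) result[2]);
        System.out.println("Final error: " + result[3]);
        System.out.println(result[3] <= 0.00001
                ? "Training successful!" : "Training was unsuccessful");
    }
}
```

The loop stops as soon as either condition is met, so comparing the final error with the minimum overall error afterward tells us which of the two ended the training.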

The training begins and the overall error information is updated after every weight update. Note the error is decreasing:

After five epochs, the error reaches the minimum; now let's see the neural outputs and the plot:


Quite amazing, isn't it? The target and the neural output are practically the same. Now let's take a look at the final weight and bias:

double weight = nn.getOutputLayer().getWeight(0, 0);

double bias = nn.getOutputLayer().getWeight(1, 0);

System.out.println("Weight found:"+String.valueOf(weight));

System.out.println("Bias found:"+String.valueOf(bias));

//Weight found:0.2668421011698528

//Bias found:0.0011258204676042108