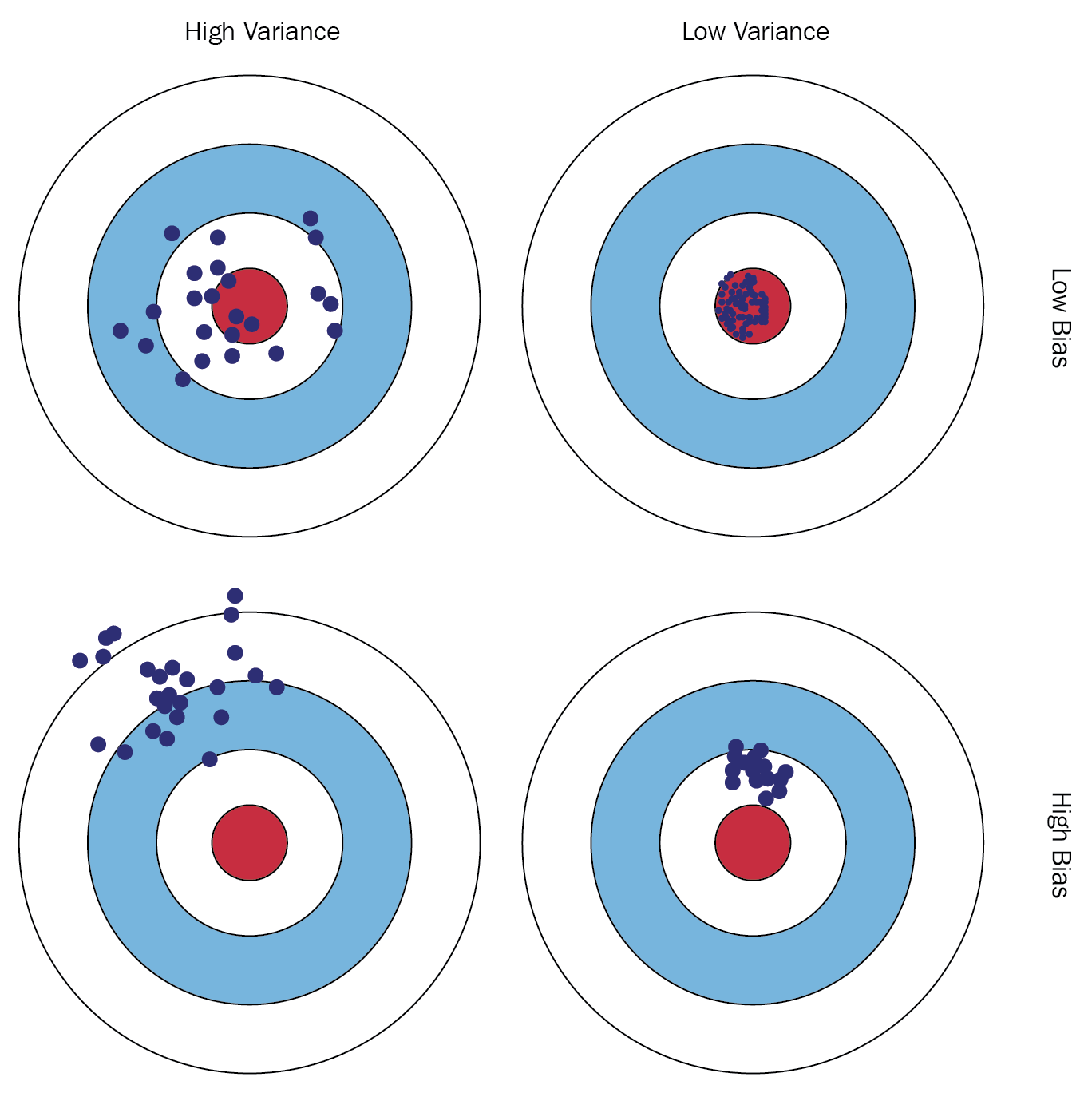

The bias-variance trade-off is the problem of simultaneously minimizing two sources of error, bias and variance, that prevent a supervised learning algorithm from generalizing well beyond its training data points. Reducing one typically increases the other. Let's have a look at the following illustrations:

Readers are encouraged to visit the following links for a more in-depth understanding of the bias-variance trade-off: http://scott.fortmann-roe.com/docs/BiasVariance.html and https://elitedatascience.com/bias-variance-tradeoff.
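For reference, the trade-off is usually made precise through the standard decomposition of expected squared error. The notation here is an assumption introduced for illustration and does not come from the text above: the data is taken to follow $y = f(x) + \varepsilon$, where $f$ is the target function, $\varepsilon$ is zero-mean noise with variance $\sigma^2$, and $\hat{f}$ is the function learned from a randomly drawn training set.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
$$

A very simple model tends to have a large bias term, a very flexible model tends to have a large variance term, and no choice of model removes the noise term.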

Suppose we are given the following problem statement: given a person's height, determine their weight. We are also given a training dataset of heights with their corresponding weights. The data is shown in the following diagram:

This is an instance of a supervised learning problem, and more specifically of a regression problem (can you see why?). Using this training dataset, our algorithm has to learn the target function, that is, a mapping from a person's height to their weight.
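To make the idea of learning a mapping from height to weight concrete, here is a minimal sketch that fits a straight line by ordinary least squares. The data values, the units, and the names `fit_line` and `predict_weight` are hypothetical, chosen only for illustration; they are not the dataset from the diagram, and a straight line is just one possible choice of model.

```python
# Hypothetical training data (not the dataset from the text):
# heights in centimetres and the corresponding weights in kilograms.
heights_cm = [150.0, 160.0, 170.0, 180.0, 190.0]
weights_kg = [52.0, 58.0, 67.0, 75.0, 84.0]


def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance-like and variance-like sums (the common 1/n factor cancels).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# "Learning" here means estimating the two parameters of the line.
slope, intercept = fit_line(heights_cm, weights_kg)


def predict_weight(height_cm):
    """Predict a weight in kg for a height in cm using the fitted line."""
    return slope * height_cm + intercept


predicted = predict_weight(175.0)
print(f"slope={slope:.3f}, intercept={intercept:.3f}")
print(f"Predicted weight at 175 cm: {predicted:.2f} kg")
```

The fitted line plays the role of the learned target function: it can be evaluated at heights that never appeared in the training data, which is exactly where generalization matters.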