3.2 Overview of the State of the Art

Modern video transmission and storage systems are typically characterized by a wide range of access network technologies and end-user terminals. Varying numbers of users, each with their own time-varying data throughput requirements, adaptively share network resources, resulting in dynamic connection qualities. Users possess a variety of devices with different capabilities, ranging from cell phones with small screens and restricted processing power to high-end PCs with high-definition displays. Examples of applications include virtual collaboration system scenarios, as shown in Figure 3.2, where a large, high-powered terminal acts as the main control/command point and serves a group of co-located users. This may be the headquarters of an organization and consist of communication terminals, shared desk spaces, displays and various user-interaction devices for collaborating with remotely-located partners. The remotely-located users with small fixed terminals will act as local contacts and provide local information. Mobile units (distribution, surveying, marketing, patrolling, and so on) of the organization may use mobile terminals, such as mobile phones and PDAs, to collaborate with the headquarters.

Figure 3.2 Virtual collaboration system diagram

In order to cope with the heterogeneity of networks/terminals and diverse user preferences, the current video applications need to consider not only compression efficiency and quality but also the available bandwidth, memory, computational power and display resolutions for different terminals. The transcoding methods and the use of several encoders to generate different resolution (spatial, temporal or quality) video streams can be used to address the heterogeneity problem. But the abovementioned methods impose additional constraints such as unacceptable delays, and increase bandwidth requirements due to redundant data streams. Scalable video coding is an attractive solution for the issues posed by the heterogeneity of today's video communications. Scalable coding produces a number of hierarchical descriptions that provide flexibility in terms of adaptation to user requirements and network/device characteristics. However, in error-prone environments, the loss of a lower layer in the hierarchical descriptions prevents all higher layers being decoded, even if the higher layers are correctly received, which means that a significant amount of correctly-received data must be discarded in certain channel conditions. On the other hand, an error-resilient technique, namely multiple description coding (MDC), divides a source into two or more correlated layers. This means that a high-quality reconstruction is available when the received layers are combined and decoded, while a lower-, but still acceptable, quality reconstruction is achievable if only one of the layers is received.

A brief review of scalable video coding techniques is presented in Section 3.2.1, followed by a review of MDC algorithms in Section 3.2.2. The combination of scalable coding and MDC techniques is also reviewed in this section. Combining SVC and MDC improves error robustness, and at the same time provides adaptability to user preferences, bandwidth variations, and receiving device characteristics. For more immersive communication experiences, stereoscopic 3D video content is considered as a source for the scalable multiple description coder. Hence, in Section 3.2.3, stereoscopic 3D video coding based on a scalable video coding architecture is reviewed.

3.2.1 Scalable Coding Techniques

Nowadays video production and streaming are ubiquitous, as more and more devices are able to produce and distribute video sequences. This brings an increasingly compelling desire to send an encoded representation of a sequence that is adapted to user, device and network characteristics in such a way that coding is performed only once, while decoding may take place several times at different resolutions, frame rates and qualities.

Scalable video coding allows decoding of appropriate subsets of bitstream to generate complete pictures of size and quality, dependent on the proportion of the total bitstream decoded. A number of existing video compression standards support scalable coding, such as MPEG-2 Video and MPEG-4 Visual. Due to reduced compression efficiency, increased decoder complexity, and the characteristics of traditional transmission systems, the above scalable profiles are rarely used in practical implementations. Recent approaches for scalable video coding are based on motion-compensated 3D wavelet transform and motion-compensated temporal differential pulse code modulation (DPCM), together with spatial decorrelating transformations. Those techniques are elaborated in the following subsections.

3.2.1.1 3D Wavelet Approaches

The wavelet transform has proved to be a successful tool in the area of scalable video coding since it enables decomposition of a video sequence into several spatio-temporal sub-bands. Usually the wavelet analysis is applied both in the temporal and the spatial dimensions, hence the term ‘3D wavelet’. The decoder might receive a subset of these sub-bands and reconstruct the sequence at a reduced spatio-temporal resolution at any quality. The open-loop structure of this scheme solves the drift problems which typically occure in DPCM-based schemes when there is a mismatch between the encoder and the decoder.

In the research literature, two main coding schemes coexist. They differ in the order of the spatial and temporal analysis steps:

- t + 2D: temporal filtering is performed first, followed by spatial analysis. Motion estimation/compensation takes place in the spatial domain (see Figure 3.3).

- 2D + t: spatial analysis is performed first, followed by temporal filtering. Motion estimation/compensation takes place in the wavelet domain [2] (see Figure 3.4).

The scalable video coding based on 3D wavelet transform has been addressed in recent research activities [3]–[6]. In 2003 MPEG called for proposals for efficient scalable video coding technologies, because of the increased research activity related to scalable video coding. Most of the proposals were based on 3D wavelet transforms [7]. However, the proposed scalable extension of H.264/AVC was selected as the starting point for the scalable video coding standardization as it outperformed other proposals under different test conditions [8].

Figure 3.3 t + 2D wavelet analysis. The input signal is first filtered along the time axis, and then in the spatial dimension. Solid arrows represent temporal low-pass filtering, while dashed arrows represent temporal high-pass filtering

Figure 3.4 2D + t wavelet analysis. Spatial decomposition is carried out first, followed by temporal filtering. Motion estimation and compensation take place in the wavelet domain

3.2.1.2 DCT-based Approaches

The scalable video coding profiles of existing video coding standards are based on DCT methods. Unfortunately, due to the closed loop, these coding schemes have to address the problem of drift, which arises whenever encoder and decoder work on different versions of the reconstructed sequence. This typically leads to the loss of coding efficiency when compared with nonscalable single-layer encoding.

In 2007, the Joint Video Team (JVT) of the ITU-T VCEG and the ISO/IEC MPEG standardized a scalable video coding extension of the H.264/AVC standard [9]–[11]. The new SVC standard is capable of providing temporal, spatial, and quality scalability with base layer compatibility with H.264/AVC. Furthermore, this contains an improved DPCM prediction structure which allows greater control over drift effect associated with closed-loop video coding approaches [9].

Bitstreams with temporal scalability can be provided by using hierarchical prediction structures. In these structures, key pictures are coded at regular intervals by using only previous key pictures as references. The pictures between the key pictures are the hierarchical B pictures, which are bi-directionally predicted from the key pictures. The base layer contains a sequence of the key pictures at the coarsest supported temporal resolution, while the enhancement layers consist of the hierarchically-coded B pictures (see Figure 3.5). A low-delay coding structure is also possible, if the prediction of the enhancement layer pictures is restricted to the previous frame only.

Spatial scalability was achieved by using a multi-layer coding approach in previous coding standards, including MPEG-2 and H.263. Figure 3.6 shows a block diagram of a spatially-scalable encoder. In the scalable extension of H.264/AVC, the spatial scalability is achieved using an over-sampled pyramid approach. Each spatial layer of a picture is independently coded using motion-compensated prediction. Inter-layer motion, residual or intra prediction mechanisms can be used to improve the coding efficiency of the enhancement layers. In inter-layer motion prediction, for example, the up-scaled base layer motion data is employed for the spatial enhancement layer coding.

Figure 3.5 Prediction structure for temporal scalability

Quality scalability can be considered a subset of spatial scalability where both layers have similar spatial resolutions but different qualities. The scalable extension of H.264/AVC also supports quality scalability using coarse-grain scalability (CGS) and medium-grain scalability (MGS). CGS is achieved using spatial scalability concepts with the exclusion of the corresponding up-sampling operations in the inter-layer prediction mechanisms. MGS is introduced to improve the flexibility of bitstream adaptation and error robustness. Furthermore, this improves the coding efficiency of the bitstreams that aim at providing different bit rates.

3.2.2 Multiple Description Coding

In order to mitigate the visual artifacts caused by data losses over unreliable and dynamic transmission links, error-resilient mechanisms are employed in present video communication systems. MDC is one of the prominent error-resilient methods which can be effectively used in combating burst errors in delay-constrained video applications where retransmission is not feasible [13], [14]. MDC tackles the problem of encoding a source for transmission over a communication system with multiple channels. Its objective is to encode a source into two or more bitstreams. The streams and the descriptions are correlated and equally important. This means that a high-quality resolution is decodable from all the received bitstreams together, while a lower-, but still acceptable, quality reconstruction is achievable if only one stream is received. A classification of MDC schemes is given below.

Figure 3.6 Scalable encoder using a multi-scale pyramid with three levels of spatial scalability [12]

- Odd–Even Frames: the odd–even-frame-based MDC schemes have been subjected to detailed analysis by the research community, due to their simplicity in producing multiple streams. They can also easily avoid mismatch at the expense of reduced coding efficiency [15]–[17]. Basically, he odd–even-frame-based MDC includes the odd and even video frames into descriptions one and two, respectively [15]. The redundancy in this MDC technique comes from the longer temporal prediction distance compared to standard video coders. Hence, its coding efficiency is reduced.

- Redundant Slices: an error-robustness feature which allows an encoder to send an extra representation of a picture region at lower fidelity, which can be used if the primary representation is corrupted (e.g. redundant slices in H.264/AVC) [18].

- Spatial MDC: the original signal is decomposed into subsets in the spatial domain, where each description corresponds to different subsets. Examples of algorithms include poly-phase down-sampling on image samples [19] and motion vectors using quincunx sub-sampling [20].

- Scalar Quantizer MDC: in MDC quantization algorithms, the multiple descriptions are produced by splitting the quantized coefficient into two or more streams. The output of the quantizer is assigned two or more indexes, one for each description. Based on the received indexes, the MDC decoder estimates the reconstructed signals. The redundancy and the corresponding side distortion introduced by this MDC algorithm are controlled by the assignment of indexes to each quantization bin. Some of the proposed MDC quantization algorithms are analyzed in [21]–[24].

Scalable MDC methods are introduced to improve the error resilience of the transmitted video over unreliable networks and, at the same time, provide adaptation to bandwidth variations and receiving device characteristics [25]. These methods can be categorized into two main types:

- The first category starts with a MDC coder and the MDC descriptions are then made scalable (e.g. a single MDC description is split into base and enhancement layers [26], [27]).

- The second category starts with an SVC coder and the SVC layers are then mapped into MDC descriptions (e.g. a scalable wavelet encoder can be used to provide MDC streams that can vary the number of descriptions, the rate of each individual description, and the redundancy level of each description using post encoding [28]–[30]).

Furthermore, embedded MDC techniques are introduced which use embedded multiple description scalar quantizers and a combination of motion-compensated temporal filtering and wavelet transform [31]. This can also be used to achieve a fine-grain scalable bitstream in addition to error resilience.

3.2.3 Stereoscopic 3D Video Coding

Stereoscopic 3D video can be used as a video source for the scalable MDC to provide a robust immersive communication experience. Therefore, some background on stereoscopic 3D video is provided in this section.

There are several forms of 3D video in the research literature, including multi-view video and panaromic video [32]. Stereoscopic video is the simplest form of multi-view 3D video and can easily be integrated into broadcasting, storage, and communication applications using existing transmission and audiovisual technologies. Stereoscopic video renders two slightly different views for each eye, which facilitates depth perception of the 3D scene.

3.2.3.1 Types of Stereoscopic Video

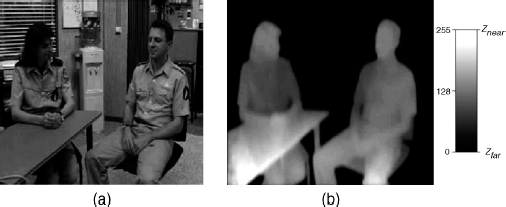

There are several techniques to generate stereoscopic content, including dual camera configuration, 3D/depth–range cameras and 2D-to-3D conversion algorithms [33]. The use of a stereo camera pair is the simplest and most cost-effective way to obtain stereo video, compared to other technologies available in the literature. The latest depth–range camera generates a color image and an associated per-pixel depth image of a scene (see Figure 3.7). The per-pixel depth value determines the position of the associated color texture in the 3D space. A specialized image-warping technique known as the depth-image-based rendering (DIBR) is used to synthesize the desired binocular viewpoint image [34].

Figure 3.7 “Interview” sequence: (a) color image; (b) per-pixel depth image. The depth-images are normalized to a near clipping plane, Znear, and a far clipping plane, Zfar

There are advantages and disadvantages associated with stereoscopic video generated using depth–range cameras instead of the stereo camera pairs [35]. The ‘color-plus-depth-map’ formats are widely used in standardization and research activities. In order to provide interoperability of the content, flexibility regarding transport and compression techniques, display independence, and ease of integration, video-plus-depth-image solutions are standardized by MPEG as MPEG-C part 3 [36]. The ATTEST (Advanced Three-Dimensional Television System Technologies) consortium worked on a 3D-TV broadcast technology using color-depth sequences as the main source of 3D video [37]. The VISNET work on scalable stereoscopic video will focus on coding of color-depth sequences using the scalable video coding architecture. This aims at scaling existing video applications into stereoscopic video with low overheads.