522 11. Non-Photorealistic Rendering

part of the silhouette, the quadrilateral’s points are moved so that it is no longer degenerate (i.e., is made visible). This results in a thin quadrilateral “fin,” representing the edge, being drawn. This technique is based on the same idea as the vertex shader for shadow volume creation, described on page 347. Boundary edges, which have only one neighboring triangle, can also be handled by passing in a second normal that is the negation of this triangle’s normal. In this way, the boundary edge will always be flagged as one to be rendered. The main drawbacks to this technique are a large increase in the number of polygons sent through the pipeline, and that it does not perform well if the mesh undergoes nonlinear transforms [1382]. McGuire and Hughes [844] present work to provide higher-quality fin lines with endcaps.
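
The per-edge decision behind the fin technique can be sketched as follows. This is a minimal illustration, not the shader from the text: it assumes the common criterion that an edge is a silhouette when its two neighboring triangles face opposite ways relative to the eye, and the names `is_silhouette_edge` and `to_eye` are hypothetical.

```python
from typing import Optional, Sequence

Vec3 = Sequence[float]


def dot(a: Vec3, b: Vec3) -> float:
    """Dot product of two 3D vectors."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def is_silhouette_edge(n0: Vec3, n1: Optional[Vec3], to_eye: Vec3) -> bool:
    """Return True if the edge between faces with normals n0 and n1 is a silhouette.

    to_eye points from the edge toward the viewer. For a boundary edge, pass
    n1=None: the negation of n0 is used as the second normal, as described above.
    """
    if n1 is None:
        n1 = (-n0[0], -n0[1], -n0[2])
    # One face is front facing and the other back facing exactly when the
    # two dot products have opposite signs, i.e., their product is negative.
    return dot(n0, to_eye) * dot(n1, to_eye) < 0.0
```

With the negated normal, a boundary edge passes the test whenever its single triangle is not exactly edge-on to the viewer, since the product is then the negative of a squared value.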

If the geometry shader is a part of the pipeline, these additional fin polygons do not need to be generated on the CPU and stored in a mesh. The geometry shader itself can generate the fin quadrilaterals as needed.

Other silhouette finding methods exist. For example, Gooch et al. [423] use Gauss maps for determining silhouette edges. In the last part of Section 14.2.1, hierarchical methods for quickly categorizing sets of polygons as front or back facing are discussed. See Hertzmann’s article [546] or either NPR book [425, 1226] for more on this subject.

11.2.5 Hybrid Silhouetting

Northrup and Markosian [940] use a silhouette rendering approach that has both image and geometric elements. Their method first finds a list of silhouette edges. They then render all the object’s triangles and silhouette edges, assigning each a different ID number (i.e., giving each a unique color). This ID buffer is read back and the visible silhouette edges are determined from it. These visible segments are then checked for overlaps and linked together to form smooth stroke paths. Stylized strokes are then rendered along these reconstructed paths. The strokes themselves can be stylized in many different ways, including effects of taper, flare, wiggle, and fading, as well as depth and distance cues. An example is shown in Figure 11.12.
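
The ID-buffer step can be illustrated with a small sketch. It assumes 24-bit IDs packed into 8-bit RGB channels and an ID buffer already read back as rows of RGB tuples. The packing scheme and the function names are assumptions for illustration, not details given by Northrup and Markosian.

```python
from typing import Iterable, List, Set, Tuple

Color = Tuple[int, int, int]


def id_to_color(item_id: int) -> Color:
    """Pack a 24-bit ID into an 8-bit-per-channel RGB color."""
    if not 0 <= item_id < (1 << 24):
        raise ValueError("ID does not fit in 24 bits")
    return ((item_id >> 16) & 0xFF, (item_id >> 8) & 0xFF, item_id & 0xFF)


def color_to_id(color: Color) -> int:
    """Recover the ID from a color produced by id_to_color."""
    r, g, b = color
    return (r << 16) | (g << 8) | b


def visible_ids(id_buffer: List[List[Color]], edge_ids: Iterable[int]) -> Set[int]:
    """Return the silhouette-edge IDs that cover at least one pixel of the buffer."""
    seen = {color_to_id(pixel) for row in id_buffer for pixel in row}
    return seen & set(edge_ids)
```

An edge whose ID never appears in the buffer is hidden by other geometry and can be dropped before strokes are built.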

Kalnins et al. [621] use this method in their work, which attacks an important area of research in NPR: temporal coherence. Obtaining a silhouette is, in one respect, just the beginning. As the object and viewer move, the silhouette edge changes. With stroke extraction techniques some coherence is available by tracking the separate silhouette loops. However, when two loops merge, corrective measures need to be taken or a noticeable jump from one frame to the next will be visible. A pixel search and “vote” algorithm is used to attempt to maintain silhouette coherence from frame to frame.


Figure 11.12. An image produced using Northrup and Markosian’s hybrid technique, whereby silhouette edges are found, built into chains, and rendered as strokes. (Image courtesy of Lee Markosian.)

11.3 Other Styles

While toon rendering is a popular style to attempt to simulate, there is an infinite variety of other styles. NPR effects can range from modifying realistic textures [670, 720, 727] to having the algorithm procedurally generate geometric ornamentation from frame to frame [623, 820]. In this section, we briefly survey techniques relevant to real-time rendering.

In addition to toon rendering, Lake et al. [713] discuss using the diffuse shading term to select which texture is used on a surface. As the diffuse term gets darker, a texture with a darker impression is used. The texture is applied with screen-space coordinates to give a hand-drawn look. A paper texture is also applied in screen space to all surfaces to further enhance the sketched look. As objects are animated, they “swim” through the texture, since the texture is applied in screen space. It could be applied in world space for a different effect. See Figure 11.13. Lander [722] discusses doing this process with multitexturing. One problem with this type of algorithm is the shower door effect, where the objects look like they are viewed through patterned glass during animation. The problem arises from the textures being accessed by pixel location instead of by surface coordinates—objects then look somewhat detached from these textures, since indeed that is the case.
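
The two ingredients described above, choosing a texture from the diffuse term and addressing it by pixel location, can be sketched as follows. The uniform thresholds, the repeat addressing, and the function names are assumptions made for illustration. The source does not specify how Lake et al. partition the diffuse range.

```python
from typing import Tuple


def select_texture(n_dot_l: float, num_textures: int) -> int:
    """Map a diffuse term to a texture index, where index 0 is the darkest texture."""
    d = min(max(n_dot_l, 0.0), 1.0)  # clamp to [0, 1]
    # Split [0, 1] into equal bands; d == 1.0 falls into the last band.
    return min(int(d * num_textures), num_textures - 1)


def screen_space_uv(pixel_x: int, pixel_y: int, texture_size: int) -> Tuple[float, float]:
    """Texture coordinates derived only from the pixel location (repeat addressing)."""
    return ((pixel_x % texture_size) / texture_size,
            (pixel_y % texture_size) / texture_size)
```

Because `screen_space_uv` ignores the surface entirely, a moving object samples different texels every frame, which is the source of the shower door effect.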


Figure 11.13. An image generated by using a palette of textures, a paper texture, and silhouette edge rendering. (Reprinted by permission of Adam Lake and Carl Marshall, Intel Corporation, copyright Intel Corporation 2002.)

One solution is obvious: Use texture coordinates on the surface. The challenge is that stroke-based textures need to maintain a relatively uniform stroke thickness and density to look convincing. If the texture is magnified, the strokes appear too thick; if it is minified, the strokes are either blurred away or are thin and noisy (depending on whether mipmapping is used). Praun et al. [1031] present a real-time method of generating stroke-textured mipmaps and applying these to surfaces in a smooth fashion. Doing so maintains the stroke density on the screen as the object’s distance changes. The first step is to form the textures to be used, called tonal art maps (TAMs). This is done by drawing strokes into the mipmap levels.² See Figure 11.14. Care must be taken to avoid having strokes clump together. With these textures in place, the model is rendered by interpolating between the tones needed at each vertex. Applying this technique to surfaces with a lapped texture parameterization [1030] results in images with a hand-drawn feel. See Figure 11.15.
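
Interpolating between tones can be sketched as computing blend weights over the tone images of a TAM. The sketch below assumes a linear blend between the two tone images nearest the desired tone and the convention that index 0 is the lightest image. Both are assumptions for illustration, and `tam_weights` is a hypothetical name.

```python
from typing import List


def tam_weights(tone: float, num_tones: int) -> List[float]:
    """Blend weights over num_tones tone images for a tone in [0, 1].

    tone 0.0 selects image 0 and tone 1.0 selects the last image. At most two
    adjacent images receive nonzero weight, and the weights sum to 1.
    """
    if num_tones < 2:
        raise ValueError("need at least two tone images")
    t = min(max(tone, 0.0), 1.0)
    position = t * (num_tones - 1)          # continuous index into the tone images
    lower = min(int(position), num_tones - 2)
    fraction = position - lower
    weights = [0.0] * num_tones
    weights[lower] = 1.0 - fraction
    weights[lower + 1] = fraction
    return weights
```

Evaluating such weights at each vertex and letting the rasterizer interpolate them gives a smooth transition between tones across a triangle.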

² Klein et al. [670] use a related idea in their “art maps” to maintain stroke size for NPR textures.

Figure 11.14. Tonal art maps (TAMs). Strokes are drawn into the mipmap levels. Each mipmap level contains all the strokes from the textures to the left and above it. In this way, interpolation between mip levels and adjoining textures is smooth. (Images courtesy of Emil Praun, Princeton University.)

Figure 11.15. Two models rendered using tonal art maps (TAMs). The swatches show the lapped texture pattern used to render each. (Images courtesy of Emil Praun, Princeton University.)

Card and Mitchell [155] give an efficient implementation of this technique by using pixel shaders. Instead of interpolating the vertex weights, they compute the diffuse term and interpolate this per pixel. This is then used as a texture coordinate into two one-dimensional maps, which yields per-pixel TAM weights. This gives better results when shading large polygons. Webb et al. [1335] present two extensions to TAMs that give better results, one using a volume texture, which allows the use of color, the other using a thresholding scheme, which improves antialiasing. Nuebel [943] gives a related method of performing charcoal rendering. He uses a noise texture that also goes from dark to light along one axis. The intensity value accesses the texture along this axis. Lee et al. [747] use TAMs and a number of other interesting techniques to generate impressive images that appear drawn by pencil.
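
The per-pixel lookup attributed to Card and Mitchell above can be approximated on the CPU with two precomputed tables. This sketch assumes six tone images whose weights are stored as three channels in each of two one-dimensional maps, and it assumes hat-shaped weight functions. The source states only that two one-dimensional maps yield the weights, so the layout and all names here are illustrative.

```python
from typing import List, Tuple

Weights3 = Tuple[float, float, float]
NUM_TONES = 6  # assumed: three weights per map, two maps


def hat_weight(diffuse: float, tone_index: int) -> float:
    """Weight of one tone image: 1 at its own tone, falling linearly to 0 at its neighbors."""
    return max(0.0, 1.0 - abs(diffuse * (NUM_TONES - 1) - tone_index))


def build_weight_maps(resolution: int = 256) -> Tuple[List[Weights3], List[Weights3]]:
    """Precompute two 1D maps, indexed by diffuse term, holding weights 0-2 and 3-5."""
    map_a: List[Weights3] = []
    map_b: List[Weights3] = []
    for i in range(resolution):
        d = i / (resolution - 1)
        w = [hat_weight(d, k) for k in range(NUM_TONES)]
        map_a.append((w[0], w[1], w[2]))
        map_b.append((w[3], w[4], w[5]))
    return map_a, map_b


def lookup(map_1d: List[Weights3], diffuse: float) -> Weights3:
    """Nearest-texel fetch using the clamped diffuse term as the texture coordinate."""
    d = min(max(diffuse, 0.0), 1.0)
    return map_1d[round(d * (len(map_1d) - 1))]
```

Since the diffuse term is evaluated at every pixel before the lookup, the tone weights no longer depend on how finely the surface is tessellated.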

With regard to strokes, many other operations are possible than those

already discussed. To give a sketched effect, edges can be jittered [215, 726,

i

i

i

i

i

i

i

i

526 11. Non-Photorealistic Rendering

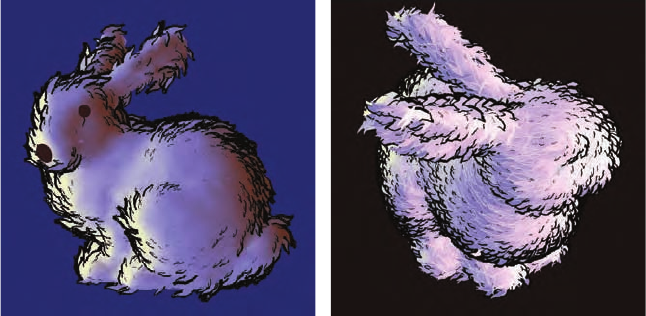

Figure 11.16. Two different graftal styles render the Stanford bunny. (Images courtesy

of Bruce Gooch and Matt Kaplan, University of Utah.)

747] or extended beyond their original locations, as seen in the upper right

and lower middle images in Figure 11.1 on page 507.
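
Jittering and extending an edge can be sketched in two dimensions as follows. The parameterization (extension as a fraction of edge length, uniform random jitter per coordinate) and the name `sketch_segment` are assumptions for illustration and are not taken from the cited papers.

```python
import random
from typing import Optional, Tuple

Point = Tuple[float, float]


def sketch_segment(p0: Point, p1: Point, extend: float = 0.1, jitter: float = 0.0,
                   rng: Optional[random.Random] = None) -> Tuple[Point, Point]:
    """Extend a 2D segment past both endpoints, then randomly offset the new endpoints.

    extend is a fraction of the segment length added at each end; jitter is the
    maximum absolute offset applied independently to each coordinate.
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed so results are repeatable
    dx, dy = p1[0] - p0[0], p1[1] - p0[1]
    a = (p0[0] - extend * dx, p0[1] - extend * dy)
    b = (p1[0] + extend * dx, p1[1] + extend * dy)

    def perturb(p: Point) -> Point:
        return (p[0] + rng.uniform(-jitter, jitter),
                p[1] + rng.uniform(-jitter, jitter))

    return perturb(a), perturb(b)
```

Drawing the same edge several times with different random offsets gives the overdrawn look of a quick pencil sketch.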

Girshick et al. [403] discuss rendering strokes along the principal curve direction lines on a surface. That is, from any given point on a surface, there is a first principal direction tangent vector that points in the direction of maximum curvature. The second principal direction is the tangent vector perpendicular to this first vector and gives the direction in which the surface is least curved. These direction lines are important in the perception of a curved surface. They also have the advantage of needing to be generated only once for static models, since such strokes are independent of lighting and shading.
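
Principal directions can be computed at a point once the surface’s curvature there is expressed as a symmetric 2×2 matrix in an orthonormal tangent basis. The sketch below assumes that matrix is already available (how it is estimated from a mesh is outside what the text covers) and orders the curvatures by signed value. The function name is hypothetical.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]


def principal_directions(a: float, b: float, c: float) -> Tuple[float, Vec2, float, Vec2]:
    """Eigen-decomposition of the symmetric matrix [[a, b], [b, c]].

    Returns (k1, dir1, k2, dir2) with k1 >= k2. The directions are unit vectors
    expressed in the tangent basis and are perpendicular to each other.
    """
    mean = 0.5 * (a + c)
    radius = math.hypot(0.5 * (a - c), b)
    # Angle of the eigenvector belonging to the larger eigenvalue.
    theta = 0.5 * math.atan2(2.0 * b, a - c)
    dir1 = (math.cos(theta), math.sin(theta))
    dir2 = (-dir1[1], dir1[0])  # dir1 rotated by 90 degrees
    return mean + radius, dir1, mean - radius, dir2
```

For a cylinder of radius 2 whose first tangent axis runs around the circumference, `principal_directions(0.5, 0.0, 0.0)` gives curvature 0.5 around the cylinder and 0 along its axis. At an umbilic point, where both curvatures are equal, the returned directions are arbitrary.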

Mohr and Gleicher [888] intercept OpenGL calls and perform NPR effects upon the low-level primitives, creating a variety of drawing styles. By making a system that replaces OpenGL, existing applications can instantly be given a different look.

The idea of graftals [623, 820] is that geometry or decal textures can be added as needed to a surface to produce a particular effect. They can be controlled by the level of detail needed, by the surface’s orientation to the eye, or by other factors. These can also be used to simulate pen or brush strokes. An example is shown in Figure 11.16. Geometric graftals are a form of procedural modeling [295].

This section has only barely touched on a few of the directions NPR research has taken. See the “Further Reading and Resources” section at the end of this chapter for where to go for more information. To conclude this chapter, we will turn our attention to the basic line.
