Before we implement our first linear regression model, we will introduce a new dataset, the Housing Dataset, which contains information about houses in the suburbs of Boston collected by D. Harrison and D.L. Rubinfeld in 1978. The Housing Dataset has been made freely available and can be downloaded from the UCI machine learning repository at https://archive.ics.uci.edu/ml/datasets/Housing.

The features of the 506 samples may be summarized as shown in the excerpt of the dataset description:

- CRIM: This is the per capita crime rate by town

- ZN: This is the proportion of residential land zoned for lots larger than 25,000 sq.ft.

- INDUS: This is the proportion of non-retail business acres per town

- CHAS: This is the Charles River dummy variable (this is equal to 1 if tract bounds river; 0 otherwise)

- NOX: This is the nitric oxides concentration (parts per 10 million)

- RM: This is the average number of rooms per dwelling

- AGE: This is the proportion of owner-occupied units built prior to 1940

- DIS: This is the weighted distances to five Boston employment centers

- RAD: This is the index of accessibility to radial highways

- TAX: This is the full-value property-tax rate per $10,000

- PTRATIO: This is the pupil-teacher ratio by town

- B: This is calculated as 1000(Bk - 0.63)^2, where Bk is the proportion of people of African American descent by town

- LSTAT: This is the percentage lower status of the population

- MEDV: This is the median value of owner-occupied homes in $1000s

For the rest of this chapter, we will regard the housing prices (MEDV) as our target variable—the variable that we want to predict using one or more of the 13 explanatory variables. Before we explore this dataset further, let's fetch it from the UCI repository into a pandas DataFrame:

>>> import pandas as pd

>>> df = pd.read_csv('https://archive.ics.uci.edu/ml/machine-learning-databases/housing/housing.data',

...                  header=None, sep=r'\s+')

>>> df.columns = ['CRIM', 'ZN', 'INDUS', 'CHAS',

... 'NOX', 'RM', 'AGE', 'DIS', 'RAD',

... 'TAX', 'PTRATIO', 'B', 'LSTAT', 'MEDV']

>>> df.head()

To confirm that the dataset was loaded successfully, we displayed the first five lines of the dataset, as shown in the following screenshot:

Exploratory Data Analysis (EDA) is an important and recommended first step prior to the training of a machine learning model. In the rest of this section, we will use some simple yet useful techniques from the graphical EDA toolbox that may help us to visually detect the presence of outliers, the distribution of the data, and the relationships between features.

First, we will create a scatterplot matrix that allows us to visualize the pairwise correlations between the different features in this dataset in one place. To plot the scatterplot matrix, we will use the pairplot function from the seaborn library (http://stanford.edu/~mwaskom/software/seaborn/), which is a Python library for drawing statistical plots based on matplotlib:

>>> import matplotlib.pyplot as plt

>>> import seaborn as sns

>>> sns.set(style='whitegrid', context='notebook')

>>> cols = ['LSTAT', 'INDUS', 'NOX', 'RM', 'MEDV']

>>> # seaborn 0.9 renamed pairplot's size argument to height

>>> sns.pairplot(df[cols], height=2.5)

>>> plt.show()

As we can see in the following figure, the scatterplot matrix provides us with a useful graphical summary of the relationships in a dataset:

Due to space constraints and for purposes of readability, we only plotted five columns from the dataset: LSTAT, INDUS, NOX, RM, and MEDV. However, you are encouraged to create a scatterplot matrix of the whole DataFrame to further explore the data.

Using this scatterplot matrix, we can now quickly eyeball how the data is distributed and whether it contains outliers. For example, we can see that there is a linear relationship between RM and the housing prices MEDV (the fifth column of the fourth row). Furthermore, we can see in the histogram (the lower right subplot in the scatterplot matrix) that the MEDV variable seems to be normally distributed but contains several outliers.

Note

Note that in contrast to common belief, training a linear regression model does not require that the explanatory or target variables are normally distributed. The normality assumption is only a requirement for certain statistical tests and hypothesis tests that are beyond the scope of this book (Montgomery, D. C., Peck, E. A., and Vining, G. G. Introduction to linear regression analysis. John Wiley and Sons, 2012, pp.318–319).

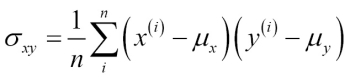

To quantify the linear relationship between the features, we will now create a correlation matrix. A correlation matrix is closely related to the covariance matrix that we have seen in the section about principal component analysis (PCA) in Chapter 4, Building Good Training Sets – Data Preprocessing. Intuitively, we can interpret the correlation matrix as a rescaled version of the covariance matrix. In fact, the correlation matrix is identical to a covariance matrix computed from standardized data.

The correlation matrix is a square matrix that contains the Pearson product-moment correlation coefficients (often abbreviated as Pearson's r), which measure the linear dependence between pairs of features. The correlation coefficients are bounded to the range -1 to 1. Two features have a perfect positive correlation if $r = 1$, no correlation if $r = 0$, and a perfect negative correlation if $r = -1$. As mentioned previously, Pearson's correlation coefficient can simply be calculated as the covariance between two features $x$ and $y$ (numerator) divided by the product of their standard deviations (denominator):

$$r = \frac{\sum_{i=1}^{n} \left[ \left( x^{(i)} - \mu_x \right) \left( y^{(i)} - \mu_y \right) \right]}{\sqrt{\sum_{i=1}^{n} \left( x^{(i)} - \mu_x \right)^2} \sqrt{\sum_{i=1}^{n} \left( y^{(i)} - \mu_y \right)^2}} = \frac{\sigma_{xy}}{\sigma_x \sigma_y}$$

Here, $\mu$ denotes the sample mean of the corresponding feature, $\sigma_{xy}$ is the covariance between the features $x$ and $y$, and $\sigma_x$ and $\sigma_y$ are the features' standard deviations, respectively.
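The definition of Pearson's r as a covariance divided by a product of standard deviations can be written out directly. The following is a minimal sketch in plain Python; the function name `pearson_r` and the small toy lists are assumptions made for illustration and are not part of the Housing Dataset.

```python
import math


def pearson_r(x, y):
    """Pearson's r: covariance over the product of the standard deviations.

    The 1/n factors of the covariance and of the two standard deviations
    cancel, so only sums of deviations are needed.
    """
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    # Numerator: sum of products of the deviations from the means
    dev_products = sum((xi - mu_x) * (yi - mu_y) for xi, yi in zip(x, y))
    # Denominator: product of the root sums of squared deviations
    ss_x = sum((xi - mu_x) ** 2 for xi in x)
    ss_y = sum((yi - mu_y) ** 2 for yi in y)
    return dev_products / (math.sqrt(ss_x) * math.sqrt(ss_y))


# Toy data (illustrative only)
x = [1.0, 2.0, 3.0, 4.0, 5.0]
r_pos = pearson_r(x, [2.0, 4.0, 6.0, 8.0, 10.0])   # y rises linearly with x
r_neg = pearson_r(x, [10.0, 8.0, 6.0, 4.0, 2.0])   # y falls linearly with x
r_zero = pearson_r(x, [1.0, 0.0, -2.0, 0.0, 1.0])  # symmetric, no linear trend

print(r_pos, r_neg, r_zero)
```

The three calls correspond to the three boundary cases named in the text: perfect positive correlation, perfect negative correlation, and no linear correlation.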

Note

We can show that the covariance between standardized features is in fact equal to their linear correlation coefficient.

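Let's first standardize the features $x$ and $y$ to obtain their z-scores, which we will denote as $x'$ and $y'$, respectively:

$$x' = \frac{x - \mu_x}{\sigma_x}, \quad y' = \frac{y - \mu_y}{\sigma_y}$$

Remember that we calculate the (population) covariance between two features as follows:

$$\sigma_{xy} = \frac{1}{n} \sum_{i=1}^{n} \left( x^{(i)} - \mu_x \right) \left( y^{(i)} - \mu_y \right)$$

Since standardization centers a feature variable at mean 0, we can now calculate the covariance between the scaled features as follows:

$$\sigma'_{xy} = \frac{1}{n} \sum_{i=1}^{n} \left( {x'}^{(i)} - 0 \right) \left( {y'}^{(i)} - 0 \right)$$

Through resubstitution, we get the following result:

$$\sigma'_{xy} = \frac{1}{n} \sum_{i=1}^{n} \left( \frac{x^{(i)} - \mu_x}{\sigma_x} \right) \left( \frac{y^{(i)} - \mu_y}{\sigma_y} \right) = \frac{1}{n \, \sigma_x \sigma_y} \sum_{i=1}^{n} \left( x^{(i)} - \mu_x \right) \left( y^{(i)} - \mu_y \right)$$

We can simplify it as follows:

$$\sigma'_{xy} = \frac{\sigma_{xy}}{\sigma_x \sigma_y}$$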
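The identity stated in this note can also be checked numerically. The following is a minimal sketch using only the standard library; the helper names `population_cov` and `standardize` and the toy lists are assumptions made for illustration.

```python
import statistics


def population_cov(x, y):
    """Population covariance (divides by n, not n - 1)."""
    n = len(x)
    mu_x = statistics.fmean(x)
    mu_y = statistics.fmean(y)
    return sum((xi - mu_x) * (yi - mu_y) for xi, yi in zip(x, y)) / n


def standardize(x):
    """Convert values to z-scores using the population standard deviation."""
    mu = statistics.fmean(x)
    sigma = statistics.pstdev(x)
    return [(xi - mu) / sigma for xi in x]


# Toy data (illustrative only)
x = [2.0, 4.0, 4.0, 5.0, 7.0, 8.0]
y = [1.0, 3.0, 2.0, 6.0, 5.0, 9.0]

# Covariance of the standardized features ...
cov_standardized = population_cov(standardize(x), standardize(y))
# ... compared with covariance divided by the product of standard deviations
r = population_cov(x, y) / (statistics.pstdev(x) * statistics.pstdev(y))

print(cov_standardized, r)  # the two values agree up to rounding error
```

The check uses the population standard deviation throughout, which matches the 1/n normalization of the covariance in the derivation.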

In the following code example, we will use NumPy's corrcoef function on the five feature columns that we previously visualized in the scatterplot matrix, and we will use seaborn's heatmap function to plot the correlation matrix array as a heat map:

>>> import numpy as np

>>> cm = np.corrcoef(df[cols].values.T)

>>> sns.set(font_scale=1.5)

>>> hm = sns.heatmap(cm,

... cbar=True,

... annot=True,

... square=True,

... fmt='.2f',

... annot_kws={'size': 15},

... yticklabels=cols,

... xticklabels=cols)

>>> plt.show()

As we can see in the resulting figure, the correlation matrix provides us with another useful summary graphic that can help us to select features based on their respective linear correlations:

To fit a linear regression model, we are interested in those features that have a high correlation with our target variable MEDV. Looking at the preceding correlation matrix, we see that our target variable MEDV shows the largest correlation in absolute value with the LSTAT variable (-0.74). However, as you might remember from the scatterplot matrix, there is a clear nonlinear relationship between LSTAT and MEDV. On the other hand, the correlation between RM and MEDV is also relatively high (0.70), and given the linear relationship between those two variables that we observed in the scatterplot, RM seems to be a good choice for an explanatory variable to introduce the concepts of a simple linear regression model in the following section.
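Reading candidate features off the correlation matrix can also be done programmatically. The following is a minimal sketch, assuming a hypothetical helper `rank_by_abs_correlation` and synthetic stand-in data rather than the Housing Dataset; it ranks variables by the magnitude of their Pearson correlation with a target column, so that strong negative correlations such as the one between LSTAT and MEDV are not overlooked.

```python
import numpy as np


def rank_by_abs_correlation(X, names, target):
    """Rank the columns of X by |Pearson r| with the target column.

    X      : 2-D array with one row per sample and one column per variable
    names  : column names, in the same order as the columns of X
    target : name of the target column
    """
    cm = np.corrcoef(X.T)  # np.corrcoef treats each row as one variable
    t = names.index(target)
    pairs = [(name, cm[i, t]) for i, name in enumerate(names) if i != t]
    # Sort by magnitude so that strong negative correlations rank high too
    return sorted(pairs, key=lambda pair: abs(pair[1]), reverse=True)


# Synthetic stand-in data (NOT the Housing Dataset)
rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)                         # unrelated to y
y = -2.0 * a + 0.5 * rng.normal(size=200)        # strongly, negatively tied to a
X = np.column_stack([a, b, y])

ranking = rank_by_abs_correlation(X, ['a', 'b', 'y'], target='y')
for name, r in ranking:
    print(f'{name}: {r:.2f}')
```

A high rank only indicates a strong linear association; as the LSTAT example shows, the scatterplot should still be checked for nonlinearity before a feature is chosen.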